Q1. What Is the Real Difference Between an AI SOC and a Traditional SOC?

⚠️ The Fragmented Security Reality No One Talks About

Here’s the operational reality most vendors won’t acknowledge: your security stack is already complex. You’re running CrowdStrike for endpoints, Splunk or Elastic for logs, Okta for identity, separate AWS and Azure consoles for cloud, and every one of those tools generates its own alerts, in its own format, with its own context. Alerts are everywhere, but understanding is nowhere.

This fragmentation creates a fundamental architectural question that every CISO, IT Director, and SOC manager eventually faces: should your detection and response infrastructure be built on traditional SOC architecture (rules, signatures, manual correlation) or on an AI-native SOC (behavioral analytics, automated triage, cross-telemetry reasoning)?

The answer affects everything downstream: detection accuracy, response speed, analyst retention, compliance posture, and ultimately whether a breach gets contained in minutes or festers for months. This article breaks down the comparison across the four pillars that actually separate these two architectures in production: Rules vs. Intelligence, Manual vs. Automated Triage, Non-Deterministic Risk, and Organizational Readiness.

🔍 The Black-Box Escalation Trap

Traditional SOCs operate on a straightforward model: static SIEM correlation rules, signature-based detection, IOC feeds, and manual Tier-1 triage. Analysts spend much of every shift investigating alerts, and often 70–80% of those alerts turn out to be false positives. That’s not a people problem; it’s an architectural one.

Traditional MDR providers like Arctic Wolf and ReliaQuest still rely heavily on this model. They detect threats through rigid playbooks, then escalate “please investigate” tickets back to customer teams. Detection without context creates noise, not security, and it’s the reason so many security leaders feel like they’re paying for an alert feed, not managed detection and response.

Legacy MSSPs compound this further by providing monitoring without intelligence: checkbox coverage based on static playbooks rather than real-time threat context.

✅ The AI SOC Transformation Thesis

Detection without response is noise. Response without context is risk.

That’s the paradigm shift. AI SOCs use machine learning to correlate signals across endpoints, identity, cloud, and network, reasoning across data sources rather than matching signatures. Behavioral anomaly detection, dynamic risk scoring, and probabilistic intelligence pick up where rigid “if-then” rules stop, because those rules can only catch what’s already been seen.

The competitive advantage is no longer having security tools; it’s having a system that can reason across them. The “AI SOC + Human Ally” model represents this new standard: a system that doesn’t just generate alerts but understands the causal link between user behavior, threat indicators, and organizational context.

🛡️ UnderDefense’s AI SOC + Human Ally Approach

We built the UnderDefense MAXI platform around a simple principle: your security tools should work together, not in parallel. That means vendor-agnostic integration across 250+ tools, connecting CrowdStrike alerts → Splunk logs → Azure AD events in one context-aware layer. No proprietary tool replacement. No rip-and-replace.

On the detection side, proactive threat hunting with 96% MITRE ATT&CK coverage runs 24/7. On the response side, concierge analyst response through ChatOps (Slack, Teams, email) means when something suspicious happens, say a flagged login from an unusual location, our analysts reach out directly to the affected user to verify. Confirmed threats get contained immediately. No escalation back to your team required.

Stop renting alert dashboards. Start hiring an AI SOC with a dedicated security ally.

Traditional SOC vs. AI SOC: Quick Comparison

| Dimension | Traditional SOC | AI SOC (UnderDefense Model) |
|---|---|---|
| Detection Method | Static rules, signatures, IOC feeds | Behavioral analytics + rules (hybrid) |
| Alert Triage | Manual Tier-1 investigation | AI-automated enrichment + human validation |
| Response Speed | Hours to days (escalation-dependent) | Minutes (concierge analyst action) |
| Cost Model | Opaque; “contact sales” pricing | Transparent ($11–15/endpoint/month) |
| Compliance | Separate tools/workflows required | Built-in compliance kits with MDR |
| Analyst Role | Alert investigator (reactive) | Decision-maker on confirmed threats (proactive) |

While traditional MDR tells you “suspicious login detected, please investigate,” UnderDefense tells you who logged in, confirms with the user directly, and contains the threat before your team wakes up. In a documented head-to-head comparison, UnderDefense detected threats 2 days faster than CrowdStrike OverWatch.

Q2. Rules vs. Intelligence: Why Signature-Based Detection Can’t Keep Up with Modern Threats

📋 How Rule-Based Detection Actually Works

Traditional SOCs depend on a stack of known-threat databases: SIEM correlation rules, signature matching against IOC feeds, YARA rules for malware classification, and static detection logic. Detection only fires when a pattern matches a pre-written rule, which means your entire security posture depends on someone having already documented the exact threat you’re facing.
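To make the limitation concrete, here is a minimal Python sketch of signature matching, assuming a toy event format. The rule names, field names, and IOC values (`evil-c2.example`, `mimikatz.exe`) are hypothetical; a production SIEM expresses rules in its own query language. What it illustrates: a rule fires only on a value someone already wrote down, so the same behavior from new infrastructure produces no hit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SignatureRule:
    """A static rule: fires only when an event field equals a pre-documented bad value."""
    name: str
    event_field: str        # event field the rule inspects
    bad_values: frozenset   # known-bad values, e.g. IOC hashes or C2 domains


# Hypothetical IOC-style rules; a production SIEM would hold thousands of these.
RULES = [
    SignatureRule("known_c2_domain", "dest_domain", frozenset({"evil-c2.example"})),
    SignatureRule("known_malware_name", "process_name", frozenset({"mimikatz.exe"})),
]


def match_rules(event: dict, rules=RULES) -> list:
    """Return the names of every rule whose field value is in its known-bad set."""
    return [
        rule.name
        for rule in rules
        if event.get(rule.event_field) in rule.bad_values  # missing field -> None -> no match
    ]


print(match_rules({"dest_domain": "evil-c2.example"}))   # ['known_c2_domain']
print(match_rules({"dest_domain": "fresh-c2.example"}))  # [] (same behavior, new infrastructure)
```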

The scale of this limitation is now quantifiable. According to CardinalOps’ Fifth Annual Report on the State of SIEM Detection Risk, enterprise SIEMs have detection coverage for just 21% of MITRE ATT&CK techniques, leaving 79% uncovered. On top of that, 13% of existing SIEM rules are broken and will never trigger due to misconfigured data sources and missing log fields.

The maintenance burden is equally unsustainable. Large enterprises manage 5,000–15,000+ SIEM correlation rules, each requiring constant tuning as environments change. SIEMs now process an average of 259 log types and nearly 24,000 unique log sources: more than enough telemetry to detect over 90% of ATT&CK techniques. Manual, error-prone detection engineering practices, however, limit actual coverage to a fraction of what’s possible.
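Broken rules and missing telemetry interact in a way a short sketch can show. The Python below is a hypothetical model, not any vendor’s method: it treats a rule as broken when a log field it depends on is not ingested, and counts ATT&CK coverage only from rules that can actually fire. The rule names and field names are invented; the technique IDs are real ATT&CK identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DetectionRule:
    name: str
    technique: str               # MITRE ATT&CK technique ID the rule is mapped to
    required_fields: frozenset   # log fields the rule's query depends on


def effective_coverage(rules, ingested_fields, techniques_in_scope):
    """Return (coverage ratio, broken rule names).

    A rule counts as broken when any field it needs is absent from ingested
    telemetry, so it can never fire; only working rules contribute coverage.
    """
    working = [r for r in rules if r.required_fields <= ingested_fields]
    broken = [r.name for r in rules if not r.required_fields <= ingested_fields]
    covered = {r.technique for r in working} & set(techniques_in_scope)
    return len(covered) / len(techniques_in_scope), broken


rules = [
    DetectionRule("encoded_powershell", "T1059.001", frozenset({"process_name", "command_line"})),
    DetectionRule("certutil_download", "T1105", frozenset({"process_name", "command_line"})),
    DetectionRule("impossible_travel", "T1078", frozenset({"user", "src_geo"})),
    DetectionRule("remote_logon_burst", "T1021", frozenset({"user", "logon_type"})),
]
ratio, broken = effective_coverage(
    rules,
    ingested_fields={"process_name", "command_line", "user"},
    techniques_in_scope=["T1059.001", "T1105", "T1078", "T1021"],
)
print(ratio, broken)  # 0.5 ['impossible_travel', 'remote_logon_burst']
```

Four rules exist on paper, but two silently depend on fields nobody ships, so real coverage is half of what the rule inventory suggests.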

🧠 How Intelligence-Based Detection Changes the Game

AI-native SOCs flip the model. Instead of asking “Does this match a known signature?”, behavioral analytics engines ask: “Is this normal for this user, this device, at this time, in this context?”

User and Entity Behavior Analytics (UEBA), ML-driven anomaly detection, dynamic risk scoring, and entity-relationship mapping build behavioral baselines per user, device, and network segment. Deviations get flagged, catching insider threats, credential abuse, and lateral movement that signature-based systems structurally cannot detect.
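A per-entity baseline can be sketched in a few lines. This is a minimal Python illustration, assuming a single numeric metric (megabytes uploaded per day) and a simple z-score test against each user’s own history; real UEBA engines model many features at once and use more robust statistics. The threshold of 3 standard deviations and the five-observation warm-up are illustrative assumptions.

```python
import statistics
from collections import defaultdict


class BehaviorBaseline:
    """Per-entity baseline for one numeric activity metric (e.g. MB uploaded per day)."""

    def __init__(self, z_threshold=3.0, min_history=5):
        self.history = defaultdict(list)   # entity -> past observations
        self.z_threshold = z_threshold
        self.min_history = min_history

    def observe(self, entity, value):
        self.history[entity].append(value)

    def z_score(self, entity, value):
        """How many standard deviations `value` sits from this entity's own mean."""
        past = self.history.get(entity, [])
        if len(past) < self.min_history:
            return 0.0  # too little history to judge; treat as neutral
        mean = statistics.mean(past)
        spread = statistics.pstdev(past) or 1e-9  # guard against a perfectly flat history
        return (value - mean) / spread

    def is_anomalous(self, entity, value):
        return abs(self.z_score(entity, value)) >= self.z_threshold


baseline = BehaviorBaseline()
for mb in [10, 12, 11, 9, 13]:
    baseline.observe("alice", mb)   # alice normally uploads ~11 MB/day
for mb in [450, 520, 480, 550, 500]:
    baseline.observe("bob", mb)     # bob's role involves large transfers
print(baseline.is_anomalous("alice", 500))  # True
print(baseline.is_anomalous("bob", 500))    # False
```

The same 500 MB upload is routine for `bob` and extreme for `alice`, which is exactly the “normal for this user” question a global threshold rule cannot ask.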

The living-off-the-land binary (LOLBin) example makes this concrete: a legitimate Windows utility like certutil.exe used for data exfiltration looks “normal” to rule-based systems because it matches no malicious signature. But behavioral AI that tracks baseline usage patterns for that binary (who runs it, when, how often, and with what parameters) immediately flags the anomaly. Same binary, same environment. One system sees legitimate tool usage; the other sees exfiltration.
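The certutil.exe contrast can be sketched the same way. Below, a hypothetical signature list never matches the legitimately signed binary, while a behavioral baseline keyed on (binary, user, command-line switches) flags a first-ever `-encode` run by a user who has never touched the tool. The user names, file names, and 1% rarity threshold are assumptions for illustration, not a production detection.

```python
from collections import Counter

KNOWN_BAD_NAMES = {"mimikatz.exe"}  # hypothetical signature list


def signature_rule_fires(process_name):
    """Rule-based view: only known-malicious binaries match."""
    return process_name.lower() in KNOWN_BAD_NAMES


def switches(command_line):
    """Extract switch-style tokens such as '-encode' or '/f' from a command line."""
    return frozenset(t.lower() for t in command_line.split() if t.startswith(("-", "/")))


class BinaryUsageBaseline:
    """Behavioral view: how often has this user run this binary with these switches?"""

    def __init__(self, rarity_threshold=0.01):
        self.seen = Counter()    # (binary, user, switches) -> count
        self.totals = Counter()  # binary -> total executions in the baseline window
        self.rarity_threshold = rarity_threshold

    def observe(self, binary, user, command_line):
        self.seen[(binary.lower(), user, switches(command_line))] += 1
        self.totals[binary.lower()] += 1

    def is_unusual(self, binary, user, command_line):
        total = self.totals[binary.lower()]
        if total == 0:
            return True  # binary never observed in the baseline window at all
        count = self.seen[(binary.lower(), user, switches(command_line))]
        return count / total < self.rarity_threshold


baseline = BinaryUsageBaseline()
for _ in range(200):
    baseline.observe("certutil.exe", "svc_pki", "certutil -verify cert.cer")

cmd = "certutil -encode q3_financials.xlsx q3.txt"
print(signature_rule_fires("certutil.exe"))                                        # False
print(baseline.is_unusual("certutil.exe", "jdoe", cmd))                            # True
print(baseline.is_unusual("certutil.exe", "svc_pki", "certutil -verify new.cer"))  # False
```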

Rules vs. Intelligence: Side-by-Side Comparison

| Dimension | Rule-Based Detection | Intelligence-Based Detection |
|---|---|---|
| Detection Scope | Known threats with existing signatures | Known + unknown threats via behavioral deviation |
| Zero-Day Coverage | ❌ Minimal, requires signature update | ✅ Catches anomalous behavior regardless of novelty |
| False Positive Rate | High, rigid thresholds trigger on benign activity | Lower, contextual baselines reduce noise |
| Maintenance Burden | 5,000–15,000+ rules requiring constant tuning | Self-learning baselines adapt automatically |
| Scalability | Linear, more rules = more overhead | Sub-linear, ML models improve with more data |
| Adaptability | ❌ Static until manually updated | ✅ Evolves with threat landscape and environment |

It’s worth noting: rules still serve an important purpose. Compliance-mandated detections, known-bad IOC blocking, and baseline threat coverage all require deterministic rules that fire reliably and predictably. The argument isn’t rules or intelligence; it’s that rules alone create a system that can only defend against yesterday’s threats.

How UnderDefense Bridges Both Approaches

UnderDefense’s MAXI platform combines rule-based detection (for known threats and compliance requirements) with AI-driven behavioral intelligence, ensuring coverage across the full threat spectrum without the unsustainable maintenance burden of managing thousands of static rules. This hybrid detection approach means your compliance baselines stay intact while behavioral AI catches the novel threats rules were never designed to find.

Q3. Manual vs. Automated Triage: How Much Time Is Your SOC Wasting on False Positives?

⏰ The 2:47 AM Scenario Every Security Leader Knows

It’s 2:47 AM on a Tuesday. Your phone buzzes with the 14th critical alert this week from your SIEM. You log in, spend 45 minutes investigating, and discover it’s another false positive: a developer running a legitimate script that triggered a PowerShell execution rule. You’ve been awake for an hour. You still don’t know if you missed something real buried in the noise.

This happens because traditional triage requires humans to be the manual correlation layer between siloed tools. Your EDR sees endpoint behavior, but your identity tool sees user context, and neither can reason across both. You become the integration engine, investigating alerts that should be auto-verified.
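The missing correlation layer is, at its simplest, a join across sources on a shared entity within a time window. The Python sketch below assumes every alert already carries a normalized `user` field and a timestamp, which in practice is the hard part; the 30-minute window and the alert contents are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Alert:
    source: str      # e.g. "edr" or "identity"
    user: str
    time: datetime
    summary: str


def correlate(alerts, window=timedelta(minutes=30)):
    """Pair identity and EDR alerts for the same user that fall within `window` of each other."""
    identity = [a for a in alerts if a.source == "identity"]
    edr = [a for a in alerts if a.source == "edr"]
    return [
        (i, e)
        for i in identity
        for e in edr
        if i.user == e.user and abs(e.time - i.time) <= window
    ]


t = datetime(2025, 1, 14, 2, 0)
alerts = [
    Alert("identity", "jane", t + timedelta(minutes=10), "sign-in from new country"),
    Alert("edr", "jane", t + timedelta(minutes=25), "encoded PowerShell launched"),
    Alert("edr", "bob", t + timedelta(minutes=20), "encoded PowerShell launched"),
    Alert("edr", "jane", t + timedelta(hours=3), "unsigned binary executed"),
]
for ident, endpoint in correlate(alerts):
    print(f"{ident.user}: {ident.summary} -> {endpoint.summary}")
```

The nested loop is quadratic and fine for a sketch; a real pipeline would index alerts by user and time.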

❌ Why This Problem Exists, and What It Really Costs

The root cause is architectural: fragmented tools without cross-correlation force manual investigation at every step. The numbers paint an operational crisis:

- ⏰ 25–27% of analyst time wasted chasing false positives every shift

- ⚠️ 51% of SOC teams feel overwhelmed by daily alert volume

- 💸 71% of SOC analysts report burnout, citing alert fatigue as the primary driver

- ❌ 74% of breaches had alerts generated but ignored because analysts were overwhelmed

- ⏰ Average SOC analyst tenure is now 18–24 months or less before turnover

- ⚠️ 13% of SIEM rules are broken and will never trigger, creating invisible coverage gaps

Every departed analyst takes institutional knowledge with them: the context about what’s normal in your environment that no playbook captures. That’s the hidden cost nobody puts in the budget: the erosion of organizational defense memory.

✅ How Automated Triage Should Actually Work

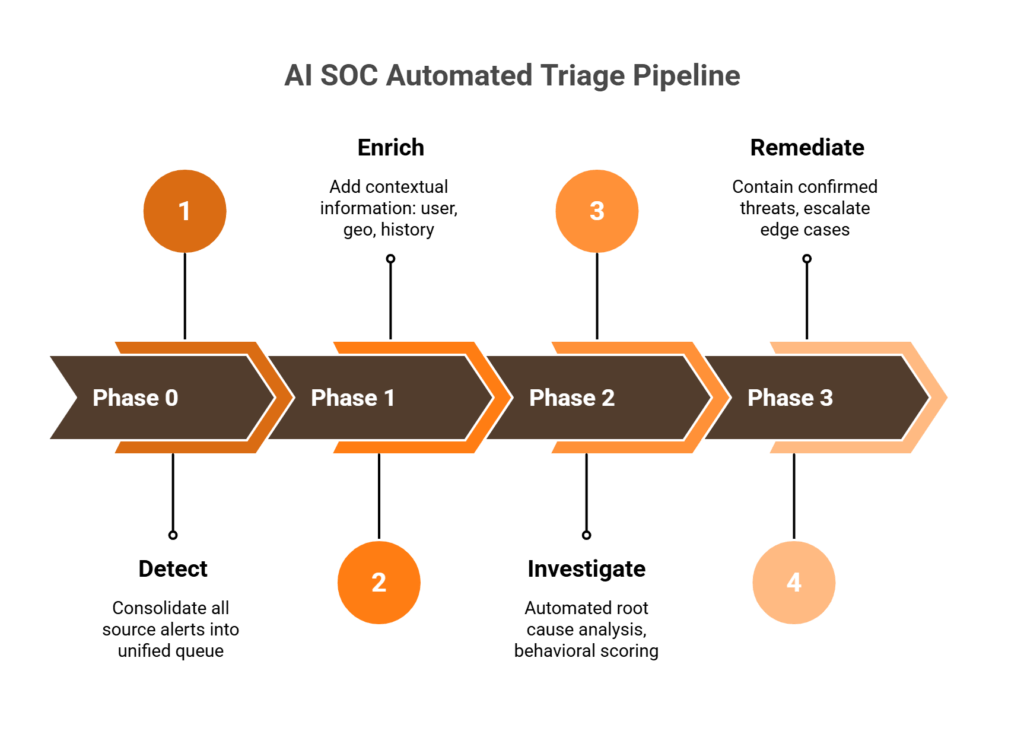

The right system doesn’t eliminate human judgment; it focuses judgment on decisions that actually matter. Here’s how the four-phase automated triage process works:

- Phase 0, Detect: Consolidate alerts from all sources (EDR, SIEM, cloud, identity) into a unified queue with normalized severity

- Phase 1, Enrich: Add contextual information: threat nature, severity, affected assets, user history, geolocation, and recent access patterns

- Phase 2, Investigate: Automated root cause analysis using playbooks, IOC correlation, behavioral scoring, and cross-telemetry reasoning

- Phase 3, Remediate: Automated containment for confirmed threats (credential revocation, endpoint isolation) with human escalation reserved for edge cases and high-severity executive decisions
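The four phases map naturally onto a small pipeline. This Python sketch is illustrative only: the severity mapping, the `new_country_login` and `executive_device` context flags, and the scoring weights are invented, and a real Phase 2 would correlate IOCs and behavior rather than add fixed points. It does show the intended shape: one normalized queue, context attached before any decision, automatic containment for routine confirmed threats, and a human for executive-level cases.

```python
from dataclasses import dataclass, field

# Hypothetical mapping of vendor-specific severities onto one scale.
SEVERITY = {"critical": 4, "high": 3, "medium": 2, "low": 1}


@dataclass
class Case:
    source: str
    user: str
    host: str
    severity: int
    context: dict = field(default_factory=dict)
    verdict: str = ""
    action: str = ""


def detect(raw_alerts):
    """Phase 0: normalize alerts from every tool into one queue, highest severity first."""
    cases = [
        Case(a["source"], a["user"], a["host"], SEVERITY.get(a["severity"].lower(), 1))
        for a in raw_alerts
    ]
    return sorted(cases, key=lambda c: c.severity, reverse=True)


def enrich(case, user_db, asset_db):
    """Phase 1: attach user history and asset context (both lookups are stand-ins)."""
    case.context["user"] = user_db.get(case.user, {})
    case.context["asset"] = asset_db.get(case.host, {})
    return case


def investigate(case):
    """Phase 2: toy risk score; a real system would correlate IOCs and behavior here."""
    score = case.severity
    if case.context["user"].get("new_country_login"):
        score += 2
    if case.context["asset"].get("executive_device"):
        score += 1
    case.verdict = "likely_malicious" if score >= 5 else "likely_benign"
    return case


def remediate(case):
    """Phase 3: auto-contain routine threats; route executive-impact cases to a human."""
    if case.verdict != "likely_malicious":
        case.action = "close_with_note"
    elif case.context["asset"].get("executive_device"):
        case.action = "escalate_to_analyst"
    else:
        case.action = "isolate_endpoint"
    return case


users = {"jane": {"new_country_login": True}}
assets = {"ceo-mbp": {"executive_device": True}}
raw = [
    {"source": "edr", "severity": "High", "user": "jane", "host": "lt-042"},
    {"source": "siem", "severity": "low", "user": "dev1", "host": "build-7"},
    {"source": "identity", "severity": "critical", "user": "ceo", "host": "ceo-mbp"},
]
for case in detect(raw):
    remediate(investigate(enrich(case, users, assets)))
    print(case.user, case.verdict, case.action)
```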

AI triage reduces MTTR from hours to minutes while achieving 100% alert coverage versus the 30–50% typical of manual triage, because machines don’t get tired at 2 AM and they don’t skip alerts during shift changes.

🛡️ UnderDefense’s Approach: From Alert Noise to Managed Outcomes

The UnderDefense MAXI platform ingests alerts from your existing stack (CrowdStrike, Splunk, Microsoft Defender, Okta, and 250+ more) and correlates them through AI-driven enrichment. When behavioral alerts need context (“Did Jane run that PowerShell script?”), our analysts reach out directly via Slack or Teams to verify with the user. Confirmed threats get contained immediately, with compromised credentials revoked and endpoints isolated while you sleep. You review the incident report in the morning, not the raw alert at 2 AM.

The result: 99% reduction in customer-facing alerts through custom detection tuning and direct user verification.

“The biggest win for me was getting actual control over our security alerts. Before the guys from UD stepped in, we were getting bombarded with alerts from all our security tools. Their team cleaned up our configurations and got the noise under control within the first week.”

— Verified User in Marketing and Advertising UnderDefense – G2 Verified Review

“Before MaxiMDR, we were slightly overwhelmed with alerts and often unsure of how to prioritize or respond to them. Now, not only do we get alerts, but we also get clear guidance on how to handle them. This has significantly reduced our response time… false positives have become a rarity.”

— Valeriia D., Marketing Specialist UnderDefense – G2 Verified Review

“Started out well but over the years the service has consistently not met expectations. The issues that we have experienced has greatly outweighed the benefits… Analysts provide little context, and when asked for more information in the investigation nothing is ever provided.”

— CISO, Manufacturing Arctic Wolf – Gartner Verified Review

From 45-minute 2 AM investigations to morning incident summaries: that’s the shift from alert noise to managed response.

Q4. What Is Non-Deterministic Risk in AI SOC, and Why Should Your Security Team Care?

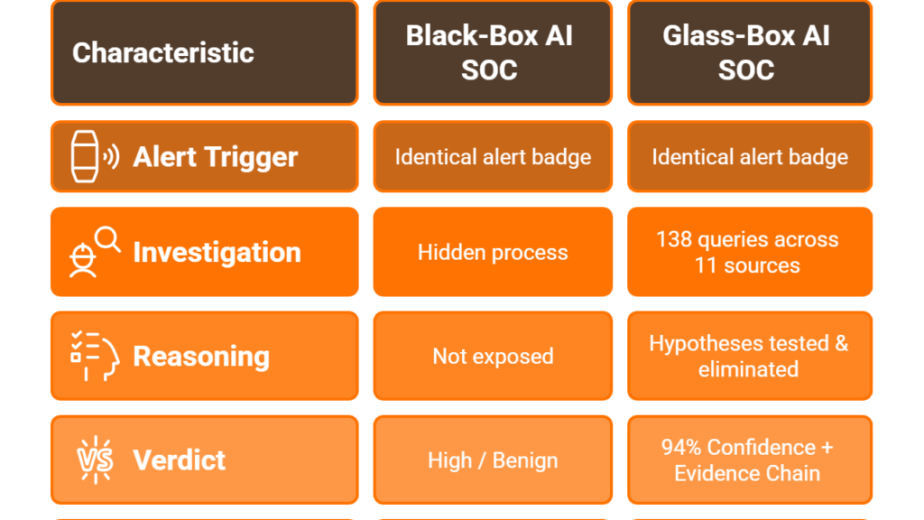

🔬 Deterministic vs. Non-Deterministic AI: Why the Distinction Matters in Security

In most technology discussions, the difference between deterministic and non-deterministic AI is academic. In security operations, it’s operational, and getting it wrong has direct consequences for your threat posture, compliance standing, and incident response reliability.

Deterministic AI produces the same output for the same input, every time. Rule-based systems, decision trees, and static SIEM playbooks are deterministic. A SIEM correlation rule either fires or it doesn’t. You can trace exactly why, reproduce the decision, and explain it to an auditor.

Non-deterministic AI produces probabilistic outputs. Large language models and other generative systems can return different outputs for identical inputs because they sample from a probability distribution at inference time, and any machine-learning model can change its verdicts after it is retrained or updated. In a SOC context, this means a non-deterministic AI model might classify the same alert as “critical” today and “low” tomorrow, not because the threat changed, but because of sampling randomness or a model update.
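The distinction is easy to see in code. In the Python sketch below, `rule_classify` is a fixed threshold, while `sampled_classify` draws its label from a softmax over toy scores, the way LLM decoding samples tokens. The scores and the 10-failed-logins threshold are hypothetical. Passing a seeded `random.Random` makes the sampled classifier reproducible, which is one reason recording seeds and model versions helps when a decision must be replayed for an auditor.

```python
import math
import random


def rule_classify(alert):
    """Deterministic: identical input always produces the identical label."""
    return "critical" if alert["failed_logins"] >= 10 else "low"


def sampled_classify(alert, temperature=1.0, rng=random):
    """Non-deterministic: sample a label from a softmax, as LLM decoding samples tokens.

    The logits are toy values; a real model would compute them from the alert.
    `temperature` must be positive.
    """
    logits = {"critical": alert["failed_logins"] / 5, "low": 1.5}
    top = max(logits.values())  # subtract the max for numerical stability
    weights = [math.exp((v - top) / temperature) for v in logits.values()]
    return rng.choices(list(logits), weights=weights, k=1)[0]


alert = {"failed_logins": 8}
print({rule_classify(alert) for _ in range(100)})     # {'low'}
print({sampled_classify(alert) for _ in range(100)})  # usually both labels
```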

That inconsistency has direct operational consequences that most AI SOC vendors aren’t discussing openly.

⚠️ Security Implications of Non-Deterministic AI in the SOC

Here’s where the rubber meets the road. When you deploy non-deterministic AI in security operations, you introduce five categories of risk:

- Inconsistent triage decisions: The same alert classified differently across runs creates unpredictable security posture and unreliable SLAs. If your board asks “Will this alert always be caught?”, the honest answer with pure non-deterministic systems is “probably.”

- Auditability gaps: HIPAA, SOC 2, and ISO 27001 audits expect security decisions to be explainable and reproducible. Non-deterministic models strain this fundamental compliance requirement because you can’t replay a neural network’s reasoning the way you can trace a SIEM rule.

- Hallucinated remediation: AI generating false remediation steps, misidentifying threat actors, or fabricating security context that doesn’t exist. In a SOC context, hallucinated remediation can mean isolating the wrong endpoint or revoking the wrong credentials.

- Model drift: Detection accuracy degrading over time as the threat landscape evolves faster than retraining cycles. What worked in Q1 may miss critical threats by Q4 without active monitoring.

- Adversarial exploitation: Attackers crafting inputs that exploit probabilistic classification boundaries, including prompt injection against AI SOC agents and adversarial samples that sit exactly at the decision threshold of behavioral models.
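Inconsistency and drift are measurable. One hedged approach, sketched in Python below, is to replay a fixed “golden” set of alerts through the classifier many times and flag any alert whose label agreement falls under a threshold; rerunning the same set after every model update gives a simple drift signal. The 90% agreement threshold, the alert IDs, and the `flaky` stand-in model are assumptions for illustration.

```python
import random
from collections import Counter


def consistency(classify, alert, runs=50):
    """Return (modal label, fraction of runs agreeing with it); 1.0 means fully consistent."""
    labels = Counter(classify(alert) for _ in range(runs))
    label, count = labels.most_common(1)[0]
    return label, count / runs


def unstable_alerts(classify, alerts, min_agreement=0.9, runs=50):
    """List alerts whose triage label is too unstable to act on automatically."""
    flagged = []
    for alert_id, alert in alerts.items():
        label, agreement = consistency(classify, alert, runs)
        if agreement < min_agreement:
            flagged.append((alert_id, label, agreement))
    return flagged


rng = random.Random(0)


def deterministic(alert):
    return "critical" if alert["score"] > 0.8 else "low"


def flaky(alert):
    # Stand-in for a sampled model sitting near its decision boundary.
    return rng.choice(["critical", "low"]) if 0.45 < alert["score"] < 0.55 else "low"


golden_set = {"A-1": {"score": 0.9}, "A-2": {"score": 0.5}, "A-3": {"score": 0.1}}
print(unstable_alerts(deterministic, golden_set))             # []
print([a for a, _, _ in unstable_alerts(flaky, golden_set)])  # ['A-2']
```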

🛠️ A Practical Framework for Managing Non-Deterministic Risk

The goal isn’t to avoid non-deterministic AI; behavioral detection genuinely catches threats that rules structurally miss. The goal is to contain the risk. Here’s a six-step framework grounded in operational reality:

- Human-in-the-loop for high-severity decisions: Critical alerts never get auto-actioned without analyst confirmation. Automation handles Tier-1; humans own Tier-3+.

- Reasoning traces for every triage decision: Every AI-generated classification should include an explainable audit trail, what data was considered, what the confidence score was, and why that classification was chosen. Explainability isn’t optional; it’s a compliance requirement.

- Confidence thresholds and guardrails: Auto-act only above 95% confidence. Below that threshold, escalate to a human analyst. This creates a “safety cage” around probabilistic decisions.

- Deterministic fallback rules for compliance-critical scenarios: Known compliance violations (e.g., PCI data exposure, unauthorized PHI access) always trigger deterministic response, regardless of what the behavioral model says.

- Continuous model monitoring for drift: Track classification consistency over time with automated retraining triggers when accuracy drops below baseline.

- Adversarial testing: Red-team AI models regularly for prompt injection, evasion techniques, and boundary exploitation. If you’re not testing how your AI fails, you don’t understand how it works.
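Several of these guardrails compose into a single routing function. The Python sketch below applies them in order: deterministic fallback for compliance-critical tags, human review for high severity, auto-action only above the 95% confidence threshold, and a reasoning trace on every path. The tag names, route names, and trace fields are hypothetical, and a real trace would also record the model version and input data.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

AUTO_ACT_THRESHOLD = 0.95  # "auto-act only above 95% confidence"
# Hypothetical tags for scenarios that must always take the deterministic path.
COMPLIANCE_CRITICAL = {"pci_data_exposure", "unauthorized_phi_access"}


@dataclass
class Decision:
    alert_id: str
    route: str                      # deterministic_playbook | analyst_review | auto_action
    trace: dict = field(default_factory=dict)


def route_alert(alert_id, tags, severity, model_label, confidence, evidence):
    """Apply guardrails in order and record a reasoning trace for every decision."""
    trace = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_label": model_label,
        "confidence": confidence,
        "evidence": list(evidence),
    }
    if set(tags) & COMPLIANCE_CRITICAL:
        trace["reason"] = "compliance-critical tag: deterministic fallback"
        return Decision(alert_id, "deterministic_playbook", trace)
    if severity in ("high", "critical"):
        trace["reason"] = "high severity: human-in-the-loop required"
        return Decision(alert_id, "analyst_review", trace)
    if confidence > AUTO_ACT_THRESHOLD:
        trace["reason"] = f"confidence {confidence:.2f} above {AUTO_ACT_THRESHOLD}"
        return Decision(alert_id, "auto_action", trace)
    trace["reason"] = f"confidence {confidence:.2f} not above {AUTO_ACT_THRESHOLD}"
    return Decision(alert_id, "analyst_review", trace)


d = route_alert("A-17", [], "low", "malicious", 0.97, ["hash seen on 3 hosts"])
print(d.route, "|", d.trace["reason"])  # auto_action | confidence 0.97 above 0.95
```

Ordering matters here: the deterministic and severity checks run before the confidence check, so a highly confident model can never auto-act on a compliance-critical or high-severity alert.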

The Cloud Security Alliance (CSA) guidance on deterministic vs. generative AI for automated security reinforces this hybrid approach: use deterministic systems for predictable, auditable baseline defense, and layer non-deterministic intelligence on top with appropriate guardrails.

How UnderDefense Addresses Non-Deterministic Risk

UnderDefense’s AI SOC architecture addresses non-deterministic risk through a hybrid approach: AI-driven detection and triage with mandatory human analyst validation for critical decisions. Every triage decision in the UnderDefense MAXI platform includes a reasoning trace for full auditability, and concierge analysts provide the human judgment layer that pure AI cannot guarantee, ensuring both regulatory compliance and operational reliability.

Q5. AI SOC vs. Traditional SOC: The Complete Head-to-Head Comparison with Performance Metrics

You’re evaluating whether your current SOC architecture, built on SIEM rules, manual triage, and tiered escalation, can still keep pace with the threat landscape in 2026. The question isn’t whether AI will transform SOC operations but whether your organization can afford to wait while attackers already use agentic AI that operates 24/7 without fatigue.

Both models have legitimate use cases. But the false dichotomy of “security vs. efficiency” dissolves the moment you combine AI-driven detection with experienced human analysts. Here’s the honest breakdown: qualitative architecture comparison and quantitative performance evidence combined.

✅ Traditional SOC: Real Strengths, Real Breaking Points

Strengths are real: predictable, deterministic detection; established compliance frameworks; deep human analyst judgment for complex investigations; and full auditability of every decision. These matter, especially in heavily regulated environments.

But the limitations compound fast:

❌ Cannot scale with alert volume. Mid-market teams face 10,000+ daily alerts, yet only 22% require genuine investigation

❌ False positive rates of 52–80% consume analyst time on noise, not real threats

❌ SOC analyst turnover hit 28% annually in 2024, with 67% reporting severe burnout

❌ MTTR measured in 4–24 hours; MTTD measured in hours to days

❌ Cost scales linearly with headcount: $150K+ per analyst × 3 shifts = $450K+ per year for a single around-the-clock seat, before covering weekends, vacations, and sick leave

❌ Organizations lose approximately $1.4M annually per burned-out senior analyst through recruitment, training, and knowledge transfer costs
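The staffing arithmetic behind these figures is worth writing out. This Python sketch reuses the text’s numbers ($150K per analyst, three shifts, $11–15 per endpoint per month) and adds one fact the three-shift shorthand hides: a week has 168 hours, so at 40 hours per analyst a single seat needs 4.2 people before any leave is counted. The 1,000-endpoint fleet is hypothetical, and the per-endpoint figure covers only the service fee, not any internal staff you keep.

```python
HOURS_PER_WEEK = 24 * 7


def staffing_cost(analyst_cost=150_000, analysts_per_seat=3):
    """Annual cost of keeping one SOC seat staffed, using the figures in the text."""
    return analyst_cost * analysts_per_seat


def fte_per_seat(hours_per_analyst_week=40):
    """Analysts needed to cover every hour of the week (ignores vacation and sick leave)."""
    return HOURS_PER_WEEK / hours_per_analyst_week


def per_endpoint_cost(endpoints, price_per_endpoint_month):
    """Annual cost under per-endpoint pricing."""
    return endpoints * price_per_endpoint_month * 12


print(staffing_cost())                                             # 450000
print(fte_per_seat())                                              # 4.2
print(round(staffing_cost(analysts_per_seat=fte_per_seat())))      # 630000
print(per_endpoint_cost(1_000, 11), per_endpoint_cost(1_000, 15))  # 132000 180000
```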

When containment drags on, costs climb: the average breach now costs $4.88M, and breaches that take more than 200 days to identify and contain cost well above that average. These aren’t theoretical numbers; they’re the documented operational reality that keeps CISOs awake at night.

✅ AI SOC: Speed, Scale, and the Right Guardrails

AI SOC closes the structural gaps traditional models can’t address at any headcount level:

✅ Behavioral detection catches novel threats that signature-based rules consistently miss

✅ Automated triage reduces false positives by 60–90%, reclaiming analyst hours for strategic work

✅ MTTR measured in minutes, not hours. MTTD in minutes versus days

✅ 100% alert coverage: every single alert gets investigated, not just the subset a human team has time to reach

✅ 24/7 coverage without shift fatigue, weekend gaps, or holiday blind spots

✅ Organizations extensively using AI and automation save an average of $2.2M per breach compared to those without

⚠️ Important considerations remain: non-deterministic risk requires guardrails (confidence thresholds, reasoning traces, human-in-the-loop for high-severity decisions), an initial tuning period of 2–4 weeks is expected, organizational readiness matters (see Q6), and human oversight stays essential for critical decisions.

The Head-to-Head Performance Table

| Dimension | Traditional SOC | AI SOC + Human Ally |
|---|---|---|
| Detection Method | Signature/Rule-Based | Behavioral/ML + Rules |
| Alert Triage | Manual Tier-1 | AI-Automated |
| Response Speed (MTTR) | 4–24 hours | ≤ 30 minutes (UnderDefense: 0.5h) |
| Mean Time to Detect (MTTD) | Hours to Days | Minutes |
| False Positive Rate | 52–80% | 5–15% (UnderDefense: 99% noise reduction) |
| Alert Coverage | 22–50% investigated | 100% investigated |
| Scalability | Linear headcount growth | Elastic with data volume |
| Annual Cost Model | Per-analyst × shifts ($450K+/yr) | Per-endpoint ($11–15/endpoint/month) |
| Compliance Evidence | Manual collection (20+ hrs/audit) | Automated audit trails |
| Analyst Role | Tier-1 triage grunt work | Strategic threat hunting |
| MITRE ATT&CK Coverage | Varies by rule library | 96% (UnderDefense documented) |

Who Should Choose What

Choose traditional SOC if you operate in a heavily regulated environment with fewer than 500 endpoints, minimal alert volume, and an established in-house team with low turnover. Choose AI SOC if alert volume exceeds team capacity, false positives dominate triage time, or you need 24/7 coverage without tripling headcount. Choose the AI SOC + Human Ally model if you need the efficiency of AI with the judgment of experienced analysts, especially mid-market companies, scaling tech firms, healthcare organizations, and PE portfolio companies that need enterprise-grade protection without building a 20-person SOC.

Q6. Is Your Organization Ready for an AI SOC? A 10-Point Readiness Scorecard

Here’s the thing about SOC upgrades: timing matters as much as the technology itself. Upgrade too early without operational readiness, and you waste the investment. Wait too long, and you’re accumulating security debt that compounds daily. I’ve seen both failure modes across hundreds of engagements, and neither is pretty.

Score your SOC operations against these 10 readiness criteria to determine whether you’re ready for an AI SOC upgrade, and how urgently you need to move.

The 10-Point Readiness Checklist

- ☐ Alert volume exceeds 1,000/day with <50% investigation rate

- ☐ False positive rate above 50%

- ☐ MTTR exceeds 4 hours for critical incidents

- ☐ 3+ unfilled SOC analyst positions or >30% annual turnover

- ☐ Analysts spending >60% of time on Tier-1 triage instead of threat hunting

- ☐ No true 24/7/365 coverage (gaps on nights, weekends, and holidays)

- ☐ SIEM rule count exceeds 5,000 with monthly tuning consuming analyst time

- ☐ 3+ siloed security tools with no unified correlation or single-pane visibility

- ☐ Compliance evidence requires manual collection taking 20+ hours per audit cycle

- ☐ Security team focused entirely on reactive response with zero proactive hunting capacity

⚠️ Score Interpretation

8–10 checks = CRITICAL: Your SOC is structurally overwhelmed and accumulating security debt daily. An AI SOC upgrade is urgent. Begin vendor evaluation immediately and target a 30-day pilot deployment.

4–7 checks = HIGH PRIORITY: Significant operational gaps exist, and they compound over time. Begin AI SOC evaluation, run a targeted pilot on your highest-pain workflow (typically Tier-1 triage automation), and plan phased migration within 90 days.

1–3 checks = MODERATE: Your SOC has foundational capabilities, but specific gaps persist. Consider targeted AI augmentation for triage automation, compliance evidence generation, or after-hours coverage before committing to a full migration.

0 checks = OPTIMIZED: Your SOC is performing well. Focus on advanced threat hunting, red team exercises, and continuous detection engineering.
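The scorecard can be applied mechanically to metrics you already track. This Python sketch encodes the ten criteria and the four score bands above; the metric key names and the sample values are hypothetical, and items like “monthly tuning consuming analyst time” are reduced to simple booleans.

```python
def readiness_checks(m):
    """Evaluate the 10 scorecard items from a dict of SOC metrics (key names are hypothetical)."""
    return {
        "alert_volume": m["alerts_per_day"] > 1000 and m["investigation_rate"] < 0.50,
        "false_positives": m["false_positive_rate"] > 0.50,
        "mttr": m["critical_mttr_hours"] > 4,
        "staffing": m["unfilled_positions"] >= 3 or m["annual_turnover"] > 0.30,
        "tier1_load": m["tier1_time_share"] > 0.60,
        "coverage_gaps": not m["true_24x7"],
        "rule_sprawl": m["siem_rules"] > 5000 and m["monthly_tuning"],
        "tool_silos": m["siloed_tools"] >= 3 and not m["unified_correlation"],
        "audit_effort": m["audit_evidence_hours"] >= 20,
        "no_hunting": m["proactive_hunting_share"] == 0,
    }


def readiness_tier(checked_count):
    """Map the number of checked boxes onto the score bands above."""
    if checked_count >= 8:
        return "CRITICAL"
    if checked_count >= 4:
        return "HIGH PRIORITY"
    if checked_count >= 1:
        return "MODERATE"
    return "OPTIMIZED"


metrics = dict(
    alerts_per_day=4200, investigation_rate=0.35, false_positive_rate=0.62,
    critical_mttr_hours=6, unfilled_positions=1, annual_turnover=0.25,
    tier1_time_share=0.70, true_24x7=False, siem_rules=3000, monthly_tuning=True,
    siloed_tools=5, unified_correlation=False, audit_evidence_hours=12,
    proactive_hunting_share=0.10,
)
checks = readiness_checks(metrics)
score = sum(checks.values())
print(score, readiness_tier(score))  # 6 HIGH PRIORITY
```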

How UnderDefense Closes Every Gap

We designed UnderDefense to turn every checked box into a resolved gap. Our platform delivers 24/7/365 monitoring with 0.5-hour MTTR for critical incidents and AI-automated triage that reduces noise by 99%. UnderDefense MAXI integrates vendor-agnostically across 250+ existing tools, with no stack replacement required. Automated compliance evidence generation covers SOC 2, HIPAA, and ISO 27001 frameworks. Dedicated concierge analysts provide proactive threat hunting with 96% MITRE ATT&CK coverage. Most organizations go from 6–8 checked gaps to full operational coverage within 30 days of onboarding.

“UnderDefense act as an extension of our team, so we don’t need additional resources, ensuring 24/7 protection. It also solved our problem of having separate security tools that didn’t work well together. Now, everything is connected and easier to manage.”

— Inga M., CEO UnderDefense – G2 Verified Review

“Not having to worry about ransomware, alert overload and reporting. Getting a clear view of my security posture, where the threats are coming from and how they are handled. They literally took care of all our problems.”

— Arlin O., Enterprise UnderDefense – G2 Verified Review

“Our IT team was overwhelmed by the sheer volume of security alerts and doesn’t have the resources for 24/7 monitoring.”

— Andriy H., Co-Founder and CTO UnderDefense – G2 Verified Review

📝 Next Step

Scored 4 or above? Book a 15-minute security gap assessment to see exactly where UnderDefense closes the holes, or start with a 30-day pilot to validate AI SOC results against your current metrics before committing. No stack replacement required.

Q7. What Are the Real Risks of an AI SOC Upgrade, and What Does a Phased Migration Look Like?

Every enterprise purchase has a moment of hesitation. For AI SOC upgrades, that hesitation usually comes down to three specific concerns. Rather than dismissing them, let me address each one directly, because the objections are legitimate, even if the conclusions people draw from them aren’t always correct.

Objection 1: “We can’t trust non-deterministic AI with security-critical decisions.”

This is the most sophisticated concern, and it’s well-founded. Non-deterministic models can produce inconsistent outputs, and in security, inconsistency equals risk. But the solution isn’t avoiding AI altogether. It’s architecting proper guardrails: human-in-the-loop for critical decisions, confidence thresholds that trigger manual review, deterministic fallbacks for high-severity events, and reasoning traces you can audit after the fact. Our concierge analyst model ensures human validation on every high-severity decision. AI accelerates the investigation; humans make the call.

Objection 2: “We’ve invested $500K+ in our SIEM/EDR stack. Switching means writing that off.”

💰 Sunk-cost concern is real and rational. Adding another vendor feels like complexity, not simplification. Here’s the critical difference: UnderDefense doesn’t require stack replacement. UnderDefense MAXI integrates with 250+ existing tools, including CrowdStrike, Splunk, SentinelOne, Microsoft Defender, Okta, and Palo Alto. Your current investments become more effective, not obsolete.

Contrast that with providers who force proprietary SIEM replacement. As one experienced CISO shared in a conversation with us: when you switch vendors, “all of my business logic, the correlation rules, the automation rules, that does not come with.” You start over from zero on tuning. We built UnderDefense specifically so your business logic stays yours. Our analysts log into your system; we don’t force you onto ours.

Objection 3: “What if AI misses something a human would catch?”

⚠️ The “automation paradox.” Valid concern, wrong framing. AI SOC doesn’t replace analysts but redirects them from Tier-1 triage to strategic threat hunting where human judgment matters most. Our 100% ransomware prevention record across 500+ clients over 6 years proves this model works precisely because it combines AI detection with human validation, not AI-only decision making.

⏰ The Phase-by-Phase Migration Roadmap

Phase 1 (Weeks 1–2): Discovery & Integration: Connect existing security tools to the AI SOC platform, establish behavioral baselines, and configure detection tuning. Decision gate: validate data ingestion and initial correlation accuracy.

Phase 2 (Weeks 3–4): AI-Assisted Triage Pilot: AI handles Tier-1 triage in parallel with your existing SOC in shadow mode. Measure false positive reduction and alert accuracy side-by-side. Decision gate: compare AI triage accuracy vs. manual triage on the same alert set.

Phase 3 (Weeks 5–8): Graduated Handoff: Progressively shift Tier-1 triage to AI with human oversight. Activate ChatOps user verification. Enable automated containment for high-confidence threats. Decision gate: MTTR improvement + analyst time reclaimed metrics.

Phase 4 (Weeks 9–12): Full Operational Mode: AI SOC handles Tier-1/Tier-2 at scale. Analysts focus on Tier-3 threat hunting and strategic security. Compliance automation active. 24/7 coverage fully operational.
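The Phase 2 decision gate amounts to scoring two sets of verdicts against the same confirmed outcomes. The Python sketch below assumes each shadow-mode alert has a confirmed `truth` label plus the AI and manual verdicts; the pass criterion (AI at least as accurate as manual triage and missing no more real threats) is an illustrative assumption, not a standard.

```python
def shadow_mode_report(alerts):
    """Compare AI and manual triage on the same alert set against confirmed outcomes.

    Each alert is a dict with 'truth', 'ai', and 'manual' labels ('malicious' or 'benign').
    """
    n = len(alerts)

    def stats(key):
        correct = sum(a[key] == a["truth"] for a in alerts)
        missed = sum(a["truth"] == "malicious" and a[key] == "benign" for a in alerts)
        return {"accuracy": correct / n, "missed_threats": missed}

    ai, manual = stats("ai"), stats("manual")
    agreement = sum(a["ai"] == a["manual"] for a in alerts) / n
    # Hypothetical gate: AI must match or beat manual accuracy and miss no more real threats.
    gate_passed = (ai["accuracy"] >= manual["accuracy"]
                   and ai["missed_threats"] <= manual["missed_threats"])
    return {"ai": ai, "manual": manual, "agreement": agreement, "gate_passed": gate_passed}


sample = [
    {"truth": "malicious", "ai": "malicious", "manual": "malicious"},
    {"truth": "benign", "ai": "benign", "manual": "malicious"},
    {"truth": "benign", "ai": "benign", "manual": "benign"},
    {"truth": "malicious", "ai": "malicious", "manual": "benign"},
]
print(shadow_mode_report(sample))
```

Tracking missed threats separately from overall accuracy matters: a triage engine can look accurate on a benign-heavy alert set while still missing the few alerts that count.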

UnderDefense Compresses the Timeline

We compress this into a 30-day turnkey deployment because MAXI’s vendor-agnostic architecture eliminates the integration complexity that stretches other migrations to 6+ months. You validate results within 30 days, before full commitment.

“The speed of onboarding was a delightful surprise. In times where integrating new systems can take weeks, UnderDefense had us up and running in no time.”

— Valeriia D., Marketing Specialist UnderDefense – G2 Verified Review

“Despite the complexity involved, they delivered the deployment to 1,200 endpoints in just 23 business days.”

— Oleksii M., Mid-Market UnderDefense – G2 Verified Review

UnderDefense detected threats 2 days faster than CrowdStrike OverWatch in a documented head-to-head comparison, while integrating with, not replacing, the customer’s existing CrowdStrike deployment. That’s the difference between rip-and-replace and upgrade-in-place.

Q8. How Should You Evaluate AI SOC Platforms? A 7-Criteria Decision Framework

Choosing an AI SOC platform means committing to a security architecture that will protect your organization for years. Pick wrong, and you’re locked into proprietary tools, left with AI-generated alerts your team still has to investigate, or exposed to non-deterministic risk without adequate guardrails. The stakes are too high for gut decisions.

The Wrong Way to Decide

Most security leaders choose based on brand recognition (“CrowdStrike is the biggest”), feature-count marketing (“We have 500 integrations”), or polished demo impressions. This ignores the critical questions: Can the AI explain its decisions for compliance audits? Can the platform work with your existing stack without requiring replacement? Can analysts actually respond to threats and contain them, or do they just escalate tickets back to your team? If you’re not asking these questions, you’re evaluating the wrong things.

The 7-Criteria Evaluation Framework

Score each AI SOC provider 0–2 on these criteria (max 14):

- Vendor-Agnostic Integration: Does it work with your existing security stack (SIEM, EDR, cloud, and identity), or does it force proprietary tool replacement?

- Human Analyst Access: Do you get direct communication with Tier 3–4 analysts, or just ticket-based escalations through a portal?

- Response Capability: Can they contain and remediate threats (credential revocation, endpoint isolation, and lateral movement blocking), or just detect and notify?

- Non-Deterministic Risk Management: Are there reasoning traces, confidence thresholds, and human-in-the-loop guardrails for every AI decision?

- Pricing Transparency: Is cost published and predictable (per-endpoint), or hidden behind “contact sales” with opaque enterprise quotes?

- Compliance Integration: Does security monitoring generate audit evidence automatically, or does it require separate compliance tools and manual collection?

- Onboarding Speed: Does deployment require 6 months of professional services, or can you validate results with a 30-day turnkey implementation?

⭐ Score threshold: 10+ represents genuine operational partnership. Below 7 means you’re buying an alert feed, not managed detection and response.
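The scoring rule is simple arithmetic, but writing it down makes vendor comparisons repeatable. Below is a minimal Python sketch. The criterion keys are our own hypothetical names. The framework does not label totals of 7–9, so the code returns a placeholder verdict for that band.

```python
from typing import Mapping

# The seven criteria from the framework above; each is scored 0, 1, or 2.
CRITERIA = (
    "vendor_agnostic_integration",
    "human_analyst_access",
    "response_capability",
    "non_deterministic_risk_management",
    "pricing_transparency",
    "compliance_integration",
    "onboarding_speed",
)


def score_provider(scores: Mapping[str, int]) -> tuple[int, str]:
    """Sum the 0-2 scores across all seven criteria and map the total to a verdict."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"missing criteria: {missing}")
    for name in CRITERIA:
        if scores[name] not in (0, 1, 2):
            raise ValueError(f"{name} must be 0, 1, or 2, got {scores[name]}")
    total = sum(scores[name] for name in CRITERIA)  # maximum is 14
    if total >= 10:
        verdict = "operational partnership"
    elif total < 7:
        verdict = "alert feed"
    else:
        # The framework does not name the 7-9 band; this label is a placeholder.
        verdict = "mixed: review weak criteria"
    return total, verdict
```

For example, `score_provider(dict.fromkeys(CRITERIA, 2))` returns `(14, "operational partnership")`. Rejecting missing or out-of-range scores prevents a silently incomplete evaluation from looking like a low score.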

Where UnderDefense Scores

| Criterion | Score | Evidence |
|---|---|---|
| Vendor-Agnostic Integration | ✅ 2 | 250+ integrations, works with your existing stack |
| Human Analyst Access | ✅ 2 | Direct Tier 3–4 communication, concierge model |
| Response Capability | ✅ 2 | Full containment and remediation, 0.5h MTTR |
| Non-Deterministic Risk Mgmt | ✅ 2 | Reasoning traces, human validation on critical decisions |
| Pricing Transparency | ✅ 2 | Published $11–15/endpoint/month |
| Compliance Integration | ✅ 2 | Forever-free compliance kits included with MDR |
| Onboarding Speed | ✅ 2 | 30-day turnkey deployment with custom detection tuning |
| Total | 14/14 | |

UnderDefense is purpose-built to meet every criterion in this framework, because it was designed from the ground up as an AI SOC with Human Ally support, not a retrofitted monitoring tool with AI marketing bolted on top.

The Meta-Insight

The real question isn’t which AI SOC has the best ML model but which provider can respond to threats the way a dedicated security team would, while giving you full transparency into how every decision was made. That’s the shift UnderDefense represents.

UnderDefense maintains a 100% ransomware prevention record across 500+ MDR clients over 6 years, because detection without human-driven response is just expensive alerting.

Q9. Choosing the Right SOC Model: AI SOC Providers, Tools, and Comparisons

The leading AI SOC and SOC-as-a-Service providers for mid-market organizations in 2026 include UnderDefense, Arctic Wolf, CrowdStrike Falcon Complete, Simbian, Radiant Security, and Dropzone AI, each with distinct approaches to detection, response, pricing, and the AI/human balance.

Three Models, Three Tradeoffs

The AI SOC market has fragmented into three models security leaders need to understand before evaluating vendors:

- Pure-AI platforms (autonomous, no human analysts): fast triage, but non-deterministic decisions without human guardrails create real risk for high-stakes incidents

- Traditional MDR with AI augmentation (humans first, AI assists): familiar, but manual workflows can’t scale when you’re processing thousands of alerts daily

- AI SOC + Human Ally (AI-driven detection and triage with dedicated human analyst response): the model we built at UnderDefense, combining machine-speed investigation with analyst judgment for outcomes you can audit

The right choice depends on your readiness score, risk tolerance for non-deterministic AI, and whether you need detection-only or full containment capability.

What Separates AI SOC Providers

Here’s the evaluation framework that actually matters. It covers the architectural decisions that determine whether a provider can own outcomes or just escalate alerts, not feature lists:

- Vendor-agnostic integration vs. proprietary stack requirements: Does the provider work with your existing tools, or force replacement?

- Human analyst access: Concierge (direct Tier 3–4 communication) vs. ticket-based escalation vs. none

- Response capability: Detection-only vs. full containment and remediation

- Published response-time SLAs and documented outcomes: Specific MTTR metrics, or “industry-leading” claims?

- Transparent pricing vs. opaque enterprise quotes: Published per-endpoint rates, or “contact sales” black boxes?

- Non-deterministic risk guardrails: Reasoning traces, human-in-the-loop for critical decisions, and audit trails

Each provider excels in different scenarios. For a comprehensive ranked evaluation with pricing, response times, integration capabilities, and documented outcomes, see our full breakdown below.

💰 Know Your Real SOC Costs First

One of the biggest mistakes I see security leaders make is evaluating AI SOC providers without understanding what their current SOC actually costs, fully loaded. In-house SOC costs hide in salary overhead, tooling licenses, training, turnover, and after-hours coverage gaps. Before comparing vendor quotes, calculate your baseline.
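The sketch below makes that baseline concrete by totaling the cost categories listed above. It is a minimal Python model, not a pricing tool. The field names, the flat per-analyst training figure, and the turnover formula (turnover rate × headcount × replacement cost) are our own assumptions, so plug in your own numbers.

```python
from dataclasses import dataclass


@dataclass
class InHouseSocInputs:
    """Hypothetical annual inputs (USD) for a fully loaded in-house SOC baseline."""
    analyst_salary: float              # loaded cost per analyst (salary plus benefits)
    analysts: int                      # total analyst headcount across all shifts
    tooling_licenses: float            # SIEM, EDR, SOAR, threat intel, etc.
    training_per_analyst: float        # certifications, courses, conferences
    annual_turnover_rate: float        # fraction of analysts replaced per year, e.g. 0.3
    replacement_cost_per_hire: float   # recruiting plus ramp-up time per replacement
    after_hours_gap_cost: float = 0.0  # e.g. on-call premiums or contracted overnight cover


def fully_loaded_cost(i: InHouseSocInputs) -> float:
    """Sum the cost categories that usually hide outside the salary line."""
    salaries = i.analyst_salary * i.analysts
    training = i.training_per_analyst * i.analysts
    turnover = i.annual_turnover_rate * i.analysts * i.replacement_cost_per_hire
    return salaries + i.tooling_licenses + training + turnover + i.after_hours_gap_cost
```

Separating turnover and after-hours coverage from salaries keeps those costs visible, since they rarely appear in a headcount budget.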

This analysis is based on documented response times, G2 Spring 2026 rankings, published pricing, and operational outcomes across 500+ MDR deployments.

Q10. The Bottom Line: Which SOC Model Fits Your Organization, and What’s Your Next Step?

If you’ve made it this far, you have four lenses to evaluate the AI SOC transformation: detection intelligence, automated triage, non-deterministic risk management, and organizational readiness. Here’s how they connect, and what to do next.

The Four Pillars, Connected

The shift from rules to intelligence means detection adapts to your threat landscape instead of matching yesterday’s signatures. The shift from manual to automated triage means analysts hunt threats instead of chasing false positives at 2 AM. Understanding non-deterministic risk means adopting AI with guardrails, not blind trust, not paralyzed avoidance. And the readiness scorecard gives you an honest assessment of whether to move now, plan for 90 days, or augment selectively.

These aren’t abstract concepts. They’re the operational reality of every security team deciding between building a 20-person SOC and outsourcing to a provider that may or may not own outcomes.

✅ Three Profiles, Three Prescriptions

Profile A: Traditional SOC: <500 endpoints, minimal alert volume, established in-house team with low turnover, and heavily regulated environments requiring 100% deterministic auditability.

- Action: Optimize current operations, invest in detection engineering, and revisit AI SOC in 12 months.

Profile B: Standalone AI SOC: High alert volume and strong internal security engineering capacity to manage AI models, tune detection, and handle non-deterministic risk independently.

- Action: Evaluate pure-AI platforms with strong model governance.

Profile C: AI SOC + Human Ally: Mid-market companies, scaling tech firms, healthcare organizations, PE portfolio companies, and any team needing enterprise-grade protection without building a 20-person SOC.

- Action: Start with the readiness scorecard, then validate with a 30-day pilot.

Why We Built It This Way

UnderDefense exists for organizations that understand the AI SOC transformation is real but refuse to trade security for speed. Vendor-agnostic integration protects your existing CrowdStrike, Splunk, and Microsoft investments. AI-driven detection with human validation delivers automation efficiency plus experienced analyst judgment. Transparent pricing ($11–15/endpoint/month) means confident budgeting. And the 30-day UnderDefense MAXI deployment means you prove ROI before you commit.

⏰ Your Next Step

- Scored 8+ on readiness? Book a demo. See the UnderDefense MAXI platform resolve a real threat in your environment within the first call.

- Scored 4–7? Start with a 15-minute security gap assessment for your highest-impact upgrade path.

- Still evaluating? Download the SOC Automation Checklist to benchmark current operations before your next budget conversation.

UnderDefense protects 500+ organizations across healthcare, financial services, technology, and government, with a 100% ransomware prevention record, response times 2 days faster than CrowdStrike OverWatch, 96% MITRE ATT&CK coverage, and published pricing starting at $11/endpoint/month.

“We received little value from ArcticWolf. The product offered little visibility when we were using it… Anything you want to look at or changes you need to make in the product must go through their engineering team.”

— Matt C., Manager, Cybersecurity Services Arctic Wolf – G2 Verified Review

“Despite the capabilities of the technical platform… there is still a limit to the environmental/organizational knowledge inherent in the service. This leads to a fairly frequent need for engagement with our internal team.”

— Verified User in Computer Software, Mid-Market Expel – G2 Verified Review

“Arctic Wolf provides solid detection and response capabilities, but overly relies on the client’s team for remediation, which really hurts the value of the service.”

— VP of Technology, Services Arctic Wolf – Gartner Verified Review

That’s the gap this article exists to close: the difference between providers that escalate alerts and providers that own outcomes.

1. What is the core architectural difference between an AI SOC and a traditional SOC?

A traditional SOC relies on static SIEM correlation rules, signature-based detection, and manual Tier-1 triage. Analysts match alerts against known-threat databases, and detection only fires when a pattern hits a pre-written rule. This creates a structural ceiling: your security posture depends entirely on someone having already documented the exact threat you face.

An AI SOC flips that model. Instead of asking “Does this match a known signature?”, behavioral analytics engines ask “Is this normal for this user, this device, at this time?” We built the UnderDefense MAXI platform around this principle: vendor-agnostic integration across 250+ tools, connecting endpoint, identity, cloud, and network telemetry in one context-aware layer.
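The behavioral question above can be illustrated with a deliberately small example. The Python sketch below keeps a per-user, per-device count of login hours and flags logins at hours that are rare in that history. This is not how UnderDefense MAXI or any particular UEBA engine works. The class name, the hour-of-day feature, and both thresholds are hypothetical choices made for illustration.

```python
from collections import Counter, defaultdict


class LoginHourBaseline:
    """Toy per-(user, device) baseline of login hours, standing in for UEBA."""

    def __init__(self, min_history: int = 20, rare_fraction: float = 0.02):
        self.min_history = min_history      # events required before judging "normal"
        self.rare_fraction = rare_fraction  # hours seen less often than this are anomalous
        self._hours = defaultdict(Counter)  # (user, device) -> Counter of hour-of-day

    def observe(self, user: str, device: str, hour: int) -> None:
        """Record one historical login at hour-of-day 0-23."""
        self._hours[(user, device)][hour] += 1

    def is_anomalous(self, user: str, device: str, hour: int) -> bool:
        """Return True if this hour is unusual for this user on this device."""
        history = self._hours.get((user, device), Counter())
        total = sum(history.values())
        if total < self.min_history:
            return False  # too little history: leave the call to rules or an analyst
        return history[hour] / total < self.rare_fraction
```

A signature rule has nothing to say about a valid login at 3 AM. The baseline flags it only because that user has never logged in from that device at that hour.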

Key differences include:

- Detection method: static rules vs. behavioral analytics + rules (hybrid)

- Alert triage: manual Tier-1 investigation vs. AI-automated enrichment with human validation

- Response speed: hours to days vs. minutes

- Coverage: 22–50% of alerts investigated vs. 100%

The result is not replacing humans but focusing analyst judgment on confirmed threats rather than on false-positive investigation.

2. How much time does a traditional SOC waste on false positives, and how does AI triage fix this?

The numbers reveal an operational crisis in traditional SOC environments: analysts waste 25–27% of their time chasing false positives every shift, 51% of SOC teams report feeling overwhelmed by daily alert volume, and 74% of breaches had alerts that were generated but ignored because analysts couldn’t keep up.

The root cause is architectural: fragmented tools without cross-correlation force manual investigation at every step. Your EDR sees endpoint behavior, your identity tool sees user context, and neither reasons across both. The analyst becomes the integration engine.

We solve this through a four-phase automated triage process:

- Phase 0: Consolidate alerts from all sources into a unified queue

- Phase 1: Enrich with contextual information (user history, geolocation, access patterns)

- Phase 2: Automated root cause analysis using behavioral scoring and cross-telemetry reasoning

- Phase 3: Automated containment for confirmed threats, with human escalation for edge cases

This achieves 100% alert coverage versus the 30–50% typical of manual triage, with a 99% reduction in customer-facing alert noise.
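The four phases map naturally onto a pipeline. The Python sketch below is a minimal illustration, not UnderDefense's implementation. The `Alert` fields, the point values, and the 80/30 routing thresholds are hypothetical, and "contained" is only a label standing in for a real response action such as endpoint isolation.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    source: str                                  # e.g. "edr", "idp", "cloud"
    user: str
    signal: str                                  # what fired, in the tool's own words
    context: dict = field(default_factory=dict)
    score: int = 0                               # 0-100 risk points
    disposition: str = "open"


def consolidate(*feeds: list) -> list:
    """Phase 0: merge per-tool alert feeds into one queue."""
    return [alert for feed in feeds for alert in feed]


def enrich(alert: Alert, user_context: dict) -> Alert:
    """Phase 1: attach user history, geolocation, and access-pattern context."""
    alert.context.update(user_context.get(alert.user, {}))
    return alert


def score(alert: Alert) -> Alert:
    """Phase 2: toy cross-telemetry scoring; real engines use behavioral models."""
    points = 0
    if alert.context.get("new_geolocation"):
        points += 40  # identity signal
    if alert.context.get("privileged"):
        points += 30  # access-pattern signal
    if alert.source == "edr":
        points += 30  # endpoint signal
    alert.score = points
    return alert


def route(alert: Alert, contain_at: int = 80, close_below: int = 30) -> Alert:
    """Phase 3: contain high scores, close low scores, escalate the middle to a human."""
    if alert.score >= contain_at:
        alert.disposition = "contained"
    elif alert.score < close_below:
        alert.disposition = "closed"
    else:
        alert.disposition = "escalated_to_analyst"
    return alert


def triage(feeds: list, user_context: dict) -> list:
    """Run every alert from every feed through all four phases."""
    return [route(score(enrich(a, user_context))) for a in consolidate(*feeds)]
```

Every alert receives a disposition, which is what "100% coverage" means in this sketch. Only the ambiguous middle band consumes analyst time.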

3. What is non-deterministic risk in an AI SOC, and how should security teams manage it?

Non-deterministic AI produces probabilistic outputs: large language models and other neural networks can generate different outputs from identical inputs depending on model state, training drift, or stochastic inference. In a SOC context, this means the same alert could be classified “critical” today and “low” tomorrow, not because the threat changed, but because the model’s internal state shifted.
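A toy example shows one source of run-to-run variation. The Python sketch below assumes a classifier that outputs probabilities over three severity labels; the labels and probabilities are invented. Sampling a label from those probabilities can return "critical" on one run and "low" on the next for the identical alert. Deterministic argmax decoding always returns the same label. Real systems add other sources of variation, such as model updates and drift, that this sketch does not model.

```python
import random

LABELS = ("critical", "medium", "low")


def classify_sampled(probs: tuple, rng: random.Random) -> str:
    """Stochastic inference: draw a severity label in proportion to its probability."""
    return rng.choices(LABELS, weights=probs, k=1)[0]


def classify_argmax(probs: tuple) -> str:
    """Deterministic decoding: always return the single most probable label."""
    best = max(range(len(LABELS)), key=lambda i: probs[i])
    return LABELS[best]


# Same alert, same model probabilities, repeated runs.
probs = (0.55, 0.15, 0.30)
rng = random.Random()
print([classify_sampled(probs, rng) for _ in range(10)])  # varies from run to run
print(classify_argmax(probs))  # always "critical"
```

Deterministic decoding removes this particular source of variance, but it does not make the underlying probabilities any more correct. That is why the guardrails discussed below still matter.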

This creates five categories of operational risk:

- Inconsistent triage decisions that undermine SLA reliability

- Auditability gaps for regulators requiring explainable security decisions (HIPAA, SOC 2, ISO 27001)

- Hallucinated remediation steps that could isolate wrong endpoints

- Model drift degrading detection accuracy over time

- Adversarial exploitation of probabilistic classification boundaries

The goal is not to avoid non-deterministic AI but to contain the risk. We address this through a hybrid approach: AI-driven detection with mandatory human analyst validation for critical decisions, reasoning traces for every triage decision, and confidence thresholds that trigger manual review. Learn more about our compliance-integrated approach that ensures full auditability alongside AI-powered detection.
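The three guardrails described above can be expressed as a small policy layer: human validation for critical decisions, reasoning traces, and confidence thresholds. The Python sketch below illustrates this under our own assumptions. The 0.9 threshold, the set of verdicts treated as critical, and all field and function names are hypothetical. A real deployment would tune them to its risk tolerance.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class TriageDecision:
    alert_id: str
    verdict: str        # model's proposed label, e.g. "critical" or "benign"
    confidence: float   # model-reported confidence in [0, 1]
    reasoning: list = field(default_factory=list)  # evidence steps: the reasoning trace
    action: str = ""
    requires_human: bool = False


# Hypothetical policy: these verdicts always need analyst sign-off.
CRITICAL_VERDICTS = {"critical", "high"}


def apply_guardrails(d: TriageDecision, min_confidence: float = 0.9) -> TriageDecision:
    """Send critical or low-confidence decisions to a human; auto-apply the rest."""
    if not d.reasoning:
        raise ValueError("every triage decision must carry a reasoning trace")
    if d.verdict in CRITICAL_VERDICTS or d.confidence < min_confidence:
        d.requires_human = True
        d.action = "queue_for_analyst_validation"
    else:
        d.action = "auto_apply"
    return d


def audit_record(d: TriageDecision) -> str:
    """Serialize the whole decision, reasoning trace included, for the audit log."""
    return json.dumps(asdict(d), sort_keys=True)
```

Refusing decisions without a reasoning trace keeps the audit log complete by construction rather than by convention.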

4. How do we know if our organization is ready to upgrade from a traditional SOC to an AI SOC?

We developed a 10-point readiness scorecard based on patterns we’ve observed across hundreds of engagements. Score your operations against these criteria:

- Alert volume exceeds 1,000/day with less than 50% investigation rate

- False positive rate above 50%

- MTTR exceeds 4 hours for critical incidents

- 3+ unfilled SOC analyst positions or over 30% annual turnover

- Analysts spending over 60% of time on Tier-1 triage

- No true 24/7/365 coverage

- SIEM rule count exceeds 5,000 with monthly tuning consuming analyst time

- 3+ siloed security tools with no unified correlation

- Compliance evidence requires manual collection (20+ hours per audit cycle)

- Zero proactive hunting capacity

If you score 8–10, your SOC is structurally overwhelmed and an AI SOC upgrade is urgent. At 4–7, significant gaps exist and compound over time. At 1–3, consider targeted AI augmentation for specific workflows. Use our SOC cost calculator to benchmark your current spend before evaluating providers.
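Several scorecard items combine two conditions, so it helps to encode them explicitly. The Python sketch below turns the ten criteria into checks over a hypothetical `SocMetrics` record; the field names are ours. We read "3+ siloed tools with no unified correlation" as a simple tool count. The scorecard does not describe a score of 0, so the code returns a placeholder label for that case.

```python
from dataclasses import dataclass


@dataclass
class SocMetrics:
    alerts_per_day: int
    investigation_rate: float          # fraction of alerts investigated, 0-1
    false_positive_rate: float         # 0-1
    critical_mttr_hours: float
    unfilled_analyst_positions: int
    annual_turnover_rate: float        # 0-1
    tier1_time_share: float            # fraction of analyst time spent on Tier-1 triage
    has_24x7_coverage: bool
    siem_rule_count: int
    monthly_tuning_consumes_analyst_time: bool
    siloed_tools_without_correlation: int
    compliance_hours_per_audit: float
    proactive_hunting_capacity: bool


def readiness_score(m: SocMetrics) -> int:
    """Count how many of the ten scorecard criteria apply (0-10)."""
    checks = [
        m.alerts_per_day > 1_000 and m.investigation_rate < 0.5,
        m.false_positive_rate > 0.5,
        m.critical_mttr_hours > 4,
        m.unfilled_analyst_positions >= 3 or m.annual_turnover_rate > 0.3,
        m.tier1_time_share > 0.6,
        not m.has_24x7_coverage,
        m.siem_rule_count > 5_000 and m.monthly_tuning_consumes_analyst_time,
        m.siloed_tools_without_correlation >= 3,
        m.compliance_hours_per_audit >= 20,
        not m.proactive_hunting_capacity,
    ]
    return sum(checks)  # True counts as 1, False as 0


def interpret(score: int) -> str:
    """Map a score to the bands described in the scorecard."""
    if score >= 8:
        return "urgent: structurally overwhelmed"
    if score >= 4:
        return "significant gaps that compound over time"
    if score >= 1:
        return "targeted AI augmentation for specific workflows"
    return "no criteria met"  # placeholder: the scorecard does not describe 0
```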

5. Can we upgrade to an AI SOC without replacing our existing SIEM and EDR investments?

Yes, and this is one of the most common concerns we address. Organizations that have invested $500K+ in CrowdStrike, Splunk, SentinelOne, or Microsoft Defender understandably resist rip-and-replace proposals.

We built UnderDefense specifically to integrate with your existing stack, not replace it. The UnderDefense MAXI platform connects vendor-agnostically across 250+ tools, making your current investments more effective rather than obsolete. Our analysts log into your system; we don’t force you onto ours.

The phased migration approach works in four stages:

- Weeks 1–2: Connect existing tools, establish behavioral baselines, configure detection tuning

- Weeks 3–4: AI handles Tier-1 triage in shadow mode parallel to your existing SOC

- Weeks 5–8: Graduated handoff with human oversight and ChatOps verification

- Weeks 9–12: Full operational mode with AI handling Tier-1/Tier-2 at scale

Most organizations validate results within 30 days, before full commitment. Explore our full integrations to confirm compatibility with your current stack.
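The shadow-mode stage in Weeks 3–4 amounts to comparing two sets of verdicts on the same alerts. The Python sketch below shows one way to measure that. The function name, the verdict strings, and the idea of reviewing every disagreement before the handoff are our assumptions, not a documented UnderDefense procedure.

```python
def shadow_mode_report(ai_verdicts: dict, soc_verdicts: dict) -> dict:
    """Compare AI triage verdicts with the existing SOC's verdicts on the same alerts."""
    shared = sorted(set(ai_verdicts) & set(soc_verdicts))  # alert IDs both sides triaged
    disagreements = [aid for aid in shared if ai_verdicts[aid] != soc_verdicts[aid]]
    agreement = (len(shared) - len(disagreements)) / len(shared) if shared else 0.0
    return {
        "compared": len(shared),
        "agreement_rate": agreement,
        "disagreements": disagreements,  # candidates for analyst review before handoff
    }


ai = {"a1": "benign", "a2": "malicious", "a3": "benign", "a4": "benign"}
soc = {"a1": "benign", "a2": "malicious", "a3": "malicious"}
report = shadow_mode_report(ai, soc)
```

Only alerts triaged by both sides are compared, so an alert the existing SOC never reached (like `a4`) does not count for or against the AI.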

6. How does AI SOC pricing compare to the cost of running a traditional in-house SOC?

Traditional in-house SOC costs scale linearly with headcount. A single SOC analyst costs $150K+ annually. 24/7 coverage requires at least three shifts, and staffing each shift with one analyst brings the base cost to $450K+/year before tooling, training, and turnover expenses. Organizations also lose approximately $1.4M annually per burned-out senior analyst through recruitment, training, and knowledge transfer costs.

AI SOC pricing operates on a fundamentally different model: per-endpoint rather than per-analyst. UnderDefense publishes transparent pricing at $11–15/endpoint/month, which includes 24/7/365 monitoring, AI-automated triage, concierge analyst response, and built-in compliance kits.

The cost difference becomes significant at scale. For a mid-market organization with 1,000 endpoints, that’s $132K–$180K/year for full AI SOC + Human Ally coverage versus $450K+ for a minimally staffed in-house team, before accounting for tooling licenses and turnover costs. Review our detailed MDR pricing breakdown to model costs for your specific environment.
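The comparison above is straightforward arithmetic. The Python sketch below reproduces it and adds a break-even calculation, which is our own extension. The $150K analyst cost, three-shift staffing, and $11–15 rates come from this section; the function names are hypothetical.

```python
def per_endpoint_annual_cost(endpoints: int, rate_per_endpoint_month: float) -> float:
    """Annual cost of per-endpoint pricing: endpoints x monthly rate x 12 months."""
    return endpoints * rate_per_endpoint_month * 12


def in_house_base_cost(cost_per_analyst: float = 150_000, shifts: int = 3) -> float:
    """Minimal 24/7 staffing: one analyst per shift, before tooling and turnover."""
    return cost_per_analyst * shifts


def break_even_endpoints(in_house: float, rate_per_endpoint_month: float) -> float:
    """Endpoint count at which per-endpoint pricing equals the in-house cost."""
    return in_house / (rate_per_endpoint_month * 12)


low = per_endpoint_annual_cost(1_000, 11)   # 132000
high = per_endpoint_annual_cost(1_000, 15)  # 180000
base = in_house_base_cost()                 # 450000
print(break_even_endpoints(base, 15))       # 2500.0
```

At the top of the published range, the in-house base cost alone equals about 2,500 endpoints of per-endpoint coverage. That base excludes tooling, training, and turnover, so the true break-even point is higher.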

7. What evaluation criteria should we use when comparing AI SOC providers?

We recommend a 7-criteria framework that focuses on architectural decisions rather than feature-count marketing. Score each provider 0–2 on these dimensions (max 14):

- Vendor-agnostic integration: works with your existing stack, or forces proprietary tool replacement?

- Human analyst access: direct Tier 3–4 communication, or ticket-based escalations?

- Response capability: full containment and remediation, or detect-and-notify only?

- Non-deterministic risk management: reasoning traces, confidence thresholds, and human-in-the-loop guardrails?

- Pricing transparency: published per-endpoint rates, or “contact sales” black boxes?

- Compliance integration: automated audit evidence generation, or separate manual tools?

- Onboarding speed: 30-day turnkey implementation, or 6 months of professional services?

A score of 10+ represents genuine operational partnership. Below 7 means you’re buying an alert feed, not managed detection and response. Download our free SOC Provider Evaluation Checklist to run this framework against your shortlisted vendors.

8. Why can't signature-based SIEM rules keep up with modern threats, and what replaces them?

The data is now unambiguous. According to CardinalOps’ Fifth Annual Report on the State of SIEM Detection Risk, enterprise SIEMs have detection coverage for just 21% of MITRE ATT&CK techniques, leaving 79% uncovered. On top of that, 13% of existing SIEM rules are broken and will never trigger due to misconfigured data sources and missing log fields.

The fundamental limitation is structural: rules can only catch what’s already been documented. Living-off-the-land attacks using legitimate tools like certutil.exe for data exfiltration look “normal” to rule-based systems because they match no malicious signature.

Intelligence-based detection, powered by User and Entity Behavior Analytics (UEBA) and ML-driven anomaly detection, builds behavioral baselines per user, device, and network segment. Deviations get flagged regardless of whether the specific attack pattern has been seen before.

The argument isn’t rules or intelligence; it’s that rules alone defend only against yesterday’s threats. We combine both in a hybrid model within our security operations center architecture: deterministic rules for compliance baselines, plus behavioral AI for novel threat detection.
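The Python sketch below illustrates the hybrid model by pairing a deterministic rule with a simple behavioral check, using the certutil.exe scenario above. The signature list, the per-host process-frequency baseline, and the thresholds are hypothetical stand-ins, far simpler than production UEBA. They still show why a living-off-the-land binary can slip past the rule yet be flagged as unusual for the host.

```python
from collections import Counter

# Hypothetical signature list: names of documented malicious tools.
KNOWN_BAD_PROCESSES = {"mimikatz.exe"}


def rule_match(process: str) -> bool:
    """Deterministic rule: fires only on documented malicious names."""
    return process.lower() in KNOWN_BAD_PROCESSES


class ProcessBaseline:
    """Toy per-host process-frequency baseline (a stand-in for UEBA)."""

    def __init__(self, min_events: int = 50, rare_fraction: float = 0.01):
        self.counts: dict = {}              # host -> Counter of process names
        self.min_events = min_events        # history needed before judging rarity
        self.rare_fraction = rare_fraction  # share of history below which a process is rare

    def observe(self, host: str, process: str) -> None:
        self.counts.setdefault(host, Counter())[process.lower()] += 1

    def is_rare(self, host: str, process: str) -> bool:
        history = self.counts.get(host)
        if history is None or sum(history.values()) < self.min_events:
            return False  # no baseline yet: defer to rules
        return history[process.lower()] / sum(history.values()) < self.rare_fraction


def hybrid_detect(host: str, process: str, baseline: ProcessBaseline) -> list:
    """Combine both detectors and return the reasons that fired."""
    reasons = []
    if rule_match(process):
        reasons.append("rule: known-malicious tool name")
    if baseline.is_rare(host, process):
        reasons.append("behavior: process rarely or never seen on this host")
    return reasons
```

Returning a list of reasons rather than a single boolean keeps each detection explainable: an auditor can see whether the rule, the baseline, or both fired.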

The post AI SOC Vs Traditional SOC: Compare Rules vs. Intelligence, Manual vs. Automated Triage, Non-Deterministic Risk appeared first on UnderDefense.