Q1. What Is SOC Alert Fatigue, and Why Can’t Your Team Outrun 4,484 Alerts Per Day?

⏰ It’s 2:47 AM on a Tuesday

Your phone buzzes. Alert #14 this week: “suspicious PowerShell execution on DESKTOP-FIN-034.” You drag yourself to the laptop, pull up the SIEM, cross-reference the endpoint logs, chase down the user’s identity. Forty-five minutes later, it’s another false positive, a developer running a legitimate deployment script. You close the ticket, lie back down, and stare at the ceiling wondering: did I miss something real three alerts ago when I was too exhausted to dig deeper?

This scenario isn’t a bad night. This is every night. And if you’re running a security operations center with fewer than ten analysts, the math is about to get uncomfortable.

📊 The Volume Problem Is Arithmetic, Not Strategic

Enterprise SOCs receive thousands of alerts daily. According to the 2025 SANS Detection & Response Survey, 73% of organizations now list false positives as their number-one detection challenge, a dramatic year-over-year rise. Each alert demands 20 to 30 minutes of manual investigation to properly triage. At that rate, fully processing even 1,000 alerts a day would take roughly 333 to 500 analyst-hours, the daily output of 40 to 60 full-time analysts on 8-hour shifts, a team most mid-market organizations simply don’t have.
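The workload estimate above is easy to reproduce with your own numbers. Here is a minimal sketch, assuming 1,000 alerts per day (the figure used later in this article), 20 to 30 minutes per alert, and an 8-hour shift in which every hour goes to triage. The shift length and the function names are illustrative assumptions, not survey data.

```python
def triage_hours(alerts_per_day: int, minutes_per_alert: float) -> float:
    """Analyst-hours needed to manually triage one day's alerts."""
    return alerts_per_day * minutes_per_alert / 60


def analysts_needed(alerts_per_day: int, minutes_per_alert: float,
                    shift_hours: float = 8.0) -> float:
    """Full-time analysts required, assuming every shift hour goes to triage."""
    return triage_hours(alerts_per_day, minutes_per_alert) / shift_hours


for minutes in (20, 30):
    hours = triage_hours(1_000, minutes)
    print(f"{minutes} min/alert: {hours:.0f} analyst-hours, "
          f"{analysts_needed(1_000, minutes):.1f} analysts")
```

Swap in your own daily alert count and average handling time; the gap between the result and your actual headcount is the part of the queue that never gets a real look.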

Compound this with tool sprawl. The average enterprise runs dozens of security tools, each generating its own alert stream with zero cross-context. Your EDR sees endpoint behavior. Your identity provider sees authentication anomalies. Your cloud console sees configuration drift. None of them talk to each other, and you, the human analyst, become the manual correlation layer.

❌ The Hidden Costs No One Budgets For

Here’s what this looks like in practice:

- 10 to 15 hours per week per analyst consumed by manual triage and investigation of alerts that lead nowhere.

- 73% of teams cite false positives as the top obstacle to effective detection, up sharply from prior years.

- “Very frequent” false positives jumped from 13% to 20% year-over-year, per SANS 2025. Detection engineering can’t keep pace with noise.

- Average dwell time increases significantly when analysts are overwhelmed, giving attackers more runway for lateral movement, credential abuse, and data exfiltration.

Analysts resort to what I call “title scanning”: reading alert titles and closing without investigation. Not because they’re careless, but because the volume makes disciplined triage arithmetically impossible. It’s a survival mechanism, and it’s happening in your SOC right now.

✅ How It Should Actually Work

The ideal state isn’t “fewer alerts.” It’s a system that correlates alerts across all your tools, verifies suspicious activity directly with affected users, and escalates only confirmed threats requiring human decision. You’d wake up to “incident contained at 2:52 AM, here’s what happened and what we did,” not 47 unread alerts to triage.

The rest of this article shows exactly how an AI SOC delivers this, turning 1,000 daily alerts into 6 analyst-ready cases without discarding a single signal.

⚠️ The Workforce Math Doesn’t Bend

According to Tines, 71% of SOC analysts report burnout, with alert fatigue cited as the primary driver. The ISC2 2024 Cybersecurity Workforce Study estimated a global gap of 4.8 million professionals needed, a 19% year-over-year increase. Meanwhile, 37% of security teams experienced budget cuts, and 25% experienced layoffs.

The math doesn’t bend. You can’t hire your way out of this. The architecture must change.

Q2. How Does the Burnout-to-Breach Feedback Loop Quietly Destroy Your Security Posture?

🔄 Burnout Is a System Failure, Not an Individual Weakness

Here’s something most security leaders understand intuitively but rarely map explicitly: burnout isn’t a morale problem. It’s a self-reinforcing system failure that degrades your entire security posture over time.

The sequence starts innocuously. Alert volumes exceed human capacity. Analysts experience cognitive overload. Overload leads to desensitization, the brain’s natural defense against constant noise. Desensitization leads to shortcuts. Shortcuts lead to missed threats. Missed threats lead to breaches. And the ISC2 workforce data makes this worse: the global cybersecurity talent gap hit 4.8 million in 2024. Burned-out analysts leave. Remaining analysts absorb more load. Burnout accelerates. SOC analyst turnover has reached 28% annually, with average tenure shrinking below 18 months in some environments.

❌ Traditional MDR Treats Symptoms While the Disease Progresses

The common industry response to this problem? Add dashboards. Add escalation tiers. Add “more eyes.” Arctic Wolf and CrowdStrike add layers of monitoring and concierge teams, but the fundamental architectural problem persists: humans remain the manual correlation layer between fragmented tools.

Traditional MSSPs provide monitoring without actionable context, checkbox coverage based on rigid playbooks. And here’s what a CISO who experienced this firsthand shared about Arctic Wolf:

“Analysts provide little context, and when asked for more information in the investigation nothing is ever provided or even communicated. Support incidents are not worked to completion and communication evaporates.”

— CISO, Manufacturing Arctic Wolf – Gartner Review

Hiring more Tier 1 analysts just feeds more people into the burnout machine. You’re treating the symptom, fatigue, while ignoring the disease: volume.

⚠️ The Complete Feedback Loop (Map This)

Here’s the full cycle, and each node amplifies the next:

Burnout → Desensitization → Missed Alert → Breach → Incident Response Costs ($4.88M average per IBM 2024) → Regulatory Scrutiny → Budget Pressure → Understaffing → More Burnout

This is not a linear problem. It’s a self-reinforcing spiral. The average time to detect and contain a breach sits at 277 days, and with 70% of breached organizations reporting significant or very significant disruption, the business impact extends far beyond the SOC.

✅ Breaking the Loop at the Root

The circuit-breaker is straightforward in principle: remove the volume problem at the source. When AI automates triage of 99%+ noise, deduplicating, correlating, enriching, and scoring alerts before they reach a human, you eliminate the root cause rather than treating the symptom.

Analysts shift from reactive alert-processors to proactive threat hunters. That’s a role that drives engagement, not burnout. Think of it as a tiered model: Machine handles investigation grunt work → Analyst handles judgment calls → Strategist handles program development. The loop breaks at the very first node, because alert volume never reaches human capacity.

From what we’ve seen at UnderDefense, when analysts stop drowning in false positives and start investigating real, contextualized incidents, the entire team dynamic shifts. People stay longer. Institutional knowledge compounds. Detection quality improves.

💸 The Turnover Economics Are Brutal

Here’s a number most CISOs don’t track closely enough: replacing a single Tier 1 analyst costs $50K to $100K when you factor in recruiting, training, and the 6-month ramp to full productivity. A 10-person SOC with 28% annual turnover spends $140K to $280K per year just maintaining headcount, before counting institutional knowledge loss and increased breach risk during ramp periods.
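The turnover figure above is simple multiplication, and it is worth rerunning with your own team size. A minimal sketch using the numbers from this section (10 analysts, 28% annual turnover, $50K to $100K per replacement); the function name is just for illustration.

```python
def annual_replacement_cost(team_size: int, turnover_rate: float,
                            cost_per_replacement: float) -> float:
    """Expected yearly spend on replacing analysts who leave."""
    return team_size * turnover_rate * cost_per_replacement


low = annual_replacement_cost(10, 0.28, 50_000)
high = annual_replacement_cost(10, 0.28, 100_000)
print(f"${low:,.0f} to ${high:,.0f} per year")
```

This counts only direct replacement cost. Knowledge loss and elevated risk during ramp-up are not modeled here.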

An AI SOC that reduces burnout-driven turnover by even 30% recoups its cost in retention savings alone, and that’s before measuring the breach-risk reduction from analysts who actually have the bandwidth to investigate real threats.

Q3. How Does an AI SOC Actually Turn 1,000 Alerts Into 6 Analyst-Ready Cases?

This is the section the title of this article promises, so let me walk through the exact mechanics. No black boxes, no “trust me, it works.” Observable pipeline, reproducible outcomes.

🔍 The Capability Statement

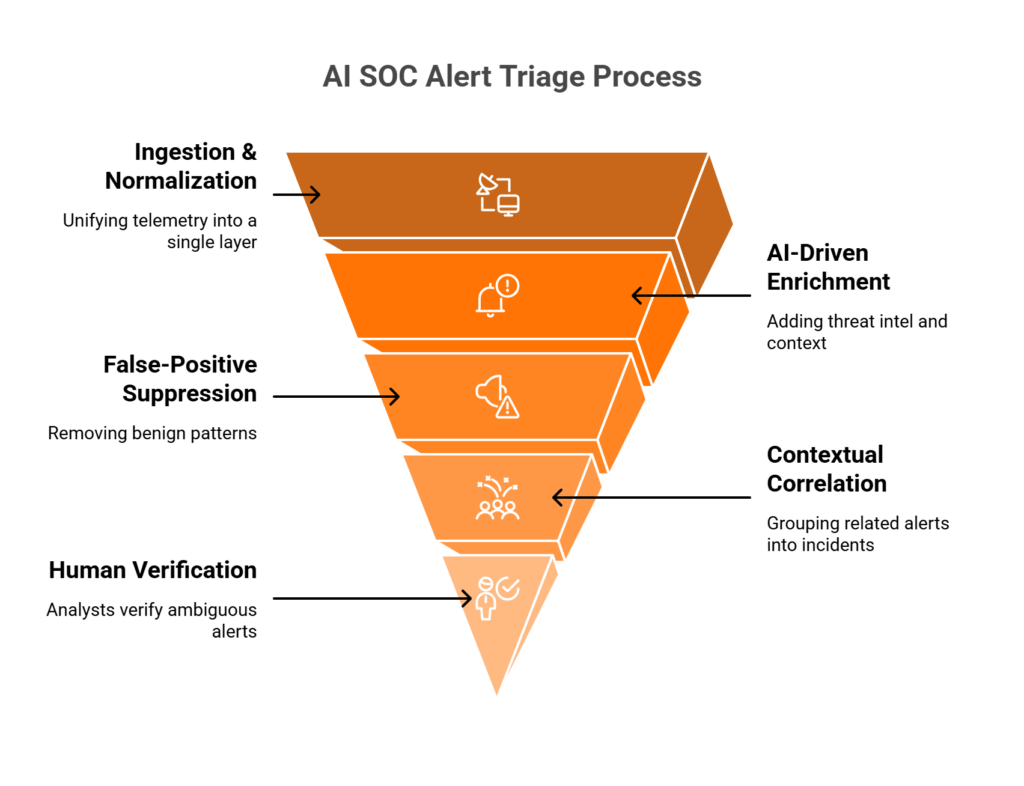

The AI SOC triage pipeline is a multi-stage reduction funnel that transforms raw alert volume into decision-ready cases through automated correlation, enrichment, deduplication, and contextual scoring, without discarding anything. Every alert is investigated. Only confirmed, contextualized incidents reach human analysts.

⚙️ The Funnel: Stage by Stage

Here’s what actually happens when 1,000 alerts enter the pipeline:

Stage 1: Ingestion & Normalization. 1,000 raw alerts from SIEM, EDR, identity, cloud, and network sources get consolidated into a unified schema. Different formats, different vendors, different severity scales, all normalized into a single language the AI engine can reason across.

Stage 2: Deduplication & Correlation. Related alerts get grouped into approximately 200 clusters. Example: 15 failed login attempts + 1 MFA bypass attempt + 1 privilege escalation on the same user account = 1 correlated cluster, not 17 separate alerts for your analyst to chase individually.

Stage 3: AI Enrichment & Context. Each cluster gets enriched with threat intelligence feeds, asset criticality scores, user behavior baselines, and organizational context. That PowerShell alert from the developer laptop at 2 PM? Auto-closed. The same command from a marketing laptop at 3 AM, combined with a new device enrollment? Flagged. This stage reduces approximately 200 clusters to roughly 40 enriched incidents.

Stage 4: Risk Scoring & Prioritization. Behavioral AI scores each incident against kill-chain progression, business impact, and confidence level. Approximately 40 enriched incidents become roughly 6 high-confidence, analyst-ready cases, each with full investigation context, evidence, affected asset maps, and recommended response actions attached.
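To make Stages 1 and 2 concrete, here is a minimal sketch of normalization and entity-based correlation. It is not the MAXI implementation: the `Alert` fields, the raw key names (`tool`, `user`, `host`, `event`, `ts`), and the one-hour grouping window are all assumptions chosen for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    """Unified schema (hypothetical fields) that every source is mapped into."""
    source: str      # e.g. "edr", "identity", "cloud"
    entity: str      # user or host the alert is about
    signal: str      # normalized signal name
    timestamp: int   # seconds since epoch


def normalize(raw: dict) -> Alert:
    """Map a vendor-specific record onto the unified schema (raw keys are invented)."""
    return Alert(
        source=raw["tool"].lower(),
        entity=(raw.get("user") or raw.get("host") or "unknown").lower(),
        signal=raw["event"].strip().lower().replace(" ", "_"),
        timestamp=int(raw["ts"]),
    )


def correlate(alerts: list[Alert], window_s: int = 3600) -> list[list[Alert]]:
    """Group alerts on the same entity that arrive within window_s of the previous one."""
    by_entity: dict[str, list[Alert]] = defaultdict(list)
    for alert in alerts:
        by_entity[alert.entity].append(alert)

    clusters: list[list[Alert]] = []
    for entity_alerts in by_entity.values():
        entity_alerts.sort(key=lambda a: a.timestamp)
        current = [entity_alerts[0]]
        for alert in entity_alerts[1:]:
            if alert.timestamp - current[-1].timestamp <= window_s:
                current.append(alert)
            else:
                clusters.append(current)
                current = [alert]
        clusters.append(current)
    return clusters


# The Stage 2 example: 15 failed logins + 1 MFA bypass + 1 privilege escalation
# on one account, plus one unrelated endpoint alert.
raw_alerts = (
    [{"tool": "Identity", "user": "Jane.Smith", "event": "Failed Login", "ts": 60 * i}
     for i in range(15)]
    + [{"tool": "Identity", "user": "jane.smith", "event": "MFA Bypass Attempt", "ts": 1000},
       {"tool": "EDR", "user": "jane.smith", "event": "Privilege Escalation", "ts": 1500},
       {"tool": "EDR", "host": "DESKTOP-FIN-034", "event": "Suspicious PowerShell", "ts": 9000}]
)
clusters = correlate([normalize(r) for r in raw_alerts])
print([len(c) for c in clusters])
```

With this input, the 17 alerts on the one account collapse into a single cluster and the unrelated endpoint alert stays on its own. A production system would correlate on more than one key (user, host, IP, session), but the reduction principle is the same.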

✅ What This Pipeline Handles

- Known-benign pattern matching: developer scripts, scheduled tasks, and IT admin activity auto-closed based on learned baselines.

- Cross-source correlation: connecting an endpoint alert + identity anomaly + network lateral movement into a single narrative instead of three disconnected tickets.

- User verification via ChatOps: confirming suspicious activity directly with affected users before escalating. This is what separates investigation from guesswork.

- False positive feedback loop: every analyst disposition (confirmed threat, false positive, or benign) trains the model. The system gets smarter with every decision.

- Compliance evidence generation: every alert investigated, every decision documented. The audit trail writes itself.

💡 Why the “6 Cases” Matter More Than You Think

The 6 cases that reach analysts aren’t “the 6 loudest alerts.” They’re 6 fully investigated incidents with context, evidence, affected asset maps, and recommended response actions attached. Analysts spend their time deciding, not investigating.

This is the difference between “suspicious login detected, please investigate” and “confirmed credential compromise on Jane.Smith’s account, lateral movement to finance-server-03 detected, containment recommendation: isolate endpoint + revoke session tokens.”

How UnderDefense Delivers This Pipeline

The UnderDefense MAXI platform delivers this exact funnel across 250+ integrated security tools, reducing customer-facing alerts by 99% through AI-driven triage while maintaining 96% MITRE ATT&CK coverage. No alert is ignored; every one is investigated by AI before the cases that matter reach your team.

“Before the guys from UD stepped in, we were getting bombarded with alerts from all our security tools. Their team cleaned up our configurations and got the noise under control within the first week. Now when we get an alert, we know it’s something worth looking into.”

— Verified User, Marketing and Advertising UnderDefense – G2 Verified Review

“UnderDefense MAXI has been a key player in helping us maintain a secure environment. It has significantly reduced the number of false positives, allowing our team to focus on real threats.”

— Darina I., Customer Success Manager UnderDefense – G2 Verified Review

“Not having to worry about ransomware, alert overload and reporting. Getting a clear view of my security posture, where the threats are coming from and how they are handled. They literally took care of all our problems.”

— Arlin O., Enterprise UnderDefense – G2 Verified Review

Q4. How Does AI Eliminate False Positives Without Missing Real Threats?

This is the section that addresses the implicit objection behind every AI SOC conversation: “But what if the AI suppresses something real?”

Fair question. Let me walk through the specific techniques and why the architecture is designed to never silently drop an alert.

📊 The False Positive Problem, by the Numbers

The 2025 SANS Detection & Response Survey found that 73% of organizations now cite false positives as their #1 threat detection challenge, with “very frequent” false positives jumping from 13% to 20% year-over-year. Each false positive consumes 15 to 30 minutes of analyst time. At 1,000 alerts per day with a 50%+ false positive rate, that is at least 500 false positives and roughly 125 to 250 analyst-hours burned on noise every day.

This is the core fuel for the burnout cycle mapped in Q2. Every wasted investigation hour is an hour not spent on real threats.

⚙️ Technique 1: Behavioral Baselining

AI learns normal patterns per user, per device, per application over time. A developer running PowerShell at 2 PM on a weekday is baseline behavior. The same command from a marketing laptop at 3 AM is anomalous. Context-aware detection eliminates the “one-size-fits-all” signature rules that generate most false positives.

This isn’t a static whitelist but a continuously learning model that adapts as your environment changes. New hire? The model builds a baseline. Role change? It adjusts. Seasonal business patterns? Incorporated automatically.
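As a rough illustration of the idea, and not a description of any vendor's model, a per-user baseline can be as simple as a histogram of when each action is normally seen. The class name, thresholds, and user names below are assumptions made for the sketch.

```python
from collections import Counter, defaultdict


class HourlyBaseline:
    """Per-user, per-action histogram of the hour of day an action was seen."""

    def __init__(self, min_observations: int = 20, rare_fraction: float = 0.02):
        self.counts: dict[tuple[str, str], Counter] = defaultdict(Counter)
        self.min_observations = min_observations
        self.rare_fraction = rare_fraction

    def observe(self, user: str, action: str, hour: int) -> None:
        """Record one occurrence; calling this continuously keeps the baseline current."""
        self.counts[(user, action)][hour] += 1

    def is_anomalous(self, user: str, action: str, hour: int) -> bool:
        history = self.counts.get((user, action))
        total = sum(history.values()) if history else 0
        if total < self.min_observations:
            return True  # too little history yet: treat as unusual
        return history[hour] / total < self.rare_fraction


baseline = HourlyBaseline()
for _ in range(50):
    baseline.observe("dev-alice", "powershell", 14)   # developer, 2 PM

print(baseline.is_anomalous("dev-alice", "powershell", 14))  # usual hour
print(baseline.is_anomalous("dev-alice", "powershell", 3))   # 3 AM, never seen
print(baseline.is_anomalous("mkt-bob", "powershell", 3))     # no history at all
```

Treating "no history" as anomalous is a deliberate choice in this sketch: a new hire generates extra checks until a baseline exists, which is the cautious side to err on.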

🔗 Technique 2: Cross-Source Correlation & Recursive Reasoning

AI connects alerts across EDR, identity, cloud, and network to build attack narratives rather than treating each alert in isolation. A failed login alone is noise. A failed login + MFA bypass attempt + new device enrollment + privilege escalation = a correlated incident with kill-chain context.

Fifteen individual alerts become one contextualized case. The AI reasons recursively, pulling in related signals that an analyst would need to manually hunt across three or four different consoles.
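One simple way to picture "narrative building" is to map each signal to an attack stage and check how many distinct stages show up for the same entity. The stage mapping and the two-stage rule below are illustrative assumptions, far cruder than real kill-chain scoring.

```python
# Hypothetical mapping from normalized signal names to attack stages.
STAGE_OF = {
    "failed_login": "credential_access",
    "mfa_bypass_attempt": "credential_access",
    "new_device_enrollment": "persistence",
    "privilege_escalation": "privilege_escalation",
}


def build_narrative(signals: list[tuple[str, str]]) -> dict:
    """Summarize (source, signal) pairs seen for one entity."""
    stages = sorted({STAGE_OF[name] for _, name in signals if name in STAGE_OF})
    return {
        "alert_count": len(signals),
        "sources": sorted({source for source, _ in signals}),
        "stages": stages,
        # Illustrative rule: two or more distinct stages is no longer isolated noise.
        "correlated_incident": len(stages) >= 2,
    }


lone = build_narrative([("identity", "failed_login")])
chain = build_narrative([
    ("identity", "failed_login"),
    ("identity", "mfa_bypass_attempt"),
    ("identity", "new_device_enrollment"),
    ("edr", "privilege_escalation"),
])
print(lone["correlated_incident"], chain["correlated_incident"], chain["stages"])
```

The lone failed login stays below the bar, while the four-signal chain spans three stages and two sources and becomes a single case.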

🔄 Technique 3: Analyst Feedback Loop

Every analyst disposition, whether confirmed threat, false positive, or benign, trains the model. Over weeks and months, the AI learns org-specific patterns: which service accounts trigger alerts, which IP ranges are developer VPNs, which after-hours activity is normal for APAC teams.

This is where the “show, don’t tell” principle matters most. You can audit these feedback loops. You can see what the model learned and why. No black box: observable, auditable, reproducible decisions. In mature deployments, this drives false positive rates well below 5%.
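To show what an auditable feedback loop can look like in its simplest form, here is a sketch that swaps model training for plain per-pattern counts. It is a stand-in for illustration, not how any particular platform learns; the pattern key format and class name are invented.

```python
from collections import Counter, defaultdict

DISPOSITIONS = ("confirmed_threat", "false_positive", "benign")


class DispositionLog:
    """Auditable tally of analyst dispositions per alert pattern."""

    def __init__(self) -> None:
        self.tallies: dict[str, Counter] = defaultdict(Counter)

    def record(self, pattern: str, disposition: str) -> None:
        if disposition not in DISPOSITIONS:
            raise ValueError(f"unknown disposition: {disposition}")
        self.tallies[pattern][disposition] += 1

    def noise_rate(self, pattern: str) -> float:
        """Share of past dispositions for this pattern that were not threats."""
        tally = self.tallies.get(pattern, Counter())
        total = sum(tally.values())
        if total == 0:
            return 0.0
        return (tally["false_positive"] + tally["benign"]) / total

    def explain(self, pattern: str) -> dict[str, int]:
        """Return the raw counts behind the rate, so the decision can be audited."""
        return dict(self.tallies.get(pattern, Counter()))


log = DispositionLog()
for _ in range(19):
    log.record("svc-backup:after_hours_login", "benign")
log.record("svc-backup:after_hours_login", "confirmed_threat")
print(log.noise_rate("svc-backup:after_hours_login"))
print(log.explain("svc-backup:after_hours_login"))
```

The point of `explain` is the audit question: for any pattern the system has learned to deprioritize, you can pull the exact dispositions that led there.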

💬 Technique 4: ChatOps User Verification (The Layer Competitors Miss)

When behavioral context alone can’t determine intent, and this happens more often than pure-AI vendors want to admit, there’s a fourth layer that we built at UnderDefense specifically because automation has limits: ChatOps User Verification.

When an alert is ambiguous, UnderDefense MAXI analysts reach out to the affected user directly via Slack or Teams: “Did you just log in from a new device in a location you haven’t used before?” The user confirms or denies in seconds. This resolves the category of alerts that pure AI would either suppress (risk of missing a real threat) or escalate (adding more noise to an already overwhelmed team).
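The routing decision behind this step can be sketched in a few lines. The confidence thresholds and names are hypothetical, and the actual Slack or Teams message is left out on purpose; this only shows when a user check is triggered and what each answer leads to.

```python
from enum import Enum
from typing import Optional


class Action(Enum):
    AUTO_CLOSE = "auto_close"
    ASK_USER = "ask_user"
    ESCALATE = "escalate"


def route(malicious_confidence: float, low: float = 0.2, high: float = 0.8) -> Action:
    """First pass: only the ambiguous middle band triggers a user check."""
    if malicious_confidence >= high:
        return Action.ESCALATE
    if malicious_confidence <= low:
        return Action.AUTO_CLOSE
    return Action.ASK_USER


def resolve(user_confirmed: Optional[bool]) -> Action:
    """Second pass, after the user was asked.

    True  -> the user recognizes the activity, so the alert is closed.
    False -> the user denies it, so it is escalated.
    None  -> no reply in time; escalating is the cautious default.
    """
    return Action.AUTO_CLOSE if user_confirmed is True else Action.ESCALATE


print(route(0.5), resolve(False), resolve(None))
```

Note the asymmetry: silence is treated like a denial. An unanswered verification request never results in a quiet close.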

This is why UnderDefense maintains a 100% ransomware prevention record across 500+ MDR clients. Detection without human-driven verification at the ambiguity boundary is just expensive guessing.

How UnderDefense Simplifies False Positive Reduction

UnderDefense combines all four techniques, behavioral baselining, cross-source correlation, analyst feedback loops, and ChatOps user verification, into a single pipeline through the UnderDefense MAXI platform. The result: alerts that reach your team are investigated, contextualized, and verified. Your analysts make decisions on confirmed incidents, not raw signals. And every alert disposition feeds back into the model, making the system measurably smarter every week.

The question isn’t whether AI might miss something. The question is whether your current approach, human analysts drowning in the false positives that 73% of organizations call their top detection challenge, is missing more. The answer, statistically, is yes.

Q5. What Investigation Speed Benchmarks Should You Expect From an AI-Augmented SOC?

Three metrics determine whether your SOC architecture can stop modern threats or just document them after the fact: Mean Time to Detect (MTTD), Mean Time to Contain (MTTC), and Mean Time to Respond (MTTR). These aren’t dashboard vanity numbers. They directly determine whether ransomware encrypts one server or a thousand. IBM’s 2024 Cost of a Data Breach Report found the global average breach cost hit a record $4.88M, with every additional hour of dwell time increasing exposure.

The operational reality is stark. According to Mandiant’s M-Trends 2024 report, global median attacker dwell time dropped to just 10 days, with ransomware-related breaches averaging around five days. Meanwhile, ReliaQuest’s 2026 Annual Threat Report shows threat actors now achieve lateral movement in as little as 4 minutes, 85% faster than last year. Manual SOCs averaging 16 hours for containment are architecturally incapable of stopping these threats.

⏰ Before vs. After: The Numbers That Matter

| Metric | Manual SOC | AI-Augmented SOC |
|---|---|---|
| MTTD | 194 to 277 days average (IBM 2024) | Minutes to hours (behavioral detection) |
| MTTC | 70+ days average | Under 1 hour (automated containment) |
| MTTR | Days to weeks | Hours or less |
| Alert triage per incident | 20 to 30 min manual | Seconds (automated) |
| Alerts investigated/day | 50 to 100 (human capacity) | 100% (AI processes all) |
| Lateral movement response | ~16 hours manual | ~4 minutes with AI+automation |

These aren’t theoretical projections. They’re the documented gap between legacy operations and AI-augmented architectures.
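If you want to benchmark your own SOC against these metrics, the calculation is straightforward once incident timestamps are recorded. A minimal sketch follows; the incident data is made up, and the definitions used (MTTD as start-to-detection, MTTC as detection-to-containment) are one common convention, so check how your tooling defines them.

```python
from datetime import datetime
from statistics import mean

# Made-up incident timeline, for illustration only.
incidents = [
    {"started": "2025-01-10T02:00", "detected": "2025-01-10T02:12",
     "contained": "2025-01-10T02:52"},
    {"started": "2025-02-03T14:30", "detected": "2025-02-03T14:38",
     "contained": "2025-02-03T14:58"},
]


def mean_minutes(records: list[dict], start_key: str, end_key: str) -> float:
    """Average gap in minutes between two timestamps across incidents."""
    gaps = [
        (datetime.fromisoformat(r[end_key])
         - datetime.fromisoformat(r[start_key])).total_seconds() / 60
        for r in records
    ]
    return mean(gaps)


mttd = mean_minutes(incidents, "started", "detected")
mttc = mean_minutes(incidents, "detected", "contained")
print(f"MTTD: {mttd:.0f} min, MTTC: {mttc:.0f} min")
```

Means hide outliers, so it is worth tracking the median and the worst case alongside them.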

📊 Speed Isn’t Efficiency. It’s Outcome.

Ransomware groups like Akira and RansomHub now encrypt systems in 4 to 6 hours after initial intrusion. The fastest data exfiltration in 2025 took merely 6 minutes. A manual SOC with 70-day MTTC doesn’t just respond slowly. It responds after the damage is irreversible. An AI SOC doesn’t just speed up the same process; it changes what’s architecturally possible.

✅ UnderDefense’s Documented Benchmarks

We publish transparent SLAs, not marketing claims: 15-minute escalation for critical incidents, 2-minute alert-to-triage with enrichment and context automation, documented across 500+ MDR clients. In a head-to-head case study, UnderDefense detected and contained a threat 2 days faster than CrowdStrike OverWatch, because AI-driven detection combined with human analyst context closes the gap pure technology leaves open. For a US Government organization, we reduced threat response time to 9 minutes.

“With their proactive monitoring and rapid incident response capabilities, we can detect and mitigate security threats.”

— Alexey S., CEO, Mid-Market UnderDefense – G2 Verified Review

“Their team is proactive in identifying and addressing threats, providing 24/7 oversight… it lets me focus on strategy, knowing the day-to-day security is managed effectively.”

— Oleg K., Director Information Security, Mid-Market UnderDefense – G2 Verified Review

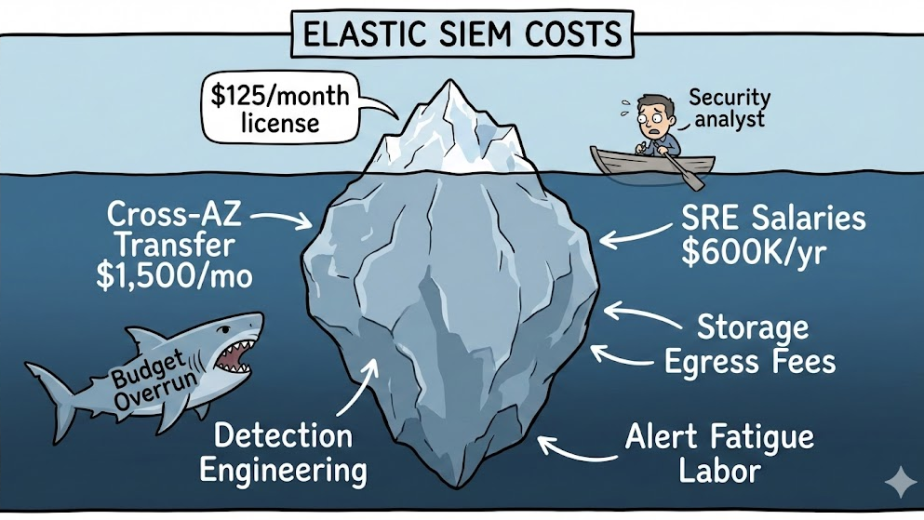

Q6. Why Do SIEM Tuning and SOAR Playbooks Fail Where AI SOC Succeeds?

Most SOC teams have already invested heavily in SIEM, whether Splunk, Microsoft Sentinel, or Elastic, and SOAR platforms like Palo Alto XSOAR or Swimlane. They’ve written hundreds of correlation rules and dozens of playbooks. Yet alert fatigue persists. The question isn’t “should we replace SIEM/SOAR?” but “why aren’t they solving the problem they were bought to solve?”

❌ The Infinite Tuning Loop

SIEM tuning is fundamentally a losing battle. Every new detection rule generates new false positives, requiring more tuning, which creates an infinite loop. CISA and ASD’s 2025 SOAR guidance acknowledged this directly: organizations become “operationally overwhelmed by false alerts,” and SOAR automation built on inaccurate SIEM output either blocks legitimate traffic or misses real threats. SOAR playbooks work for known, repeatable scenarios, but they break on novel attack patterns. Both systems are deterministic (if X then Y) in a threat landscape that’s fundamentally probabilistic. They automate the known; they can’t reason about the unknown.

The deeper issue is architectural. SOAR accuracy depends entirely on SIEM detection accuracy. Without proper SIEM operations, SOAR can’t reliably provide automated response, and without rules or signatures to trigger on, it has nothing to act on at all. That’s a structural limitation, not a configuration problem.

✅ AI SOC: Reasoning on Top, Not Replacement

AI SOC doesn’t replace SIEM/SOAR. It layers reasoning on top of both. It ingests SIEM alerts, SOAR outputs, EDR detections, and identity anomalies, then applies behavioral AI to reason across all sources simultaneously. Where SOAR asks “does this match a playbook?”, AI SOC asks “does this look like an attack narrative across multiple signals?”

This is the critical distinction. A failed login alone is noise in your SIEM. But a failed login + MFA bypass attempt + new device enrollment + privilege escalation: that’s a correlated incident an AI SOC can build into a single narrative without requiring a human to manually connect four different tool consoles.

📊 Side-by-Side: SIEM/SOAR vs. AI SOC

| Dimension | SIEM/SOAR | AI SOC |
|---|---|---|
| Detection Logic | Rule-based (signatures) | Behavioral + contextual |
| False Positive Handling | Manual rule tuning | ML feedback loops (self-learning) |
| Novel Threat Response | Breaks on unknown patterns | Reasons about anomalies |
| Cross-Source Correlation | Configured rules required | Automatic narrative building |
| Maintenance Burden | Constant manual updates | Self-learning, continuous |
| User Verification | None | ChatOps (Slack/Teams/Email) |

🔧 The Right Answer: Augmentation, Not Replacement

The right architecture is SIEM (data) + SOAR (action) + AI SOC (reasoning). The UnderDefense MAXI platform integrates with your existing SIEM and SOAR investments, 250+ tools supported, rather than forcing migration. In a documented case study, UnderDefense helped a US IT leader make the most of existing security tools and ensure 24/7 monitoring, without replacing a single platform. We protect your investments, and we make them operationally effective. Ask “Can you work with our current stack?”, and the answer is always yes.

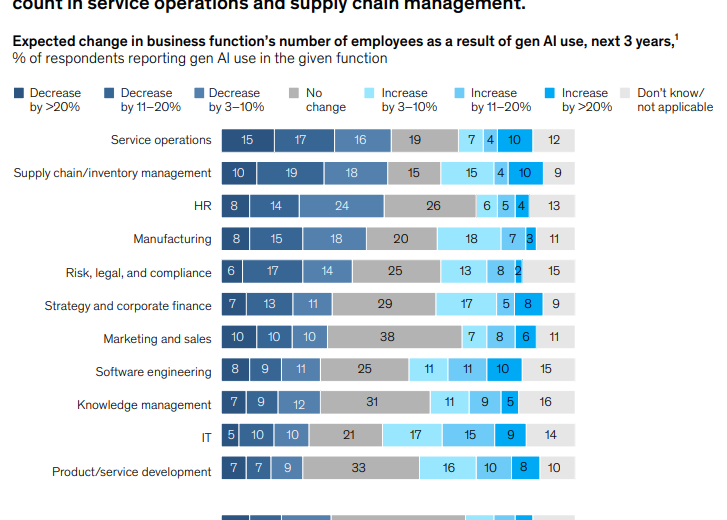

Q7. What Does the Ideal AI-Augmented SOC Operating Model Look Like?

The traditional SOC tiered model, Tier 1 triage → Tier 2 investigation → Tier 3 hunting, was designed for a world of hundreds of alerts per day. At thousands per day, Tier 1 becomes a bottleneck that swallows all headcount, starving Tier 2 and Tier 3. In practice, most “Tier 3 hunters” spend 60%+ of their time helping Tier 1 with triage overflow. The architecture collapses under its own weight.

❌ Hiring More People Feeds the Same Broken Machine

The instinctive response is to hire more Tier 1 analysts, but the ISC2 workforce gap means qualified candidates don’t exist at scale, and burnout ensures they leave within 18 months. You’re paying $50K to $100K per replacement cycle just to maintain headcount, not improve capability. Traditional MDR providers like Arctic Wolf and CrowdStrike Falcon Complete add external Tier 1 capacity, but they don’t change the fundamental model. They’re still processing the same alert volume through the same sequential pipeline.

One Arctic Wolf reviewer on Gartner, a senior cybersecurity engineer in manufacturing, put it plainly:

“This is not an extension of our security team as was originally sold… Still not quite there with the remediation side of things. We receive alerts, but not necessarily a clear path to resolution.”

— Sr. Cybersecurity Engineer, Manufacturing Arctic Wolf – Gartner Review

✅ The AI-Augmented Operating Model: Machine → Investigator → Strategist

Here’s what actually works:

- Tier 0, AI Machine: Handles 99%+ of triage automatically, including deduplication, correlation, enrichment, false positive elimination, and user verification via ChatOps. Every alert is investigated; none are ignored.

- Tier 1 becomes “Investigator”: Reviews 6 analyst-ready cases per day with full context attached. Makes disposition decisions. Tunes AI. Meaningful, career-building work.

- Tier 2 becomes “Strategist/Hunter”: Proactive threat hunting, detection engineering, and security architecture. This is where strategic value lives, and where your senior talent should spend their time.

Everyone does meaningful work. Nobody is manually triaging 4,000 alerts.

🔧 How UnderDefense Implements This

We implement this model through UnderDefense MAXI + dedicated concierge analysts. UnderDefense MAXI’s AI handles Tier 0, processing all alerts at machine speed with 96% MITRE ATT&CK coverage. UnderDefense’s Tier 3 to 4 analysts work as an extension of your team for investigation and response. Your internal team focuses on strategic security, not on being the manual correlation layer at 2 AM.

This is the “AI SOC + Human Ally” model: machine speed for triage, human judgment for decisions. We learn who your VIPs are to give them white-glove treatment, ask your technical users questions about suspicious activity, and loop in managers to confirm security-impacting changes.

⭐ The Retention Argument

Analysts doing threat hunting and strategic work don’t burn out. They build careers. The best defense against the talent shortage isn’t hiring more people for a broken pipeline but making the job worth staying for, and structuring operations so humans do what humans do best: reason, decide, and act under ambiguity.

Q8. What Is the True Cost of Inaction, and How Do You Build the Board-Level Business Case?

Most organizations quantify the cost of implementing AI SOC but never calculate the cost of not implementing it. The Total Cost of Inaction (TCI) compounds across four categories: breach exposure, talent hemorrhage, compliance penalties, and strategic opportunity cost. For CISOs presenting to boards and PE operating partners, these numbers make the case with financial precision.

💸 The Four-Category TCI Model

- Breach Exposure: IBM’s 2024 report puts the global average breach cost at $4.88M, a 10% year-over-year increase. With 73% of organizations naming false positives their top detection challenge and many SOC teams unable to investigate every alert, the probability of a missed real threat is materially elevated.

- Talent Hemorrhage: Replacing a Tier 1 analyst costs $50K to $100K (recruiting + training + 6-month ramp). A 10-person SOC with roughly 30% annual turnover spends $150K to $300K per year just maintaining headcount, before counting institutional knowledge loss and increased breach risk during ramp periods.

- Compliance Penalties: SOC 2, HIPAA, and ISO 27001 audits increasingly require evidence of 100% alert investigation. Uninvestigated alerts = audit findings = regulatory exposure. You can’t tell an auditor “we didn’t have time to look at those 3,000 alerts.”

- Strategic Opportunity Cost: Senior analysts consumed by Tier 1 overflow can’t do threat hunting, detection engineering, or architecture work. You’re paying Tier 3 salaries for Tier 1 output.

💰 TCI vs. AI SOC Investment: The Math

| Category | Annual Cost (No AI SOC) | Annual Cost (With AI SOC) |
|---|---|---|
| Breach exposure risk | $4.88M average per incident | Materially reduced (100% alert investigation) |
| Analyst replacement | $150K to $300K/year (10-person SOC) | Reduced turnover = $50K to $150K savings |
| Compliance risk | Audit findings, fines, and lost contracts | Auto-generated evidence, continuous readiness |
| Opportunity cost | Senior analysts trapped in triage | Strategic hunting + detection engineering |
| Total estimated TCI | ~$800K to $2M+/year | |
| AI SOC investment | | $66K to $360K/year ($11 to $15/endpoint/month) |

⚠️ The ROI range: 3 to 8x return before counting avoided breach costs.
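To plug your own numbers into this comparison, the per-endpoint pricing converts to an annual figure as sketched below. The endpoint counts of 500 and 2,000 are inferred here from the quoted $66K to $360K range, not stated in the pricing itself, and the ROI inputs are hypothetical placeholders.

```python
def annual_price(endpoints: int, per_endpoint_month: float) -> float:
    """Yearly cost at a flat per-endpoint monthly rate."""
    return endpoints * per_endpoint_month * 12


def roi_multiple(avoided_cost: float, investment: float) -> float:
    """How many dollars of avoided cost each invested dollar buys."""
    return avoided_cost / investment


# 500 endpoints at $11 and 2,000 endpoints at $15 reproduce the quoted range.
print(annual_price(500, 11), annual_price(2_000, 15))

# Hypothetical inputs, not figures from this article: replace with your own
# estimated cost of inaction and your quoted annual price.
print(roi_multiple(1_200_000, 240_000))
```

The multiple is only as good as the avoided-cost estimate behind it, so document how each TCI category was derived before taking the result to a board.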

✅ How UnderDefense Makes This Board-Ready

We publish transparent pricing ($11 to $15/endpoint/month) specifically so CISOs can model ROI against their TCI. The SOC Cost Calculator lets you input your team size, tool count, and alert volume to generate a board-ready cost comparison. In one documented case study, UnderDefense took an organization from $6.7M in losses to zero ransom paid. That’s the documented swing when you move from reactive operations to AI-augmented managed response.

The math doesn’t require faith. It requires a calculator and honest answers about your current alert investigation rate, analyst turnover, and compliance audit trail. Run the numbers, and bring them to the board.

Q9. How Does 100% Alert Investigation Become Your Compliance Advantage?

Most organizations treat alert investigation and compliance as separate workstreams: security handles alerts, GRC handles audits. But auditors have caught up. SOC 2 Type II, HIPAA, ISO 27001, and PCI-DSS all now require documented evidence that security alerts were triaged, investigated, and dispositioned. An uninvestigated alert isn’t just a missed threat but an audit finding waiting to happen.

I’ve seen this pattern dozens of times. A company passes a compliance checkpoint because they have “monitoring in place,” but when the auditor asks “show me investigation records for these 400 alerts from last quarter,” the room goes quiet. Monitoring without investigation is a compliance liability dressed up as a security control.

⚠️ Where Alert Investigation Meets Compliance Requirements

Here’s the uncomfortable overlap most security leaders discover during audit prep, not before:

- SOC 2 Type II: Requires documented evidence of continuous monitoring and incident response for every detected event. Auditors specifically check that logs are reviewed regularly, not just collected, and that alerting configurations produce investigated, documented outcomes.

- HIPAA: Mandates logging and review of all access to ePHI. Uninvestigated anomalous access events constitute a potential violation, regardless of whether actual unauthorized access occurred. The absence of investigation documentation is itself the finding.

- ISO 27001 (Annex A.16 in the 2013 edition; controls 5.24 to 5.28 in the 2022 edition): Requires organizations to demonstrate incident identification and response across all security events, with traceable documentation from detection through final disposition.

- PCI-DSS (Requirement 10): Mandates review of all security events with complete audit trail documentation. Gaps in investigation records consistently trigger findings during QSA assessments.

❌ The Operational Gap Auditors Exploit

The operational reality? Most SOC teams investigate 10 to 30% of daily alerts. The rest get closed as noise, deprioritized, or simply ignored due to volume. That 70 to 90% gap is invisible to security dashboards, but auditors see it clearly when they pull investigation logs.

✅ How AI-Driven Investigation Creates Audit-Ready Evidence

We built the UnderDefense MAXI platform to solve this exact problem. Every alert investigated by our AI SOC produces a documented investigation trail: enrichment steps taken, correlation logic applied, disposition reasoning, and response actions executed. This isn’t a summary; it’s reproducible, auditable evidence that satisfies the “show me your investigation records” question auditors ask every single time.
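As an illustration of what such a trail might contain, here is a sketch of a per-alert investigation record plus a coverage check. The field names mirror the elements listed above but are otherwise invented; this is not the MAXI data model.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class InvestigationRecord:
    """One audit-ready record per alert (hypothetical schema)."""
    alert_id: str
    enrichment_steps: list[str]
    correlation_logic: str
    disposition: str
    disposition_reasoning: str
    response_actions: list[str] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize with stable key order so records diff cleanly."""
        return json.dumps(asdict(self), sort_keys=True)


def investigation_coverage(alert_ids: list[str],
                           records: list[InvestigationRecord]) -> float:
    """Fraction of alerts that have a documented investigation."""
    if not alert_ids:
        return 1.0
    investigated = {r.alert_id for r in records}
    return sum(1 for a in alert_ids if a in investigated) / len(alert_ids)


record = InvestigationRecord(
    alert_id="A-1001",
    enrichment_steps=["asset criticality lookup", "user baseline check"],
    correlation_logic="same user, 3 signals within 1 hour",
    disposition="benign",
    disposition_reasoning="matches scheduled deployment script",
)
print(record.to_json())
print(investigation_coverage(["A-1001", "A-1002", "A-1003", "A-1004"], [record]))
```

The coverage number is the one auditors effectively ask for: with one record against four alerts, this example sits at 25%, squarely in the gap described earlier.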

Our forever-free compliance kits, included with every MDR engagement, eliminate the need for separate compliance tools or manual evidence collection. The compliance artifact generation isn’t an add-on or upsell but a natural byproduct of doing security investigation properly at 100% alert coverage.

💰 The Case That Proved the Point

The $650K loss avoidance case study illustrates this perfectly: during onboarding, we discovered 11 servers already infected with Cobalt Strike beacons. The immediate threat was real, but so was the compliance exposure. The client had no documented evidence of prior alert investigation for those servers. Our comprehensive alert coverage closed both the security gap and the compliance exposure simultaneously, with every remediation step documented and audit-ready from day one. Within 30 days, they went from zero investigation coverage to full-spectrum compliance evidence, and responded 40% faster to critical alerts.

Q10. How Should You Evaluate an AI SOC Solution? (7-Point Decision Framework)

Choosing an AI SOC platform means committing to a security architecture that will process every alert your organization generates for years. Pick wrong, and you’re locked into a vendor that generates more noise, not less, or one that suppresses real threats chasing clean dashboards.

❌ The Wrong Way to Evaluate

Most leaders evaluate AI SOC solutions on marketing claims (“we use AI”) or integration counts (“we support 500 tools”). This skips the questions that actually matter: Can it reason across sources, or just ingest them? Does it verify ambiguous alerts, or auto-close them? Does it respond, or just escalate tickets back to your team?

✅ The 7-Point Evaluation Framework

Score each vendor 0 to 2 on every criterion below. Marketing pages don’t count. Ask for live demonstrations.

| # | Criterion | What to Validate |
|---|---|---|
| 1 | Cross-Source Reasoning | Correlates across EDR + identity + cloud + network, or processes each in isolation? |
| 2 | False Positive Feedback Loop | AI learns from analyst dispositions to reduce noise over time? |
| 3 | User Verification Capability | Contacts affected users directly to resolve ambiguous alerts? |
| 4 | Response Capability | Detect-only, or detect + contain + remediate? |
| 5 | Vendor-Agnostic Integration | Works with existing stack, or requires tool migration? |
| 6 | Transparent Speed SLAs | Published response times with documented case study evidence? |
| 7 | Compliance Evidence Generation | Auto-produces audit artifacts, or requires separate tools? |

⏰ How to Read Your Score

- 12 to 14 = Genuine AI SOC capability. This vendor can own security outcomes.

- 8 to 11 = Partial automation. You’ll still need significant internal triage effort.

- 5 to 7 = AI-branded monitoring, not intelligent triage.

- Below 5 = You’re adding another alert source, not solving alert fatigue.
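The scoring rubric can be captured in a few lines of Python, which is handy when several evaluators score several vendors. This is a minimal sketch: the `score_vendor` function and the example scores are hypothetical, and the band labels paraphrase the list above. Totals under 5 are checked as their own band, so a total of 5 to 7 lands in "AI-branded monitoring."

```python
CRITERIA = [
    "Cross-Source Reasoning",
    "False Positive Feedback Loop",
    "User Verification Capability",
    "Response Capability",
    "Vendor-Agnostic Integration",
    "Transparent Speed SLAs",
    "Compliance Evidence Generation",
]


def score_vendor(scores: dict) -> tuple:
    """Sum the 0-2 scores over the seven criteria and map the total to a band."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"Unscored criteria: {missing}")
    for name in CRITERIA:
        if scores[name] not in (0, 1, 2):
            raise ValueError(f"{name}: score must be 0, 1, or 2")
    total = sum(scores[c] for c in CRITERIA)
    if total >= 12:
        band = "Genuine AI SOC capability"
    elif total >= 8:
        band = "Partial automation"
    elif total >= 5:
        band = "AI-branded monitoring, not intelligent triage"
    else:
        band = "Another alert source, not a fix for alert fatigue"
    return total, band


# Hypothetical vendor: works with the existing stack, but never contacts users.
example = dict.fromkeys(CRITERIA, 1)
example["Vendor-Agnostic Integration"] = 2
example["User Verification Capability"] = 0
print(score_vendor(example))
```

The function rejects incomplete scorecards on purpose: a vendor that cannot be scored on a criterion during a live demonstration should be recorded as 0, not skipped.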

⭐ Where UnderDefense Scores

| Criterion | Score | Evidence |
|---|---|---|
| Cross-Source Reasoning | 2/2 | 250+ integrations across EDR, identity, cloud, network, and SaaS |
| False Positive Feedback Loop | 2/2 | 99% alert noise reduction within the first month |
| User Verification (ChatOps) | 2/2 | Only MDR that contacts users directly via Slack/Teams/Email |
| Response Capability | 2/2 | Full containment + remediation; 0.5-hour MTTR SLA |
| Vendor-Agnostic Integration | 2/2 | Works with existing stack, no migration required |
| Transparent Speed SLAs | 2/2 | Detected threats 2 days faster than CrowdStrike OverWatch |
| Compliance Evidence | 2/2 | Forever-free compliance kits included with MDR |
| Total | 14/14 | $11 to $15/endpoint/month; 30-day turnkey deployment |

The real question isn’t “Which AI SOC has the most integrations?” but “Which provider can respond to threats the way a dedicated security team would?”

“Their team provided us with clear and detailed insights into security vulnerabilities, along with practical recommendations on how to fix them. This level of transparency made it easy for our team to take action and strengthen our security.”

— Arman N., CTO UnderDefense – G2 Verified Review

“UnderDefense MAXI integrates well with our systems, specifically with our SIEM, Splunk. Their team is proactive in identifying and addressing threats, providing 24/7 oversight.”

— Oleg K., Director Information Security UnderDefense – G2 Verified Review

UnderDefense maintains 100% ransomware prevention across 500+ MDR clients over 6 years, because detection without context-driven human response is just expensive alerting.

Q11. Which SOC Solutions Are Leading the AI-Augmented Security Operations Shift?

The leading SOC-as-a-Service providers for AI-augmented security operations in 2026 include UnderDefense, Arctic Wolf, CrowdStrike Falcon Complete, Expel, and Red Canary, each with fundamentally different architectural approaches to alert triage and investigation automation.

How the AI SOC Market Has Split

The market has divided into three distinct camps, and understanding which camp a vendor falls into matters more than any feature list:

- Vendor-locked platforms that require proprietary tool replacement before monitoring begins (Arctic Wolf, ReliaQuest). You get comprehensive coverage, but only after migrating your entire security stack to theirs, abandoning the business logic, correlation rules, and tuning you’ve built over years.

- Detection-only AI that automates initial triage but still escalates unresolved alerts back to your team for investigation and response (Expel, Red Canary). Strong technology, but the “last mile” of response still lands on your analysts.

- AI SOC + Human Ally models that combine automated triage with concierge analyst response and direct user verification, owning outcomes end-to-end (UnderDefense). Detection, verification, containment, and remediation happen without escalating back to your already-stretched team.

The right choice depends entirely on your existing tool investments and whether you need detection alone or detection-plus-response.

What Separates AI-Augmented SOC Providers

When evaluating providers, these differentiators matter more than generic feature lists:

- Vendor-agnostic integration vs. proprietary stack requirements, which determines whether you protect or abandon current security investments.

- AI triage depth: simple deduplication vs. full enrichment + cross-source correlation + direct user verification.

- Human analyst access: ticket-based escalation queues vs. direct Tier 3 to 4 analyst communication.

- Published response SLAs backed by documented case studies, not marketing claims or asterisk-laden guarantees.

- Compliance evidence included natively with monitoring vs. sold as a separate product or add-on.

Who Fits Where

Each provider excels in different scenarios. Arctic Wolf works for organizations wanting single-vendor simplicity without existing security investments to protect. UnderDefense works for teams that want to keep their current stack and add AI-augmented triage with human response. CrowdStrike Falcon Complete works for Falcon-native environments where endpoint depth matters most. Expel works for teams valuing software-driven transparency with their own internal response capability. The detailed comparison below breaks down pricing, response times, integration capabilities, and compliance support for each provider.

Analysis based on documented case studies, G2 Spring 2025 rankings, and operational outcomes across 500+ MDR deployments.

Q12. Is Your SOC Ready for the AI Triage Shift? (Security Operations Readiness Checklist)

Score your security operations against these 8 criteria to determine whether your current SOC architecture can sustain your organization’s growth, or whether alert volume will outpace your team within 12 months.

The 8-Point SOC Readiness Checklist

- ☐ 1. Can your SOC investigate 100% of daily alerts, not just the ones analysts have time for?

- ☐ 2. Is your mean time to contain critical threats under 1 hour?

- ☐ 3. Do analysts spend more time on threat hunting than alert triage?

- ☐ 4. Are false positive rates below 10% across all detection sources?

- ☐ 5. Can your team verify suspicious user activity directly via Slack/Teams?

- ☐ 6. Does security monitoring auto-generate compliance evidence (SOC 2, HIPAA, and ISO 27001)?

- ☐ 7. Is SOC analyst annual turnover below 20%?

- ☐ 8. Can you onboard new detection sources in days, not months?

⭐ Score Interpretation

| Score | Assessment | What It Means |
|---|---|---|
| 6 to 8 ✅ | SOC is mature | Focus on optimization, threat hunting, and expanding detection coverage |
| 3 to 5 ⚠️ | Critical gaps exist | You’re likely missing threats or burning out your team on alert noise and manual triage |
| 0 to 2 ❌ | Architecture outpaced | Reactive processes dominate; breach risk is elevated and growing with every new tool added |
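For teams that want to track this score quarter over quarter, the interpretation table reduces to a small lookup. The sketch below is illustrative only; `readiness_band` and the sample answers are hypothetical names and data.

```python
def readiness_band(checks: list) -> tuple:
    """Count checked boxes (True) out of eight and return the matching assessment."""
    if len(checks) != 8:
        raise ValueError("The checklist has exactly 8 items")
    score = sum(bool(c) for c in checks)
    if score >= 6:
        return score, "SOC is mature"
    if score >= 3:
        return score, "Critical gaps exist"
    return score, "Architecture outpaced"


# Hypothetical answers for checklist items 1 through 8.
answers = [False, True, False, False, True, False, True, False]
print(readiness_band(answers))
```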

Be honest with yourself on this. When I talk to CISOs and IT Directors, most land in the 2 to 4 range, not because their teams aren’t talented, but because the architecture wasn’t designed for the alert volumes and tool sprawl they’re dealing with today. It’s an infrastructure problem, not a people problem. And it’s solvable.

✅ How UnderDefense Turns Unchecked Boxes Into ✅

UnderDefense is designed to move every unchecked box to checked: 24/7 AI triage with a 0.5-hour MTTR SLA, ChatOps user verification via Slack and Teams, 250+ tool integrations without replacing your existing stack, automated compliance evidence generation, and dedicated Tier 3 to 4 analysts who learn your organization’s context. Most clients go from two or three checked boxes to 8/8 within 30 days of onboarding, because we don’t just layer on top of your tools but operationalize them.

The Numbers Behind the Checklist

UnderDefense clients achieve 96% MITRE ATT&CK coverage and 99% alert noise reduction within the first month. Those aren’t aspirational benchmarks but documented outcomes across 500+ deployments. When your SOC team spends less time chasing false positives and more time on proactive threat hunting, the entire security posture shifts from reactive to resilient.

“The biggest win for me was getting actual control over our security alerts. Before the guys from UD stepped in, we were getting bombarded with alerts from all our security tools. Their team cleaned up our configurations and got the noise under control within the first week.”

— Verified User, Marketing and Advertising UnderDefense – G2 Verified Review

“UnderDefense has changed our approach to cybersecurity. At first, we hired them for managed SIEM service, but after they demonstrated the value of MDR, our management was motivated to act on it. Now, with their security monitoring and incident response we know our endpoints are well-protected.”

— Yaroslava K., IT Project Manager UnderDefense – G2 Verified Review

Scored below 5? Book a 15-minute security gap assessment to see exactly where UnderDefense closes the holes.

1. How does an AI SOC reduce 1,000+ daily alerts down to a handful of analyst-ready cases?

We designed our AI SOC platform to work the way a senior analyst thinks—but at machine speed.

Here’s how the reduction happens:

- Ingestion & normalization: The platform pulls telemetry from endpoints (CrowdStrike, SentinelOne), SIEM (Splunk, Sentinel), identity (Okta, Azure AD), and cloud consoles into a single correlation layer.

- AI-driven enrichment: Each alert is automatically enriched with threat intelligence, asset context, and historical behavioral baselines, eliminating the manual lookup cycle.

- Automated false-positive suppression: Custom detection tuning, built during onboarding, filters out known benign patterns specific to your environment. This alone removes 80–90% of noise.

- Contextual correlation: Remaining alerts are grouped into incident clusters. A suspicious login, a PowerShell execution, and a data exfiltration attempt aren’t three separate alerts; they’re one case.

- Human verification: Our analysts verify ambiguous alerts directly with affected users via Slack, Teams, or email before escalating.

The result: your team reviews 5–6 confirmed, investigation-ready cases per day—not 1,000 raw alerts. That’s the architectural shift from alert factories to actionable intelligence.
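To make the suppression and correlation steps concrete, here is a minimal Python sketch under simplifying assumptions. It is not how the platform is implemented: the `Alert` type, the benign `(user, rule)` pairs, and the 30-minute correlation window are all hypothetical, and real correlation would key on more than the user name.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List, Set


@dataclass(frozen=True)
class Alert:
    source: str          # e.g. "edr", "identity", "cloud"
    user: str
    rule: str
    timestamp: datetime


def suppress_known_benign(alerts: List[Alert], benign: Set[tuple]) -> List[Alert]:
    """Drop alerts whose (user, rule) pair was marked benign during tuning."""
    return [a for a in alerts if (a.user, a.rule) not in benign]


def correlate(alerts: List[Alert], window: timedelta) -> List[List[Alert]]:
    """Group alerts per user; start a new case when the gap between alerts exceeds `window`."""
    by_user: Dict[str, List[Alert]] = defaultdict(list)
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        by_user[alert.user].append(alert)

    cases: List[List[Alert]] = []
    for user_alerts in by_user.values():
        current = [user_alerts[0]]
        for alert in user_alerts[1:]:
            if alert.timestamp - current[-1].timestamp <= window:
                current.append(alert)
            else:
                cases.append(current)
                current = [alert]
        cases.append(current)
    return cases


t0 = datetime(2025, 1, 7, 2, 47)
raw = [
    Alert("identity", "jane", "suspicious_login", t0),
    Alert("edr", "jane", "powershell_exec", t0 + timedelta(minutes=4)),
    Alert("cloud", "jane", "large_upload", t0 + timedelta(minutes=9)),
    Alert("edr", "dev-bot", "powershell_exec", t0 + timedelta(minutes=1)),
]
benign_pairs = {("dev-bot", "powershell_exec")}  # tuned out as an approved deployment script

cases = correlate(suppress_known_benign(raw, benign_pairs), window=timedelta(minutes=30))
print(len(raw), "alerts ->", len(cases), "case(s)")
```

Here the deployment bot's alert is suppressed and the three alerts for the same user inside the window collapse into a single case, which is the login, PowerShell, and exfiltration example from the list in miniature.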

2. What is SOC alert fatigue, and why is it a critical risk for security teams?

SOC alert fatigue occurs when security analysts are overwhelmed by the sheer volume of alerts—most of which are false positives or low-priority noise—leading to desensitization and missed threats.

We see this pattern across nearly every organization we onboard:

- Volume problem: Mid-market SOCs receive 500–5,000+ alerts daily, but fewer than 1% represent genuine threats.

- Cognitive overload: Analysts spend 10–15 hours per week manually triaging alerts that should never have reached them.

- Coverage gaps: Research consistently shows that 70% of SOC teams admit critical alerts get ignored due to volume.

- Turnover accelerator: The average SOC analyst tenure is 18 months before burnout-driven attrition.

The hidden cost isn’t just fatigue—it’s the real threats buried in the noise that go uninvestigated. We built our alert triage and investigation capabilities specifically to eliminate this cycle. Our custom detection tuning reduces customer-facing alerts by up to 99%, ensuring your team focuses on confirmed incidents—not triaging thousands of maybes.

3. How does AI-powered triage automation speed up SOC investigations?

Traditional SOC investigation workflows are painfully linear: an alert fires, an analyst opens it, pivots between 3–5 tools, gathers context, contacts the user, and makes a disposition. This cycle takes 25–45 minutes per alert.
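A quick back-of-the-envelope calculation shows why that per-alert cost does not scale. The sketch below assumes 1,000 alerts per day and an eight-hour shift spent entirely on triage; both figures and the function names are illustrative assumptions, not measurements.

```python
def analyst_hours(alerts_per_day: int, minutes_per_alert: float) -> float:
    """Analyst-hours needed to manually triage one day's alerts."""
    return alerts_per_day * minutes_per_alert / 60


def analysts_needed(alerts_per_day: int, minutes_per_alert: float,
                    shift_hours: float = 8.0) -> float:
    """Full shifts required, assuming every minute of a shift goes to triage."""
    return analyst_hours(alerts_per_day, minutes_per_alert) / shift_hours


for minutes in (25, 45):
    hours = analyst_hours(1000, minutes)
    shifts = analysts_needed(1000, minutes)
    print(f"{minutes} min/alert: {hours:.0f} analyst-hours, {shifts:.0f} eight-hour shifts per day")
```

At 25 to 45 minutes per alert, 1,000 daily alerts work out to roughly 417 to 750 analyst-hours, or about 52 to 94 full shifts every day.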

AI-powered triage automation compresses this into seconds by:

- Auto-enriching alerts with asset ownership, user behavior baselines, geolocation, and threat intelligence, eliminating manual pivot time.

- Running correlation logic across endpoints, identity, cloud, and network simultaneously, not sequentially.

- Applying disposition recommendations based on historical patterns in your specific environment.

- Triggering ChatOps verification: our analysts reach out directly to affected users via Slack or Teams to confirm or deny suspicious activity in real time.

At UnderDefense, we documented threat detection 2 days faster than CrowdStrike OverWatch in a head-to-head comparison. Our MTTR for critical incidents is 0.5 hours—because AI handles the repetitive correlation while human analysts focus on judgment calls that require organizational context.

4. Can AI SOC automation actually reduce SOC analyst burnout?

Yes—and we’ve seen it firsthand across 500+ MDR deployments.

Burnout in security operations isn’t caused by hard work—it’s caused by meaningless work. When a Tier 1 analyst spends their entire shift investigating alerts that turn out to be a developer running a legitimate script, frustration compounds daily.

AI SOC automation reduces burnout by:

- Eliminating repetitive triage: AI handles the first 95% of alert disposition, freeing analysts to work on genuine threats that require critical thinking.

- Removing the 2 AM investigation cycle: Automated containment (credential revocation, endpoint isolation) means confirmed threats are handled without waking your team.

- Restoring professional fulfillment: Analysts transition from alert processors to threat hunters and incident responders, the work they were actually hired to do.

One of our G2 reviewers, a CIO, described it perfectly: “Not having to worry about ransomware, alert overload, and reporting” was the primary benefit of working with us. We recommend exploring our SOC Automation Assessment Checklist to benchmark where your team currently stands and identify automation opportunities.

5. How do we reduce false positives in the SOC without missing real threats?

False positive reduction without coverage loss requires a dual approach: better detection logic and human verification. Most tools attempt only the former and fail at the latter.

Here’s our proven framework:

- Custom detection tuning during onboarding: We don’t apply generic rulesets. During our 30-day onboarding, we map your environment’s normal behavior (approved scripts, expected geo-logins, sanctioned tools) and suppress known benign patterns.

- AI-driven behavioral baselining: The platform continuously learns what’s normal for each user and asset, flagging deviations rather than matching static signatures.

- Direct user verification (ChatOps): When an alert is ambiguous (“Did Jane log in from Berlin?”), our analysts ask Jane directly via Slack or Teams. This closes the verification loop in seconds, not hours.

- 96% MITRE ATT&CK coverage: Our comprehensive threat detection ensures that while false positives drop, real attack techniques remain fully covered.

The net result: false positives become a rarity while genuine threats surface faster. Our clients consistently report that when an alert reaches them, it’s something worth investigating.
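The difference between behavioral baselining and static signatures can be shown with a deliberately simple statistical sketch. This is an assumption-laden toy, not the platform's model: it uses a per-user z-score over daily PowerShell execution counts, and the `is_anomalous` function, the threshold of 3 standard deviations, and the sample histories are all hypothetical.

```python
from statistics import mean, stdev
from typing import List


def is_anomalous(history: List[float], observed: float, threshold: float = 3.0) -> bool:
    """Flag `observed` if it is more than `threshold` standard deviations from this user's baseline."""
    if len(history) < 2:
        return False  # not enough data to form a baseline; defer to other checks
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return observed != mu
    return abs(observed - mu) / sigma > threshold


# Hypothetical daily counts of PowerShell executions per user.
developer_history = [40, 38, 45, 42, 39, 44, 41]
finance_history = [0, 0, 1, 0, 0, 0, 0]

print(is_anomalous(developer_history, 43))  # within the developer's normal range
print(is_anomalous(finance_history, 12))    # far outside the finance user's baseline
```

A static "any PowerShell execution" signature would fire on both users. The baseline stays quiet for the developer, whose 43 executions are ordinary, and flags the finance workstation, where 12 executions are far outside anything seen before.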

6. What's the real cost of not addressing SOC alert fatigue?

The cost extends far beyond analyst salaries—it compounds across operational, financial, and strategic dimensions.

Operational costs:

- 10–15 hours/week per analyst wasted on manual triage of false positives

- Increased mean time to detect (MTTD) as critical alerts are buried in noise

- Average dwell time increases 3x when analysts are overwhelmed

Financial costs:

- Analyst turnover costs $50K–$100K per replacement (recruiting, training, ramp-up)

- The global average cost of a data breach was $4.45M (IBM Cost of a Data Breach Report 2023)

- Compliance penalties for inadequate monitoring and response documentation

Strategic costs:

- Security team credibility erodes with leadership when incidents are missed

- Inability to retain senior talent who refuse to work in reactive, alert-factory environments

We built our MDR service specifically to eliminate these compounding costs. Organizations that switch from manual triage to AI-assisted operations reclaim 60–80% of analyst time for proactive security activities—threat hunting, posture improvement, and architecture review.

7. How does an AI SOC differ from a traditional MSSP or MDR provider?

The distinction is architectural, not just branding.

Traditional MSSPs provide monitoring without intelligence. Legacy MDR providers detect without responding. An AI SOC combines automated enrichment, behavioral analysis, and human analyst response into a unified workflow.

We’ve detailed this evolution in our AI SOC Promise vs. Reality guide, which provides a practical evaluation framework for security leaders comparing these models.

8. How quickly can we implement an AI SOC to start seeing reduced alert volume?

With UnderDefense, the timeline is 30 days from contract to full operational coverage—not the 6-month deployment cycles typical of legacy providers.

Here’s the onboarding breakdown:

- Week 1: Integration with your existing stack. Our platform connects to 250+ tools (CrowdStrike, Splunk, SentinelOne, Microsoft Defender, Okta) without forcing replacements.

- Week 2: Custom detection tuning. We map your environment’s normal behavior to suppress known false positives from day one.

- Week 3: 24/7 monitoring activation with AI-driven triage and human analyst response.

- Week 4: 30-day impact report delivery, documenting alert reduction, threats detected, and response metrics.

Clients typically see an 80–90% reduction in alert noise within the first week of custom tuning. By day 30, you receive a full impact report showing exactly what changed—threats caught, false positives eliminated, and time reclaimed.

As one of our G2 reviewers noted: “The speed of onboarding was a delightful surprise. In times where integrating new systems can take weeks, UnderDefense had us up and running in no time.”
