Q1. Why Does Your AI SOC Deployment Model Matter More Than the AI Itself?

Here’s a pattern I see constantly: a security team already runs CrowdStrike for endpoints, Splunk for log aggregation, Okta for identity, and Microsoft Defender for cloud workloads. They decide to add an AI SOC layer, and suddenly the hardest question isn’t “how good is the AI?” It’s where the AI actually runs, who controls the infrastructure, and where your security telemetry lives. That deployment model decision is the architectural fork that determines everything downstream: data control, compliance posture, integration depth, and operational ownership. Most vendor evaluations focus on detection accuracy or AI capabilities. In practice, the deployment architecture shapes your security program far more than any algorithm ever will. Get the deployment wrong, and even the best AI engine becomes a liability.

❌ The Vendor Lock-In Trap Most Providers Won’t Talk About

Most traditional MDR providers dodge this question entirely. Arctic Wolf operates a proprietary stack: you feed data into their cloud, their way, on their terms. Want to look under the hood or make changes? As one cybersecurity services manager put it:

“We received little value from ArcticWolf. The product offered little visibility when we were using it… Anything you want to look at or changes you need to make in the product must go through their engineering team.”

— Matt C., Manager, Cybersecurity Services Arctic Wolf – G2 Verified Review

CrowdStrike Falcon Complete keeps you inside the Falcon ecosystem. ReliaQuest ties everything to GreyMatter. The message from most vendors is effectively the same: “plug into our cloud or leave.” That’s a deployment philosophy that treats your infrastructure reality as an afterthought, and it creates genuine problems when your regulatory or operational requirements don’t align with the vendor’s preferred model. I’ve watched organizations abandon months of integration work because the MDR vendor’s rigid deployment architecture collided with a new compliance mandate.

⚠️ Why Deployment Flexibility Is Non-Negotiable in 2026

Regulatory fragmentation has made this worse. GDPR, NIS2, CMMC, and ITAR: these frameworks don’t just regulate raw logs. AI models processing your security telemetry create derivative data: enriched alerts, correlation outputs, and investigation artifacts. That derivative data falls under the same jurisdictional controls as the source logs. A SaaS-only AI SOC processing European employee identity data in a US-hosted environment creates GDPR and CLOUD Act exposure that most vendors don’t even acknowledge. Sovereign cloud mandates across the EU and Asia-Pacific are accelerating this trend even further.

The “AI SOC + Human Ally” model has to adapt to the customer’s infrastructure, not force the customer to rebuild their SOC around a vendor’s preferred architecture. That’s the operational reality, and it’s non-negotiable.

✅ How UnderDefense MAXI Handles This Differently

We purpose-built UnderDefense MAXI for deployment flexibility: SaaS, customer-specific cloud environments (AWS, Azure, GCP, Oracle), on-premise, and air-gapped options. MAXI keeps logs and AI-generated data in your data lake, integrates with 250+ existing tools, and provides vendor-agnostic operation regardless of which deployment model fits your reality. You don’t surrender your data or your detection logic to access AI-powered security operations.

UnderDefense operates across 65,000+ endpoints globally in cloud, hybrid, and on-prem environments, because the deployment model should fit your regulatory and operational reality, not force you to rebuild your SOC around a vendor’s infrastructure preference.

Q2. What Are the Four AI SOC Deployment Models and How Do They Differ?

Four distinct deployment architectures exist for AI SOC environments, and each one makes fundamentally different tradeoffs around data control, compliance, cost, and operational ownership. Understanding these differences isn’t academic. It determines whether your AI SOC helps or harms your compliance posture.

The Four Models, Defined

- SaaS (Vendor-Hosted Multi-Tenant Cloud) — The vendor hosts everything: AI models, detection logic, log storage, and investigation workflows in their own multi-tenant cloud infrastructure.

- BYOC (Bring Your Own Cloud) — The vendor’s software and AI layer run inside your cloud VPC (AWS, Azure, GCP, Oracle). The vendor manages orchestration; you own the cloud account and all data.

- On-Premise — All components (AI models, detection logic, and telemetry) stay within your physical or virtual infrastructure. Full control, full responsibility.

- Air-Gapped — Complete isolation with zero internet connectivity. Designed for defense, intelligence community, critical infrastructure (OT/SCADA), and ITAR-controlled environments.

📊 Master Comparison Table

| Dimension | SaaS | BYOC | On-Premise | Air-Gapped |
|---|---|---|---|---|
| Data Location | Vendor cloud | Customer VPC | Customer data center | Isolated facility |
| Infrastructure Control | Vendor-managed | Shared (customer owns cloud, vendor manages software) | Customer-managed | Customer-managed |
| Shared Responsibility | Vendor handles most | Split: vendor manages AI/detection, customer owns data layer | Customer handles most | Customer handles all |
| Compliance Fit | SOC 2, basic GDPR | GDPR, HIPAA, SOC 2, NIS2 | All frameworks including strict sovereignty | CMMC, ITAR, classified environments |
| TCO Profile | Lowest operational overhead | Moderate (cloud costs + vendor fees) | Highest (infrastructure + ML-Ops staff) | Highest (physical + specialized staff) |
| Staffing Needs | Minimal | Cloud/DevOps team required | Internal ML-Ops + SOC team | Specialized cleared personnel |
| Update Mechanism | Automatic, vendor-controlled | Vendor-pushed into customer VPC | Vendor-assisted or manual | Secure media transfer only |
| Integration Approach | API-based, vendor-managed | API within customer VPC boundary | On-prem connectors + custom integrations | Isolated connectors, no external calls |

SaaS: Speed Over Control

SaaS is the fastest path to deployment and the lowest operational overhead. The vendor manages everything: infrastructure, updates, and scaling. The trade-off is that your telemetry leaves your perimeter, you face CLOUD Act exposure if the vendor hosts in US jurisdiction, and customization of AI model behavior is limited. Ideal for teams without dedicated ML-Ops staff who need coverage fast.

BYOC: The Middle Ground Gaining Traction

BYOC is the model I see gaining the most traction in 2026, especially among regulated industries: healthcare, fintech, and any organization subject to NIS2 or strict GDPR enforcement. The vendor manages the AI and detection layer, but your data never leaves your VPC. AI inference runs within your cloud account with vendor-managed orchestration. You get the expertise without surrendering data residency.

On-Premise: Full Control, Full Responsibility

On-premise means AI models, detection logic, and all telemetry stay within your physical or virtual infrastructure. This requires internal ML-Ops capability or vendor-assisted management, but it’s the right choice for enterprises with significant data center investments and strict data sovereignty mandates.

🔒 Air-Gapped: Maximum Isolation

Zero internet connectivity. Complete isolation. Model updates arrive via secure media transfer. This architecture exists for defense/IC, critical infrastructure, and ITAR-controlled environments. It delivers the highest security ceiling alongside the highest operational complexity.

UnderDefense MAXI supports all four models, keeping logs and AI data in the customer’s data lake regardless of which architecture fits your operational and regulatory reality.

Q3. Where Does Your Data Actually Live, and What’s the Difference Between Data Residency and Data Sovereignty?

This is the question that separates security leaders who understand AI SOC implications from those who don’t. “Where does my data live?” sounds simple. The answer, in an AI SOC context, is anything but.

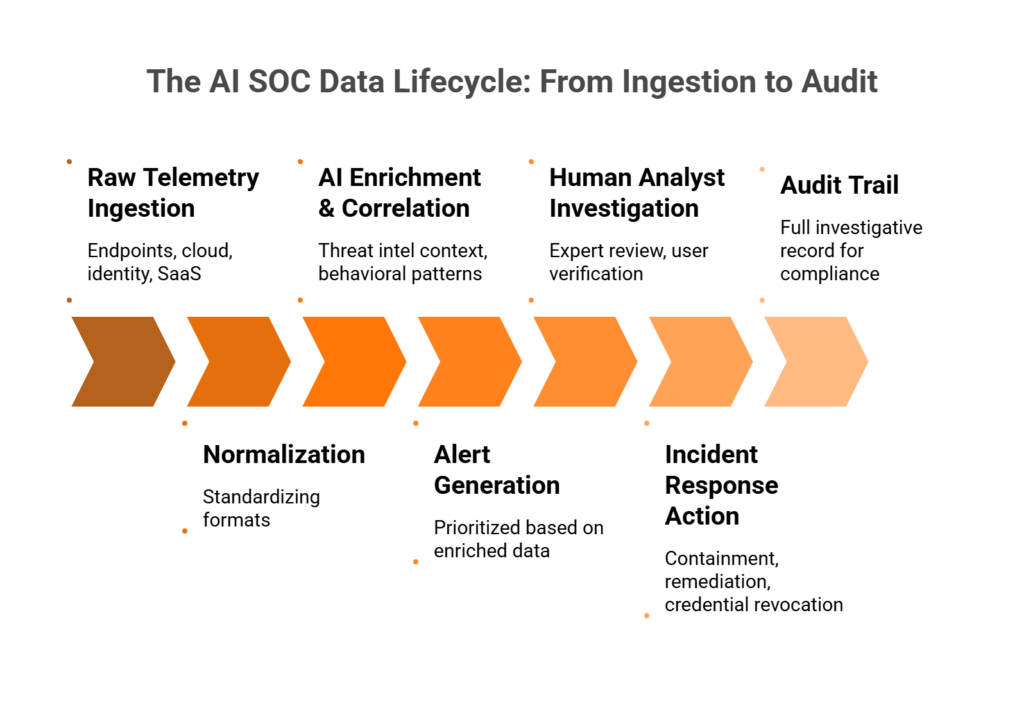

The AI SOC Data Lifecycle

Every AI SOC processes security data through a multi-stage lifecycle, and each stage creates data with jurisdictional, compliance, and ownership implications:

- Raw telemetry ingestion — Logs from endpoints, cloud workloads, identity providers, and SaaS applications

- Normalization — Standardizing formats across disparate sources

- AI enrichment and correlation — The AI layer connects signals, adds threat intelligence context, and identifies behavioral patterns

- Alert generation — Prioritized alerts based on enriched data

- Human analyst investigation — Expert review, user verification, and contextual analysis

- Incident response action — Containment, remediation, and credential revocation

- Audit trail — Full investigative record for compliance evidence

The critical point most vendors ignore: AI inference outputs are derivative data. Enriched alerts, correlation results, and investigation artifacts inherit the classification of the source telemetry. This means every stage above has compliance implications, not just the initial ingestion.
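The inheritance rule described above can be sketched in a few lines. This is a minimal illustration, not any vendor's data model: the `Sensitivity` levels, the `Artifact` class, and the sample artifact names are all hypothetical. The only idea it encodes is that a derived artifact is at least as sensitive as the most sensitive input it was built from.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical sensitivity levels; higher values are more restricted."""
    PUBLIC = 0
    INTERNAL = 1
    PERSONAL_DATA = 2
    EXPORT_CONTROLLED = 3


@dataclass
class Artifact:
    """Any record produced at a lifecycle stage (log, alert, case note)."""
    name: str
    stage: str
    sources: list["Artifact"] = field(default_factory=list)
    own_level: Sensitivity = Sensitivity.PUBLIC

    @property
    def level(self) -> Sensitivity:
        # A derived artifact is at least as sensitive as its most
        # sensitive input, regardless of how it was labeled itself.
        inherited = [source.level for source in self.sources]
        return max([self.own_level, *inherited])


# Hypothetical chain: raw logs -> alert -> investigation notes.
okta_log = Artifact("okta-login-events", "raw telemetry",
                    own_level=Sensitivity.PERSONAL_DATA)
edr_log = Artifact("edr-process-events", "raw telemetry",
                   own_level=Sensitivity.INTERNAL)
alert = Artifact("impossible-travel-alert", "alert generation",
                 sources=[okta_log, edr_log])
case_notes = Artifact("investigation-notes", "audit trail", sources=[alert])

print(case_notes.level.name)
```

Even though the alert and the case notes were never labeled directly, both resolve to the level of the identity log they were derived from, which is why every lifecycle stage needs a storage location that satisfies the strictest source.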

📊 Data Flow by Deployment Model

| Lifecycle Stage | SaaS | BYOC | On-Premise | Air-Gapped |
|---|---|---|---|---|
| Raw telemetry storage | Vendor cloud | Customer VPC | Customer infrastructure | Isolated facility |
| AI processing location | Vendor cloud | Customer VPC | Customer infrastructure | Isolated facility |
| Enriched alert storage | Vendor cloud | Customer VPC | Customer infrastructure | Isolated facility |
| Investigation artifacts | Vendor cloud | Customer VPC | Customer infrastructure | Isolated facility |
| Audit trail ownership | Shared (vendor-controlled) | Customer-owned | Customer-owned | Customer-owned |
| External data egress | Yes (to vendor cloud) | No (stays in VPC) | No | Zero |

⚠️ Data Residency vs. Data Sovereignty: The Distinction That Matters

These two terms get conflated constantly, and the confusion creates real compliance risk:

- Data residency = where data physically sits. A geographic question.

- Data sovereignty = whose laws govern that data. A jurisdictional question.

Here’s where it gets dangerous: an AI SOC processing European employee identity logs in a US-hosted SaaS environment creates GDPR exposure, even though the raw logs originated in the EU. The US CLOUD Act gives federal agencies authority to compel US-based cloud providers to produce data stored anywhere globally. If your AI SOC vendor is a US-based provider, your European security telemetry may be subject to US jurisdiction regardless of where the data physically resides.
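To make the residency/sovereignty split concrete, here is a deliberately simplified sketch. The `Hosting` fields and both function names are hypothetical, and the jurisdiction logic is a rough model of the reasoning in this section rather than a legal test: residency looks only at where the bytes sit, while the set of jurisdictions that may claim authority also depends on who operates the service and whose data it is.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hosting:
    storage_region: str        # where the bytes physically sit (residency)
    provider_home: str         # jurisdiction the provider itself answers to
    data_subject_region: str   # whose personal data the telemetry describes


def residency(hosting):
    """Residency is purely a geographic question."""
    return hosting.storage_region


def claimed_jurisdictions(hosting):
    """Jurisdictions that may assert authority over the data (simplified)."""
    return {
        hosting.storage_region,
        hosting.provider_home,
        hosting.data_subject_region,
    }


# EU employees, EU data center, US-headquartered vendor (illustrative values).
eu_region_us_vendor = Hosting("EU", "US", "EU")
# Same data inside the customer's own EU account with an EU-based operator.
eu_region_eu_operator = Hosting("EU", "EU", "EU")

print(residency(eu_region_us_vendor), claimed_jurisdictions(eu_region_us_vendor))
print(residency(eu_region_eu_operator), claimed_jurisdictions(eu_region_eu_operator))
```

Both deployments report the same residency, yet only the first one carries a second jurisdiction. That gap is exactly what a residency-only questionnaire misses.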

This extends to AI model training data. If a vendor’s AI models are trained on aggregated customer telemetry, who owns the patterns the model learned? Where does that training happen? These are questions most MDR providers simply cannot answer transparently.

✅ UnderDefense’s Data Ownership Approach

We designed UnderDefense MAXI so that logs and AI-generated data stay in your data lake. Whether deployed as SaaS, BYOC, on-prem, or air-gapped, the customer retains full ownership of all telemetry and AI-generated investigation artifacts. Every investigative step is observable and auditable: no black boxes, no hidden data processing, no “trust me, it works.” That’s the standard we hold ourselves to.

Q4. Who Controls the Infrastructure, and What Does ‘Shared Responsibility’ Really Mean for Each Model?

“Shared responsibility” is one of the most overused and least understood phrases in cybersecurity. Every MDR vendor claims a shared responsibility model. Almost none of them define it clearly enough for you to know who’s actually accountable when something goes wrong.

📊 Shared Responsibility Matrix

| Responsibility | SaaS | BYOC | On-Premise | Air-Gapped |
|---|---|---|---|---|
| Physical infrastructure | Vendor | Customer (cloud provider) | Customer | Customer |
| Network security | Vendor | Shared | Customer | Customer |
| OS patching | Vendor | Shared | Customer | Customer |
| AI model training/updates | Vendor | Vendor (deployed in customer VPC) | Vendor-assisted or customer | Customer (via secure media) |
| Detection rule management | Vendor | Shared | Shared or customer | Customer |
| Log storage/retention | Vendor | Customer | Customer | Customer |
| Encryption key management | Vendor | Customer | Customer | Customer |
| Access control (RBAC) | Shared | Customer | Customer | Customer |
| Incident response execution | Vendor (with escalation) | Shared | Shared | Customer (with vendor guidance) |
| Compliance evidence generation | Vendor | Shared | Customer | Customer |

The Gray Areas That Cause Operational Friction

The matrix above looks clean. Reality isn’t. Here are the questions that shift dramatically between deployment models, and that most vendors avoid answering:

- Who owns encryption keys? In SaaS, the vendor typically controls key management. In BYOC and on-prem, you do.

- Who controls AI model update cadence? Can you delay an update that might disrupt detection logic during a critical compliance window?

- Who has access to raw investigation data? In SaaS, vendor analysts and infrastructure staff have access. In BYOC, access is scoped to your VPC.

- Who can modify detection logic? If you can’t adjust detection rules, you’re renting someone else’s security judgment.

❌ The “Black Box” Problem with Traditional MDR

This is where traditional MDR providers fall apart operationally. A CISO reviewing Arctic Wolf on Gartner put it directly:

“Analysts provide little context, and when asked for more information in the investigation nothing is ever provided or even communicated.”

— CISO, Manufacturing Arctic Wolf – Gartner Peer Review

“This is not an extension of our security team as was originally sold… We receive alerts, but not necessarily a clear path to resolution.”

— Sr. Cybersecurity Engineer, Manufacturing Arctic Wolf – Gartner Peer Review

When your MDR operates as an opaque system, you can’t observe what the AI does with your data, you can’t audit investigation logic, and you can’t modify detection rules to match your context.

✅ UnderDefense’s Observable, Auditable Approach

Every investigative step in UnderDefense MAXI is observable and auditable. Our Detection Logic as Code approach means detection rules are written in Python, versioned, unit-tested, and deployed via CI/CD. The customer retains ownership of detection rules, investigation artifacts, and all telemetry, regardless of deployment model. ChatOps verification provides full transparency: when our analysts verify suspicious activity with a user via Slack or Teams, you see the conversation, the decision, and the outcome.
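For readers who have not seen detection logic treated as code, the sketch below shows the general shape: a rule is an ordinary Python function, it carries a version, and it ships with unit tests that a CI pipeline can run before deployment. Everything here is hypothetical, including the rule ID, the event format, and the function name. It illustrates the practice and is not taken from the MAXI rule set.

```python
from collections import defaultdict

RULE_ID = "auth-001"      # hypothetical identifier
RULE_VERSION = "1.2.0"    # bumped on every reviewed change


def failed_then_success(events, threshold=5):
    """Return users who log in successfully after a run of failures.

    `events` is an iterable of dicts with "user" and "outcome" keys
    ("failure" or "success"), already sorted by time. A user is flagged
    when a success follows at least `threshold` consecutive failures.
    """
    streak = defaultdict(int)   # consecutive failures per user
    flagged = set()
    for event in events:
        user = event["user"]
        if event["outcome"] == "failure":
            streak[user] += 1
        else:
            if streak[user] >= threshold:
                flagged.add(user)
            streak[user] = 0    # any success resets the run
    return flagged


# Unit tests live next to the rule and run in CI (pytest-style functions).
def test_flags_success_after_repeated_failures():
    events = [{"user": "alice", "outcome": "failure"}] * 5
    events.append({"user": "alice", "outcome": "success"})
    assert failed_then_success(events) == {"alice"}


def test_ignores_ordinary_typos():
    events = [
        {"user": "bob", "outcome": "failure"},
        {"user": "bob", "outcome": "success"},
    ]
    assert failed_then_success(events) == set()
```

Because the rule is plain code under version control, a reviewer can read the diff when the threshold changes, and the same tests double as audit evidence that the logic behaves as documented.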

The test is simple: can you reproduce the investigation? Can you audit every step? If not, you don’t have a partner. You have a black box with a monthly invoice.

Q5. Which Deployment Model Matches Your Regulatory Requirements?

Every regulatory framework imposes different data handling, residency, and control requirements. Choose the wrong deployment model for your AI SOC, and you’re not just dealing with a vendor mismatch. You’re staring down compliance gaps that cost six figures to remediate and can stall deals for months.

❌ The Wrong Way to Decide

Most security leaders default to whatever the vendor offers. “They only have SaaS, so we’ll make SaaS work for HIPAA.” Or they assume any cloud deployment automatically disqualifies them from compliance with data sovereignty mandates. Both approaches are wrong.

The first ignores that certain frameworks impose explicit requirements around data residency, encryption-at-rest controls, and access auditability that a multi-tenant SaaS model may not satisfy without additional architectural controls. The second ignores the fact that BYOC (Bring Your Own Cloud) deployments running inside your VPC can satisfy the same residency and control requirements as on-prem, sometimes better, because the cloud provider’s compliance certifications layer on top of yours.

✅ The Right Evaluation Framework

Map your specific regulatory mandates to deployment capabilities. Here’s the compliance mapping matrix:

| Deployment Model | GDPR | HIPAA | SOC 2 Type II | ISO 27001 | NIS2 | PCI DSS | CMMC Level 2+ | ITAR | FedRAMP |
|---|---|---|---|---|---|---|---|---|---|
| SaaS (Multi-Tenant) | ✅ | ⚠️ | ✅ | ✅ | ⚠️ | ✅ | ❌ | ❌ | ❌ |
| BYOC (Your VPC) | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ⚠️ | ❌ | ⚠️ |
| On-Premise | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ⚠️ | ⚠️ |
| Air-Gapped | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |

🏢 Industry-Vertical Mapping

Your industry often dictates the shortest path to the right model:

- Defense / Intelligence Community → Air-Gapped (ITAR, CMMC Level 2+, FedRAMP High)

- Healthcare → On-Prem or BYOC (HIPAA BAA requirements, PHI data residency)

- Financial Services → BYOC or SaaS (SOC 2, PCI DSS, data sovereignty per jurisdiction)

- Technology / SaaS Companies → SaaS or BYOC (SOC 2, ISO 27001, speed-to-value)

- Manufacturing / OT / SCADA → On-Prem or Air-Gapped (network isolation, NIS2)

- PE Portfolio Companies → SaaS for rapid security uplift across portfolio

Where UnderDefense Stands

Here’s the operational reality: most AI SOC vendors offer exactly one deployment model, which means you either accept compliance risk or reject the vendor entirely. We support all four (SaaS, BYOC, On-Prem, and Air-Gapped) because the customer should choose the model, and the vendor should adapt.

UnderDefense holds full regulatory compliance for ISO 27001, SOC 2 Type 1 & 2, HIPAA, PCI DSS, GDPR, and CMMC. The UnderDefense MAXI Compliance product includes forever-free compliance kits for SOC 2, ISO 27001, and HIPAA, replacing separate GRC tools like Vanta or Drata with automated evidence collection, continuous control validation, and reporting built on an actual security operations platform.

Deployment flexibility isn’t a feature checkbox. It’s the difference between achieving compliance and explaining to your auditor why your AI SOC vendor forced you into an architecture that doesn’t fit your regulatory reality.

Q6. How Does Each Model Fit Into the SOC Stack You Already Operate?

The Scenario Nobody Wants

You’ve spent 18 months tuning Splunk detection rules. You’ve invested $200K+ in CrowdStrike Falcon across 3,000 endpoints. Your Okta identity governance is finally mature. Then a vendor says their AI SOC requires migrating logs to their proprietary SIEM, abandoning your detection logic, and starting from scratch.

This isn’t hypothetical. It’s an operational reality. As JR, a former CISO at Micron and Darktrace, explained on our podcast: when you switch vendors, the business logic and correlation rules don’t transfer. Custom integrations, like connecting project management systems to security workflows for insider threat context, are gone. You’re back to square one on the tuning process.

⚠️ Why This Problem Exists

Most AI SOC vendors bundle proprietary SIEM/XDR as a hard requirement. Arctic Wolf requires replacing your existing SIEM with their stack, offering no flexibility and poor on-prem support. Deepwatch ties you to Splunk. The deployment model you pick dictates whether your existing investments survive or get abandoned.

“This is not an extension of our security team as was originally sold.”

— Sr. Cybersecurity Engineer, Manufacturing [$500M–$1B] Arctic Wolf – Gartner Verified Review

“We received little value from Arctic Wolf. The product offered little visibility… Anything you want to look at or changes you need to make in the product must go through their engineering team.”

— Matt C., Manager, Cybersecurity Services Arctic Wolf – G2 Verified Review

✅ How It Should Work

The AI SOC layer should integrate with your existing stack regardless of deployment model.

- SaaS: API connections to your SIEM, EDR, and identity tools in the cloud

- BYOC: Runs inside your VPC alongside Splunk, Elastic, or Sentinel

- On-Prem: Deploys adjacent to your SIEM infrastructure

- Air-Gapped: Operates within your isolated network segment

Zero rip-and-replace across all four models. Your detection logic stays yours.

UnderDefense’s Approach

UnderDefense MAXI integrates with 250+ tools across every deployment model: Splunk, Elastic, Microsoft Sentinel, CrowdStrike, SentinelOne, Defender, Okta, AWS, Azure, and GCP.

Detection Logic as Code means rules are versioned, portable, and yours to keep. If you ever switch providers, the correlation rules and business logic you built stay with you, not locked in a vendor’s proprietary system. 30-day turnkey onboarding includes security hardening and custom detection tuning validated with adversary simulation frameworks like Caldera and Atomic Red Team.

“Analysts provide little context, and when asked for more information in the investigation nothing is ever provided or even communicated. Support incidents are not worked to completion and communication evaporates.”

— CISO, Manufacturing [$3B–$10B] Arctic Wolf – Gartner Verified Review

Going from 6-month stack migrations to a 30-day turnkey deployment that preserves every dollar already invested in security tools: that’s vendor partnership, not vendor lock-in.

Q7. How Do AI Models Get Updated in On-Premise and Air-Gapped Environments?

AI SOC platforms depend on continuously updated detection models, threat intelligence feeds, and behavioral baselines. In SaaS and BYOC deployments, updates flow seamlessly over the network. In on-premise and air-gapped environments, the update pipeline must be completely redesigned. This is the most under-documented aspect of AI SOC deployment, and the #1 concern practitioners raise once they move past the marketing slides.

🔄 On-Premise Update Mechanisms

On-prem deployments maintain internet connectivity, so updates are logistically simpler. They still require deliberate operational controls:

- Vendor-managed update channels via secure VPN/tunnel connections to the vendor’s update repository

- Customer-controlled update schedules with staged rollout: test environment first, production second

- Detection Logic as Code rules versioned in Git, tested via CI/CD pipelines, and deployed on the customer’s cadence

- Threat intelligence feeds delivered via STIX/TAXII protocols or vendor-supplied packages integrated into the existing SIEM

The key distinction from SaaS: the customer controls when updates deploy, not the vendor. This matters for change management in regulated environments where unscheduled changes to production security infrastructure require documented approval.
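A customer-controlled cadence usually comes down to a gate that every update must pass before it reaches production. The following sketch encodes the three conditions implied above: the update ran in the test environment first, a documented approval exists, and today is not inside a change freeze. The class, the function, the ticket ID, and the dates are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class UpdateRequest:
    version: str
    passed_in_test: bool            # staged rollout: test environment first
    change_ticket: Optional[str]    # documented approval, e.g. a ticket ID


def may_deploy_to_production(update, today, freeze_windows):
    """Return (allowed, reason) for promoting an update to production."""
    if not update.passed_in_test:
        return False, "not yet validated in the test environment"
    if not update.change_ticket:
        return False, "no documented change approval"
    for start, end in freeze_windows:
        if start <= today <= end:
            return False, f"change freeze until {end.isoformat()}"
    return True, "approved"


# Illustrative freeze around a compliance audit window.
audit_freeze = [(date(2026, 3, 1), date(2026, 3, 15))]
model_update = UpdateRequest("2.4.1", passed_in_test=True, change_ticket="CHG-1042")

print(may_deploy_to_production(model_update, date(2026, 3, 10), audit_freeze))
print(may_deploy_to_production(model_update, date(2026, 3, 16), audit_freeze))
```

Returning a reason alongside the decision keeps the gate auditable: the same string can be written to the change record whether the update is promoted or held.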

🔒 Air-Gapped Update Mechanisms

Air-gapped environments are fundamentally different. With no two-way network path, updates arrive either by physical transfer, which practitioners call the “sneakernet” model, or through strictly one-way hardware.

- Secure media transfer: Encrypted USB drives or optical media carry model packages, detection rules, and threat intelligence from a connected staging environment to the isolated production network

- One-way data diodes for threat intelligence ingestion: hardware devices that physically allow data to flow in only one direction, enabling threat feed updates without creating any outbound data path

- Offline model packages validated via cryptographic signatures before deployment, with every package integrity-checked to ensure it hasn’t been tampered with during the transfer process

- Recommended cadence: Security patches monthly, model refreshes quarterly, and full platform updates semi-annually
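The integrity-check half of that workflow can be sketched with the standard library alone. The example below compares SHA-256 digests of the transferred files against a manifest. It assumes the manifest itself has already been authenticated by verifying the vendor's signature with a separate tool, a step that is not shown here. The manifest layout and all names are hypothetical.

```python
import hashlib
import hmac
import json
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large model packages fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_package(package_dir, manifest_name="manifest.json"):
    """Return the files whose digest is missing or does not match the manifest."""
    package_dir = Path(package_dir)
    manifest = json.loads((package_dir / manifest_name).read_text())
    failures = []
    for relative_name, expected in manifest["sha256"].items():
        target = package_dir / relative_name
        if not target.is_file():
            failures.append(relative_name)
            continue
        # compare_digest avoids leaking the mismatch position through timing
        if not hmac.compare_digest(sha256_of(target), expected):
            failures.append(relative_name)
    return failures


def demo():
    """Build a tiny package, verify it, tamper with it, verify again."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "rules.tar").write_bytes(b"detection rules v42")
        manifest = {"sha256": {"rules.tar": sha256_of(root / "rules.tar")}}
        (root / "manifest.json").write_text(json.dumps(manifest))
        clean = verify_package(root)
        (root / "rules.tar").write_bytes(b"tampered in transit")
        tampered = verify_package(root)
    return clean, tampered


print(demo())
```

An empty list means every file matched. Any entry in the list should block the import into the isolated network until the media is re-issued.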

⏰ The Latency Trade-Off

Here’s the honest reality: air-gapped environments operate with inherent update lag. With monthly patches and quarterly model refreshes, your detection models and threat intelligence can trail what a connected SaaS deployment sees by weeks or months. Pretending otherwise is dishonest.

The mitigation strategy is two-fold:

- Broader behavioral detection baselines that identify anomalous patterns without requiring the latest signature; behavioral rules age better than IOC-based rules

- Proactive threat hunting by experienced analysts who compensate for any update latency by actively looking for adversary techniques, not just waiting for automated detections to fire
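The difference between the two rule styles is easy to see side by side. In the sketch below, the IOC check only fires on indicators that were already in the last delivered feed, while the behavioral check compares today's activity against the entity's own history using a simple z-score. The threshold, the sample data, and the function names are illustrative assumptions, and production baselines are typically more sophisticated than a z-score.

```python
from statistics import mean, stdev

# IOC-style rule: only as current as the last feed that crossed the air gap.
KNOWN_BAD_HASHES = {"hash-from-last-quarterly-feed"}   # placeholder value


def matches_ioc(file_hash):
    return file_hash in KNOWN_BAD_HASHES


# Behavioral rule: needs no feed, only the entity's own history.
def is_anomalous(history, observed, z_threshold=3.0):
    """Flag `observed` if it deviates strongly from the historical baseline."""
    if len(history) < 2:
        return False                 # not enough data to form a baseline
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return observed != baseline  # perfectly flat history: any change stands out
    return abs(observed - baseline) / spread > z_threshold


# Daily count of hosts a service account authenticated to (illustrative).
daily_hosts = [4, 5, 6, 5, 4, 6, 5]

print(matches_ioc("hash-seen-for-the-first-time-today"))
print(is_anomalous(daily_hosts, 6))
print(is_anomalous(daily_hosts, 40))
```

A brand-new indicator slips past the IOC set until the next media transfer, but a service account that suddenly touches 40 hosts stands out against its baseline regardless of feed freshness.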

How UnderDefense Handles This

We support all update models. Detection Logic as Code deploys through customer-controlled CI/CD pipelines, connected or air-gapped. For air-gapped customers, we provide cryptographically signed offline packages on a defined cadence.

Proactive threat hunting by Tier 3–4 analysts compensates for update latency in isolated environments. Because here’s the thing we’ve learned over years of serving defense and government organizations: in a disconnected environment, the human analyst layer becomes more critical, not less. Automation scales routine work, but humans handle the edge cases that signatures haven’t caught yet.

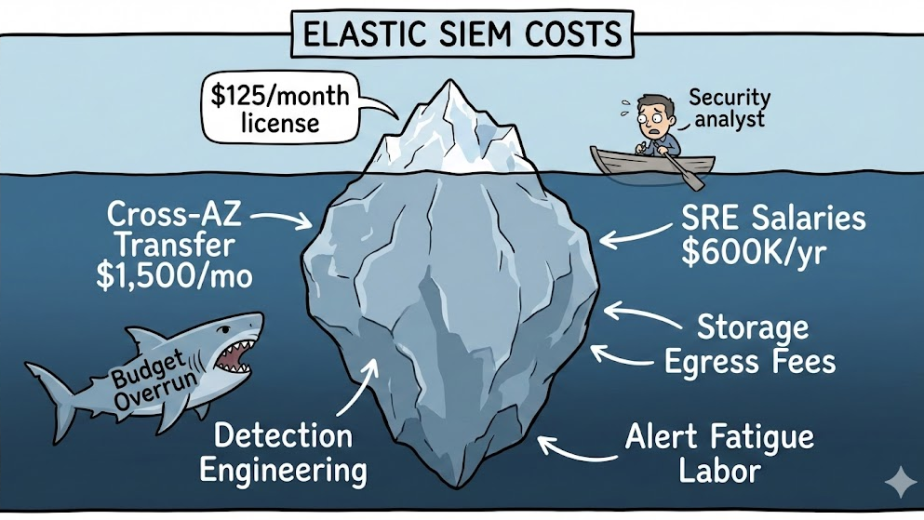

Q8. What Does Each Deployment Model Cost, and What Are the Hidden Operational Expenses?

The cheapest deployment model isn’t always the most cost-effective. SaaS has the lowest upfront cost but the least control. Air-gapped has the highest operational overhead, but it may be the only option that avoids regulatory fines worth multiples of the infrastructure investment. Understanding the full TCO across all four models is the difference between a sound business decision and a budget surprise six months in.

💰 TCO Breakdown by Deployment Model

| Cost Category | SaaS | BYOC | On-Premise | Air-Gapped |
|---|---|---|---|---|
| CapEx (Hardware) | None | None | High | Highest |
| OpEx (Licensing) | Monthly subscription | Cloud spend + vendor sub | Perpetual/annual license | Perpetual/annual license |
| Infrastructure Staff | None required | Cloud-literate team | Dedicated infrastructure + ML-Ops | Dedicated infrastructure + ML-Ops + update management |
| Onboarding Cost | Low | Moderate | Moderate–High | High |
| Compliance Evidence | Automated (if included) | Varies | Manual or tooling | Manual or tooling |
| Typical Annual Range | $50K–$200K | $100K–$350K | $300K–$800K | $500K–$1.2M+ |

Ranges based on mid-market organizations managing 1,000–5,000 endpoints.

💸 Hidden Costs Most Vendors Don’t Mention

- ML-Ops staffing for on-prem and air-gapped: Someone has to manage model updates, validate packages, and coordinate deployment windows. This is a specialized role that doesn’t exist in SaaS models.

- Compliance evidence generation: If your MDR vendor doesn’t include compliance automation, add $30K–$60K/year for a separate GRC tool (Vanta, Drata, or equivalent).

- Detection rule maintenance: Custom rules decay over time as your environment evolves. Who maintains them, and at what cost?

- Professional services fees: Many vendors charge $50K–$150K for onboarding alone, with 6-month deployment timelines that burn internal resources.

- Vendor lock-in exit costs: If your detection logic and correlation rules are trapped in a vendor’s proprietary SIEM, switching providers means rebuilding from scratch.

✅ UnderDefense’s Approach to Cost Transparency

Transparent, published pricing: $11–$15/endpoint/month for MDR across all deployment models. No hidden fees, no “contact sales” for a quote.

- 30-day turnkey onboarding eliminates the 6-month professional services spend that vendors like Arctic Wolf and Deepwatch require for stack migrations

- Forever-free compliance kits (SOC 2, ISO 27001, and HIPAA) eliminate separate GRC tool costs

- Detection Logic as Code stays with you: no exit penalty, no rebuilding if you ever switch

- 830% ROI over 3 years documented across MDR customer deployments
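The published per-endpoint rate quoted above converts to an annual figure with simple arithmetic. The sketch covers only the per-endpoint MDR fee. The function name and the endpoint counts are illustrative, and cloud spend, staffing, and the other line items from the TCO table are not modeled.

```python
def annual_mdr_fee(endpoints, low_rate=11, high_rate=15):
    """Return (low, high) annual fee in USD for a per-endpoint monthly rate."""
    months = 12
    return endpoints * low_rate * months, endpoints * high_rate * months


for endpoints in (1_000, 3_000, 5_000):
    low, high = annual_mdr_fee(endpoints)
    print(f"{endpoints:>5} endpoints: ${low:,} to ${high:,} per year")
```

For 3,000 endpoints this works out to $396,000 to $540,000 per year, which is the number to place next to infrastructure, staffing, and GRC tooling when comparing models.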

“Arctic Wolf provides solid detection and response capabilities, but overly relies on the client’s team for remediation, which really hurts the value of the service.”

— VP of Technology, Services [$50M] Arctic Wolf – Gartner Verified Review

“Started out well but over the years the service has consistently not met expectations. The issues that we have experienced has greatly outweighed the benefits.”

— CISO, Manufacturing [$3B–$10B] Arctic Wolf – Gartner Verified Review

UnderDefense maintains 113% net dollar retention and zero ransomware cases across all MDR customers for 6 years. Transparent pricing and deployment flexibility mean customers never feel trapped, and that’s exactly why they stay.

Q9. How Do You Choose the Right Deployment Model? A Decision Flowchart for Your Organization

Answer these 7 questions to identify your AI SOC deployment model. Grab a pen: this takes two minutes, and the result saves you months of vendor evaluation headed in the wrong direction.

📋 The Deployment Model Checklist

- ☐ Does your organization handle ITAR, CMMC Level 3, or classified data? → If yes: Air-Gapped is your only compliant option. Stop here.

- ☐ Do you face strict data sovereignty mandates (GDPR, NIS2) but don’t require air-gapping? → On-Prem or BYOC depending on infrastructure maturity.

- ☐ Do you operate mature cloud infrastructure (AWS, Azure, or GCP) with a cloud-literate ops team? → BYOC: deploy the AI SOC inside your own VPC for maximum control with cloud economics.

- ☐ Do you maintain an on-prem data center with an existing SIEM (Splunk, Elastic)? → On-Prem or Hybrid: the AI SOC deploys adjacent to your current infrastructure.

- ☐ Is your security team under 5 people with no dedicated infrastructure staff? → SaaS: fastest time-to-value, lowest operational overhead.

- ☐ Must you preserve existing SIEM/EDR investments without rip-and-replace? → Any model, but only with a vendor-agnostic provider.

- ☐ Do you operate across multiple regulatory jurisdictions simultaneously? → Hybrid (multiple deployment models for different segments).
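The checklist can also be read as a small decision function. The sketch below is one possible encoding of the seven questions, with hypothetical field names. It follows the order above, stops at the first question when air-gapping is mandatory, and treats "vendor-agnostic" and "hybrid" as notes on the shortlist instead of deployment models.

```python
from dataclasses import dataclass

ALL_MODELS = ["SaaS", "BYOC", "On-Premise", "Air-Gapped"]


@dataclass
class Answers:
    itar_cmmc_or_classified: bool = False   # question 1
    strict_sovereignty: bool = False        # question 2
    mature_cloud: bool = False              # question 3
    on_prem_siem: bool = False              # question 4
    small_team: bool = False                # question 5
    preserve_stack: bool = False            # question 6
    multi_jurisdiction: bool = False        # question 7


def recommend(answers):
    """Return (candidate_models, notes) for a set of checklist answers."""
    notes = []
    if answers.preserve_stack:
        notes.append("shortlist vendor-agnostic providers only")
    if answers.itar_cmmc_or_classified:
        return ["Air-Gapped"], notes        # the checklist says to stop here

    models = []

    def add(*names):
        for name in names:
            if name not in models:
                models.append(name)

    if answers.strict_sovereignty:
        add("On-Premise", "BYOC")
    if answers.mature_cloud:
        add("BYOC")
    if answers.on_prem_siem:
        add("On-Premise")
    if answers.small_team:
        add("SaaS")
    if answers.multi_jurisdiction:
        notes.append("hybrid: different models for different segments")

    # No narrowing answers means every model is still on the table.
    return (models or list(ALL_MODELS)), notes


print(recommend(Answers(mature_cloud=True, preserve_stack=True)))
print(recommend(Answers(itar_cmmc_or_classified=True, small_team=True)))
```

Encoding the checklist this way makes the precedence explicit: a mandatory air-gap overrides every convenience-driven answer, while the stack-preservation requirement filters vendors, not models.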

🏢 Industry-Vertical Quick Reference

| Industry | Recommended Model | Primary Driver |
|---|---|---|
| Defense / Intelligence Community | Air-Gapped | ITAR, CMMC, FedRAMP High |
| Healthcare (HIPAA + state privacy) | On-Prem or BYOC | PHI data residency, BAA requirements |
| Financial Services (SOC 2 + PCI DSS) | BYOC or SaaS | Data sovereignty per jurisdiction |
| Technology / SaaS | SaaS or BYOC | Speed-to-value, SOC 2, ISO 27001 |
| Manufacturing / OT / SCADA | On-Prem or Air-Gapped | Network isolation, NIS2 |
| PE Portfolio Companies | SaaS | Rapid uplift across portfolio |

Score Interpretation

- Checked Air-Gapped or On-Prem boxes: Your deployment model is non-negotiable. Eliminate any vendor that doesn’t support it.

- Checked BYOC or SaaS boxes: You have flexibility. Optimize for speed, cost, and integration depth.

- Checked the vendor-agnostic box: This is the filter that matters most. Most vendors fail here.

✅ Where UnderDefense Covers Every Scenario

UnderDefense is the only AI SOC provider supporting all four deployment models with the same UnderDefense MAXI platform, same detection capabilities, same concierge analyst team, and same 250+ integrations. Whether you checked one box or five, the platform adapts to your reality, not the other way around.

30-day onboarding across all models includes custom detection tuning and adversary simulation validation using Caldera and Atomic Red Team. The result: 99% noise reduction and detection coverage you can verify on day one.

Q10. Why Do Most AI SOC Vendors Lock You Into a Single Deployment Model, and What Should You Demand Instead?

If you’re evaluating AI SOC providers in 2026, deployment flexibility isn’t a nice-to-have. It’s the architectural decision that determines whether your security investments survive the next vendor contract cycle. Most providers offer exactly one model, and that constraint isn’t a feature gap. It’s a business model choice that prioritizes their economics over your operational reality.

⚠️ Where the Major Providers Fall Short

Arctic Wolf delivers strong MDR coverage with dedicated concierge teams. The limitation: it operates on a proprietary SIEM stack, cloud-only, with no on-prem and no air-gapped support. If you have existing security investments, they get replaced, not enhanced.

CrowdStrike Falcon Complete provides elite endpoint detection. But it runs exclusively in CrowdStrike’s cloud, within the Falcon ecosystem. No BYOC option, no air-gapped AI SOC, and no flexibility for organizations that need deployment choice.

“We received little value from Arctic Wolf. The product offered little visibility… Anything you want to look at or changes you need to make in the product must go through their engineering team.”

— Matt C., Manager, Cybersecurity Services Arctic Wolf – G2 Verified Review

“Started out well but over the years the service has consistently not met expectations. Log collectors show working, however when asked to provide logs for an investigation no logs could be provided.”

— CISO, Manufacturing [$3B–$10B] Arctic Wolf – Gartner Verified Review

✅ UnderDefense’s Multi-Model Approach

UnderDefense MAXI supports SaaS, BYOC (AWS, Azure, GCP, Oracle), On-Prem, and Air-Gapped, with the same AI SOC capabilities, same concierge analyst team, and same 250+ integrations across every model. Detection Logic as Code is versioned, portable, and yours to keep.

Side-by-Side Comparison

| Dimension | UnderDefense | Arctic Wolf | CrowdStrike Falcon Complete |
|---|---|---|---|
| Deployment Options | SaaS, BYOC, On-Prem, Air-Gapped | Cloud-only (proprietary) | Cloud-only (Falcon) |
| Data Ownership | Customer retains full control | Vendor-controlled SIEM | Falcon cloud storage |
| Integration Approach | 250+ existing tools supported | Proprietary stack required | Falcon ecosystem only |
| Pricing Transparency | Published $11–$15/endpoint/month | Contact sales ($96K median) | Contact sales |
| Response Capability | Full containment + ChatOps verification | Alert escalation to customer | Endpoint-focused response |
| Compliance Support | Forever-free kits (SOC 2, HIPAA, ISO) | Separate product | Limited |
| Onboarding Speed | 30-day turnkey deployment | Stack migration required | Falcon deployment |
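To turn the published per-endpoint rate into a budget figure, a quick calculation helps. The sketch below uses only the $11–$15/endpoint/month band from the table. The function name and the 500-endpoint example are illustrative assumptions, and real quotes may include terms this arithmetic ignores.

```python
MONTHS_PER_YEAR = 12


def annual_cost_range(endpoints: int, low_rate: int = 11, high_rate: int = 15):
    """Return (low, high) annual cost in USD for a per-endpoint monthly rate band."""
    if endpoints < 0:
        raise ValueError("endpoints must be non-negative")
    return (
        endpoints * low_rate * MONTHS_PER_YEAR,
        endpoints * high_rate * MONTHS_PER_YEAR,
    )


if __name__ == "__main__":
    low, high = annual_cost_range(500)  # 500 endpoints is an example size
    print(f"500 endpoints: ${low:,} to ${high:,} per year")
    # prints: 500 endpoints: $66,000 to $90,000 per year
```

The table does not state the billing period or endpoint count behind the $96K median, so normalize any "contact sales" quote to the same period and fleet size before comparing it with this range.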

Who Should Choose What

- Choose Arctic Wolf if you’re starting from scratch with zero existing security investments and prefer single-vendor simplicity.

- Choose CrowdStrike if you’re all-in on the Falcon ecosystem.

- Choose UnderDefense if you need deployment flexibility, want to preserve existing stack investments, and expect analysts who verify alerts directly with users rather than escalating back to your team.

“This is not an extension of our security team as was originally sold.”

— Sr. Cybersecurity Engineer, Manufacturing [$500M–$1B] Arctic Wolf – Gartner Verified Review

UnderDefense detected threats 2 days faster than CrowdStrike OverWatch in documented head-to-head comparisons, while integrating with, not replacing, the customer’s existing CrowdStrike deployment.

Evaluating AI SOC Providers? Start With the Full Comparison

Deployment model flexibility is the hidden differentiator most AI SOC evaluations miss. Once you’ve identified which model fits your regulatory and infrastructure reality, the next step is comparing providers who actually support it, across pricing, response capability, and integration depth.
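As a rough illustration of how a required deployment model narrows the shortlist, the sketch below filters providers by the models they support. The data mirrors the Deployment Options row of the comparison table. Mapping "Cloud-only" to a single `saas` entry is a simplifying assumption, and the `shortlist` helper is hypothetical.

```python
ALL_MODELS = {"saas", "byoc", "on-prem", "air-gapped"}

# Deployment support as listed in the comparison table.
# Assumption: "Cloud-only" is modeled here as SaaS only.
PROVIDERS = {
    "UnderDefense": {"saas", "byoc", "on-prem", "air-gapped"},
    "Arctic Wolf": {"saas"},
    "CrowdStrike Falcon Complete": {"saas"},
}


def shortlist(required):
    """Return providers (sorted by name) that support every required model."""
    wanted = {model.lower() for model in required}
    unknown = wanted - ALL_MODELS
    if unknown:
        raise ValueError(f"unknown deployment model(s): {sorted(unknown)}")
    return sorted(name for name, models in PROVIDERS.items() if wanted <= models)


if __name__ == "__main__":
    print(shortlist({"SaaS"}))
    print(shortlist({"Air-Gapped"}))
```

Running the filter before any feature comparison keeps the evaluation focused on vendors that can actually operate in your environment.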

What Separates Top SOC Providers

- Deployment model support: SaaS, BYOC, on-prem, and air-gapped, or only one?

- Vendor-agnostic integration vs. proprietary stack requirements that force rip-and-replace

- Published response time SLAs and documented outcomes vs. unpublished claims

- Transparent pricing (per-endpoint, published) vs. opaque “contact sales” quotes

- Compliance support included (SOC 2, HIPAA, and ISO 27001) vs. separate add-on products

- Onboarding speed and existing stack preservation vs. 6-month migration projects

The Next Step for Serious Evaluators

Each deployment model changes the provider shortlist. A full side-by-side comparison of SOC providers should cover deployment flexibility, pricing, response times, and compliance support.

This analysis is based on documented deployment architectures, G2 reviews, published pricing, and operational outcomes across 500+ MDR deployments with zero ransomware incidents over 6 years.

The post AI SOC Deployment Models Explained: SaaS, BYOC, On-Premise, and Air-Gapped appeared first on UnderDefense.