Q1: What Is an AI SOC, and Why Is 2026 the Tipping Point for Implementation?

Most organizations in 2026 are still running security through a patchwork of tools that were never designed to work together. You’ve got copilots bolted onto SIEMs, SOAR playbooks automating what you already know, and traditional SOCs where human analysts manually triage thousands of alerts at human speed. Each piece solves a sliver of the problem. None of them own the outcome.

The distinctions matter, so let me define them plainly. A copilot (Microsoft Security Copilot, CrowdStrike Charlotte AI) answers questions about your data, but it doesn’t act. It’s an accelerator, but it doesn’t close the gap between “we see something” and “we contained it.” A SOAR platform (Splunk SOAR, Palo Alto XSOAR) automates known playbooks, but fails silently on novel threats your team hasn’t documented yet. A traditional SOC relies on human speed to process machine-volume alerts, which is a losing equation when attackers move at AI speed.

⚠️ Why Copilots and SOAR Fall Short

An AI SOC is fundamentally different. It’s an agentic intelligence layer that reasons across your entire security stack, investigates autonomously, and collaborates with human analysts to detect, verify, and contain threats. Traditional MDR providers offer monitoring, but require proprietary stack migration and lengthy onboarding timelines that leave you exposed during the transition. One Sr. Cybersecurity Engineer in manufacturing described the experience bluntly:

“This is not an extension of our security team as was originally sold.”

— Sr. Cybersecurity Engineer, Manufacturing Arctic Wolf – Gartner Verified Review

That’s the gap: monitoring without actionable context, alerts without ownership.

⏰ The 2026 Tipping Point

Gartner predicts 50% of SOCs will deploy AI-based decision support by 2026, and that prediction is now operational reality, not a future forecast. Threat actors have already weaponized agentic AI for reconnaissance, exploit development, and lateral movement, compressing attack timelines from weeks to hours. The barrier to entry for sophisticated attacks is collapsing while attack effectiveness is skyrocketing. Organizations still running human-speed operations are fighting AI with clipboards.

We built UnderDefense MAXI as the architectural response. It’s an agentic AI platform delivering continuous threat detection, enrichment, and automated context gathering across identity, endpoint, cloud, network, and SaaS, deployed as a unified security operations layer on top of any existing SIEM or XDR. No proprietary lock-in. No black boxes. Every investigative step is observable and auditable. The AI collects context at machine speed; your analysts (and ours) make the decisions.

✅ The Practical Difference

The difference between a copilot and an AI SOC is the difference between having an assistant who researches and a partner who investigates, verifies, and contains. As I’ve said many times, you can’t transform your security posture with a slide deck. You need observable workflows, reproducible outcomes, and honest conversations about what AI can and can’t do. That’s exactly what the 2026 security landscape demands.

Q2: What Does a Realistic AI SOC Implementation Timeline Look Like by Organization Size?

The most common question I hear from CISOs evaluating AI SOC platforms is straightforward: “How long will this actually take?” The honest answer depends on three variables: your tool stack complexity, your team’s bandwidth, and whether your vendor requires stack replacement or works with what you already have.

The Universal Five-Phase Framework

Regardless of organization size, every AI SOC implementation follows the same core phases:

| Phase | Description | Key Deliverables |
|---|---|---|
| 1. Readiness & Scoping | Define scope, audit tools, baseline MTTD/MTTR | Tool inventory, success criteria, executive alignment |
| 2. Integration & Detection Tuning | Connect data sources, build custom detections, tune false positives | API integrations live, detection rules deployed |
| 3. Pilot / Shadow Mode | Run AI SOC in parallel, validate accuracy | Side-by-side accuracy report, analyst confidence assessment |
| 4. Full Production Rollout | Cutover to 24/7 live coverage | Production go-live, SLA baselines established |
| 5. Continuous Optimization | Detection engineering cadence, quarterly reviews | Monthly rule reviews, detection gap analysis |

SMB Timeline: 2–4 Weeks (<500 Employees)

Smaller organizations benefit from simpler tool stacks (typically 1–2 SIEM/EDR tools), fewer custom detection rules, and faster stakeholder alignment. Phase 1 takes 2–3 days. Phase 2 runs 1–1.5 weeks. Phase 3 is 3–5 days of parallel operation. Phase 4 is a 1–2 day cutover. The key advantage is a smaller attack surface to map and fewer decision-makers to coordinate.

Mid-Market Timeline: 4–6 Weeks (500–5,000 Employees)

This is where complexity scales meaningfully. Expect 3–5 security tools requiring integration, custom detection engineering for your specific threat model, and compliance mapping for SOC 2, ISO 27001, or NIS2. Phase 1 takes a full week. Phase 2 runs 2–3 weeks as detection tuning requires iteration across multiple data sources. Phase 3 is 1–2 weeks of shadow mode. Phase 4 is 2–3 days. Budget additional time for stakeholder alignment across IT, security, and compliance teams.

⏰ Enterprise Timeline: 6–10 Weeks (5,000+ Employees)

Enterprise deployments involve multi-SIEM environments (Splunk + Sentinel is common), global deployment considerations, change management across multiple SOC shifts, and regulatory requirements across jurisdictions. Phase 1 takes 1–2 weeks. Phase 2 runs 3–4 weeks. Phase 3 needs 2–3 weeks of parallel validation. Phase 4 is a 1-week phased cutover, often segmented by business unit, geography, or criticality tier.

| Org Size | Total Timeline | Phase 1 | Phase 2 | Phase 3 | Phase 4 |
|---|---|---|---|---|---|
| SMB (<500) | 2–4 weeks | 2–3 days | 1–1.5 weeks | 3–5 days | 1–2 days |
| Mid-Market (500–5K) | 4–6 weeks | 1 week | 2–3 weeks | 1–2 weeks | 2–3 days |
| Enterprise (5K+) | 6–10 weeks | 1–2 weeks | 3–4 weeks | 2–3 weeks | 1 week |
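If you want to sanity-check a vendor’s headline timeline against its phase estimates, the arithmetic is simple enough to script. The sketch below sums the Phase 1 to 4 ranges from the table under two assumptions that are mine, not part of the framework: a week is 5 working days, and phases run strictly back to back. The names `PHASES` and `total_weeks` are illustrative only.

```python
# Phase 1-4 durations from the timeline table, converted to working days.
# Assumptions (not from the source): 1 week = 5 working days, no phase overlap.
DAYS_PER_WEEK = 5

PHASES = {
    "SMB": [(2, 3), (5, 7.5), (3, 5), (1, 2)],
    "Mid-Market": [(5, 5), (10, 15), (5, 10), (2, 3)],
    "Enterprise": [(5, 10), (15, 20), (10, 15), (5, 5)],
}


def total_weeks(phases):
    """Return (min_weeks, max_weeks) for a list of (min_days, max_days) phases."""
    low = sum(lo for lo, _ in phases)
    high = sum(hi for _, hi in phases)
    return low / DAYS_PER_WEEK, high / DAYS_PER_WEEK


for size, phases in PHASES.items():
    low, high = total_weeks(phases)
    print(f"{size}: {low:.1f} to {high:.1f} weeks")
```

Under these assumptions the sums land close to, but not exactly on, the headline totals: mid-market comes out at 4.4 to 6.6 weeks and enterprise at 7.0 to 10.0 weeks. Treat the headline ranges as rounded figures that presumably allow some overlap between phases.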

✅ Why UnderDefense Compresses These Timelines

UnderDefense’s 30-day turnkey deployment compresses these timelines because the vendor-agnostic UnderDefense MAXI platform integrates with your existing stack rather than requiring migration. We don’t ask you to rip and replace your SIEM. We log into your system. Mid-market organizations routinely achieve full production coverage in under 30 days, validated by custom detection tuning and adversary simulation testing (Caldera, Ransomware Monkey) before production cutover. That’s not a marketing claim. It’s a measurable, reproducible outcome.

Q3: What Internal Resources and Readiness Does AI SOC Onboarding Require?

The fastest way to derail an AI SOC deployment is not a technology failure but a resource planning failure. Knowing who needs to be involved, for how many hours, and at which phase is the difference between a 30-day deployment and a 90-day slog.

Internal Resource Requirements

| Role | Weekly Time Commitment | Active Phases | Notes |
|---|---|---|---|
| Executive Sponsor / CISO | 2–3 hrs/week | Phases 1–4 | Decision authority for cross-team access |
| Technical Lead / Integration Engineer | 8–10 hrs/week | Phases 1–3 (drops to 2–3 hrs Phase 4+) | Primary integration contact |
| SOC Manager / Analyst Champion | 5–8 hrs/week | All phases | Change management, analyst adoption |
| IT Operations Contact | As-needed (~3–5 hrs total) | Phases 1–2 | Access provisioning, API credentials |
| Compliance Officer | 2–3 hrs total | Phases 1, 4 | Framework mapping (SOC 2, ISO 27001, HIPAA, NIS2) |

⚠️ With UnderDefense’s concierge model, the Technical Lead’s time drops to 5–8 hrs/week because 80%+ of integration work is handled by our onboarding team.

📝 AI SOC Implementation Readiness Checklist

Score yourself honestly. This is not a sales qualification exercise but an operational planning tool.

- ☐ Documented inventory of all security tools and data sources

- ☐ Current MTTD/MTTR baselines measured

- ☐ Executive sponsor identified and committed

- ☐ Technical Lead with 5–10 hrs/week bandwidth during onboarding

- ☐ API access and credentials available for existing tools

- ☐ Compliance framework requirements mapped (SOC 2, ISO 27001, HIPAA, NIS2)

- ☐ Analyst champion designated for change management

- ☐ Current tools support API-based integration/orchestration

- ☐ Incident response playbooks documented

- ☐ Budget approval secured for AI SOC investment

⭐ Score Interpretation

8–10 ✅ = Ready for immediate deployment. Start vendor evaluation now. Your organization has the documentation, executive buy-in, and technical prerequisites to move directly into Phase 1.

5–7 ✅ = Ready with 1–2 weeks of prep work. Most gaps here are documentation tasks, including tool inventories, baseline measurements, or compliance mapping that a concierge onboarding team can accelerate.

0–4 ⚠️ = Foundational gaps need addressing first. Focus on executive sponsorship and tool inventory before engaging any vendor. Without these, even the best AI SOC deployment will stall at Phase 1.
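The checklist and score bands above are easy to turn into a repeatable self-assessment. This is a minimal sketch, not a tool we ship: the item labels are shortened from the checklist, and `score_checklist` and `readiness_band` are hypothetical names.

```python
# The ten checklist items, shortened. One point per item answered True.
CHECKLIST = [
    "tool and data source inventory",
    "MTTD/MTTR baselines measured",
    "executive sponsor committed",
    "technical lead bandwidth",
    "API access and credentials",
    "compliance requirements mapped",
    "analyst champion designated",
    "tools support API integration",
    "IR playbooks documented",
    "budget approved",
]


def score_checklist(answers: dict) -> int:
    """Count checklist items marked True; missing items count as False."""
    return sum(1 for item in CHECKLIST if answers.get(item, False))


def readiness_band(score: int) -> str:
    """Map a 0-10 score to the bands from the score interpretation."""
    if not 0 <= score <= len(CHECKLIST):
        raise ValueError(f"score must be between 0 and {len(CHECKLIST)}")
    if score >= 8:
        return "ready for immediate deployment"
    if score >= 5:
        return "ready with 1-2 weeks of prep work"
    return "foundational gaps need addressing first"


answers = {item: True for item in CHECKLIST[:6]}
print(readiness_band(score_checklist(answers)))
```

Re-running the same assessment after each prep task gives you a simple way to show progress toward Phase 1.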

How UnderDefense Closes Readiness Gaps

Our concierge onboarding team actively helps close these gaps as part of the 30-day deployment, including tool audits, baseline measurement, detection rule documentation, and compliance mapping. Most organizations scoring 5+ on this checklist achieve full production deployment within 30 days. Scored below 5? We conduct a complimentary readiness assessment to build a pre-deployment action plan. The goal is straightforward: don’t sell you a service you’re not ready to use, and get you ready fast if you’re close.

Q4: What Are the Best Practices, and Worst Mistakes, in Phased AI SOC Rollout?

After working with organizations of every size, from 50-person startups to enterprises with 35,000+ employees, I’ve seen the same patterns repeat. The implementations that succeed follow a disciplined phase-gate methodology. The ones that stall usually hit the same avoidable anti-patterns.

Phase 1: Readiness & Scoping

✅ Best Practice: Define measurable success criteria upfront: target MTTD/MTTR reduction, alert noise reduction percentage, and coverage scope. Conduct a tool audit. Establish baseline metrics before making any changes. You can’t prove value if you don’t know where you started.

❌ Anti-Pattern: “Boiling the ocean.” Trying to integrate every tool and cover every use case from day one. Fix: start with identity + endpoint detection (highest signal-to-noise), then expand to cloud and network in Phases 2–3.
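Baselines are easier to defend when they are computed from incident timestamps rather than estimated. A minimal sketch, assuming you can export three timestamps per incident from your ticketing system or SIEM. The `Incident` fields and the `baseline` function are hypothetical, and MTTR is taken here as detection-to-resolution; definitions vary between teams, so pick one and keep it fixed.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class Incident:
    occurred: datetime  # when the malicious activity started
    detected: datetime  # when the first alert or report was raised
    resolved: datetime  # when containment or remediation completed


def baseline(incidents):
    """Return (MTTD, MTTR) as timedeltas averaged over the given incidents."""
    mttd = mean((i.detected - i.occurred).total_seconds() for i in incidents)
    mttr = mean((i.resolved - i.detected).total_seconds() for i in incidents)
    return timedelta(seconds=mttd), timedelta(seconds=mttr)


start = datetime(2026, 1, 5, 9, 0)
history = [
    Incident(start, start + timedelta(hours=2), start + timedelta(hours=5)),
    Incident(start, start + timedelta(hours=4), start + timedelta(hours=5)),
]
mttd, mttr = baseline(history)
print(f"MTTD {mttd}, MTTR {mttr}")
```

Capture these numbers before any integration work starts, then recompute them with the same definitions after go-live.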

Phase 2: Integration & Detection Tuning

✅ Best Practice: Prioritize the highest-value data sources. Build custom detection rules based on your org-specific threat model, not generic rulesets. Validate with adversary simulation mapped to the MITRE ATT&CK framework.

❌ Anti-Pattern: Skipping baselines. Deploying without measuring current MTTD, MTTR, and false positive rates means you can’t demonstrate ROI to your CFO or board. This kills budget renewals faster than any breach.

❌ Anti-Pattern: Choosing vendors that require stack replacement. Proprietary platforms that force SIEM migration extend timelines by 3–6 months and destroy your custom correlation rules. One CISO described the long-term reality plainly:

“Started out well but over the years the service has consistently not met expectations. The issues that we have experienced has greatly outweighed the benefits.”

— CISO, Manufacturing Arctic Wolf – Gartner Verified Review

Phase 3: Pilot & Shadow Mode

✅ Best Practice: Run the AI SOC in parallel with existing processes for 1–2 weeks. Measure investigation accuracy, triage speed, and analyst confidence side-by-side. Use the pilot to train internal analysts on new workflows, not just validate the technology.
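The side-by-side measurement can be as simple as comparing verdicts on the alerts both sides handled. The sketch below is one way to do it, assuming each side produces a verdict string per alert ID; `compare_verdicts` and the verdict labels are hypothetical.

```python
def compare_verdicts(ai: dict, analyst: dict):
    """Compare AI and analyst verdicts on the alerts both sides triaged.

    Returns (agreement_rate, alert_ids_with_different_verdicts).
    """
    shared = sorted(ai.keys() & analyst.keys())
    if not shared:
        raise ValueError("no alerts were triaged by both the AI and an analyst")
    disagreements = [alert_id for alert_id in shared if ai[alert_id] != analyst[alert_id]]
    agreement = 1 - len(disagreements) / len(shared)
    return agreement, disagreements


ai_verdicts = {"a1": "benign", "a2": "malicious", "a3": "benign", "a4": "benign"}
analyst_verdicts = {"a1": "benign", "a2": "malicious", "a3": "malicious", "a4": "benign"}

rate, to_review = compare_verdicts(ai_verdicts, analyst_verdicts)
print(f"agreement {rate:.0%}, review: {to_review}")
```

The disagreement list is the useful output: each entry is either an AI miss to tune or an analyst miss to learn from, and reviewing them together is where analyst confidence gets built.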

❌ Anti-Pattern: No executive sponsor. AI SOC implementations without CISO/CTO-level sponsorship stall when cross-team access is needed. Integration requires firewall rules, API credentials, and identity provider access, all needing executive authority.

❌ Anti-Pattern: Skipping adversary simulation. Going live without testing detection rules against simulated attacks (Caldera, Ransomware Monkey, purple team exercises) is like shipping code without QA.

Phase 4 & 5: Production & Optimization

✅ Best Practice: Establish clear cutover criteria: <5% false positive rate, >95% investigation accuracy, all critical data sources connected. Implement a continuous detection engineering cadence with monthly rule reviews and quarterly optimization sprints. Build feedback loops between AI findings and human analyst insights.

❌ Anti-Pattern: Treating AI SOC as “set and forget.” Without ongoing detection engineering, effectiveness degrades within 90 days as attackers adapt and your environment evolves. Security is people, process, and tools, not a one-time purchase.
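The cutover criteria above work best as an explicit gate rather than a judgment call. A minimal sketch, assuming pilot metrics are already collected; the `PilotMetrics` fields and `cutover_blockers` are hypothetical names, and the thresholds are the ones stated in the best practice.

```python
from dataclasses import dataclass


@dataclass
class PilotMetrics:
    false_positive_rate: float      # fraction, e.g. 0.03 for 3%
    investigation_accuracy: float   # fraction, e.g. 0.97 for 97%
    critical_sources_connected: int
    critical_sources_total: int


def cutover_blockers(m: PilotMetrics) -> list:
    """Return the unmet cutover criteria; an empty list means go-live is cleared."""
    blockers = []
    if m.false_positive_rate >= 0.05:
        blockers.append("false positive rate is not below 5%")
    if m.investigation_accuracy <= 0.95:
        blockers.append("investigation accuracy is not above 95%")
    if m.critical_sources_connected < m.critical_sources_total:
        blockers.append("not all critical data sources are connected")
    return blockers


pilot = PilotMetrics(0.03, 0.97, 5, 6)
print(cutover_blockers(pilot) or "cleared for cutover")
```

Re-running the same gate during the monthly rule reviews is one way to catch the gradual degradation described in the anti-pattern.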

✅ How UnderDefense Prevents These Failures

Our onboarding methodology prevents every anti-pattern listed above. Dedicated concierge teams handle integration complexity. Security hardening (Active Directory, Azure, O365 fine-tuning) is included in onboarding. Adversary simulation validates 100% of customer-required use cases before production. Continuous detection engineering is built into the ongoing service, not sold as an add-on.

Q5: When Should You Expect First Value From Your AI SOC?

Here’s the question behind the question: “How long before I can walk into a board meeting and prove this investment was worth it?” If you’ve spent any time in security leadership, you’ve been burned by this before: a 6-month deployment that delivers dashboards, status reports, and a vague promise that “value is coming.” Meanwhile, every day without AI-augmented detection is a day of compounding risk: missed threats, analyst burnout, and accumulating technical debt that nobody wants to quantify.

The honest answer is that time-to-value depends on the architecture of what you’re buying. And most providers get this wrong.

⏰ Why Most Providers Delay Value

Traditional MDR and AI SOC providers routinely take 3–6 months before delivering meaningful signal. Arctic Wolf requires a proprietary stack migration before any detection begins, meaning zero detection value during the migration window. That’s not onboarding. It’s a coverage gap you’re paying for.

“We received little value from ArcticWolf. The product offered little visibility when we were using it… Anything you want to look at or changes you need to make in the product must go through their engineering team.”

— Matt C., Manager, Cybersecurity Services Arctic Wolf – G2 Verified Review

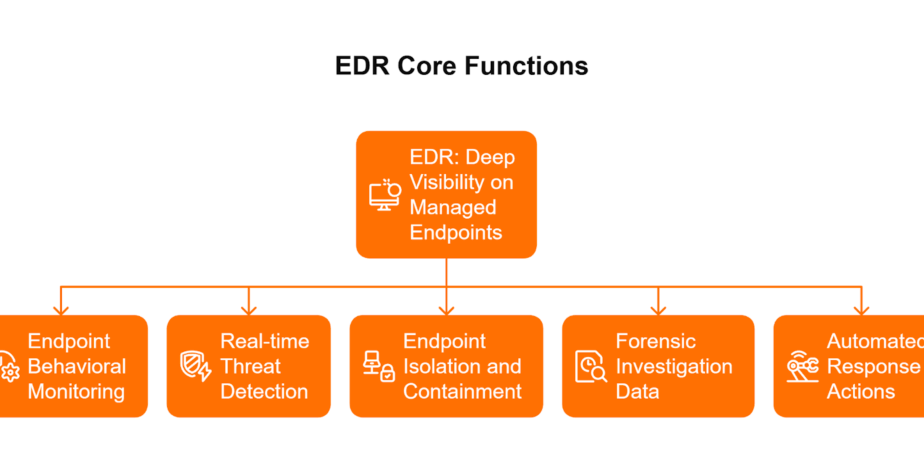

CrowdStrike Falcon Complete delivers endpoint value fast, but visibility gaps on network, SaaS, and identity layers persist. You get EDR coverage quickly; you don’t get organizational context. Endpoint-only MDR solves one problem while leaving the architecture fragmented.

✅ The Right Time-to-Value Framework

Rather than vague promises, here’s a milestone-based expectation you can actually hold your provider accountable to:

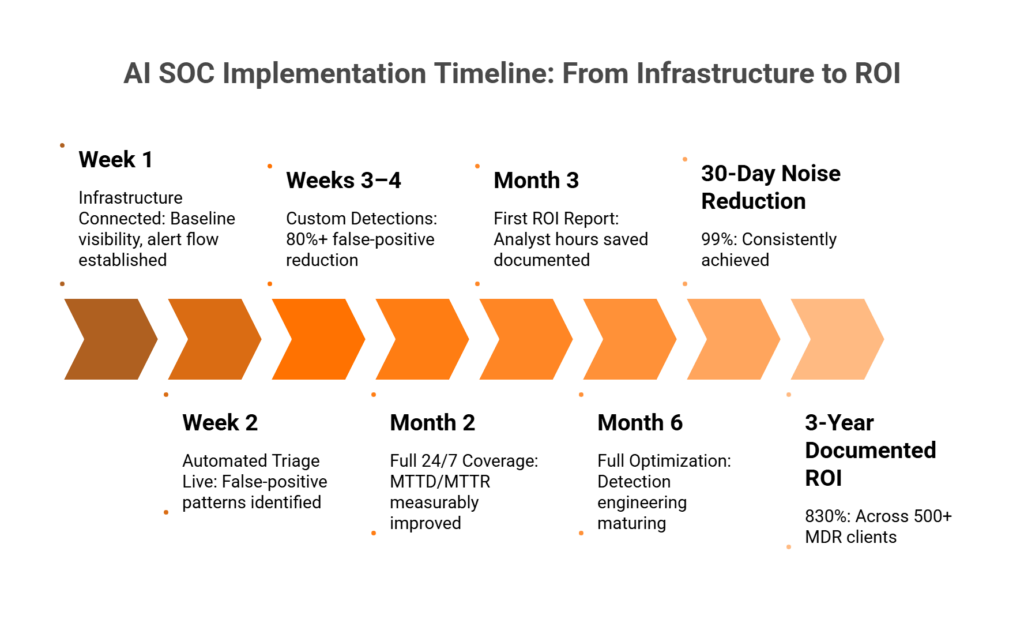

- Week 1: Infrastructure connected, baseline visibility achieved, initial alert flow established

- Week 2: First automated triage and enrichment operational, false positive patterns identified

- Weeks 3–4: Custom detections live, false positives reduced by 80%+, analyst workload visibly decreasing

- Month 2: Full 24/7 coverage operational, MTTD/MTTR measurably improved vs. baseline

- Month 3: First ROI report with documented analyst hours saved and avoided incidents quantified

- Month 6: Full optimization, ROI trajectory confirmed, detection engineering maturing

If your provider can’t map their onboarding to something like this, they’re selling you a timeline, not a commitment.

💰 UnderDefense’s Documented Value Timeline

We built our onboarding around observable milestones, not abstract phases. From day one of production, UnderDefense operates with a 2-minute alert-to-triage SLA and 15-minute escalation for critical incidents. Within the first 30 days, we consistently achieve 99% noise reduction across client environments, because the concierge team isn’t just connecting tools but tuning detections to your specific context.
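SLAs like these are only useful if you can verify them from your own alert data. The sketch below checks one alert against the two targets quoted above. It assumes both clocks start when the alert is created, which is my reading rather than a contractual definition, and `sla_breaches` is a hypothetical helper.

```python
from datetime import datetime, timedelta

TRIAGE_SLA = timedelta(minutes=2)       # alert-to-triage target
ESCALATION_SLA = timedelta(minutes=15)  # critical-incident escalation target


def sla_breaches(created, triaged, escalated=None):
    """Return the SLA targets this alert missed.

    Assumption: both targets are measured from alert creation time.
    Pass escalated=None for alerts that never needed escalation.
    """
    breaches = []
    if triaged - created > TRIAGE_SLA:
        breaches.append("triage")
    if escalated is not None and escalated - created > ESCALATION_SLA:
        breaches.append("escalation")
    return breaches


created = datetime(2026, 3, 3, 2, 47)
print(sla_breaches(created, created + timedelta(seconds=90), created + timedelta(minutes=20)))
```

Run over a month of alerts, the share of records with an empty result is the SLA attainment figure to ask your provider to report.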

“The speed of onboarding was a delightful surprise. In times where integrating new systems can take weeks, UnderDefense had us up and running in no time. When alerts pop up, there’s no panic. With their guidance, we know precisely what steps to take next.”

— Valeriia D., Marketing Specialist UnderDefense – G2 Verified Review

“Building our cybersecurity from scratch felt like a daunting challenge. Enter UnderDefense MAXI and its 30-day impact report. For a marketing agency taking baby steps in security, these reports were our guiding star, clear, concise, and oh-so-relevant.”

— Val R., Small-Business UnderDefense – G2 Verified Review

The broader numbers tell the same story: 830% ROI documented over 3 years across 500+ MDR clients, 96% MITRE ATT&CK coverage from the start, and zero ransomware cases across all MDR customers for six consecutive years. A 30-day onboarding that’s both fast and thorough means you’re not waiting months to be protected. You’re operational in weeks.

Q6: How Does AI SOC Integrate Into Your Running SOC Without Rebuilding It?

Picture this: your security team has spent 18 months tuning Splunk detection rules, deploying CrowdStrike across 3,000 endpoints, and configuring Okta identity policies. Everything is finally stable. Then a vendor walks in and says: “Migrate everything to our platform.”

You know exactly what that means: re-implementing every detection rule from scratch, retraining every analyst on new interfaces, running blind during a 3–6 month transition window, and explaining to your board why you’re spending more money to temporarily have less coverage.

This scenario isn’t hypothetical. It’s the default experience with most MDR and AI SOC providers.

⚠️ Why This Problem Exists

Most AI SOC and MDR providers, including Arctic Wolf, Deepwatch, and ReliaQuest, are built on proprietary technology stacks. Their business model requires you to adopt their SIEM, their data lake, their detection engine. When they say “integration,” they mean replacement, not augmentation.

“Arctic Wolf provides solid detection and response capabilities, but overly relies on the client’s team for remediation, which really hurts the value of the service.”

— VP of Technology, Services Arctic Wolf – Gartner Verified Review

“Started out well but over the years the service has consistently not met expectations. Log collectors show working, however when asked to provide logs for an investigation no logs could be provided.”

— CISO, Manufacturing Arctic Wolf – Gartner Verified Review

💸 The Hidden Costs of Rebuilding

The financial and operational toll of stack migration is real and quantifiable:

- 3–6 months of degraded coverage during migration

- $200K–$500K in professional services for enterprise stack migration

- 40%+ of existing detection rules lost or degraded during re-implementation

- 30–50% drop in analyst productivity while learning new platforms

- Compliance audit gaps during the transition period

The irony is hard to miss: you’re paying more to be less secure during the exact period you’re trying to improve.

✅ UnderDefense’s Overlay Architecture

We designed UnderDefense MAXI to layer on top of what you already have, not replace it. UnderDefense integrates with 250+ existing security tools via native API connections. During the 30-day onboarding, the concierge team audits your current toolset, preserves and enhances existing detection rules, adds custom detections on top, and enables containment actions that execute through your deployed tools: blocking compromised accounts in your AD/Azure, isolating hosts via your CrowdStrike or SentinelOne, and locking cloud accounts via your IAM.

Your tools stay. Your rules stay. UnderDefense MAXI adds the intelligence and response layer on top.
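Conceptually, an overlay routes each containment decision to the tool you already run instead of to a replacement platform. The sketch below illustrates that routing pattern only. It is not UnderDefense MAXI code: every function is a hypothetical stand-in that returns a string where a real adapter would call your directory, EDR, or cloud IAM API.

```python
from typing import Callable, Dict


# Hypothetical adapters. Each stands in for an API call to a tool you already own.
def disable_directory_account(target: str) -> str:
    return f"directory: disabled {target}"


def isolate_host(target: str) -> str:
    return f"edr: isolated {target}"


def lock_cloud_account(target: str) -> str:
    return f"iam: locked {target}"


# Finding type -> the existing tool that performs the containment.
CONTAINMENT_ROUTES: Dict[str, Callable[[str], str]] = {
    "compromised_account": disable_directory_account,
    "compromised_host": isolate_host,
    "compromised_cloud_account": lock_cloud_account,
}


def contain(finding_type: str, target: str) -> str:
    """Dispatch a containment action to the adapter registered for this finding."""
    try:
        action = CONTAINMENT_ROUTES[finding_type]
    except KeyError:
        raise ValueError(f"no containment route for {finding_type!r}") from None
    return action(target)


print(contain("compromised_host", "LAPTOP-042"))
```

The design point is that swapping CrowdStrike for SentinelOne changes one adapter, not the detection logic or the analyst workflow.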

“UnderDefense’s ease of integration into our existing tech stack mirrors the positive aspects, enhancing our security without disrupting workflow. Its compatibility and the streamlined process of connecting with our software tools have significantly bolstered our security framework.”

— CEO, Mid-Market UnderDefense – G2 Verified Review

“The platform itself is straightforward. It pulls in data from all our existing security tools, so we didn’t have to rip and replace anything. Their SOC team is responsive and knows their stuff.”

— Verified User in Marketing and Advertising UnderDefense – G2 Verified Review

From 6-month proprietary migration to 30-day overlay deployment: that’s the difference between rebuilding your SOC and upgrading it.

Q7: What Does an Analyst’s Day Look Like Before vs. After AI SOC?

It’s 2:47 AM on a Tuesday. The 14th “critical alert” this week buzzes your phone. You log into the SIEM, spend 45 minutes investigating, cross-referencing CrowdStrike with Okta logs manually, messaging the user’s manager on Slack at 3 AM with no response. It’s a false positive, a developer running a legitimate script. You’ve been awake for an hour. You still don’t know if you missed something real during the 13 other alerts you triaged on autopilot this week.

If this sounds familiar, you’re not alone. And this is the operational reality that no vendor whitepaper captures.

❌ Why This Experience Persists

Your EDR sees endpoint behavior and your identity tool sees user context, but neither reasons across both. You become the manual correlation engine. The data backs this up: burnout rates exceed 65% among SOC analysts, and 56% of security professionals feel exhausted by incoming alerts on a daily or weekly basis. Organizations face an average of over 2,000 attacks per day, with more than 80% of user-reported alerts turning out to be false positives.

“Our IT team was overwhelmed by the sheer volume of security alerts and doesn’t have the resources for 24/7 monitoring.”

— Andriy H., Co-Founder and CTO UnderDefense – G2 Verified Review

This isn’t a tooling problem but an architectural one, and no amount of dashboard upgrades will fix it.

✅ The Transformed Day

Now picture this instead. You arrive at 8:30 AM. The AI SOC processed 847 alerts overnight. 844 were auto-triaged, enriched, and closed, including the developer’s script, which was verified directly with the developer via Slack at 2:48 AM by the AI SOC’s ChatOps system. Two were escalated as confirmed incidents with full investigation reports. One is critical: compromised credential detected, user verified as not the actor, credential revoked, and endpoint isolated, all before your first coffee.

Your morning: review 3 incident reports, not triage 847 alerts.

“Before MAXI, we were slightly overwhelmed with alerts and often unsure of how to prioritize or respond to them. Now, not only do we get alerts, but we also get clear guidance on how to handle them. False positives have become a rarity.”

— Valeriia D., Marketing Specialist UnderDefense – G2 Verified Review

⭐ Making the Transition Work

Most AI SOC deployments stall on change management, not technology. Here’s the framework that works:

- Start with an analyst champion, one senior analyst who pilots the system and becomes the internal advocate

- Run shadow mode for 1–2 weeks so analysts see AI investigations alongside their own, building trust through comparison

- Celebrate early wins by sharing the first “AI caught what we would have missed” example broadly

- Redefine the analyst role from “alert triage machine” to “threat hunter and strategic advisor”

- Insist on transparent AI by using platforms where every AI decision is observable and auditable, not a black box

UnderDefense’s ChatOps user verification, reaching out to affected users directly via Slack, Teams, email, and SMS, is the feature that converts skeptical analysts fastest. When they see the AI SOC ask Jane directly “Did you authorize this OAuth app at 2:41 AM?” and get a verified answer in minutes instead of hours, trust shifts from theoretical to experiential.

“They handle a lot of the alert monitoring, which saves time. And if a real problem does happen, they react quickly, which has been a lifesaver.”

— Verified User in Computer Software UnderDefense – G2 Verified Review

That’s why our concierge team doesn’t just deploy technology but partners with your analysts through the transition.

Q8: What Governance and Human-in-the-Loop Controls Should Your AI SOC Include?

AI in the SOC introduces a category of operational risk that most security leaders haven’t had to govern before: automated decisions that directly affect security posture. Without proper governance, three things go wrong fast. AI takes containment actions without human validation on high-impact assets. Auditors ask “who authorized this automated response?” and get no clear answer. Analyst trust erodes because AI reasoning is opaque.

The governance question isn’t theoretical. It’s the difference between an AI SOC that scales your team and one that creates a new category of audit findings.

✅ 5 Non-Negotiable AI SOC Governance Controls

Every AI SOC deployment should enforce these principles from day one:

| # | Governance Control | What It Means in Practice |
|---|---|---|
| 1 | Human-in-the-Loop for Critical Actions | Automated triage and enrichment are fine; automated containment of production systems requires human approval above a defined severity threshold |
| 2 | Full Audit Trail | Every AI investigation step, every enrichment query, and every decision must be logged and retrievable |
| 3 | Explainable Reasoning | Analysts must see why the AI reached its conclusion, not just the conclusion itself |
| 4 | Role-Based Access Control (RBAC) | Define who can configure AI behavior, approve containment actions, and override automated decisions |
| 5 | Continuous Validation | AI detection models must be tested against adversary simulation regularly, not trusted blindly |

If your AI SOC vendor can’t demonstrate all five of these in a live environment, that’s a red flag. “Trust our AI” is not a governance model.
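Controls 1 and 2 are concrete enough to sketch. The example below gates production containment behind human approval above a severity threshold and writes every decision to an audit log. It is an illustration under stated assumptions, not a description of any vendor’s implementation: the 1 to 10 severity scale, the threshold value, and the names `AuditLog` and `route_action` are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical threshold on a 1-10 severity scale; set it per your own policy.
APPROVAL_THRESHOLD = 7


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, action: str, target: str, decision: str, reason: str) -> None:
        """Append one retrievable record per decision (control 2)."""
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "target": target,
            "decision": decision,
            "reason": reason,
        })


def route_action(action: str, target: str, severity: int,
                 is_production: bool, log: AuditLog) -> str:
    """Decide whether an action runs automatically or waits for a human (control 1)."""
    if action == "contain" and is_production and severity > APPROVAL_THRESHOLD:
        decision = "pending_human_approval"
        reason = "production containment above severity threshold"
    else:
        decision = "auto_execute"
        reason = "outside the human approval gate"
    log.record(action, target, decision, reason)
    return decision


log = AuditLog()
print(route_action("enrich", "alert-1", 9, True, log))
print(route_action("contain", "db-prod-01", 9, True, log))
```

Because the reason is stored next to the decision, the same log answers the auditor’s question of who or what authorized a response.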

📋 Compliance Framework Mapping

These governance controls aren’t just security best practices. They map directly to existing compliance frameworks, enabling dual-purpose value.

| Governance Control | SOC 2 (Trust Services Criteria) | ISO 27001:2013 (Annex A) | HIPAA (Security Rule) | NIS2 (Risk Management) |
|---|---|---|---|---|
| Human-in-the-Loop | CC6.1 – Logical Access | A.9.2 – User Access Mgmt | §164.312(a) – Access Control | Art. 21 – Risk Measures |
| Full Audit Trail | CC7.2 – System Monitoring | A.12.4 – Logging & Monitoring | §164.312(b) – Audit Controls | Art. 21 – Incident Handling |
| Explainable Reasoning | CC4.1 – Monitoring Activities | A.18.2 – Compliance Reviews | §164.308(a)(8) – Evaluation | Art. 21 – Policies on Risk |
| RBAC | CC6.3 – Role-Based Access | A.9.4 – System Access Control | §164.312(a)(2)(i) – Unique User Identification | Art. 21 – Access Control |
| Continuous Validation | CC4.2 – Monitoring Controls | A.14.2 – Security Testing | §164.308(a)(8) – Evaluation | Art. 21 – Testing Measures |

This mapping means every AI SOC governance control you implement generates audit-ready evidence, not just better security.

⭐ UnderDefense’s Observable AI Architecture

Every investigative step taken by UnderDefense MAXI is observable and auditable. Every AI enrichment, every user verification via ChatOps, and every containment action is logged with full reasoning chains. The AI collects context; your analysts (and ours) make the decisions. This isn’t just a design philosophy. It’s how we generate automated compliance evidence for SOC 2, ISO 27001, HIPAA, and PCI-DSS as part of the UnderDefense MAXI platform.

UnderDefense MAXI’s Compliance module, serving as a replacement for tools like Vanta or Drata, provides auto-evidence collection, continuous control validation, and audit-ready reporting built on actual security operations data. Because compliance evidence should come from real security telemetry, not theoretical policies.

“They’ve also made our audit process much less painful. The reports from their platform give us clear evidence of our security controls and incident response capabilities. When auditors or clients ask questions about our security posture, we can pull up exactly what they need to see.”

— Verified User in Marketing and Advertising UnderDefense – G2 Verified Review

“UnderDefense MAXI improves security posture in general. It made it easier for us to make informed security decisions, and helped us to comply with important regulations.”

— Serhii I., CEO UnderDefense – G2 Verified Review

The bottom line: governance isn’t a checkbox exercise. It’s the foundation that makes AI in the SOC trustworthy, for your analysts, your auditors, and your board.

Q9: How Should You Evaluate AI SOC Vendors for Implementation Speed and Quality?

Choosing an AI SOC vendor isn’t just a technology purchase but an operational commitment that shapes your security posture for years. Get it wrong, and you’re looking at 3–6 months of wasted budget, continued breach exposure, and the painful process of ripping out a platform that never delivered on its promises.

⚠️ The Evaluation Trap Most Security Leaders Fall Into

Most security leaders evaluate based on AI capability marketing alone (“most advanced LLM,” “most autonomous agents,” “biggest training dataset”) while ignoring the operational factors that actually determine success. I’ve watched CISOs pick providers because of a slick demo, only to discover six months later that the vendor can’t work with their existing SIEM, takes weeks to escalate a confirmed threat, or hides pricing behind opaque enterprise quotes that balloon after Year 1.

Three questions separate a useful evaluation from a wasted quarter: Can they work with your existing tools? How fast do they go live? And when AI can’t resolve an alert, do they escalate back to you, or do they get the answer themselves?

✅ The 7-Criteria Scoring Framework

Here’s the evaluation framework that actually works. Score each vendor 0–2 on these seven criteria:

- Vendor-Agnostic Integration — Does the vendor work with your existing SIEM, EDR, XDR, and identity tools, or do they force proprietary replacement? If you’re running Splunk, CrowdStrike, and Okta today, can they plug in natively, or do you start from scratch?

- Onboarding Speed — Is it a 30-day turnkey deployment, or a 6-month professional services engagement that requires dedicated internal staff? Every week of delayed deployment is another week of exposure.

- Human Analyst Access — Do you get direct communication with Tier 3–4 analysts, or are you submitting tickets into a queue and waiting hours for a response?

- Response Capability — Can they detect, contain, and remediate threats, or just detect, notify, and escalate the investigation back to your team?

- User Verification (ChatOps) — When a suspicious login triggers at 2 AM, can they verify directly with the affected user via Slack or Teams? Or does that verification task get pushed back to you?

- Pricing Transparency — Is the cost published and predictable per-endpoint, or hidden behind “contact sales” with no public benchmarks?

- Compliance Integration — Is compliance evidence generation included in the service, or is it a separate product at additional cost?

📊 How to Apply This Framework

Score each AI SOC provider 0–2 per criterion. Providers scoring 10+ represent genuine operational partnerships, and they’ll own outcomes alongside your team. Below 7 means you’re buying an alert feed, not managed detection and response.
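The scoring is easy to keep honest in a shared script rather than a slide. A minimal sketch follows. The criterion keys are shortened from the list above, `score_vendor` is a hypothetical name, and because the framework only names the 10+ and below-7 bands, totals of 7 to 9 are reported here as unclassified rather than guessed at.

```python
CRITERIA = [
    "vendor_agnostic_integration",
    "onboarding_speed",
    "human_analyst_access",
    "response_capability",
    "user_verification_chatops",
    "pricing_transparency",
    "compliance_integration",
]


def score_vendor(scores: dict):
    """Validate 0-2 scores for all seven criteria and return (total, band)."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    for criterion in CRITERIA:
        if scores[criterion] not in (0, 1, 2):
            raise ValueError(f"{criterion} must be scored 0, 1, or 2")
    total = sum(scores[c] for c in CRITERIA)
    if total >= 10:
        band = "operational partnership"
    elif total < 7:
        band = "alert feed"
    else:
        band = "middle band (7-9, not classified by the framework)"
    return total, band


example = {c: 1 for c in CRITERIA}
example["response_capability"] = 2
print(score_vendor(example))
```

Have two people score each vendor independently and compare; disagreements on a criterion usually point to a question you still need to ask in the demo.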

Here’s how UnderDefense scores against this framework:

| Criterion | Score | Justification |
|---|---|---|
| Vendor-Agnostic Integration | ✅ 2 | 250+ native integrations, works with your existing stack |
| Onboarding Speed | ✅ 2 | 30-day turnkey deployment with custom detection tuning |
| Human Analyst Access | ✅ 2 | Direct Tier 3–4 concierge analyst communication |
| Response Capability | ✅ 2 | Full containment and remediation, 2-minute alert-to-triage and 15-minute escalation for critical incidents |
| ChatOps User Verification | ✅ 2 | Direct user verification via Slack, Teams, Email, and SMS |
| Pricing Transparency | ✅ 2 | Published $11–15/endpoint/month pricing |
| Compliance Integration | ✅ 2 | Forever-free compliance kits included with MDR |
| Total | 14/14 | |

💡 Speed Without Quality Is Just Fast Failure

Implementation speed matters, but only when paired with detection quality. We detected threats 2 days faster than CrowdStrike OverWatch in documented case studies, and we have recorded zero successful ransomware attacks across 500+ MDR clients over 6 years. That combination of speed and outcome is what you should be scoring for.

“The speed of onboarding was a delightful surprise. In times where integrating new systems can take weeks, UnderDefense had us up and running in no time. Their 24/7 detection and response service is fast and comprehensive.”

— Valeriia D., Marketing Specialist UnderDefense – G2 Verified Review

“UnderDefense’s ease of integration into our existing tech stack mirrors the positive aspects, enhancing our security without disrupting workflow.”

— CEO, Mid-Market UnderDefense – G2 Verified Review

“We received little value from ArcticWolf. The product offered little visibility when we were using it. Anything you want to look at or changes you need to make in the product must go through their engineering team.”

— Matt C., Manager, Cybersecurity Services Arctic Wolf – G2 Verified Review

Q10: Already Evaluating SOC Providers? Compare the Top 12 SOCaaS Platforms

The AI SOC and SOCaaS market in 2026 includes providers with fundamentally different architectures: vendor-locked platforms (Arctic Wolf, Deepwatch), endpoint-focused solutions (CrowdStrike Falcon Complete, Red Canary), AI-first platforms (ReliaQuest, Radiant Security), and vendor-agnostic AI SOC + Human Ally models (UnderDefense). The right choice depends entirely on your existing stack, compliance requirements, and whether you need detection-only or full detection-and-response.

What Separates Top SOCaaS Providers in 2026

- Vendor-agnostic integration vs. proprietary stack lock-in — Does the provider work with your Splunk, CrowdStrike, and SentinelOne, or force you to rip and replace?

- Human analyst access — Direct concierge communication with Tier 3–4 analysts, or ticket-based escalation that takes hours?

- Published response time SLAs with documented outcomes — Can they show you actual case studies, not just marketing claims?

- Transparent per-endpoint pricing vs. opaque enterprise quotes — Published rates you can budget around, or “contact sales” black boxes?

- Compliance evidence generation included vs. separate add-on — Is SOC 2, HIPAA, and ISO 27001 evidence bundled, or an upsell?

- Onboarding speed — 30-day turnkey deployment vs. 3–6 month professional services engagement?

Which Provider Fits Which Scenario

Each provider excels in different scenarios. Arctic Wolf suits organizations starting from scratch that want single-vendor simplicity, but you’ll give up your existing tool investments and face opaque pricing (median $96K/year). CrowdStrike Falcon Complete fits Falcon-native environments where endpoint detection is the priority, but in documented case studies CrowdStrike OverWatch detected threats 2 days later than a vendor-agnostic alternative. Red Canary delivers solid playbook automation for endpoint-heavy environments, but struggles with cloud and identity coverage beyond CrowdStrike integrations.

UnderDefense is purpose-built for organizations that want to protect existing tool investments while adding unified AI detection + human response. With 250+ integrations, published $11–15/endpoint/month pricing, and analysts who verify suspicious activity directly with affected users, it’s the model for teams that need outcomes, not just alerts.

For a complete head-to-head comparison with pricing, response times, and integration capabilities across all 12 providers, see the full breakdown below.

This analysis is based on documented response times, G2 reviews, published pricing, and operational outcomes across 500+ MDR deployments.

Q11: Frequently Asked Questions About AI SOC Implementation

How long does AI SOC implementation typically take?

Most AI SOC implementations take 2–10 weeks depending on organization size and complexity. SMBs (under 200 endpoints) typically go live in 2–4 weeks. Mid-market organizations (200–1,000 endpoints) need 4–6 weeks. Enterprise deployments (1,000+ endpoints) can stretch to 6–10 weeks due to custom integrations and compliance requirements.

⏰ The biggest variable isn’t technology but internal readiness. Organizations with documented tool inventories and a designated technical lead deploy 40% faster.

- Integration complexity scales with the number of unique data sources, as connecting 3 tools is fundamentally different from connecting 15.

- Vendors requiring proprietary SIEM replacement add 8–12 weeks to any estimate because you’re rebuilding detection logic from scratch.
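
The ranges above are easy to turn into a planning helper. Treat the following as a rough sketch under stated assumptions: `estimate_weeks` is a hypothetical name, the boundary at exactly 1,000 endpoints is assigned to mid-market (the tiers above overlap there), and the 8–12 week SIEM-replacement penalty is simply added to both ends of the range.

```python
def estimate_weeks(endpoints: int, replaces_siem: bool = False) -> tuple[int, int]:
    """Return a (low, high) deployment estimate in weeks for a given endpoint count."""
    if endpoints < 0:
        raise ValueError("endpoints must be non-negative")

    if endpoints < 200:        # SMB
        low, high = 2, 4
    elif endpoints <= 1000:    # mid-market (assumption: 1,000 counts as mid-market)
        low, high = 4, 6
    else:                      # enterprise
        low, high = 6, 10

    if replaces_siem:
        # Proprietary SIEM replacement adds 8-12 weeks to any estimate.
        low, high = low + 8, high + 12
    return low, high


print(estimate_weeks(500))                      # mid-market, existing stack kept
print(estimate_weeks(500, replaces_siem=True))  # same size, SIEM replaced
```

The estimate ignores internal readiness, which the article identifies as the biggest variable, so use it as a starting range rather than a commitment.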

UnderDefense’s turnkey approach compresses mid-market deployments to 30 days. We deploy into your existing stack, including Splunk, CrowdStrike, SentinelOne, Microsoft Defender, and Okta, without forcing replacement, which eliminates the single biggest deployment bottleneck.

Can AI SOC work with my existing SIEM and EDR?

Yes, if the vendor is genuinely vendor-agnostic. Before signing, confirm native support for your specific SIEM (Splunk, Elastic, Microsoft Sentinel), EDR (CrowdStrike, SentinelOne, Defender for Endpoint), and identity provider (Okta, Azure AD, Duo).

⚠️ Watch for vendors who claim “integration” but actually require you to forward logs to their proprietary platform. That’s not integration but data migration.

- Ask whether your custom correlation rules, business logic, and detection tuning carry over or need rebuilding.

- Verify that you retain ownership of your security data and business logic if you ever switch providers.
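
One quick way to pressure-test an "integration" claim is to diff your tool inventory against the vendor’s published list of native integrations. The snippet below is an illustrative sketch only: `integration_gaps` is a hypothetical helper, and the vendor list is made-up sample data, not any provider’s actual catalog.

```python
def integration_gaps(your_stack: list[str], vendor_native: list[str]) -> list[str]:
    """Return the tools in your stack that the vendor does not list as native integrations."""
    supported = {name.strip().lower() for name in vendor_native}
    return sorted(tool for tool in your_stack if tool.strip().lower() not in supported)


stack = ["Splunk", "CrowdStrike", "Okta"]
vendor_list = ["splunk", "crowdstrike", "sentinelone"]  # hypothetical sample data

print(integration_gaps(stack, vendor_list))  # ['Okta']
```

Any tool that shows up in the gap list is a question for the vendor before signing: is it on the roadmap, supported through log forwarding only, or not covered at all?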

UnderDefense MAXI integrates with 250+ tools natively. Our analysts log into your systems where your data lives, a principle that protects your investment and eliminates vendor lock-in.

What is the ROI of AI in the SOC?

Organizations deploying AI SOC platforms report 830% ROI over 3 years, driven by measurable efficiency gains across multiple dimensions. Gartner predicts that by 2026, 50% of SOCs will deploy AI-based decision support, and the early movers are already seeing the returns.

- 💰 Analyst hours saved: 2,000–4,000 hours/month on investigation automation alone

- Faster threat containment: 2-minute alert-to-triage vs. 30–60 minutes of manual investigation per alert

- Avoided breach costs: The average security incident costs $4.35M when factoring in downtime, data loss, and remediation

- Reduced hiring needs: AI SOC augments existing analysts instead of requiring a 3-shift, 8–12 person team
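
To sanity-check an ROI figure against your own numbers, the standard formula is (benefit − cost) ÷ cost. The sketch below assumes that definition; the article does not state how the 830% figure was calculated, and the dollar amounts and hourly rate here are hypothetical placeholders you should replace with your own.

```python
def roi_percent(total_benefit: float, total_cost: float) -> float:
    """Standard ROI: net benefit as a percentage of cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) * 100 / total_cost


def hours_saved_value(hours_per_month: float, hourly_rate: float, months: int = 36) -> float:
    """Dollar value of analyst hours saved over the period (default: 3 years)."""
    return hours_per_month * hourly_rate * months


# Hypothetical inputs: $100K total spend over 3 years, $930K total benefit.
print(roi_percent(930_000, 100_000))  # 830.0

# Hypothetical $50/hour loaded analyst cost at the low end of 2,000 hours/month.
print(hours_saved_value(2_000, 50.0))  # 3600000.0
```

Running both functions with your own spend and staffing costs shows how much of any claimed return depends on hours saved versus avoided breach costs.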

Do I need to hire new staff for AI SOC?

No. AI SOC augments your existing analysts; it doesn’t replace them or require new headcount. The internal resource requirement is minimal: one Technical Lead at 5–10 hours/week during onboarding, dropping to 2–3 hours/week in steady state for reviewing weekly reports and participating in monthly tuning sessions.

✅ AI handles the investigation grunt work, including context collection, log enrichment, and multi-system correlation.

✅ Your analysts focus on decision-making, threat hunting, and strategic security improvements.

✅ The provider’s concierge analysts handle 24/7 monitoring, so you don’t need to staff night shifts.

What’s the difference between AI SOC and traditional SOC?

A traditional SOC relies on human analysts manually triaging alerts at human speed, typically 30–60 minutes per alert across multiple tools. An AI SOC uses agentic AI to investigate, enrich, and verify threats at machine speed, then presents confirmed incidents to human analysts for decision-making.

- The AI SOC handles the volume; humans handle the judgment.

- Traditional SOC: analysts spend 40% of their time chasing false positives.

- AI SOC: automation filters noise so analysts focus on real threats and proactive hunting.

- The result: faster MTTI, lower MTTR, and analysts who stay longer because they’re doing meaningful work instead of burning out on triage.

1. How long does AI SOC implementation take for SMBs vs. mid-market vs. enterprise organizations?

AI SOC implementation timelines vary significantly by organization size and stack complexity. For SMBs with under 200 endpoints, we typically see full deployment in 2–4 weeks, as the integration surface is smaller and decision-making is faster. Mid-market organizations running 200–1,000 endpoints generally need 4–6 weeks, accounting for multiple SIEM/EDR integrations and cross-team coordination. Enterprise environments with 1,000+ endpoints can stretch to 6–10 weeks due to custom detection logic, multi-cloud environments, and compliance validation requirements.

The single biggest variable isn’t technology but internal readiness. Organizations that come to the table with a documented tool inventory, a designated technical lead, and pre-mapped compliance requirements deploy up to 40% faster. Our turnkey MDR onboarding compresses mid-market deployments to 30 days by deploying into your existing stack without forcing proprietary replacement, which eliminates the biggest deployment bottleneck entirely.

2. What internal resources are required to deploy an AI SOC successfully?

The internal resource requirement for AI SOC is deliberately minimal when the provider is structured correctly. During the onboarding phase (first 30 days), we recommend one Executive Sponsor at 2–3 hours/week for governance decisions and one Technical Lead at 8–10 hours/week to coordinate tool access and validate detection rules. An optional Security Analyst Champion at 4–6 hours/week helps build internal trust through shadow-mode validation.

In steady state after go-live, the footprint drops to the Technical Lead at 2–3 hours/week for weekly report reviews and monthly tuning sessions. You should not need to hire additional headcount. The entire point of an AI SOC is to augment your existing team, not to create a new internal project that requires dedicated staffing. If a vendor tells you that you need a full-time internal team to manage their AI SOC, that’s not AI augmentation but a professional services engagement. We built UnderDefense MAXI so your analysts can focus on threat hunting and strategic work rather than managing another platform.

3. When should you expect first measurable value from an AI SOC deployment?

We hold ourselves to observable milestones, not abstract phases. In Week 1, your infrastructure should be connected with baseline visibility and initial alert flow established. By Week 2, automated triage and enrichment should be operational with false positive patterns identified. Weeks 3–4 should deliver custom detections live and false positives reduced by 80%+. By Month 2, full 24/7 coverage should be operational with measurably improved detection and response times. Month 3 should produce your first ROI report with documented analyst hours saved.

If your vendor can’t map their onboarding to milestones like these, they’re selling a timeline, not a commitment. We consistently achieve 99% noise reduction within 30 days and operate with a 2-minute alert-to-triage SLA from day one of production. Across 500+ MDR clients, we’ve documented 830% ROI over 3 years, which starts compounding from the first month of production when analyst hours saved and avoided incidents become quantifiable.

4. Can AI SOC integrate with my existing SIEM, EDR, and identity tools without replacing them?

Yes, if the vendor is genuinely vendor-agnostic. The key distinction is whether the provider’s architecture layers on top of your existing stack (overlay model) or requires you to migrate to their proprietary platform (replacement model). Vendors like Arctic Wolf and Deepwatch are built on proprietary stacks. Their “integration” means replacing your SIEM, your data lake, and your detection engine, which adds 3–6 months of degraded coverage and $200K–$500K in migration costs.

We designed UnderDefense MAXI to integrate with 250+ existing security tools via native API connections. Your Splunk stays. Your CrowdStrike stays. Your Okta stays. During our 30-day onboarding, the concierge team audits your current toolset, preserves and enhances existing detection rules, adds custom detections on top, and enables containment actions that execute through your deployed tools. Before signing with any vendor, confirm that your custom correlation rules, business logic, and detection tuning carry over, and verify that you retain ownership of your security data if you ever switch.

5. What does an analyst's day look like before vs. after AI SOC implementation?

Before AI SOC, your analyst’s day looks like this: 2:47 AM alert, 45 minutes of manual investigation cross-referencing EDR with identity logs, a Slack message at 3 AM with no response, and the conclusion that it’s a false positive. Multiply that by 14 “critical” alerts per week, and you have the burnout reality where 65% of SOC analysts experience chronic exhaustion and 80%+ of user-reported alerts are false positives.

After AI SOC, the transformation is structural. Overnight, the AI SOC processes hundreds of alerts automatically, enriching, correlating, and closing false positives without human intervention. Confirmed incidents arrive as complete investigation reports with full context. Your analysts review 3 incident summaries instead of triaging 847 raw alerts. The role shifts from “alert triage machine” to “threat hunter and strategic advisor.” That’s not a theoretical improvement but an operational shift we see consistently across our MDR client base, where analyst workload drops by 90%+ within the first 30 days.
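
The 847-to-3 example above is worth checking as arithmetic, because it is the same calculation you can run on your own alert queue. This is a minimal sketch with a hypothetical function name; note that it measures the drop in items an analyst has to look at, which is related to but not the same as the workload reduction figure.

```python
def reduction_percent(before: int, after: int) -> float:
    """Percentage drop in items an analyst must review."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


# 847 raw alerts reduced to 3 incident summaries.
print(round(reduction_percent(847, 3), 1))  # 99.6
```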

6. What governance and human-in-the-loop controls should an AI SOC include?

Every AI SOC deployment should enforce five non-negotiable governance controls from day one. First, human-in-the-loop for critical actions, where automated triage is fine but containment of production systems requires human approval above a defined severity threshold. Second, a full audit trail logging every AI investigation step, enrichment query, and decision. Third, explainable reasoning so analysts see why the AI reached its conclusion. Fourth, role-based access control (RBAC) defining who can configure AI behavior and override automated decisions. Fifth, continuous validation through regular adversary simulation testing.

These controls map directly to SOC 2, ISO 27001, HIPAA, and NIS2 compliance frameworks, giving you dual-purpose value. Every governance control generates audit-ready evidence, not just better security. If your AI SOC vendor can’t demonstrate all five in a live environment, that’s a red flag. “Trust our AI” is not a governance model.
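
To make the first two controls concrete, here is a minimal sketch of a human-approval gate with an audit trail. Everything in it is illustrative: the 1–5 severity scale, the `APPROVAL_THRESHOLD` value, and the function and class names are assumptions rather than any vendor’s actual API, and the sketch treats "above a defined severity threshold" as "at or above".

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

APPROVAL_THRESHOLD = 3  # hypothetical: severity 3+ on a 1-5 scale needs a human


@dataclass
class AuditTrail:
    """Append-only record of every step the automation takes."""
    entries: list = field(default_factory=list)

    def log(self, step: str, detail: str) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "step": step,
            "detail": detail,
        })


def request_containment(host: str, severity: int, trail: AuditTrail,
                        approved_by: Optional[str] = None) -> str:
    """Contain a host, holding high-severity actions until a human approves."""
    trail.log("containment_requested", f"{host} severity={severity}")
    if severity >= APPROVAL_THRESHOLD and approved_by is None:
        trail.log("containment_held", "awaiting human approval")
        return "pending_approval"
    actor = approved_by or "automation"
    trail.log("containment_executed", f"{host} by {actor}")
    return "contained"


trail = AuditTrail()
print(request_containment("db-01", 4, trail))                         # pending_approval
print(request_containment("db-01", 4, trail, approved_by="analyst"))  # contained
```

Because every branch writes to the trail before returning, the same log that enforces the approval step also doubles as audit evidence.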

7. How do you evaluate and score AI SOC vendors before committing?

We recommend a 7-criteria scoring framework where you rate each vendor 0–2 per criterion. The seven criteria are: vendor-agnostic integration (do they work with your existing tools or force replacement?), onboarding speed (30-day turnkey or 6-month engagement?), human analyst access (direct Tier 3–4 communication or ticket queues?), response capability (full containment or detect-and-notify?), ChatOps user verification (can they verify suspicious activity directly with affected users?), pricing transparency (published per-endpoint rates or “contact sales”?), and compliance integration (evidence generation included or separate upsell?).

Providers scoring 10+ represent genuine operational partnerships. Below 7 means you’re buying an alert feed, not managed detection and response. You can download our SOC Provider Evaluation Checklist to run this scoring exercise across your shortlisted vendors systematically.

8. What ROI can you expect from AI SOC, and how is it measured?

Organizations deploying AI SOC platforms report 830% ROI over 3 years, driven by measurable gains across four dimensions. First, analyst hours saved: 2,000–4,000 hours/month on investigation automation alone. Second, faster threat containment: 2-minute alert-to-triage versus 30–60 minutes of manual investigation per alert. Third, avoided breach costs, where the average security incident costs $4.35M when factoring in downtime, data loss, and remediation. Fourth, reduced hiring needs, as AI SOC augments existing analysts instead of requiring an 8–12 person, 3-shift team.

The ROI compounds fastest when implementation is measured in weeks rather than months. Every day without AI-augmented detection is a day of compounding risk: missed threats, analyst burnout, and accumulating technical debt. We track ROI from day one through our 30-day impact reports, giving you board-ready documentation by Month 3 that quantifies analyst hours saved, false positive reduction rates, and avoided incident costs.

The post The Complete AI SOC Implementation Guide for 2026: Timelines, Checklists, Best Practices and Integration Guide appeared first on UnderDefense.