Q1. What Is AI SOC Automation, and Why Is It Non-Negotiable in 2026?

AI SOC automation uses artificial intelligence, including agentic AI systems that reason, plan, and act autonomously, to automate security operations center workflows such as alert triage, investigation, enrichment, and response. That definition sounds clean on paper. The reality on the ground in 2026 is messier: your analysts are drowning in 4,484 alerts per day, 67% of those alerts go completely uninvestigated, and 71% of SOC analysts report burnout symptoms that directly impact detection quality.

This isn’t a future problem. It’s a right-now, 2 AM, “who’s going to look at this critical alert” problem.

📖 Key Terms You’ll See Throughout This Article

Before we go deeper, let’s level-set on terminology that gets thrown around interchangeably, but shouldn’t be:

- Orchestration: coordinating actions across multiple security tools via API

- SOAR: Security Orchestration, Automation, and Response; rule-based playbook execution

- Agentic AI: AI systems that reason, plan, and act toward goals autonomously, adjusting mid-task

- Multi-Agent Systems (MAS): multiple specialized AI agents (enrichment, correlation, user verification) collaborating on one investigation

- GenAI: generative AI used for summarization, detection rule drafting, and natural-language querying of security data

- Hyperautomation: layering AI, ML, RPA, and orchestration to automate end-to-end processes with minimal human touch

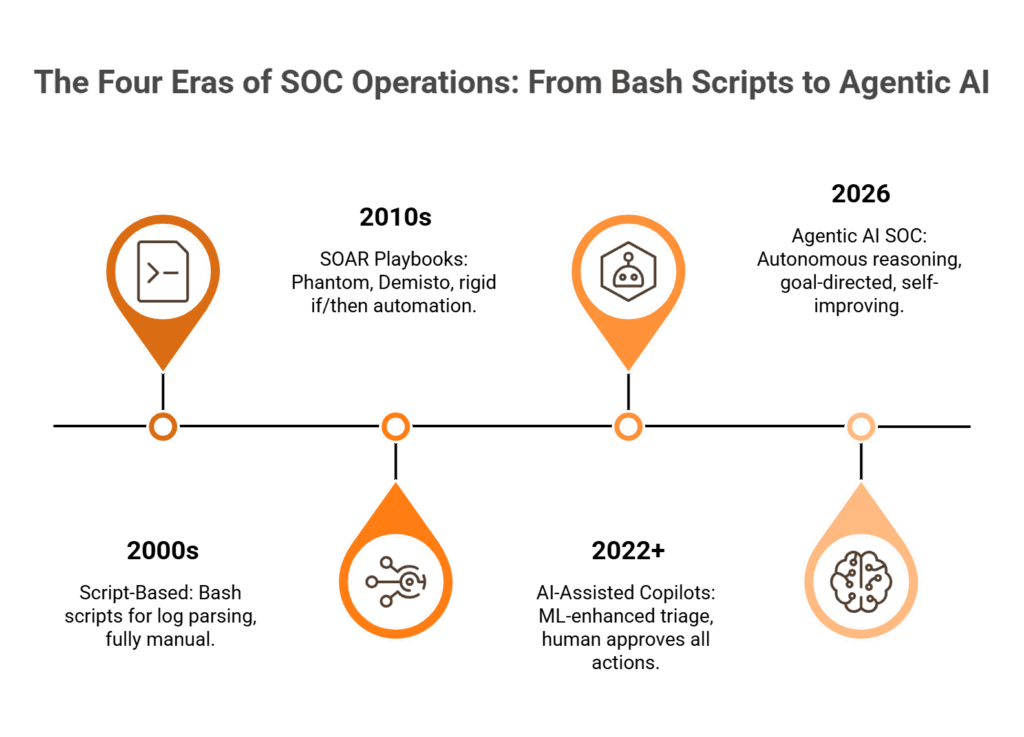

⏰ The Evolution: Scripts → SOAR → AI-Native → Agentic

The SOC has gone through four distinct eras. Bash scripts for log parsing in the 2000s. SOAR playbooks (Phantom, Demisto) in the 2010s. AI-assisted copilots from 2022 onward. And now, in 2026, agentic AI SOCs where the system reasons across your entire stack, not just executing rules someone wrote, but deciding what to investigate and how.

Here’s a distinction that matters more than most vendors will admit: “AI in the SOC” is not the same as “a true AI SOC.” Bolting a ChatGPT wrapper onto your SIEM dashboard is AI in the SOC. An AI SOC is an architecture where intelligence is the operating layer, where detection, investigation, enrichment, and response flow through AI-native pipelines that were designed for autonomous reasoning from day one.

❌ Why Legacy Approaches Are Breaking Down

The cybersecurity talent gap hit 4.8 million unfilled positions globally in 2025, and it’s only widening. Hiring your way out of this isn’t a strategy but a fantasy. Even organizations that can hire face 6–9 month ramp-up times, constant turnover at Tier 1 (the role is brutal), and the overhead of training pipelines that never quite keep pace with emerging threats.

Legacy SOAR playbooks are crumbling under their own weight. Rigid if/then logic requires constant maintenance, breaks on novel attack patterns, and creates “playbook sprawl” that becomes as unmanageable as the alerts themselves. I’ve watched teams maintain 200+ playbooks where half are outdated and nobody’s sure which ones still fire correctly.

Traditional MDR providers like Arctic Wolf force you onto their proprietary SIEM, and when you leave, all your correlation rules, business logic, and custom detections stay behind. That’s vendor lock-in dressed up as “managed security.” Meanwhile, endpoint-focused providers like CrowdStrike see threats on the endpoint but miss organizational context entirely. They can’t verify whether Jane Doe actually made that suspicious login because they never talk to Jane.

✅ The AI SOC + Human Ally Model

Detection without response is noise. Response without context is risk. The architecture that actually works in 2026 combines agentic AI (for speed, scale, and consistency) with human analysts who understand your business (for judgment, context, and trust).

At UnderDefense, we built UnderDefense MAXI as an agentic AI SOC that operates as a unified security operations layer on top of whatever SIEM/XDR you already own, including Splunk, Sentinel, Chronicle, and Elastic, without forcing replacement. Every investigative step is observable and auditable. Detection Logic as Code means detections are written in Python, version-controlled, unit-tested, and deployed via CI/CD. And when the system needs to know whether a suspicious login is legitimate, it pings the affected user directly through Slack, Teams, email, or SMS via ChatOps, because the fastest path to resolving 80% of behavioral alerts is simply asking the person involved.

The proof? ✅ 2-minute alert-to-triage SLA. ✅ 15-minute escalation for critical incidents. ✅ 96% MITRE ATT&CK coverage. ✅ Zero ransomware cases across all MDR clients in six years. ✅ 830% ROI over three years. While traditional MDR tells you “suspicious login detected, please investigate,” we tell you who logged in, confirm with the user directly, and contain the threat before your team wakes up, with documented response times 2 days faster than CrowdStrike OverWatch.

Q2. How Does AI SOC Automation Actually Work: Architecture, Pipeline, and SOC Tier Evolution?

If you can’t explain the pipeline, you don’t understand what you’re buying. Too many vendors hide behind “AI-powered” marketing without showing you the actual mechanics. So let me walk through exactly how an AI SOC automation pipeline works, the same pipeline we run in production every day across 65,000+ endpoints.

🔧 The Five-Stage Automation Pipeline

Every alert that enters an AI SOC goes through five stages, and the quality of each stage determines whether you get signal or noise:

| Stage | What Happens | Speed |

|---|---|---|

| 1. Ingest | Collect alerts from SIEM, EDR, identity (Okta, Entra), cloud (AWS, Azure, GCP), network, and SaaS apps | Real-time |

| 2. Normalize | Standardize data formats across disparate sources into a unified schema, so a CrowdStrike alert and a Sentinel alert speak the same language | Seconds |

| 3. Enrich | Add threat intelligence, asset context, user behavior baselines, geolocation, and reputation scores from multiple feeds | Seconds |

| 4. Decide | AI-driven classification: true positive, false positive, requires deeper investigation, or requires human/user verification | <2 minutes |

| 5. Act | Automated response (containment, notification, ticket creation) or escalation with full enriched context to a human analyst | Minutes |

The key insight: most SOCs break down between stages 3 and 4. They collect alerts fine. They even enrich reasonably well. But the decision layer, “is this real or noise?”, is where manual SOCs fall apart because a human can’t hold 15 data points in context across 4,484 daily alerts. That’s exactly where AI reasoning shines: pattern matching at scale with contextual memory across your entire environment.
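The five stages above can be sketched as a chain of small functions. This is a minimal illustration under stated assumptions, not any vendor's implementation: the `Alert` fields, the `KNOWN_BAD_IPS` set, and the rules inside `decide` are hypothetical stand-ins for real feeds and for the AI reasoning layer.

```python
from dataclasses import dataclass, field

# Hypothetical threat-intel data; a real pipeline would query live feeds.
KNOWN_BAD_IPS = {"203.0.113.7"}


@dataclass
class Alert:
    source: str
    user: str
    src_ip: str
    severity: str
    context: dict = field(default_factory=dict)
    verdict: str = "undecided"


def normalize(raw: dict) -> Alert:
    """Stage 2: map vendor-specific field names onto one schema."""
    return Alert(
        source=raw.get("vendor", "unknown"),
        user=raw.get("user") or raw.get("UserPrincipalName", ""),
        src_ip=raw.get("ip") or raw.get("IPAddress", ""),
        severity=str(raw.get("severity", "low")).lower(),
    )


def enrich(alert: Alert) -> Alert:
    """Stage 3: attach the context the decision stage needs."""
    alert.context["ip_known_bad"] = alert.src_ip in KNOWN_BAD_IPS
    return alert


def decide(alert: Alert) -> Alert:
    """Stage 4: classify. Simple rules stand in for the AI decision layer."""
    if alert.context.get("ip_known_bad"):
        alert.verdict = "true_positive"
    elif alert.severity in ("high", "critical"):
        alert.verdict = "needs_user_verification"
    else:
        alert.verdict = "false_positive"
    return alert


def act(alert: Alert) -> str:
    """Stage 5: pick a response for the verdict."""
    actions = {
        "true_positive": "escalate_with_context",
        "needs_user_verification": "ask_user_via_chatops",
        "false_positive": "auto_close",
    }
    return actions[alert.verdict]


def run_pipeline(raw_alerts: list[dict]) -> list[str]:
    """Stage 1 (ingest) is represented by the list of raw alerts passed in."""
    return [act(decide(enrich(normalize(raw)))) for raw in raw_alerts]
```

Keeping each stage as its own function makes the weak point visible: `decide` is the only stage whose quality depends on reasoning rather than plumbing.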

⚙️ How AI Transforms Each SOC Tier

The real operational impact becomes clear when you map it tier by tier:

| SOC Tier | Before AI Automation | After AI Automation | Role Evolution |

|---|---|---|---|

| Tier 1 (Alert Monitoring) | Manual triage, 45 min/alert, 60–80% false positives | AI auto-triages 90%+ in <2 min, only escalates confirmed threats | L1 analysts → AI supervisors |

| Tier 2 (Investigation) | Manual log correlation across 5+ consoles, 2–4 hours/investigation | AI auto-enriches, correlates, produces structured reports in seconds | L2 analysts → threat hunters |

| Tier 3 (Threat Hunting) | Reactive hunting based on IOC feeds | AI proactively surfaces anomalies, generates detection hypotheses, writes rules as code | L3 → detection engineers + AI tuners |

This isn’t theoretical. As one CISO I spoke with on a recent podcast put it: the hybrid model works because you outsource the commoditized L1 work to AI, keep L2–L3 close to the business for context, and the feedback loop between tiers actually improves the AI over time, which frees senior analysts for proactive, strategic work.

🤖 Human-in-the-Loop vs. Fully Autonomous: Where to Draw the Line

There’s a spectrum here, and getting it wrong has consequences:

- Fully manual: no AI involvement (Level 0)

- AI-recommended: analyst approves every action (Level 1)

- AI-automated with human oversight: AI acts, human reviews (Level 2)

- Fully autonomous: AI decides and acts without approval (Level 3)

Most production AI SOCs in 2026 operate at Level 1–2, with Level 3 reserved only for low-risk actions like auto-closing known false positives. The architectural decision isn’t “should we automate?” but rather “which actions require human approval gates and which don’t?” Get this wrong and you either slow everything down (too many gates) or introduce risk (too few).

At UnderDefense, UnderDefense MAXI operates at Level 2 by default: AI investigates, enriches, correlates, and recommends; our dedicated Tier 3–4 human analysts validate and execute. The system that detects the threat can verify and contain it, with no escalation back to your overwhelmed team at 3 AM.

Q3. Agentic AI vs. Traditional SOAR: Core Capabilities and the Limits of Automation

I’ve spent years watching teams pour money into SOAR platforms, hire dedicated SOAR engineers, and build 200+ playbooks, only to find themselves maintaining a fragile automation layer that breaks every time a novel attack pattern shows up. The problem isn’t the teams. The problem is the architecture.

⚡ SOAR vs. AI SOC vs. Agentic AI: The Differentiation That Matters

These three terms get conflated constantly. Here’s how they actually differ across the dimensions that matter in operations:

| Dimension | Traditional SOAR | AI-Assisted SOC | Agentic AI SOC |

|---|---|---|---|

| Decision-making | Rule-based if/then | ML-driven classification | Autonomous reasoning with goal-directed planning |

| Adaptability | Fixed playbooks | Learns from data | Dynamically adjusts tactics mid-investigation |

| Maintenance | High (playbook sprawl) | Periodic retraining | Self-improving with feedback loops |

| Novel threats | Fails on unknown patterns | Probabilistic detection | Reasons from first principles |

| Integration | Custom API per tool | Pre-built connectors | Natural language tool use + MCP support |

| Human dependency | Human writes every rule | Human trains models | Human sets guardrails and goals |

Gartner estimates only 1–5% of enterprises have deployed agentic AI in production SOCs as of early 2026, but adoption is accelerating fast because the operational advantage is undeniable. The organizations that move now build compounding intelligence. The ones that wait will be playing catch-up against both adversaries and competitors.

🛡️ Five Core Capabilities of a Modern AI-Powered SOC

Whether you build or buy, here’s what the architecture must include:

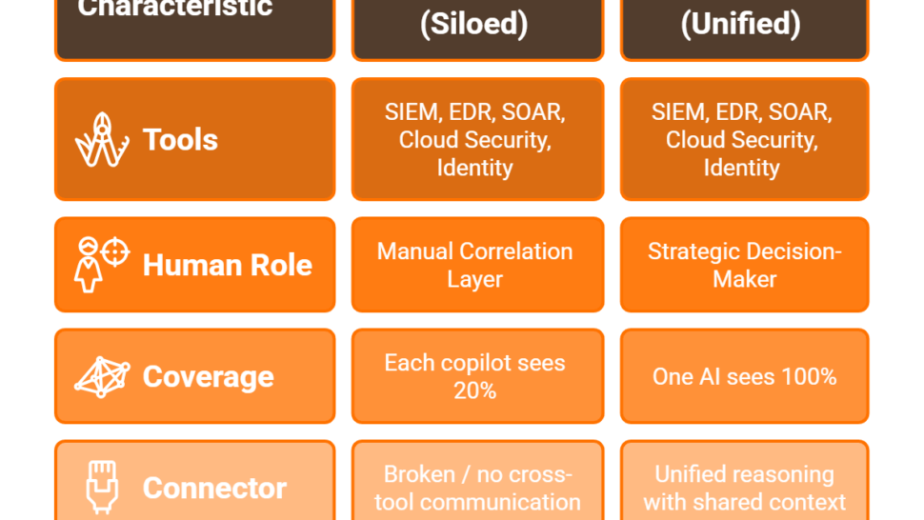

- Unified Data Layer & SIEM-Agnostic Connectivity: single pane across endpoint, cloud, identity, network, and SaaS without vendor lock-in

- Autonomous Investigation & Response: AI conducts multi-step investigations (log pulls, enrichment, correlation, user verification) at machine speed

- Agentic AI with Defined Guardrails: specialized agents for enrichment, correlation, user verification, and containment, coordinated by an orchestration layer where every step is auditable

- Native Case Management: investigation findings, analyst notes, and response actions in one workflow, not scattered across a SOAR console and a ticketing system

- Open Ecosystem & MCP Support: API-first architecture, Model Context Protocol for cross-tool AI reasoning, and Detection Logic as Code deployed via CI/CD

⚠️ The 70% Rule: What AI Can and Cannot Automate

Let me be honest about the limits, because too many vendors won’t be. AI handles roughly 70% of routine SOC tasks today: alert triage, enrichment, correlation, known-pattern detection, false positive closure, and compliance evidence collection.

The remaining 30% requires human judgment that no model can reliably replicate:

- Novel attack pattern recognition

- Business impact assessment (“do we shut down this production server?”)

- Strategic response decisions involving cross-organizational communication

- Legal and regulatory judgment calls

- Adversary intent analysis

“Will AI replace analysts?” No. AI replaces repetitive tasks, not analysts. The analyst role evolves from “alert processor” to “AI supervisor + threat hunter + detection engineer.” Teams that embrace this upskill. Teams that resist get buried in noise.

At UnderDefense, UnderDefense MAXI embodies all five core capabilities while maintaining the human-AI boundary through our “AI collects context, you decide” philosophy. Detection Logic as Code provides the governance layer that lets teams confidently deploy AI-written detections in production, because every rule is versioned, tested, and auditable.
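To make "versioned, tested, and auditable" concrete, here is a rough sketch of what a detection written as code could look like. It is an assumption-laden illustration, not UnderDefense's actual rule format: the event fields (`user`, `outcome`), the threshold, and the function name are all hypothetical.

```python
import unittest

FAILED_LOGIN_THRESHOLD = 5  # assumed tuning value, reviewed like any code change


def detect_bruteforce_success(events: list[dict]) -> list[str]:
    """Return users who log in successfully right after a run of failures.

    `events` must be ordered by time; each event has `user` and `outcome`.
    """
    consecutive_failures: dict[str, int] = {}
    flagged: list[str] = []
    for event in events:
        user = event["user"]
        if event["outcome"] == "failure":
            consecutive_failures[user] = consecutive_failures.get(user, 0) + 1
        else:
            if consecutive_failures.get(user, 0) >= FAILED_LOGIN_THRESHOLD:
                flagged.append(user)
            consecutive_failures[user] = 0  # a success resets the streak
    return flagged


class DetectBruteforceSuccessTest(unittest.TestCase):
    def test_flags_success_after_failure_burst(self):
        events = [{"user": "jane", "outcome": "failure"}] * 5
        events.append({"user": "jane", "outcome": "success"})
        self.assertEqual(detect_bruteforce_success(events), ["jane"])

    def test_ignores_occasional_typo(self):
        events = [
            {"user": "bob", "outcome": "failure"},
            {"user": "bob", "outcome": "success"},
        ]
        self.assertEqual(detect_bruteforce_success(events), [])
```

Because the rule is an ordinary function, a CI job can run `python -m unittest` on every pull request, and the commit history doubles as the audit trail for who changed a threshold and when.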

“UnderDefense analysts communicate directly with affected users, providing answers to alerts competitors cannot resolve.”

— UnderDefense Communication Guide

Q4. Which SOC Workflows Deliver the Highest ROI When Automated?

Not all automation is created equal. The biggest mistake I see security leaders make is trying to automate everything at once, rather than starting with the workflows that deliver disproportionate ROI. After serving 500+ MDR clients and processing 6TB of security telemetry daily, here’s how the six core SOC workflows rank by return on investment.

⭐ #1: Alert Triage and Prioritization (Highest ROI)

| Metric | Before Automation | After Automation |

|---|---|---|

| Time per alert | 45 minutes | <2 minutes |

| False positive rate | 60–80% | <10% |

| Alerts uninvestigated | 67% | <5% |

| Analyst time on triage | 60–70% of workday | 10–15% |

Alert triage is the single biggest time sink in SOC operations, and it’s where AI excels because 80% of triage is pattern matching against known-good baselines. Every minute saved on a false positive is a minute your analyst can spend hunting real threats.

⭐ #2: Phishing Investigation and Response

Before automation: 30–45 minutes per phishing report (header analysis, URL detonation, user outreach, credential reset). After: AI auto-analyzes headers, detonates URLs in sandbox, verifies with the affected user via ChatOps, and auto-resets credentials if compromised. Time savings: 85%+ per incident.

This is where ChatOps verification changes the game. Instead of your analyst spending 20 minutes trying to reach the user who reported the phish, the system pings them directly via Slack or Teams: “Did you click this link? Did you enter credentials?”, and gets an answer in minutes, not hours.
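The verification step boils down to two pieces: a clear question and a deterministic mapping from the answer to the next action. The sketch below shows only that logic. Delivery through Slack or Teams is deliberately left out, and the reply codes and action names are hypothetical.

```python
def build_verification_prompt(display_name: str, url: str) -> str:
    """Compose the question sent to the user who reported the phish."""
    return (
        f"Hi {display_name}, you reported a suspicious email. "
        f"Did you click this link: {url} ? "
        "Reply 1 = did not click, 2 = clicked only, "
        "3 = clicked and entered credentials."
    )


def next_action(reply: str) -> str:
    """Map the user's reply to a follow-up step."""
    actions = {
        "1": "close_as_reported_no_interaction",
        "2": "scan_endpoint",
        "3": "reset_credentials_and_revoke_sessions",
    }
    # Anything unexpected goes to a human rather than being guessed at.
    return actions.get(reply.strip(), "escalate_to_analyst")


prompt = build_verification_prompt("Jane", "hxxp://example[.]test/login")
```

Constrained replies keep the automation predictable: free-text answers fall through to an analyst instead of being interpreted by the system.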

💰 #3: Incident Response and Containment

Before: 4.5-hour average MTTR for critical incidents, with manual coordination across tools and teams. After: AI-orchestrated containment, including endpoint isolation, credential revocation, and firewall rule deployment, with human approval gates for high-risk actions. MTTR reduction: 60–80%.

The critical distinction: automated containment with a human approval gate for high-severity actions. You want the system to auto-isolate a compromised endpoint at 3 AM. You don’t want it to shut down your production database without a human saying “go.”

💰 #4: Threat Intelligence Enrichment

Before: manual IOC lookups across 5–10 feeds, 15–20 minutes per alert. After: automated multi-source enrichment in seconds with contextual risk scoring and automatic IOC correlation. Analyst effort reduction: 95%. This is table-stakes automation. If your SOC is still doing manual enrichment in 2026, you’re bleeding time on a solved problem.

#5: Compliance Reporting and MITRE ATT&CK Mapping

Before: manual evidence collection for SOC 2/ISO 27001 audits taking weeks of preparation. After: auto-generated compliance artifacts mapped to regulatory controls in real-time. Audit prep reduction: 70–80%. This is especially critical given new regulatory pressure from CIRCIA’s 72-hour reporting requirements and SEC cybersecurity disclosure rules.

#6: Behavioral Anomaly Detection and Lateral Movement

Before: relies on analyst intuition and manual log review. After: AI baselines normal behavior, detects deviations, and traces lateral movement paths automatically. Detection improvement: 40–60% more anomalies identified. This is the frontier, the use case where agentic AI’s ability to reason across multiple data sources shows the biggest leap over traditional SOAR.

At UnderDefense, UnderDefense MAXI automates all six use cases through its agentic pipeline, with ChatOps user verification being uniquely effective for Use Cases #1 and #2, where context from the affected user is the fastest path to resolution, and where competitors simply escalate the alert back to your already-overwhelmed team.

Q5. The AI SOC Maturity Model: Where Does Your Organization Stand?

Most security teams can’t tell you where they actually stand on the automation spectrum. They know they’re “doing something with AI,” but they can’t articulate what level of autonomy they’ve reached, what gaps remain, or what milestones would move them forward. That ambiguity is expensive: it leads teams to buy tools they don’t need and overlook capabilities they desperately need.

Here’s a framework built from what we see across 500+ environments. It’s not theoretical. It’s based on where real teams are and what separates those who sleep through the night from those who don’t.

📊 The 5-Level AI SOC Maturity Model

| Level | Name | Automation Rate | MTTD | MTTR | Defining Characteristic |

|---|---|---|---|---|---|

| 1 | Manual / Script-Based | <10% | >24 hrs | >8 hrs | Ad-hoc scripts, fully reactive, no correlation |

| 2 | SOAR Playbook-Driven | 20–40% | 4–12 hrs | 2–4 hrs | Static playbooks, requires dedicated SOAR engineer |

| 3 | AI-Assisted (Copilot) | 40–60% | 1–4 hrs | 30–60 min | ML models assist triage/enrichment; analysts approve all actions |

| 4 | Agentic (Human-in-the-Loop) | 70–85% | 15–60 min | 5–15 min | Multi-agent AI reasons across tools; humans approve high-risk only |

| 5 | Fully Autonomous | 85–95% | <5 min | <2 min | AI handles end-to-end for known threat classes; humans hunt novel threats |

Most mid-market organizations in 2026 sit at Level 2 or early Level 3. They’ve invested in SOAR, maybe bolted on some ML-based alert scoring, but they’re still drowning in manual investigation and spending 60–70% of analyst time on triage.

✅ Self-Assessment Checklist

Score yourself honestly, one point per checkbox:

- ☐ 24/7/365 monitoring without coverage gaps (Level 2+)

- ☐ Alerts correlated across all data sources in a unified view (Level 3+)

- ☐ AI auto-triages >50% of alerts without human intervention (Level 3+)

- ☐ Investigations produce structured reports in <5 minutes (Level 3+)

- ☐ User activity verified directly via Slack/Teams/ChatOps (Level 4+)

- ☐ Detection rules versioned and deployed via CI/CD (Level 4+)

- ☐ AI provides auditable investigation trails for compliance (Level 4+)

- ☐ Containment actions execute within 15 minutes of detection (Level 4+)

- ☐ Compliance evidence auto-generated and mapped to regulatory controls (Level 4+)

- ☐ AI proactively surfaces novel threats without analyst initiation (Level 5)

🎯 What Your Score Means

8–10 ✅ → Level 4–5 maturity. You’re optimizing and proactively hunting. Focus on reducing human approval gates for low-risk actions and expanding agentic coverage.

4–7 ✅ → Level 2–3. Critical gaps exist. Your team is burning out on noise, and response times are likely measured in hours, not minutes. This is the most dangerous range: you have some automation, but not enough to prevent blind spots.

0–3 ✅ → Level 1. Reactive posture with significant breach risk. Hiring won’t fix this fast enough; you need a force multiplier.
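If you track the checklist in a spreadsheet or ticketing system, the score bands translate into a few lines of code. This is a small sketch; the function name is hypothetical and the bands are copied from the ranges above.

```python
def maturity_band(checked_boxes: int) -> str:
    """Map a 0-10 checklist score to the maturity band described above."""
    if not 0 <= checked_boxes <= 10:
        raise ValueError("score must be between 0 and 10")
    if checked_boxes >= 8:
        return "Level 4-5"
    if checked_boxes >= 4:
        return "Level 2-3"
    return "Level 1"
```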

⏰ Closing the Gap Fast

We built UnderDefense MAXI to move organizations from Level 2–3 to Level 4 within 30 days, combining agentic AI investigation, ChatOps verification, Detection Logic as Code, and 250+ vendor-agnostic integrations deployed on top of whatever SIEM/XDR you already own. Most teams go from 3 checks to 8+ within the first month. No rip-and-replace. No 6-month implementation project.

Q6. How Do the Top AI SOC Automation Tools Compare in 2026?

Choosing a legacy SOAR platform for agentic use cases is like buying a flip phone for mobile banking: the architecture doesn’t support the ambition. The AI SOC tooling landscape in 2026 falls into four distinct categories, and understanding which category you’re evaluating in is more important than comparing individual features.

🗂️ Four Tool Categories

- Legacy SOAR (Splunk SOAR, Palo Alto XSOAR): automation via static playbooks; high maintenance, breaks on novel threats

- AI-Native Platforms (Swimlane Turbine, Torq HyperSOC): AI-enhanced orchestration with pre-built connectors

- Agentic SOC Platforms (UnderDefense MAXI, D3 Morpheus, Radiant Security): autonomous investigation and response with goal-directed planning

- Open-Source Stack (Shuffle + TheHive + MISP + Wazuh): maximum flexibility, highest engineering burden ($150K–$250K/yr in FTEs, no SLA, compliance is DIY)

📋 Vendor Comparison Matrix

| Tool | Category | Agentic Maturity | Vendor Lock-In | Alert-to-Triage | ChatOps Verification | Pricing Transparency | Mid-Market Fit | MITRE Coverage |

|---|---|---|---|---|---|---|---|---|

| UnderDefense MAXI | Agentic | ⭐ Advanced | ✅ No | 2 min | ✅ Yes | ✅ Published ($11–15/ep) | Strong | 96% |

| CrowdStrike Charlotte AI | AI-Native | Moderate | ❌ Yes | ~10 min | ❌ No | ❌ Contact sales | Weak | ~85% |

| Palo Alto Cortex XSIAM | AI-Native | Moderate | ❌ Yes (PAN stack) | ~8 min | ❌ No | ❌ Contact sales | Weak | ~88% |

| Microsoft Security Copilot | AI-Native | Basic–Moderate | Partial (M365) | ~15 min | ❌ No | Partial | Moderate | ~80% |

| Google SecOps Gemini | AI-Native | Moderate | Partial (GCP) | ~12 min | ❌ No | Partial | Moderate | ~82% |

| Torq HyperSOC | AI-Native | Moderate | ✅ No | ~5 min | ❌ No | ❌ Contact sales | Moderate | N/A |

| D3 Morpheus | Agentic | Advanced | ✅ No | ~5 min | ❌ No | ❌ Contact sales | Moderate | ~85% |

| Radiant Security | Agentic | Advanced | ✅ No | ~3 min | ❌ No | ❌ Contact sales | Strong | N/A |

| Splunk SOAR (Legacy) | Legacy SOAR | Basic | Partial | 30+ min | ❌ No | ❌ Contact sales | Weak | N/A |

The pattern is clear: most vendors force you to “contact sales” for pricing, lock you into their ecosystem, and can’t verify alerts directly with affected users.

⚠️ What Real Users Say

“We received little value from ArcticWolf. The product offered little visibility when we were using it… Anything you want to look at or changes you need to make in the product must go through their engineering team.”

— Matt C., Manager, Cybersecurity Services Arctic Wolf – G2 Verified Review

“Analysts provide little context, and when asked for more information in the investigation nothing is ever provided or even communicated.”

— CISO, Manufacturing Arctic Wolf – Gartner Peer Review

“Despite the capabilities of the technical platform and the strength of the analysts providing the service, there is still a limit to the environmental/organizational knowledge inherent in the service. This leads to a fairly frequent need for engagement with our internal team to get clarification and verification.”

— Verified User, Computer Software Expel – G2 Verified Review

🏆 Who Should Choose What

All-in on Palo Alto? → Cortex XSIAM makes sense if you’ve already committed to the PAN ecosystem.

Microsoft-native shop? → Security Copilot integrates well with Defender, Sentinel, and Entra ID.

2+ engineers and zero budget? → Open-source stack, but prepare for the operational overhead.

Protect existing stack investments, need transparent auditable AI, and want analysts who verify alerts directly with users? → UnderDefense MAXI, which detected threats 2 days faster than CrowdStrike OverWatch while integrating with, not replacing, the customer’s existing CrowdStrike deployment.

Q7. How Do You Calculate Total Cost of Ownership and ROI for AI SOC Automation?

Most security leaders get the ROI calculation wrong, and it’s not their fault. The wrong model compares tool cost vs. tool cost. The right model compares total operational cost with and without AI SOC automation. That means factoring in people, process failures, incident losses, and opportunity cost, not just license fees.

💰 Cost Tiers at a Glance

| Tier | Annual Platform Cost | Hidden Costs | Total Realistic Range |

|---|---|---|---|

| Open-Source | $0 licensing | $150K–$250K/yr in FTEs + maintenance | $150K–$250K |

| Mid-Market AI SOC | $50K–$200K/yr | Integration + tuning + training | $80K–$280K |

| Enterprise Agentic | $200K–$600K+ | Professional services, custom integrations | $300K–$800K+ |

❌ The Wrong Way to Calculate ROI

Three mistakes I see constantly:

- Vendor-provided ROI calculators inflate projected savings by 2–3x by assuming perfect adoption and zero ramp-up time

- Comparing sticker price without integration engineering (40–120 hours at $150–$200/hr), detection tuning (10–20 hrs/month for 6 months), and analyst training (40–80 hrs per analyst)

- Ignoring staffing shifts: if your L1 analysts become AI supervisors, their compensation expectations and career paths change

✅ The Right ROI Framework

Formula:

Net Benefit = [(Analyst Hours Saved × Fully-Loaded Hourly Cost) + (Incidents Prevented × Avg Incident Cost) + (Compliance Penalty Avoidance) + (Reduced Turnover Savings)] − (Platform Cost + Integration + Training + Ongoing Tuning)

Annual ROI (%) = Net Benefit ÷ (Platform Cost + Integration + Training + Ongoing Tuning) × 100

Worked mid-market example (1,000 endpoints, 5-person security team, 50K alerts/month):

| ROI Component | Calculation | Value |

|---|---|---|

| Analyst hours saved | 3 analysts × 15 hrs/week × 52 weeks × $85/hr | $198,900 |

| Incidents prevented | 2/yr × $500K avg cost | $1,000,000 |

| Reduced turnover | 1 fewer departure/yr × $80K replacement cost | $80,000 |

| Total Benefit | $198,900 + $1,000,000 + $80,000 | $1,278,900 |

| Platform + integration + training | $150K + $30K + $20K | $200,000 |

| Net ROI | ($1,278,900 − $200,000) ÷ $200,000 | 539% |
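The worked example can be reproduced in a few lines, which makes it easy to swap in your own numbers. Here ROI is taken as net benefit divided by total cost, the reading that yields the 539% figure. The function and dictionary key names are illustrative only.

```python
def roi_percent(benefits: dict[str, float], costs: dict[str, float]) -> float:
    """Return (total benefit - total cost) / total cost, as a percentage."""
    total_benefit = sum(benefits.values())
    total_cost = sum(costs.values())
    return (total_benefit - total_cost) / total_cost * 100


# Inputs taken from the mid-market example in the table.
benefits = {
    "analyst_hours_saved": 3 * 15 * 52 * 85,  # analysts x hrs/week x weeks x $/hr
    "incidents_prevented": 2 * 500_000,       # incidents/yr x avg cost
    "reduced_turnover": 1 * 80_000,           # departures avoided x replacement cost
}
costs = {"platform": 150_000, "integration": 30_000, "training": 20_000}

print(f"Total benefit: ${sum(benefits.values()):,}")    # Total benefit: $1,278,900
print(f"Net ROI: {roi_percent(benefits, costs):.0f}%")  # Net ROI: 539%
```

Note how sensitive the result is to the incidents-prevented line: it accounts for $1,000,000 of the $1,278,900 benefit, so that assumption deserves the most scrutiny.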

“We give back 25% of time for countering threats and communication… Less theater, more throughput. Less black box, more blue team.”

— UnderDefense Communication Guide

“This is not an extension of our security team as was originally sold.”

— Sr. Cybersecurity Engineer, Manufacturing Arctic Wolf – Gartner Peer Review

📊 Industry Benchmarks: Before vs. After AI SOC

| Metric | Industry Average (Before) | With AI SOC Automation |

|---|---|---|

| MTTD | 197 days | <1 hour |

| MTTR | 69 days | <15 minutes |

| False Positive Rate | 60–80% | <10% |

| Analyst Hours on Triage | 60–70% | 10–15% |

| Cost Per Alert | $25–$50 | $2–$5 |

Decision threshold: if your projected ROI exceeds 200%, it’s a clear “go.” At UnderDefense, our documented results show 830% ROI over 3 years, 99% noise reduction, and zero ransomware across all MDR clients in six years, at a published $11–15/endpoint/month.


Q8. The 90-Day AI SOC Implementation Roadmap: From Assessment to Production

Buying an AI SOC platform is the easy part. Getting it into production where it’s actually reducing risk: that’s where most teams stall. Here’s a phased 90-day roadmap built from hundreds of deployments, with specific milestones, success criteria, and the pitfalls that derail timelines.

📅 Phase 1: Assess and Baseline (Days 1–15)

This phase is about knowing where you stand before changing anything:

- Audit current stack: document every SIEM, EDR, identity, cloud, and network tool, including utilization rates (most teams use only 20% of their tools’ capabilities)

- Map alert volumes: how many daily, by source, by category, by disposition

- Establish baselines: MTTD, MTTR, false positive rate, analyst hours on triage, cost per alert

- Define governance policies: which actions auto-execute vs. require human approval

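- Select platform: using the seven comparison criteria from Q6 (agentic maturity, vendor lock-in, alert-to-triage time, ChatOps verification, pricing transparency, mid-market fit, and MITRE coverage)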

✅ Success Criteria: documented baseline, governance policies approved, platform selected.
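A documented baseline can start as a short script over exported alert records. The sketch below assumes each record carries ISO 8601 timestamps and a `disposition` field; those field names are hypothetical, so map them to whatever your SIEM or ticketing export actually provides.

```python
from datetime import datetime
from statistics import mean


def hours_between(start: str, end: str) -> float:
    """Hours between two ISO 8601 timestamps."""
    delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
    return delta.total_seconds() / 3600


def baseline_metrics(alerts: list[dict]) -> dict[str, float]:
    """Compute MTTD, MTTR, and false positive rate from closed alerts.

    Assumes at least one alert and at least one confirmed true positive.
    """
    confirmed = [a for a in alerts if a["disposition"] == "true_positive"]
    false_positives = [a for a in alerts if a["disposition"] == "false_positive"]
    return {
        "mttd_hours": mean(
            hours_between(a["occurred_at"], a["detected_at"]) for a in confirmed
        ),
        "mttr_hours": mean(
            hours_between(a["detected_at"], a["resolved_at"]) for a in confirmed
        ),
        "false_positive_rate": len(false_positives) / len(alerts),
    }
```

Run it once on Day 1 and keep the output. The same function applied at Day 90 gives you a like-for-like comparison.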

🔧 Skills Your Team Needs

- Python/scripting for Detection-as-Code

- API integration and data pipeline management

- SOAR/SIEM administration

- Prompt engineering for AI tuning

- IR workflow design

📅 Phase 2: Build and Configure (Days 16–45)

Start narrow; expand with confidence:

- Deploy in shadow mode on a single highest-noise alert category (identity anomalies or phishing are ideal starting points)

- Integrate priority data sources: endpoint, identity, and email first; cloud and network second

- Configure ChatOps channels: connect Slack, Teams, or email for user verification workflows

- Begin detection tuning: refine AI accuracy against your human baseline; expect 2–4 weeks of calibration

✅ Success Criteria: >90% triage accuracy, <5% false negative rate, ChatOps operational, analyst trust building.
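Shadow mode only builds trust if you measure it. One way to check the Phase 2 thresholds is to compare each AI verdict with the analyst's verdict on the same alert, as in this minimal sketch. The verdict labels and function names are assumptions, and the analyst's call is treated as ground truth.

```python
def shadow_mode_scores(pairs: list[tuple[str, str]]) -> dict[str, float]:
    """Score AI verdicts against analyst verdicts.

    Each pair is (ai_verdict, analyst_verdict), with values
    'malicious' or 'benign'. `pairs` must not be empty.
    """
    agreements = sum(1 for ai, human in pairs if ai == human)
    truly_malicious = [(ai, human) for ai, human in pairs if human == "malicious"]
    missed = sum(1 for ai, _ in truly_malicious if ai == "benign")
    return {
        "accuracy": agreements / len(pairs),
        # Share of real threats the AI would have closed as benign.
        "false_negative_rate": missed / len(truly_malicious) if truly_malicious else 0.0,
    }


def meets_phase2_criteria(scores: dict[str, float]) -> bool:
    """Apply the >90% accuracy and <5% false negative thresholds."""
    return scores["accuracy"] > 0.90 and scores["false_negative_rate"] < 0.05
```

Accuracy alone can mislead when most alerts are benign, which is why the false negative rate is checked separately: a model that closes everything can score high on accuracy while missing every real threat.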

📅 Phase 3: Activate and Measure (Days 46–90)

- Transition to live operation: AI triages in real-time with analyst oversight

- Expand to full alert coverage: bring all data sources online

- Enable agentic containment with human approval gates for high-risk actions

- Integrate compliance evidence generation: auto-map detection outputs to SOC 2, ISO 27001, and HIPAA controls

- Begin proactive threat hunting: let AI surface anomalies while analysts investigate edge cases

✅ Success Criteria: 80%+ triage automated, MTTR reduced 50%+, zero missed critical incidents.

⚠️ Common Pitfalls That Derail Timelines

| Pitfall | Why It Happens | How to Avoid |

|---|---|---|

| Automating everything at once | Overconfidence after initial success | Start with 1 alert category, expand weekly |

| Skipping governance | Urgency overrides process | Define approval gates before deployment |

| Ignoring analyst feedback loops | AI tuning treated as one-time setup | Schedule weekly calibration reviews for 90 days |

| Not measuring baselines | Can’t prove ROI without “before” data | Document MTTD/MTTR/FP rate on Day 1 |

⏰ The UnderDefense Fast Track

We condense this into a 30-day turnkey deployment with a dedicated onboarding team. Those 30 days go into custom detection tuning: building customized detections so that only confirmed, validated offenses reach you, cutting 99% of noise.


Q9. What Governance, Risk, and Compliance Guardrails Does AI SOC Automation Require?

The fastest AI SOC is worthless if it can’t prove what it did and why to your auditor. Governance is not a nice-to-have bolt-on. It is the structural foundation that determines whether your AI SOC protects you or creates new liability.

⚠️ Three Failure Modes You Can’t Ignore

Automated Response to False Positives (Outage Risk): AI auto-isolating a production server based on a misclassified alert can cause more damage than the threat itself. Mitigation: confidence thresholds plus human approval gates for high-impact actions.

AI Hallucinations in Investigation Summaries: LLM-generated reports may fabricate IOCs, misattribute attack sources, or invent log entries that don’t exist. This is not theoretical. It happens. Mitigation: ground all AI outputs in raw evidence with source citations; require analyst verification for investigation conclusions.

Compliance Liability of Autonomous Actions: If AI auto-revokes credentials for a VIP during a board meeting based on a false positive, who is liable? Mitigation: documented governance policies, audit trails for every automated action, and clear escalation paths.
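The hallucination mitigation, grounding outputs in raw evidence, can be partly automated. As a simple illustration, the sketch below flags any IPv4 address that an AI-written summary cites but that appears nowhere in the raw logs. It is a narrow check under stated assumptions (IPv4 only, plain-text logs, a hypothetical function name), not a complete grounding system.

```python
import re

# Loose IPv4 matcher; it does not validate octet ranges.
IPV4_PATTERN = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def ungrounded_ips(summary: str, raw_log_lines: list[str]) -> set[str]:
    """Return IPs cited in the summary that appear in none of the raw logs."""
    cited = set(IPV4_PATTERN.findall(summary))
    evidence: set[str] = set()
    for line in raw_log_lines:
        evidence.update(IPV4_PATTERN.findall(line))
    return cited - evidence


summary = "Beaconing from 10.0.0.5 to 203.0.113.9 and 198.51.100.77."
logs = ["conn src=10.0.0.5 dst=203.0.113.9 port=443"]
suspect = ungrounded_ips(summary, logs)  # {'198.51.100.77'}
```

A non-empty result is a reason to block the summary from reaching a ticket until an analyst has reviewed it. The same pattern extends to hashes, domains, and usernames.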

🛡️ Agentic AI Risk Matrix

| Autonomous SOC Action | Risk Level | Governance Requirement |
|---|---|---|
| Auto-close known false positives | ✅ Low | AI autonomous, logged |
| Auto-enrich and correlate alerts | ✅ Low | AI autonomous, logged |
| User verification via ChatOps | ⚠️ Medium | AI-initiated, human-confirmed |
| Endpoint isolation | ❌ High | Human approval required, 15-min SLA |
| Credential revocation | ❌ High | Human approval required, documented justification |
| Network segment quarantine | ❌ Critical | CISO/IR lead approval, incident bridge convened |
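A matrix like this is easiest to enforce when it lives in code rather than in a policy PDF. The following is a minimal sketch under stated assumptions: the action identifiers, the `decide` function, and the 0.95 confidence threshold are all hypothetical, chosen only to illustrate how confidence thresholds and approval gates could combine.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Gate(Enum):
    AUTONOMOUS = "AI autonomous, logged"
    HUMAN_CONFIRMED = "AI-initiated, human-confirmed"
    HUMAN_APPROVAL = "Human approval required"
    EXECUTIVE_APPROVAL = "CISO/IR lead approval"


# Hypothetical action identifiers, one per row of the risk matrix.
POLICY = {
    "close_known_false_positive": Gate.AUTONOMOUS,
    "enrich_and_correlate": Gate.AUTONOMOUS,
    "chatops_user_verification": Gate.HUMAN_CONFIRMED,
    "isolate_endpoint": Gate.HUMAN_APPROVAL,
    "revoke_credentials": Gate.HUMAN_APPROVAL,
    "quarantine_network_segment": Gate.EXECUTIVE_APPROVAL,
}

MIN_CONFIDENCE = 0.95  # Assumed threshold; tune it against your own validated accuracy.


@dataclass
class Decision:
    execute_now: bool
    reason: str


def decide(action: str, confidence: float, approved_by: Optional[str] = None) -> Decision:
    """Apply the matrix: unknown or low-confidence actions never run unattended."""
    gate = POLICY.get(action)
    if gate is None:
        return Decision(False, "unknown action: escalate to a human")
    if confidence < MIN_CONFIDENCE:
        return Decision(False, "confidence below threshold: escalate to a human")
    if gate is Gate.AUTONOMOUS:
        return Decision(True, gate.value)
    if approved_by is None:
        return Decision(False, f"waiting: {gate.value}")
    return Decision(True, f"{gate.value}; approved by {approved_by}")


if __name__ == "__main__":
    print(decide("enrich_and_correlate", 0.99))
    print(decide("isolate_endpoint", 0.99))
    print(decide("isolate_endpoint", 0.99, approved_by="ir-lead"))
```

The default-deny branch for unknown actions is a deliberate design choice in this sketch: anything not listed in the matrix goes to a human instead of running.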

⏰ Building Trust Incrementally

Don’t flip the switch from “fully manual” to “fully autonomous” overnight. The governance framework that actually works in production:

- Days 1-30: AI runs in shadow/recommend-only mode. Every decision logged, none executed.

- Days 31-60: Auto-execute low-risk actions after validated accuracy exceeds 95%.

- Days 61-90: Expand to medium-risk with human oversight; high-risk actions always require human approval.

Trust is earned through measured accuracy, not vendor promises.
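The 30/60/90 schedule above can be expressed as a small gating function. This is a sketch under assumptions, not a prescribed implementation: the mode names are hypothetical, the 95% accuracy gate is applied to every automated tier (the schedule states it only for low-risk actions), and the Day 61-90 rules are assumed to persist after Day 90.

```python
ACCURACY_GATE = 0.95  # "validated accuracy exceeds 95%" is read as strictly greater than 0.95


def execution_mode(day: int, risk: str, validated_accuracy: float) -> str:
    """Map rollout day and risk tier to one of four hypothetical execution modes.

    Returns "recommend_only", "auto", "human_oversight", or "human_approval".
    """
    if day < 1:
        raise ValueError("day counts from 1")
    if risk not in {"low", "medium", "high"}:
        raise ValueError(f"unknown risk tier: {risk}")

    if day <= 30:
        return "recommend_only"      # shadow mode: every decision logged, none executed
    if risk == "high":
        return "human_approval"      # high-risk actions always require a human
    if validated_accuracy <= ACCURACY_GATE:
        return "recommend_only"      # accuracy not yet proven, stay in shadow mode
    if risk == "low":
        return "auto"
    # Medium risk opens up only from Day 61, and then with a human watching.
    return "human_oversight" if day >= 61 else "recommend_only"


if __name__ == "__main__":
    for day, risk in [(10, "low"), (45, "low"), (45, "medium"), (75, "medium"), (75, "high")]:
        print(day, risk, execution_mode(day, risk, validated_accuracy=0.97))
```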

📋 Regulatory Drivers Accelerating AI SOC Governance

| Framework | Key Requirement | AI SOC Implication |
|---|---|---|
| CIRCIA (2026) | Report cyber incidents within 72 hrs, ransomware payments within 24 hrs | AI SOC provides the detection speed and evidence documentation required to meet these windows |
| SEC Cybersecurity Disclosure | Material incidents disclosed via Form 8-K within 4 business days of the materiality determination | AI investigation summaries accelerate materiality assessment |
| NIST CSF 2.0 | New “Govern” function covers cybersecurity risk governance, roles, policy, and oversight | Natural home for AI SOC decision-making policies |
| EU AI Act Article 14 | High-risk AI requires human oversight, transparency, and audit trails | Applies to AI SOC containment actions affecting production systems |
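The reporting windows in the table translate into concrete deadlines once an incident clock starts. The sketch below is illustrative only: the function and key names are hypothetical, the clock-start events are assumptions to confirm with counsel, and business days skip weekends but not public holidays.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def add_business_days(start: datetime, days: int) -> datetime:
    """Advance by whole business days, skipping Saturdays and Sundays only."""
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            remaining -= 1
    return current


def reporting_deadlines(
    incident_believed_at: datetime,
    materiality_determined_at: Optional[datetime] = None,
    ransom_paid_at: Optional[datetime] = None,
) -> dict:
    """Compute the latest filing time for each window that applies."""
    deadlines = {"circia_incident_report": incident_believed_at + timedelta(hours=72)}
    if ransom_paid_at is not None:
        deadlines["circia_ransom_report"] = ransom_paid_at + timedelta(hours=24)
    if materiality_determined_at is not None:
        deadlines["sec_form_8k"] = add_business_days(materiality_determined_at, 4)
    return deadlines


if __name__ == "__main__":
    detected = datetime(2026, 1, 8, 9, 0, tzinfo=timezone.utc)  # a Thursday
    for name, due in reporting_deadlines(detected, materiality_determined_at=detected).items():
        print(name, due.isoformat())
```

Feeding these deadlines into the incident ticket at creation time keeps the clock visible to the people who have to file.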

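Compliance mapping at the control level: none of these controls names AI, but each one lands on it in practice. SOC 2 CC7.2/CC7.3 (monitoring and security event evaluation) means logging all AI decisions. ISO 27001 A.16 (incident management) means documenting AI’s role in incident handling. HIPAA §164.308 (administrative safeguards) means justifying automated actions on PHI systems.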
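One way to make "audit trails for every automated action" tangible is a tamper-evident log record tagged with the controls it supports. The sketch below is an assumption-heavy illustration: the record layout, the tag strings, and the hash-chaining scheme are hypothetical and are not taken from any framework text or product.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical tag strings mirroring the control mapping above.
CONTROL_TAGS = ["SOC2:CC7.2", "SOC2:CC7.3", "ISO27001:A.16", "HIPAA:164.308"]


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def audit_record(action: str, actor: str, justification: str,
                 evidence_refs: list, prev_hash: str) -> dict:
    """Build one log entry that links to the previous entry's hash."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "actor": actor,                  # the AI agent or the approving human
        "justification": justification,
        "evidence_refs": list(evidence_refs),
        "controls": list(CONTROL_TAGS),
        "prev_hash": prev_hash,
    }
    return {**body, "hash": _digest(body)}


def chain_is_intact(records: list) -> bool:
    """Verify each record's own hash and its link to the record before it."""
    prev = ""
    for record in records:
        body = {key: value for key, value in record.items() if key != "hash"}
        if record["prev_hash"] != prev or record["hash"] != _digest(body):
            return False
        prev = record["hash"]
    return True


if __name__ == "__main__":
    first = audit_record("isolate_endpoint", "analyst:jdoe", "confirmed C2 beacon", ["alert-123"], "")
    second = audit_record("revoke_credentials", "ai-agent", "token theft", ["alert-124"], first["hash"])
    print(chain_is_intact([first, second]))
```

Chaining hashes means an edited or deleted entry breaks verification for everything after it, which is the property an auditor usually cares about.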

At UnderDefense, every investigative step is observable and auditable. On-prem deployment keeps logs in your data lake. Detection Logic as Code provides version-controlled governance. And UnderDefense MAXI Compliance auto-generates audit evidence mapped to regulatory controls, because passing an audit shouldn’t require a 3-month scramble.

Q10. What AI SOC Automation Strategies Work Best for Mid-Market and Lean Teams?

You’re the IT Director at a 1,200-person healthcare company. You have 3 security analysts, a Splunk instance nobody fully understands, CrowdStrike on endpoints, and Okta for identity. Last night at 2:47 AM, your phone buzzed with the 14th “critical” alert this week. You spent 45 minutes investigating: another false positive. Your best analyst just gave notice. The board wants SOC 2 compliance by Q3. Enterprise AI SOC platforms quote $500K+ and require a team you don’t have.

❌ Why Mid-Market Gets Squeezed

Mid-market organizations (500-5,000 employees) face the same threat landscape as Fortune 500 companies but with 10-20% of the budget and staff. Most AI SOC platforms are designed for 50-person SOC teams. Legacy SOAR requires dedicated playbook engineering expertise that lean teams simply don’t have.

The hidden costs are brutal:

- Unfilled analyst positions: $120K-$180K each + $40K-$60K in recruiting and training

- 70% of critical alerts go uninvestigated due to volume

- Average mid-market breach cost: $3.3M (IBM)

- Compliance failures delaying enterprise deals by 3-6 months

- Analyst turnover every 18 months, just as they become effective

✅ Where to Start: Three Use Cases for Maximum Impact

For resource-constrained teams, automate in this order:

- Alert triage: highest volume, lowest complexity, biggest time savings

- Phishing response: most frequent incident type, easily automated end-to-end with ChatOps verification

- Compliance evidence collection: board-visible, audit-critical, and immediately reduces manual prep time

🎯 What “Good” Looks Like

The right system correlates alerts across all your tools, verifies suspicious activity directly with users, and only escalates confirmed threats requiring your decision. You’d wake up to “Incident contained at 2:52 AM, here’s what happened and what we did,” not 47 unread alerts to triage.
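The correlate, verify, escalate flow described above can be sketched in a few lines. Everything here is hypothetical: the `Alert` shape, the time-window grouping, and the escalation rule (two or more distinct tools agree and the user has not confirmed the activity as benign) are assumptions for illustration, not a description of how any product works.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Alert:
    source: str        # e.g. "splunk", "crowdstrike", "okta"
    entity: str        # the user or host the alert is about
    seen_at: datetime


def correlate(alerts: list[Alert], window: timedelta) -> list[list[Alert]]:
    """Group alerts that share an entity and arrive within `window` of the previous one."""
    groups: list[list[Alert]] = []
    latest_group: dict[str, list[Alert]] = {}
    for alert in sorted(alerts, key=lambda a: a.seen_at):
        group = latest_group.get(alert.entity)
        if group is not None and alert.seen_at - group[-1].seen_at <= window:
            group.append(alert)
        else:
            group = [alert]
            groups.append(group)
            latest_group[alert.entity] = group
    return groups


def should_escalate(group: list[Alert], user_confirmed_benign: bool) -> bool:
    """Assumed rule: escalate only multi-tool incidents the user did not vouch for."""
    distinct_sources = {alert.source for alert in group}
    return len(distinct_sources) >= 2 and not user_confirmed_benign


if __name__ == "__main__":
    night = datetime(2026, 1, 10, 2, 40)
    alerts = [
        Alert("okta", "jdoe", night),
        Alert("crowdstrike", "jdoe", night + timedelta(minutes=7)),
        Alert("splunk", "asmith", night + timedelta(minutes=10)),
    ]
    for group in correlate(alerts, window=timedelta(minutes=15)):
        print(group[0].entity, len(group), should_escalate(group, user_confirmed_benign=False))
```

In this toy run, three raw alerts collapse into two candidate incidents, and only the one corroborated by two tools would reach a human.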

“UnderDefense is surprisingly affordable considering the level of protection we get. Their proactive threat hunting and rapid response have saved us from incidents that could have been incredibly costly.”

— Verified User, Program Development UnderDefense – G2 Verified Review

“Underdefense is a great choice for teams like ours that are short on resources. It automates many tasks, plus, with 24/7 monitoring, we know we’re always protected.”

— Inga M., CEO UnderDefense – G2 Verified Review

💰 UnderDefense’s Mid-Market Approach

We built UnderDefense MAXI specifically for teams that can’t afford enterprise complexity but can’t afford to ignore enterprise-grade threats:

- Turnkey 30-day onboarding, no SOAR architects needed

- Works with existing Splunk/Sentinel/CrowdStrike, zero rip-and-replace

- Published $11-15/endpoint/month vs. enterprise “contact sales” opacity

- Concierge analyst team learns your org: VIPs, critical assets, technical users

- MAXI Compliance included: SOC 2, ISO 27001, HIPAA evidence auto-generated

- Scales from 3-analyst team to full 24/7 without hiring

The proof: 830% ROI over 3 years. Zero ransomware across all MDR clients in 6 years. 113% net dollar retention because mid-market clients expand as they see value, not because they’re locked in. 1% customer churn.

“They’re basically my security team since I can’t build one myself. They respond to problems so quickly.”

— Verified User UnderDefense – G2 Verified Review

Q11. Should You Build, Buy, or Partner? Evaluating Managed AI SOC Services

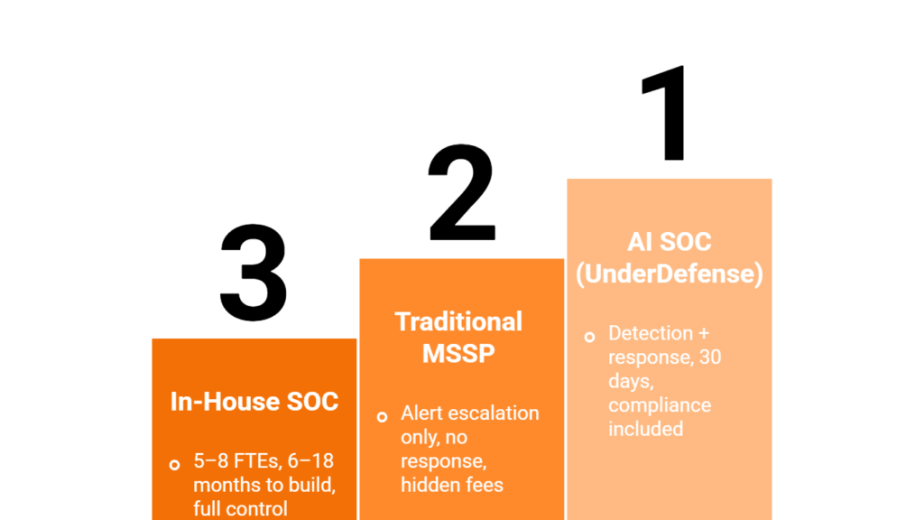

There are three paths to AI SOC automation: build internally with open-source tools, buy a commercial platform and run it yourself, or partner with a managed AI SOC provider. Each suits a different organizational profile. The right choice depends on your team size, existing stack investments, and whether you need 24/7 coverage without hiring.

🔍 What Separates the Three Paths

Build (open-source): Maximum control, zero licensing cost, requires 2+ dedicated engineers, 6-12 month deployment timeline, no SLA guarantee, compliance is entirely DIY.

Buy (commercial platform): Faster deployment, vendor support included, $50K-$600K+/yr, still requires internal team to operate 24/7.

Partner (managed AI SOC / MDR): Turnkey 24/7 coverage, vendor-agnostic integration, fastest time-to-value (30 days), predictable per-endpoint pricing, but requires trust in an external partner.

🎯 Which Path Fits Your Team

For most mid-market organizations with 2-10 security analysts, the partner path delivers the fastest ROI because it eliminates the hiring, training, and 24/7 coverage gap simultaneously. For enterprise teams with 15+ analysts and dedicated engineering, the buy path preserves maximum control. For budget-zero teams with strong engineering talent, the build path is viable but slow.

If you’re evaluating managed AI SOC providers, the comparison below ranks the top SOC-as-a-Service options by integration depth, response capability, pricing transparency, and mid-market fit.

This analysis is based on documented response times, G2 reviews, published pricing, and operational outcomes across 500+ MDR deployments.

Q12. FAQ: AI SOC Automation Questions Security Leaders Ask

What is the difference between AI SOC automation and traditional SOAR?

Traditional SOAR executes pre-written playbooks using rigid if/then logic. It can only handle scenarios someone anticipated and coded for. AI SOC automation uses machine learning and agentic AI to reason across data, adapt to novel patterns, and make decisions without pre-built playbooks for every scenario. The detailed differentiation table in Q3 above breaks this down across seven dimensions.

How long does it take to implement AI SOC automation?

Implementation ranges from 30 days to 12 months depending on the path: building from open-source takes 6-12 months, buying a commercial platform takes 60-90 days with internal engineering, and partnering with a managed provider like UnderDefense delivers turnkey deployment in 30 days with custom detection tuning included.

Can AI SOC automation work with my existing SIEM?

Yes. Modern AI SOC platforms are designed to be SIEM-agnostic, operating as a layer on top of whatever you already own: Splunk, Microsoft Sentinel, Google Chronicle, or Elastic. The key question is whether the platform requires you to replace your SIEM or integrates with it. UnderDefense MAXI integrates with 250+ tools without forcing replacement.

Is AI SOC automation worth it for small security teams?

It’s actually most impactful for teams of 2-5 analysts who cannot sustain 24/7 coverage manually. A 3-person team physically can’t monitor alerts around the clock, and AI automation fills that gap without hiring 6 additional analysts at $120K-$180K each.

Will AI replace SOC analysts?

No. AI replaces repetitive tasks: alert triage, enrichment, correlation, false positive closure. The analyst role evolves from “alert processor” to AI supervisor, threat hunter, and detection engineer. Teams that embrace this shift upskill their analysts into higher-value work.

What does AI SOC automation cost?

Costs range from $0 licensing (open-source with $150K-$250K in FTEs) to $600K+/yr for enterprise agentic platforms. UnderDefense publishes transparent pricing at $11-15/endpoint/month, which includes the platform, 24/7 analyst team, and compliance evidence generation.

What compliance frameworks require AI SOC governance?

SOC 2 (CC7.2/CC7.3), ISO 27001 (A.16), HIPAA (§164.308), the EU AI Act (Article 14), and CIRCIA (2026) all have specific implications for how AI operates within security workflows. The detailed regulatory mapping in Q9 covers each framework’s requirements.

How big is the AI SOC automation market?

The SOC automation market is projected to grow from $9.74B to $26.25B by 2033, reflecting accelerating investment across all verticals as organizations recognize that manual SOC operations cannot scale to match the threat landscape.

Will AI handle 90%+ of Tier 1 work by end of 2026?

Leading agentic platforms already automate 85-90% of Tier 1 triage today. Achieving 90%+ consistently is realistic for mature implementations by late 2026, but only with proper detection tuning, governance frameworks, and feedback loops between AI and human analysts.

What does the future human-AI SOC team look like?

The model converges toward “AI as the analyst workforce, humans as the strategic brain.” AI handles volume at machine speed; humans handle judgment, business context, novel threats, and adversary engagement. Organizations that establish governance frameworks and vendor-agnostic architectures now will lead. Those that wait will be forced to catch up under pressure, and catching up in cybersecurity means catching up after an incident.

1. What is AI SOC automation and how does it differ from traditional SOAR?

AI SOC automation uses artificial intelligence, including agentic AI that reasons, plans, and acts autonomously, to automate security operations center workflows like alert triage, investigation, enrichment, and response. Traditional SOAR relies on rigid if/then playbook logic that only handles scenarios someone anticipated and coded for.

The key differences:

- SOAR requires manual playbook maintenance and breaks on novel attack patterns. Agentic AI dynamically adjusts tactics mid-investigation.

- SOAR demands dedicated playbook engineers. AI SOC systems self-improve through feedback loops.

- SOAR fails on unknown threats. Agentic AI reasons from first principles across your full data set.

We built UnderDefense MAXI as an agentic AI SOC that operates on top of your existing SIEM/XDR without forcing replacement, combining autonomous investigation with human analyst oversight for high-risk actions. The distinction matters because bolting a ChatGPT wrapper onto your SIEM dashboard is AI in the SOC, while a true AI SOC is an architecture where intelligence is the operating layer from day one.

2. Which SOC workflows deliver the highest ROI when automated with AI?

Not all automation delivers equal returns. Based on our experience serving 500+ MDR clients and processing 6TB of security telemetry daily, we recommend prioritizing these workflows:

- Alert triage and prioritization (highest ROI): Reduces time per alert from 45 minutes to under 2 minutes and cuts false positive rates from 60-80% to under 10%.

- Phishing investigation and response: AI auto-analyzes headers, detonates URLs, verifies with affected users via ChatOps, and auto-resets compromised credentials, saving 85% per incident.

- Incident response and containment: AI-orchestrated containment with human approval gates for high-risk actions cuts MTTR by 60-80%.

- Threat intelligence enrichment: Automated multi-source enrichment reduces analyst effort by 95%.

The biggest mistake we see is trying to automate everything at once. Start with alert triage because 80% of it is pattern matching against known-good baselines. We cover all six core SOC automation workflows through our agentic pipeline, with ChatOps user verification resolving 80% of behavioral alerts by simply asking the person involved.

3. How do you assess your organization's AI SOC maturity level?

We developed a 5-level AI SOC maturity model based on patterns across 500+ environments:

- Level 1 (Manual/Script-Based): Less than 10% automation, MTTD over 24 hours, fully reactive.

- Level 2 (SOAR Playbook-Driven): 20-40% automation, static playbooks, requires dedicated SOAR engineer.

- Level 3 (AI-Assisted Copilot): 40-60% automation, ML models assist triage, analysts approve all actions.

- Level 4 (Agentic Human-in-the-Loop): 70-85% automation, multi-agent AI reasons across tools, humans approve high-risk only.

- Level 5 (Fully Autonomous): 85-95% automation, AI handles end-to-end for known threats, humans hunt novel threats.

Most mid-market organizations sit at Level 2 or early Level 3. Score yourself using our 10-point self-assessment checklist: if you score 0-3, you need a force multiplier immediately. We built UnderDefense MAXI to move organizations from Level 2-3 to Level 4 within 30 days, combining agentic AI investigation, ChatOps verification, and 250+ vendor-agnostic integrations.
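For a rough first pass, the automation percentages in the maturity model can be turned into a lookup. This sketch considers automation share only and ignores the other traits in each level (MTTD, approval model, staffing). The published ranges overlap at 40% and 85% and leave gaps at 10-20% and 60-70%, so the code assumes a simple rule: assign the highest level whose lower bound is met. The function name is hypothetical.

```python
# Lower bound of each level's automation range, taken from the model above.
# Level 1 is treated as the catch-all floor for anything below 20%.
LEVEL_FLOORS = [
    (85, 5, "Fully Autonomous"),
    (70, 4, "Agentic Human-in-the-Loop"),
    (40, 3, "AI-Assisted Copilot"),
    (20, 2, "SOAR Playbook-Driven"),
    (0, 1, "Manual/Script-Based"),
]


def maturity_level(automation_pct: float) -> tuple[int, str]:
    """Return (level, name) for a given share of SOC work that is automated."""
    if not 0 <= automation_pct <= 100:
        raise ValueError("automation_pct must be between 0 and 100")
    for floor, level, name in LEVEL_FLOORS:
        if automation_pct >= floor:
            return level, name
    raise AssertionError("unreachable: the 0% floor matches every valid input")


if __name__ == "__main__":
    for pct in (5, 30, 50, 75, 90):
        print(pct, maturity_level(pct))
```

Treat the result as a conversation starter; an organization with 75% automation but no approval gates is not operating at Level 4 in any meaningful sense.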

4. How much does AI SOC automation cost, and what ROI should you expect?

Costs vary significantly by approach:

- Open-source (Shuffle + TheHive + Wazuh): $0 licensing but $150K-$250K/yr in FTEs and maintenance.

- Mid-market AI SOC: $50K-$200K/yr platform plus integration and tuning costs.

- Enterprise agentic platforms: $200K-$600K+/yr plus professional services.

The right ROI formula factors in analyst hours saved, incidents prevented, compliance penalty avoidance, and reduced turnover, not just license cost. For a mid-market example with 1,000 endpoints and a 5-person team: total annual benefits reach $1,278,900 against $200,000 in costs, yielding 539% ROI.

We publish transparent pricing at $11-15/endpoint/month, which includes the platform, 24/7 analyst team, and compliance evidence generation. Our documented results show 830% ROI over 3 years. Use the UnderDefense SOC Cost Calculator to model your specific scenario. Decision threshold: if projected ROI exceeds 200%, it is a clear go.
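The arithmetic behind these figures is simple enough to check yourself. The sketch below assumes the conventional ROI definition, (benefits - costs) / costs, which reproduces the 539% example above; the function names are hypothetical and the 200% threshold is the decision rule quoted in the text.

```python
GO_THRESHOLD_PCT = 200.0  # decision threshold quoted above


def roi_percent(annual_benefits: float, annual_costs: float) -> float:
    """Return ROI as a percentage: net benefit divided by cost."""
    if annual_costs <= 0:
        raise ValueError("annual_costs must be positive")
    return (annual_benefits - annual_costs) / annual_costs * 100


def annual_endpoint_cost(endpoints: int, price_per_endpoint_month: float) -> float:
    """Yearly spend for per-endpoint, per-month pricing."""
    return endpoints * price_per_endpoint_month * 12


if __name__ == "__main__":
    roi = roi_percent(annual_benefits=1_278_900, annual_costs=200_000)
    print(f"ROI: {roi:.0f}%  clear go: {roi > GO_THRESHOLD_PCT}")

    # Published range of $11-15 per endpoint per month, at 1,000 endpoints.
    low = annual_endpoint_cost(1_000, 11)
    high = annual_endpoint_cost(1_000, 15)
    print(f"Annual cost range: ${low:,.0f} to ${high:,.0f}")
```

For the 1,000-endpoint example, the per-endpoint range works out to $132,000 to $180,000 per year, which you can compare directly against the $200,000 cost figure used in the ROI calculation.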

5. What governance and compliance guardrails does AI SOC automation require?

Governance is the structural foundation that determines whether your AI SOC protects you or creates new liability. Three critical failure modes demand attention:

- Automated response to false positives: AI auto-isolating a production server on a misclassified alert can cause more damage than the threat. Mitigation: confidence thresholds plus human approval gates.

- AI hallucinations in investigation summaries: LLMs may fabricate IOCs or invent log entries. Mitigation: ground all outputs in raw evidence with source citations.

- Compliance liability of autonomous actions: If AI revokes VIP credentials during a board meeting, who is liable? Mitigation: documented policies and audit trails.

Key regulatory frameworks driving AI SOC governance include CIRCIA (2026), SEC cybersecurity disclosure, NIST CSF 2.0, and EU AI Act Article 14. SOC 2 CC7.2/CC7.3 requires logging all AI decisions, and ISO 27001 A.16 requires documenting AI’s role in incident management. We recommend a phased trust-building approach: shadow mode for Days 1-30, low-risk auto-execution for Days 31-60, and expanding to medium-risk with human oversight for Days 61-90.

6. Should mid-market teams build, buy, or partner for AI SOC automation?

The right path depends on your team size, existing stack investments, and 24/7 coverage requirements:

- Build (open-source): Maximum control, zero licensing, but requires 2+ dedicated engineers, 6-12 month timeline, no SLA, and compliance is entirely DIY.

- Buy (commercial platform): Faster deployment with vendor support, $50K-$600K+/yr, still requires an internal team to operate 24/7.

- Partner (managed AI SOC/MDR): Turnkey 24/7 coverage, vendor-agnostic integration, fastest time-to-value at 30 days, predictable per-endpoint pricing.

For most mid-market organizations with 2-10 security analysts, the partner path delivers the fastest ROI because it eliminates the hiring, training, and 24/7 coverage gap simultaneously. Mid-market teams face the same threats as Fortune 500 companies but with 10-20% of the budget. We built UnderDefense MAXI for teams that cannot afford enterprise complexity, with turnkey 30-day onboarding, zero rip-and-replace, and published pricing that includes the platform, analysts, and compliance evidence generation.

7. How long does it take to implement AI SOC automation from scratch?

Implementation timelines vary dramatically by path:

- Open-source build: 6-12 months with 2+ dedicated engineers.

- Commercial platform (self-managed): 60-90 days with internal engineering resources.

- Managed provider partnership: 30 days with turnkey deployment and custom detection tuning.

We follow a structured 90-day roadmap across hundreds of deployments:

- Phase 1 (Days 1-15): Assess and baseline. Audit your current stack, map alert volumes, establish MTTD/MTTR baselines, and define governance policies.

- Phase 2 (Days 16-45): Build and configure. Deploy in shadow mode, integrate priority data sources, configure ChatOps channels, and begin detection tuning.

- Phase 3 (Days 46-90): Activate and measure. Transition to live operation, expand to full alert coverage, and enable agentic containment with human approval gates.

Common pitfalls include automating everything at once, skipping governance, and ignoring analyst feedback loops. We condense this into a 30-day turnkey deployment with 99% noise reduction from day one.

8. Will AI SOC automation replace human SOC analysts?

No. AI replaces repetitive tasks, not analysts. The 70/30 rule applies: AI handles roughly 70% of routine SOC work today, including alert triage, enrichment, correlation, known-pattern detection, false positive closure, and compliance evidence collection.

The remaining 30% requires human judgment that no model can reliably replicate:

- Novel attack pattern recognition

- Business impact assessment (do we shut down this production server?)

- Strategic response decisions involving cross-organizational communication

- Legal and regulatory judgment calls

- Adversary intent analysis

The analyst role evolves from “alert processor” to AI supervisor, threat hunter, and detection engineer. Teams that embrace this shift upskill their analysts into higher-value work. The future model converges toward “AI as the analyst workforce, humans as the strategic brain.” At UnderDefense, our AI SOC with Human Ally approach means AI collects context and you decide, preserving the judgment layer that separates noise reduction from actual security.

The post AI SOC Automation in 2026: Agentic Triage, Maturity Model, ROI & Mid-Market Implementation Guide appeared first on UnderDefense.