Q1: Both Traditional and AI SOCs Pass Audits, So What’s the Real Compliance Difference?

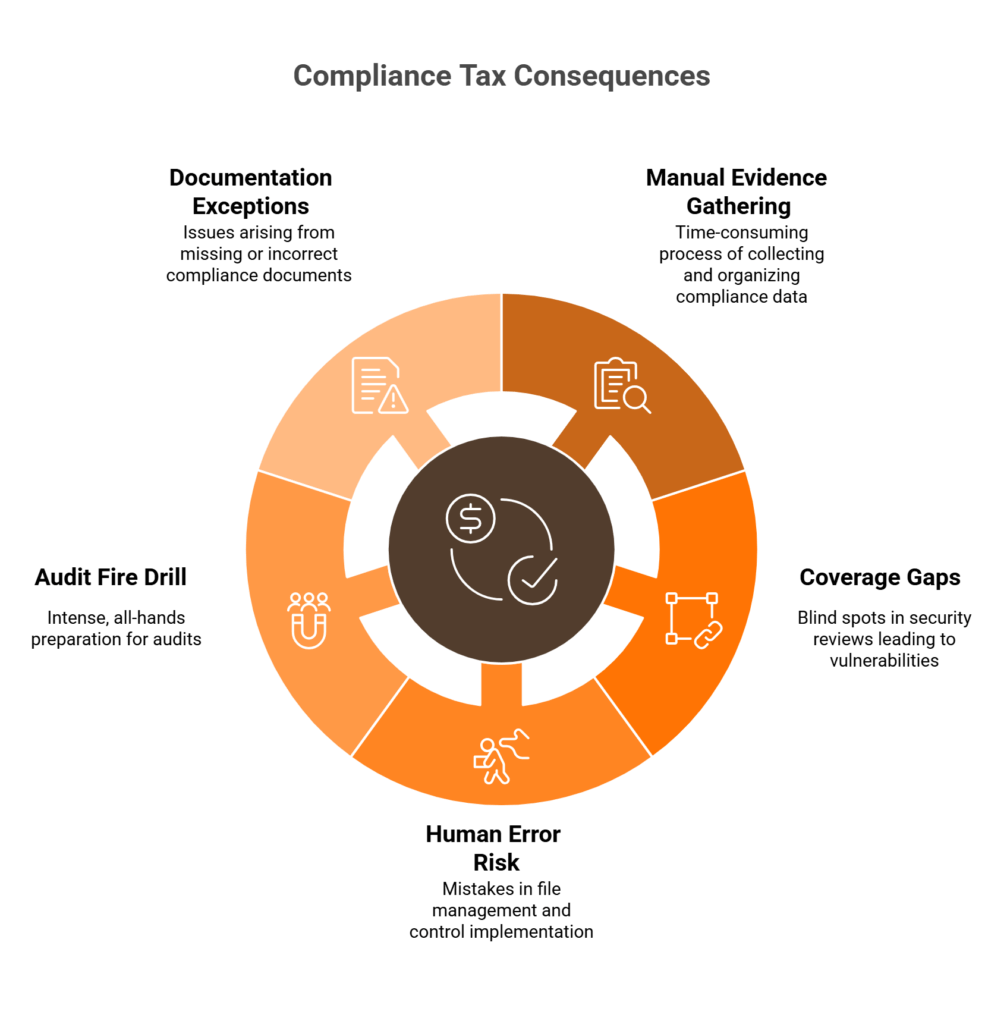

Auditors don’t care whether your SOC runs on AI or human-only workflows. They care about evidence quality, control effectiveness, and continuous adherence. Both SOC types pass audits. Both generate valid compliance artifacts. So the real question is: given that both work, where does an AI SOC deliver more value, and how much more? The answer starts with a hidden cost most security leaders don’t talk about openly, what I call the “compliance tax”: the cumulative drag of maintaining compliance through manual, periodic processes, from spreadsheets and screenshots to the all-hands fire drills before every audit cycle.

⏰ The Hidden Compliance Burden

Traditional SOC compliance workflows carry a burden that rarely shows up on anyone’s dashboard. Manual evidence gathering consumes 300–500 hours per audit cycle. Periodic log checks create coverage gaps between review windows. Screenshots and spreadsheets introduce human error: a misnamed file, a timestamp from the wrong timezone, a control that worked fine in Month 1 but slipped quietly in Month 4. Then comes the “audit fire drill”: four to six weeks of all-hands prep that pulls analysts away from actual security operations. Most SOC 2 Type 2 reports contain exceptions, and the pattern is consistent. The majority are driven not by missing controls, but by missing documentation: evidence gaps from periodic collection where nobody was watching between review periods.

✅ The AI SOC as a Compliance Value Multiplier

An AI SOC doesn’t replace compliance effort. It converts compliance from a periodic project into an operational byproduct. Continuous monitoring generates audit evidence as a side effect of daily security operations. Automated evidence collection eliminates the screenshot-and-spreadsheet cycle entirely. Multi-framework mapping means one detection event simultaneously satisfies SOC 2, ISO 27001, HIPAA, and PCI-DSS requirements. This is the shift from “compliance as a tax” to “compliance as a natural output of doing security well.” And that’s not theory. It’s operational reality that changes how your team spends its time.

💰 How We Built This at UnderDefense

This is exactly the philosophy behind how we built UnderDefense MAXI. Our AI SOC + Human Ally model generates compliance artifacts automatically, not from a standalone checklist tool bolted onto your stack, but from an actual security operations platform. 24/7 monitoring produces continuous evidence streams. Vendor-agnostic integration across 250+ tools creates unified audit trails. Automated real-time mapping of security telemetry to compliance controls gives auditors verifiable evidence, not theoretical policies. Our 2-minute alert-to-triage and 15-minute escalation SLAs aren’t just performance metrics. They’re auditable, documented evidence of operational responsiveness.

⭐ Proof in the Numbers

The documented 830% ROI over three years and zero ransomware cases across all MDR customers for six consecutive years aren’t just security outcomes. They’re compliance evidence. When the Full-Spectrum Security case study shows $650K in losses avoided, that’s AI SOC operations producing audit-ready artifacts as a byproduct of doing its actual job: keeping organizations safe. People, process, and tools working together, where compliance is something that happens because security operations run well, not something you scramble to prove every six months.

Q2: What Is Continuous Monitoring, and Why Does It Beat Periodic Log Checks for Every Compliance Framework?

Continuous monitoring, as defined by NIST SP 800-137, means security controls and organizational risks are “assessed and analyzed at a frequency sufficient to support risk-based security decisions to adequately protect organization information.” In operational terms, this translates to real-time or near-real-time assessment of security controls, configuration states, and access patterns. Contrast that with periodic reviews: weekly log pulls, monthly access reviews, quarterly penetration tests. Periodic monitoring gives you snapshots at intervals. Continuous monitoring gives you an unbroken evidence stream across the entire observation period.

❌ The Timeline Gap Problem

Here’s where periodic monitoring breaks down in practice, a step-by-step failure mode that plays out in real audits:

- Day 1: Security event occurs

- Day 30: Log reviewed during scheduled monthly check

- Day 31: Exception discovered

- Day 45: Remediation finally starts

- Audit result: Auditor flags a 29-day evidence gap

Now compare that with continuous monitoring:

- Event occurs → Detected in real-time

- Evidence auto-captured → Control validated instantly

- No gap between occurrence and documentation

This is why most SOC 2 Type 2 exceptions stem from monitoring lapses between review periods: the controls exist, but the evidence from the windows nobody was watching does not.
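To put numbers on the timeline gap, here is a minimal Python sketch that computes how long an event waits before a scheduled review documents it. It assumes reviews run on a fixed cadence (days 30, 60, 90, and so on for a monthly check) and models continuous monitoring as a review interval of 0; the function name `evidence_gap_days` is hypothetical and not part of any product.

```python
import math


def evidence_gap_days(event_day, review_interval_days):
    """Days between an event and the next scheduled review that documents it.

    Assumes reviews run on days interval, 2*interval, ...; an interval of 0
    models continuous monitoring, where evidence is captured at detection.
    """
    if review_interval_days == 0:
        return 0
    next_review = math.ceil(event_day / review_interval_days) * review_interval_days
    return next_review - event_day


print(evidence_gap_days(1, 30))  # 29: the Day 1 / Day 30 timeline above
print(evidence_gap_days(1, 0))   # 0: continuous monitoring

# Average gap for an event landing on any day of a 30-day review cycle
average_gap = sum(evidence_gap_days(day, 30) for day in range(1, 31)) / 30
print(average_gap)  # 14.5
```

Averaged across the month, a monthly review leaves each event undocumented for 14.5 days, and that window is exactly what an auditor probes when testing a Type 2 observation period.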

📋 Framework Requirements Driving the Shift

Every major compliance framework now either mandates or strongly favors continuous approaches:

| Framework | Continuous Monitoring Requirement | Key Clause |
|---|---|---|
| SOC 2 Type 2 | Evidence required across the entire observation period, not snapshots | TSC CC7.1–CC7.4 |
| ISO 27001:2022 | Requires monitoring and evaluation of ISMS performance and effectiveness | Clause 9.1 |
| HIPAA | Requires regular review of information system activity records, such as audit logs and access reports | §164.308(a)(1)(ii)(D) |
| PCI-DSS v4.0 | Mandates automated review of audit logs; manual review alone is no longer sufficient | Requirement 10.4.1 / 10.4.1.1 |

PCI-DSS v4.0 is particularly significant: Requirement 10.4.1.1 requires automated mechanisms to perform audit log reviews, explicitly moving away from manual processes. It was introduced as a future-dated requirement and became mandatory on 31 March 2025, so this isn’t a future recommendation. It’s a current mandate.

✅ How UnderDefense Delivers This Natively

UnderDefense MAXI provides continuous monitoring as an inherent capability of AI SOC operations, not a separate “compliance monitoring” layer you bolt on. Every alert investigated, every response action taken, every user verification conducted via ChatOps becomes a timestamped compliance artifact spanning the full audit observation period. Security operations is compliance monitoring: one process, one evidence stream, four frameworks satisfied simultaneously.

Q3: How Does Automated Evidence Collection Actually Work, From Alert to Audit-Ready Artifact?

Automated evidence collection converts daily SOC operations, including alert triage, incident investigation, user verification, and containment actions, into structured, timestamped compliance artifacts mapped to specific framework controls. This eliminates the manual screenshot-and-spreadsheet cycle entirely and makes compliance a byproduct of security operations, not a separate project. Here’s how the full pipeline works, step by step.

⚙️ The 6-Step Pipeline: Alert to Audit Artifact

Here’s exactly how it works end-to-end:

- Detection: Security event detected across integrated tools, including SIEM, EDR, cloud, and identity, spanning 250+ integrations

- AI Enrichment: Context added automatically, including threat intel, user attribution, and asset classification

- Investigation Documented: Full audit trail captured, covering queries run, logs pulled, and correlations made. Every AI step is observable and auditable, not a black box

- Response Timestamped: Containment, remediation, and user verification via ChatOps (Slack/Teams/email), with each action logged with precise timestamps

- Framework Mapping: Evidence auto-mapped to compliance controls (SOC 2 CC6.1, ISO 27001:2022 A.8.15–A.8.16, HIPAA §164.312, PCI-DSS Req 10)

- Stored Audit-Ready: Chain-of-custody metadata preserved in structured, exportable format

The key differentiator is the third step, Investigation Documented: every AI step is observable and auditable. This isn’t automation hiding behind a curtain. It’s automation you can trace, question, and reproduce.
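The six steps can be sketched as an append-only, hash-chained evidence log, one common way to make automated records tamper-evident. This is a minimal illustration under stated assumptions, not UnderDefense MAXI’s implementation: the `EvidenceLog` class, the step names, and the field names are hypothetical, and the sketch uses only Python’s standard library (`hashlib`, `json`, `datetime`).

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class EvidenceLog:
    """Hypothetical append-only log; each entry stores the previous entry's hash."""

    entries: list = field(default_factory=list)

    def append(self, step, detail):
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry = {
            "step": step,
            "detail": detail,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        # Hash a canonical JSON encoding so the digest is reproducible
        payload = json.dumps(entry, sort_keys=True).encode("utf-8")
        entry["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(entry)
        return entry

    def verify(self):
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = EvidenceLog()
log.append("detection", {"source": "EDR", "alert_id": "A-1001"})
log.append("enrichment", {"user": "jdoe", "asset_class": "laptop"})
log.append("investigation", {"queries_run": 3})
log.append("response", {"action": "isolate_host", "verified_via": "Slack"})
log.append("framework_mapping", {"controls": ["SOC 2 CC6.1", "PCI-DSS Req 10"]})
log.append("stored", {"format": "json"})
print(log.verify())  # True

log.entries[2]["detail"]["queries_run"] = 1  # tamper with a stored entry
print(log.verify())  # False
```

Chaining makes edits detectable, not impossible: anyone able to rewrite the whole log can recompute every hash, so in practice the latest hash is usually anchored somewhere the log writer cannot modify.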

📝 What It Captures: Evidence Types with Multi-Framework Mapping

| Evidence Type | SOC 2 | ISO 27001:2022 | HIPAA | PCI-DSS |
|---|---|---|---|---|
| Access control changes | CC6.1 | A.5.15–A.5.18 | §164.312(d) | Req 8 |
| Incident detection & response timelines | CC7.2–7.4 | A.5.24–A.5.28 | §164.308(a)(6) | Req 12.10 |
| Configuration monitoring | CC8.1 | A.8.9 | §164.310(d) | Req 2 |
| User verification records (ChatOps) | CC6.2 | A.8.5 | | |
| Encryption & data protection telemetry | CC6.7 | A.8.24 | §164.312(a)(2)(iv) | Req 3–4 |
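At its core, a control map like the table above is a lookup from evidence type to framework controls. The sketch below assumes a hypothetical `CONTROL_MAP` dictionary populated from the table, with ISO 27001 entries in 2022 Annex A numbering, and returns only the frameworks an organization actually maintains; cells left blank in the table are simply absent.

```python
# Hypothetical control map built from the evidence-type table.
# ISO 27001 entries use 2022 Annex A numbering; blank table cells are omitted.
CONTROL_MAP = {
    "access_control_change": {
        "SOC 2": ["CC6.1"],
        "ISO 27001:2022": ["A.5.15", "A.5.16", "A.5.17", "A.5.18"],
        "HIPAA": ["§164.312(d)"],
        "PCI-DSS": ["Req 8"],
    },
    "incident_response_timeline": {
        "SOC 2": ["CC7.2", "CC7.3", "CC7.4"],
        "ISO 27001:2022": ["A.5.24", "A.5.25", "A.5.26", "A.5.27", "A.5.28"],
        "HIPAA": ["§164.308(a)(6)"],
        "PCI-DSS": ["Req 12.10"],
    },
    "configuration_monitoring": {
        "SOC 2": ["CC8.1"],
        "ISO 27001:2022": ["A.8.9"],
        "HIPAA": ["§164.310(d)"],
        "PCI-DSS": ["Req 2"],
    },
    "user_verification_chatops": {
        "SOC 2": ["CC6.2"],
        "ISO 27001:2022": ["A.8.5"],
    },
    "encryption_telemetry": {
        "SOC 2": ["CC6.7"],
        "ISO 27001:2022": ["A.8.24"],
        "HIPAA": ["§164.312(a)(2)(iv)"],
        "PCI-DSS": ["Req 3", "Req 4"],
    },
}


def map_event(evidence_type, frameworks_in_scope):
    """Return {framework: controls} for one evidence type, limited to in-scope frameworks."""
    controls = CONTROL_MAP.get(evidence_type, {})
    return {fw: controls[fw] for fw in frameworks_in_scope if fw in controls}


in_scope = ["SOC 2", "ISO 27001:2022", "HIPAA", "PCI-DSS"]
print(map_event("access_control_change", in_scope))      # all four frameworks
print(map_event("user_verification_chatops", in_scope))  # SOC 2 and ISO only
```

Keeping the mapping as data rather than code means adding a framework is a new key per evidence type, which is the "map once, satisfy many" idea in its simplest form.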

💸 Why This Matters Operationally

Organizations shifting to automated evidence collection report an 80–90% reduction in manual evidence-collection hours. Audit prep time drops from four to six weeks to days, and the “evidence hunting” scramble during auditor fieldwork virtually disappears. Total evidence hours fall less steeply: traditional SOC teams spend 300–500 hours per audit cycle assembling evidence, while an AI SOC brings this to roughly 110–170 hours, about a two-thirds reduction.

Think of it like this: it’s the difference between a dashcam that records everything automatically versus asking a passenger to take a photo every time something happens on the road. One produces continuous, timestamped, admissible evidence. The other produces incomplete snapshots dependent on human memory and timing.

Q4: AI Collects, Humans Decide: Why the Human + AI Collaboration Model Is What Auditors Actually Trust

The Objection

“Our auditor might not accept AI-generated evidence. We’ve always assembled evidence manually, our audit firm knows our format, and we’re not comfortable replacing human judgment with automation right before an audit. How do we know auditors will trust this?”

⚠️ This Concern Is Legitimate, but Based on a False Premise

This is the most common objection from compliance-conservative organizations, and it’s a good one. Auditors are rightly skeptical of anything that removes human oversight from the evidence chain. Your caution reflects sound governance. But here’s the false premise: an AI SOC doesn’t remove humans from the process. It redefines what humans spend their time on. AI handles the machine-speed tasks: log correlation, enrichment, evidence mapping, and timestamping. Human analysts, Tier 3 and Tier 4, exercise judgment: threat validation, user verification via ChatOps, escalation decisions, and remediation choices.

✅ Why AI-Collected Evidence Scores Higher on Every Auditor Criterion

Auditors evaluate evidence on four dimensions. AI-collected evidence outperforms manual on all four:

- Completeness: Continuous, not periodic, with no gaps between review windows

- Accuracy: No manual transcription errors or mislabeled screenshots

- Timeliness: Real-time capture vs. retrospective assembly

- Chain of custody: Automated, tamper-evident logging with unbroken audit trails

ISO 27001:2022 Clause 7.5 makes no distinction between manual and automated evidence. PCI-DSS v4.0 now mandates automated mechanisms for daily log reviews. The regulatory direction is unambiguous.

⭐ The Proof: Fewer Exceptions, Not More

Organizations switching to AI-collected evidence consistently report fewer audit exceptions. The high exception rate in SOC 2 Type 2 reports is driven by evidence gaps from periodic collection, exactly what continuous automated collection solves. Early adopters report auditors requesting the automated format going forward. Our ChatOps user verification adds a layer many providers lack: when the AI detects anomalous behavior, human analysts contact the actual user via Slack, Teams, or email to confirm, creating verified, human-validated evidence that auditors can trace back to the person involved.

“They’ve also made our audit process much less painful. The reports from their platform give us clear evidence of our security controls and incident response capabilities.”

— Verified User, Marketing and Advertising UnderDefense G2 – Verified Review

“Beyond the testing itself, UnderDefense also helped us navigate key compliance requirements, ensuring we met industry standards smoothly and efficiently.”

— Arman N., CTO UnderDefense G2 – Verified Review

“Building our cybersecurity from scratch felt like a daunting challenge. Their vCISO team was amazing in supporting us with ISO 27001.”

— Val R., Small-Business UnderDefense G2 – Verified Review

🔍 Invitation to Verify

Request a sample evidence package before your next audit cycle. Share it with your audit firm during planning. We provide sample compliance artifacts from UnderDefense MAXI Compliance that your auditor can pre-validate, because we expect you to verify before you commit. Show, don’t tell. That’s how trust gets built.

Q5: How Much Does an AI SOC Actually Save? A Cost and Value Breakdown by Compliance Framework

Here’s the thing most compliance cost analyses get wrong: they treat each framework as a separate project. Separate budgets, separate evidence sprints, separate fire drills. But when your SOC generates compliance evidence as an operational byproduct of security monitoring, a single security event can satisfy controls across SOC 2, ISO 27001, HIPAA, and PCI-DSS simultaneously. That’s the efficiency multiplier thesis, and it’s the single biggest cost lever most security leaders aren’t pulling.

The Multi-Framework Multiplier

Organizations maintaining three or more compliance frameworks report a 40–60% reduction in total compliance effort when evidence collection is automated and unified rather than siloed and manual. The math is straightforward: if one access-change detection event maps to SOC 2 CC6.1, ISO 27001:2022 A.5.18, HIPAA §164.312(d), and PCI-DSS Requirement 7, you collect evidence once and satisfy four frameworks. Traditional SOCs collect that same evidence four separate times: four different formats, four different review cycles, four different compliance personnel pulling from the same data.
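The collect-once arithmetic can be made explicit with a small counting function. It assumes each event is represented as the set of frameworks it maps to; `collection_runs` is a hypothetical helper, and the counts say nothing about how long each collection run takes.

```python
def collection_runs(events, frameworks_in_scope, unified):
    """Count evidence-collection runs for a batch of events.

    events: list of sets, each holding the frameworks one event maps to.
    Siloed: one collection per (event, in-scope framework) pair.
    Unified: one collection per event, reused by every mapped framework.
    """
    runs = 0
    for mapped in events:
        relevant = mapped & frameworks_in_scope
        if not relevant:
            continue  # event maps to nothing the organization maintains
        runs += 1 if unified else len(relevant)
    return runs


scope = {"SOC 2", "ISO 27001", "HIPAA", "PCI-DSS"}
access_changes = [set(scope)] * 100  # 100 events, each mapping to all four

print(collection_runs(access_changes, scope, unified=False))  # 400
print(collection_runs(access_changes, scope, unified=True))   # 100
```

The siloed count grows with the number of frameworks per event, while the unified count stays flat, which is why the gap widens as an organization adds frameworks.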

Per-Framework Value Breakdown

| Framework | Traditional SOC Evidence Hours | AI SOC Evidence Hours | Traditional Audit Prep | AI SOC Audit Prep | Annual Cost Difference | Key AI SOC Advantage |
|---|---|---|---|---|---|---|
| SOC 2 Type 2 | 300–500 hrs/cycle | 110–170 hrs/cycle | 4–6 weeks | 3–5 days | $150K–$200K+ saved in compliance personnel/consulting | Continuous monitoring evidence generated as detection byproduct |
| ISO 27001:2022 | 200–350 hrs (surveillance) | 80–120 hrs | 3 weeks prep | 2–3 days | $80K–$130K saved | ISMS monitoring automated; clause-by-clause review replaced by control-mapped dashboards |
| HIPAA | 250–400 hrs (§164.308 audit logs) | 90–140 hrs | 3–4 weeks | 2–4 days | $100K–$160K saved | Audit log review per §164.308 eliminates 150+ hours of manual log analysis; risk assessment evidence generated continuously |
| PCI-DSS v4.0 | 200–350 hrs (Req. 10.4.1) | 70–110 hrs | 2–3 weeks | 1–3 days | $90K–$140K saved | Requirement 10.4.1 automated security event review; CDE monitoring evidence generated as operational byproduct |
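The hour columns above can be turned into savings figures with a few lines of Python. This sketch pairs the low end of each traditional range with the low end of the AI SOC range (and high with high), which is an assumption since the table does not say how the ranges correspond; `savings` is a hypothetical helper.

```python
# (traditional_low, traditional_high, ai_low, ai_high) evidence hours per cycle,
# copied from the per-framework table
HOURS = {
    "SOC 2 Type 2": (300, 500, 110, 170),
    "ISO 27001:2022": (200, 350, 80, 120),
    "HIPAA": (250, 400, 90, 140),
    "PCI-DSS v4.0": (200, 350, 70, 110),
}


def savings(trad_lo, trad_hi, ai_lo, ai_hi):
    """Hours saved and rounded % reduction, pairing low-with-low and high-with-high."""
    saved = (trad_lo - ai_lo, trad_hi - ai_hi)
    pct = (round(100 * saved[0] / trad_lo), round(100 * saved[1] / trad_hi))
    return saved, pct


for name, row in HOURS.items():
    (saved_lo, saved_hi), (pct_lo, pct_hi) = savings(*row)
    print(f"{name}: {saved_lo}-{saved_hi} hrs saved ({pct_lo}-{pct_hi}%)")
```

On these figures, total evidence hours fall by roughly 60–69% per framework; the larger 80–90% figure quoted earlier applies to manual evidence-collection hours specifically.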

Total Cost of Ownership: The Aggregate Numbers

✅ Manual evidence collection: $50K–$100K in consultant surge costs per audit cycle, eliminated

✅ FTE impact: 2–3 FTEs previously dedicated to compliance prep freed for security operations

✅ Audit duration: Fieldwork compressed from 4–6 weeks to 1–2 weeks

⚠️ Exception reduction: Continuous monitoring catches control failures in real-time, reducing exceptions that cost $10K–$50K each to remediate

💰 Standalone compliance tools replaced: Platforms like Vanta ($10K–$80K/year) or Drata ($12K–$100K/year) become redundant when compliance is embedded in your SOC workflow

How We Approach This at UnderDefense

UnderDefense MAXI Compliance is included with the AI SOC + MDR service at no additional cost. That’s a direct replacement for standalone compliance automation tools charging five to six figures annually. Our published pricing ($11–15/endpoint/month) makes total cost of ownership predictable from day one, and forever-free compliance kits for SOC 2, ISO 27001, and HIPAA are bundled with MDR. Across our client base, we’ve documented 830% ROI over three years when factoring in compliance cost avoidance, FTE reallocation, and audit cycle compression.

“They’ve also made our audit process much less painful. The reports from their platform give us clear evidence of our security controls and incident response capabilities. When auditors or clients ask questions about our security posture, we can pull up exactly what they need to see.”

— Verified User, Marketing and Advertising UnderDefense G2 – Verified Review

“UnderDefense MAXI helped us save money on security by automating tasks and making things run smoother. Their network tools helped us see what’s happening on our network better, which has helped us stop threats before they become big problems.”

— Julia K., Marketing Manager UnderDefense G2 – Verified Review

Q6: AI SOC vs. Traditional SOC for Compliance: The Head-to-Head Comparison

Both traditional SOCs and AI SOCs achieve compliance. Both produce valid audit evidence. Let me be direct about that: this isn’t a “right vs. wrong” conversation. The question is efficiency, cost, and the quality of compliance posture you maintain between audits. If you’re a compliance leader evaluating whether to upgrade, this comparison covers the dimensions that actually change the math.

Traditional SOC: Strengths and Limitations

Traditional SOCs deliver compliance through dedicated compliance personnel, manual evidence collection, periodic log reviews, and project-based audit preparation.

✅ These are proven, auditor-familiar processes with established workflows, and for organizations with a single framework and infrequent audit cycles, they work.

❌ The cost is real, though: 300–500 hours per cycle, temporal coverage gaps between reviews, compliance running as a separate workstream from security operations, and the annual audit “fire drill” that pulls analysts away from actual security work.

AI SOC: The Operational Byproduct Model

AI SOCs deliver compliance differently: every detection, investigation, and response action automatically generates timestamped, control-mapped evidence.

✅ Continuous evidence generation, multi-framework mapping, 80–90% reduction in manual effort, and a compliance posture maintained 365 days a year, not just during audit windows.

✅ The structural shift is that security operations and compliance become a single workflow. There’s no separate “compliance project” consuming your team for six weeks every year.

The 12-Dimension Comparison

| Dimension | Traditional SOC | AI SOC |
|---|---|---|
| Monitoring Approach | Periodic snapshots and scheduled reviews | Continuous 24/7 real-time monitoring |
| Evidence Collection | Manual screenshots, spreadsheets, exports | Automated API-driven with chain-of-custody metadata |
| Evidence Format | Ad-hoc files, PDFs, spreadsheets | Structured, standardized, auditor-ready artifacts |
| Audit Prep Time | 4–6 weeks | 1–3 days |
| Evidence Hours per Audit Cycle | 200–500 hours per framework | 70–170 hours per framework |
| Multi-Framework Mapping | Siloed: separate collection per framework | Unified: single event maps to all applicable controls |
| Exception Handling | Reactive gap discovery during audit | Real-time prevention between audits |
| Compliance-Security Integration | Separate workstreams, separate teams | Unified: compliance is a byproduct of security ops |
| Dedicated Compliance FTEs | 2–3 FTEs needed | 0 dedicated compliance FTEs required |
| Between-Audit Posture | Degrades between review cycles | Consistent year-round |
| Scalability to New Frameworks | Linear effort increase per framework | Marginal effort: new framework maps to existing evidence |
| Human Oversight Model | Manual review and assembly | AI collects and organizes; human decides and validates |

Who Should Choose What

A traditional SOC compliance approach may suffice if your organization maintains a single framework, has dedicated compliance FTEs, and audit cycles are manageable. An AI SOC approach delivers decisive advantage if you maintain multiple frameworks, want to reduce compliance costs by 40–60%, need continuous audit readiness, or want to free compliance personnel for strategic GRC work. UnderDefense MAXI serves organizations in both positions, enhancing existing SOC operations with AI-driven compliance capabilities without requiring a rip-and-replace.

Q7: What Actually Changes in the Audit Room When You Run an AI SOC?

It’s week one of your SOC 2 Type 2 audit. The auditor requests “evidence of continuous monitoring for access control changes over the past 12 months.” Your compliance lead opens a spreadsheet, cross-references three SIEM exports, manually screenshots four different dashboards, and asks two analysts to pull historical logs from a server that was migrated in Q3. Three days later, the evidence package is assembled, and the auditor flags two gaps where monitoring documentation lapsed during the migration.

This scenario is painfully common. And it’s not because people aren’t trying. It’s because the architecture makes it almost inevitable.

Why This Problem Exists

Traditional SOC compliance separates security operations from evidence generation. The SOC detects and responds; a separate compliance function documents and packages evidence after the fact. This creates a temporal gap: events happen in real-time, but evidence is reconstructed retrospectively, introducing gaps, inconsistencies, and reliance on analyst memory and availability. When an analyst is on PTO, when a server gets migrated, when logs rotate and nobody exports them, that’s where the audit exceptions come from.

The Hidden Costs Nobody Budgets For

⏰ 4–6 weeks of audit prep consuming 2–3 FTEs who should be doing security work

❌ Analyst time diverted from threat detection to “evidence hunting”

💸 Auditor requests for “additional evidence” extending fieldwork by 1–2 weeks

⚠️ Exceptions generated by documentation gaps that didn’t exist operationally, but lacked proof

😤 The stress and overtime that makes audit season the most dreaded period of the year

How UnderDefense Changes This

Same auditor request, “evidence of continuous monitoring for access control changes over 12 months,” answered in minutes. UnderDefense MAXI Compliance dashboard exports a complete, timestamped, control-mapped evidence package covering every access change detected, investigated, and verified across the full observation period. Evidence includes AI-enriched investigation notes, ChatOps user verification records, automated control-mapping metadata, and response action documentation. No spreadsheets, no screenshots, no three-day assembly. The auditor reviews pre-formatted, verifiable evidence, not a manually compiled binder.
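Answering that auditor request is essentially a filter over stored evidence: select records tagged with the relevant control inside the observation window, then serialize them. A minimal sketch, assuming each record is a dict with hypothetical `date` (ISO 8601) and `controls` fields; this is not the MAXI Compliance export format.

```python
import json
from datetime import date, timedelta


def export_evidence(records, control, end, days=365):
    """Return a JSON evidence package for `control` over the `days` ending at `end`."""
    start = end - timedelta(days=days)
    selected = sorted(
        (
            r for r in records
            if control in r["controls"] and start <= date.fromisoformat(r["date"]) <= end
        ),
        key=lambda r: r["date"],  # ISO 8601 date strings sort chronologically
    )
    package = {
        "control": control,
        "window_start": start.isoformat(),
        "window_end": end.isoformat(),
        "record_count": len(selected),
        "records": selected,
    }
    return json.dumps(package, indent=2)


# Hypothetical stored records: two in-window access changes, one too old,
# one tagged with a different control
records = [
    {"date": "2024-09-18", "controls": ["CC6.1"], "event": "role revoked", "verified_via": "Teams"},
    {"date": "2024-03-05", "controls": ["CC6.1"], "event": "role granted", "verified_via": "Slack"},
    {"date": "2023-06-01", "controls": ["CC6.1"], "event": "role granted", "verified_via": "email"},
    {"date": "2024-05-01", "controls": ["CC7.2"], "event": "malware alert", "verified_via": "Slack"},
]
print(export_evidence(records, "CC6.1", end=date(2024, 12, 31)))
```

Because every record already carries its control tags and timestamp, the export is a query rather than an assembly project; the three-day scramble in the opening scenario comes from reconstructing those fields by hand.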

The Before and After

From three-day evidence assembly to three-minute export. From auditor exception flags to clean observations. From “audit season panic” to “any-day-is-audit-day” readiness. That’s what changes when your SOC generates compliance evidence as an operational byproduct, not a retrospective project.

“Really like using UnderDefense MAXI platform, as it has everything from early risk detection and compliance to incident response automation and 24/7 protection with MDR.”

— Serhii I., CEO UnderDefense G2 – Verified Review

“Building our cybersecurity from scratch felt like a daunting challenge. Enter UnderDefense MAXI and its 30-day impact report. For a marketing agency taking baby steps in security, these reports were our guiding star, clear, concise, and oh-so-relevant. Plus, their vCISO team was amazing in supporting us with ISO 27001.”

— Val R., Small-Business UnderDefense G2 – Verified Review

“Arctic Wolf provides solid detection and response capabilities, but overly relies on the client’s team for remediation, which really hurts the value of the service.”

— VP of Technology, Services Arctic Wolf – Gartner Verified Review

Q8: Is Your SOC Actually Audit-Ready Right Now? A Compliance Readiness Checklist

Score your SOC’s compliance readiness against these eight criteria. Each unchecked item represents a gap that increases audit risk, extends fieldwork duration, or generates costly exceptions. Be honest: this assessment is for your team, not your auditor.

The 8-Point Compliance Readiness Audit

- ☐ Does your SOC generate timestamped evidence for every security event automatically, without analyst intervention?

- ☐ Can you produce 12 months of continuous monitoring evidence within 24 hours of an auditor request?

- ☐ Does a single detection event map to controls across all your compliance frameworks simultaneously?

- ☐ Is your evidence collection independent of individual analyst effort, availability, or shift schedules?

- ☐ Can you demonstrate real-time gap detection and remediation between audit cycles, not just during them?

- ☐ Does your audit prep require less than one week of dedicated compliance team time?

- ☐ Are your evidence artifacts in a standardized, auditor-friendly format with chain-of-custody metadata?

- ☐ Does your compliance posture remain consistent whether it’s audit season or a random Tuesday in July?

What Your Score Tells You

| Score | Assessment | What It Means |
|---|---|---|
| ✅ 7–8 | Mature and likely AI-augmented | Your compliance engine is running. Focus on multi-framework expansion, optimization, and reducing marginal cost per additional framework. |
| ⚠️ 4–6 | Significant gaps exist | Extended audit timelines, elevated exception risk, and annual burnout for your compliance team. You’re spending more than necessary, and your between-audit posture has soft spots auditors will probe. |
| ❌ 0–3 | Reactive and project-based | You’re spending 3–5× more than necessary. Compliance is a fire drill, not a process. Between-audit posture has blind spots, and every audit cycle is a scramble that pulls security analysts off their actual work. |
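Scoring the checklist is simple enough to script, which makes it easy to rerun each quarter and track progress. The item keys below are hypothetical shorthand for the eight questions, and the bands follow the score table; `readiness` is an illustrative helper, not a product feature.

```python
# Hypothetical keys, one per checklist question, in the order listed above
CHECKLIST = [
    "auto_timestamped_evidence",
    "twelve_months_within_24h",
    "single_event_multi_framework",
    "analyst_independent_collection",
    "between_audit_gap_detection",
    "prep_under_one_week",
    "standardized_chain_of_custody",
    "consistent_year_round",
]


def readiness(answers):
    """Score yes/no answers; return (score, assessment band, unchecked items)."""
    unchecked = [item for item in CHECKLIST if not answers.get(item, False)]
    score = len(CHECKLIST) - len(unchecked)
    if score >= 7:
        band = "Mature and likely AI-augmented"
    elif score >= 4:
        band = "Significant gaps exist"
    else:
        band = "Reactive and project-based"
    return score, band, unchecked


answers = {
    "auto_timestamped_evidence": True,
    "single_event_multi_framework": True,
    "prep_under_one_week": True,
}
score, band, unchecked = readiness(answers)
print(score, band)  # 3 Reactive and project-based
print(unchecked)    # the five items still to close
```

Returning the unchecked items, not just the score, turns the assessment into a work list.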

Where UnderDefense Closes the Gaps

UnderDefense MAXI is engineered to turn every unchecked box into a ✅ within 30 days of onboarding. Continuous monitoring with bundled UnderDefense MAXI Compliance, built directly on the security operations platform rather than sold as a separate tool, delivers automated multi-framework evidence mapping, ChatOps-verified investigation records, and AI-enriched audit artifacts with chain-of-custody metadata. We include forever-free compliance kits for SOC 2, ISO 27001, and HIPAA because compliance shouldn’t be a separate line item from security. Published pricing at $11–15/endpoint/month means no surprises when you calculate your total compliance cost of ownership.

The Honest Starting Point

Scored below 5? That’s where most organizations start before implementing an AI SOC, and the difference afterward is measurable. The goal isn’t perfection on day one. It’s building a system where compliance evidence is generated continuously, automatically, and in a format your auditor can trust without follow-up requests. That shift, from annual panic to operational readiness, is what separates teams that dread audit season from teams that barely notice it. If your score landed in the red zone, that’s not a failure; it’s exactly the gap an AI SOC is designed to close within your first audit cycle.

Q9: Making the Business Case: How to Justify the AI SOC Compliance Advantage to Your Board

You see the compliance value of AI SOC operations clearly. Your team lives the audit fire drills, the evidence-hunting marathons, the exception remediation cycles. But your CFO sees a budget line. Your board sees a technology investment. Convincing them requires translating operational advantages into the three things executives actually fund: cost reduction, risk reduction, and time recovery.

Don’t Lead with Technology

Here’s where most business cases fail: they open with “AI automates evidence collection.” Boards don’t fund technology. They fund risk elimination and cost reduction. Don’t present features; present financial outcomes. Don’t compare SOC types; compare compliance cost trajectories with and without the AI SOC investment. If your first slide has the word “automated” instead of a dollar figure, start over.

The 5-Pillar Business Case Framework

Build your internal justification around these five pillars. Each one maps directly to a financial outcome your CFO can model:

- 💰 Cost Reduction: Current compliance spend vs. projected AI SOC compliance spend. Target 40–60% reduction by eliminating $50K–$100K in annual consultant surge costs, replacing standalone compliance tools (Vanta, Drata), and freeing 2–3 compliance FTEs for strategic work instead of evidence hunting.

- ⚠️ Risk Reduction: Project your exception trend. Continuous monitoring reduces audit exceptions by 70–90%, and each exception costs $10K–$50K to remediate. If you averaged 8 exceptions last cycle, that’s $80K–$400K in remediation exposure, of which a 70–90% reduction would avoid roughly $56K–$360K: a number your board understands immediately.

- ⏰ Time Recovery: Quantify FTE hours returned from compliance tasks to security operations in salary-equivalent dollars. If two analysts spend six weeks on audit prep at a fully loaded cost of $85/hour, that’s about $40.8K at 40-hour weeks (2 × 6 × 40 × $85), and closer to $50K with audit-season overtime, in redirected capacity per audit cycle.

- 💸 Audit Efficiency: Fieldwork compression from 4–6 weeks to 1–2 weeks reduces external auditor billable hours directly. At $300–$500/hour for audit firms, shaving three weeks of fieldwork translates to $30K–$75K in direct audit cost savings, assuming roughly 100–150 billable hours fall away over those three weeks.

- ✅ Scalability: Marginal cost of adding frameworks. With AI SOC multi-framework mapping, adding HIPAA or PCI-DSS to existing SOC 2/ISO 27001 compliance requires near-zero incremental effort. Traditional approaches demand 50–70% additional effort per framework, a compounding cost your CFO should see modeled over three years.
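The arithmetic behind the risk, time, and audit-efficiency pillars fits in a few functions, so you can rerun it with your own figures before the board meeting. Defaults come from the ranges quoted above; the function names are hypothetical, and the 40-hour week (and the 49-hour overtime week in the example) are assumptions, not figures from any benchmark.

```python
def exception_exposure(exceptions, cost_range=(10_000, 50_000), reduction_range=(0.70, 0.90)):
    """Remediation exposure, and the portion avoided at the quoted reduction rates."""
    exposure = (exceptions * cost_range[0], exceptions * cost_range[1])
    avoided = (
        round(exposure[0] * reduction_range[0]),
        round(exposure[1] * reduction_range[1]),
    )
    return exposure, avoided


def time_recovery(people, weeks, hourly_rate, hours_per_week=40):
    """Salary-equivalent value of analyst time spent on audit prep."""
    return people * weeks * hours_per_week * hourly_rate


def audit_fee_savings(billable_hours_saved, hourly_rate):
    """Direct external-audit savings from compressed fieldwork."""
    return billable_hours_saved * hourly_rate


print(exception_exposure(8))                       # ((80000, 400000), (56000, 360000))
print(time_recovery(2, 6, 85))                     # 40800 at 40-hour weeks
print(time_recovery(2, 6, 85, hours_per_week=49))  # 49980 with assumed overtime
print(audit_fee_savings(100, 300), audit_fee_savings(150, 500))  # 30000 75000
```

The quoted $30K–$75K audit range corresponds to roughly 100–150 billable hours saved at $300–$500/hour; plug in your auditor’s actual staffing and rates to tighten it.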

Apply the Framework with Your Numbers

Pull your organization’s actual data: current audit preparation hours (compliance team + analyst time diverted), compliance consulting and auditor fees, exception remediation costs, and productivity loss during audit season. Map each against the five pillars. The math typically justifies the investment within a single audit cycle, and the compounding effect across multiple frameworks makes the three-year case almost self-evident.

Where UnderDefense Stands on Each Pillar

| Pillar | UnderDefense MAXI | Why It Matters |
|---|---|---|
| Cost Reduction | $11–15/endpoint/month, UnderDefense MAXI Compliance bundled free | Eliminates separate Vanta/Drata costs ($10K–$80K/year) |
| Risk Reduction | 830% documented ROI over 3 years | Continuous monitoring catches control failures before auditors do |
| Time Recovery | 30-day onboarding, value realized within one quarter | No 6-month implementation draining resources |
| Audit Efficiency | Evidence export in minutes, not weeks | Auditor fieldwork compressed to 1–2 weeks |
| Scalability | Multi-framework mapping on unified platform | Adding frameworks = marginal effort, not linear cost increase |

The real question for your board isn’t “Should we invest in an AI SOC?” It’s “Can we afford to keep paying 3–5× more for compliance through manual processes while our security team spends six weeks a year hunting for evidence instead of hunting for threats?”

Q10: Ready to See What AI SOC Compliance Looks Like in Practice?

The compliance advantage of an AI SOC isn’t theoretical. It’s measurable in hours saved, exceptions eliminated, and audit cycles compressed. Whether you’re preparing for your first SOC 2 Type 2, maintaining ISO 27001 certification, or managing multi-framework compliance across HIPAA and PCI-DSS, the math favors AI-driven continuous monitoring over periodic manual workflows.

What to Evaluate Before You Move

If this article prompted a serious look at your compliance operations, here’s the shortlist of questions that should drive your next conversation:

- Is compliance evidence generated as a byproduct of security operations, or as a separate project consuming dedicated FTEs?

- Does your provider include compliance tooling in the MDR service, or charge $10K–$80K extra for standalone platforms?

- Can you produce 12 months of continuous monitoring evidence on demand, within hours, not weeks?

- Is your pricing transparent and predictable, or hidden behind “contact sales” gating?

- Does the platform map a single security event to multiple compliance frameworks simultaneously?

Each question you can't answer with a confident "yes" is a cost multiplier hiding in your compliance budget. Each one you can is proof that your security operations are already doing double duty.

The Path from “Makes Sense” to “Board-Ready Proposal”

Operational insight without financial modeling stays on a CISO’s wishlist instead of the board agenda. The fastest way to move from “this makes sense” to “here’s the business case” is to model your current compliance costs against an AI SOC alternative. UnderDefense’s SOC Cost Calculator lets you input your environment size, framework requirements, and current compliance workflow to see projected cost reductions, no demo or sales call required. Plug in your numbers, screenshot the output, and attach it to the business case framework from Q9. That’s a board-ready proposal built in under 30 minutes.

What Happens After the Numbers

For organizations that need more than projections, real-world validation closes the gap. UnderDefense's case study walks through exactly how unified AI SOC operations generated audit-ready compliance evidence while simultaneously preventing a $650K financial loss, the kind of dual-purpose outcome that makes compliance investment conversations straightforward. It's one thing to project savings in a spreadsheet. It's another to show your board a documented scenario where security and compliance operated as a single system with measurable outcomes.

The Bottom Line

This analysis draws on documented compliance outcomes from 500+ MDR deployments, a record of zero ransomware cases in 6 years, and 830% ROI over 3 years. Compliance should be a byproduct of great security, not a separate project that drains your team for six weeks every year. The organizations that have already made this shift aren't going back, and the evidence is available for anyone willing to look at the numbers.

1. How does an AI SOC generate compliance evidence differently than a traditional SOC?

Both SOC types produce valid compliance evidence that auditors accept. The difference is in how that evidence gets created. A traditional SOC relies on manual processes: analysts pull logs, take screenshots, export spreadsheets, and compile evidence packages during dedicated audit prep cycles. This consumes 300–500 hours per audit cycle and creates temporal gaps between review windows.

An AI SOC generates compliance evidence as an operational byproduct of daily security operations. Every alert triaged, every incident investigated, every user verification conducted via ChatOps becomes a timestamped, control-mapped artifact automatically. There’s no separate “compliance project.” We built UnderDefense MAXI around this principle: security operations and compliance evidence generation are a single workflow, not two separate workstreams competing for your team’s time.

The practical result is an 80–90% reduction in manual evidence collection hours, with audit prep compressed from 4–6 weeks down to days.

2. Why does continuous monitoring beat periodic log checks for compliance frameworks like SOC 2, HIPAA, and PCI-DSS?

Periodic log checks create evidence gaps that auditors flag as exceptions. The failure mode is predictable: a security event occurs on Day 1, the log gets reviewed on Day 30 during a scheduled monthly check, the exception is discovered on Day 31, and remediation starts on Day 45. The auditor flags a 29-day evidence gap, and your team spends weeks justifying why the control was actually in place but the documentation wasn’t.
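The timeline above reduces to simple arithmetic: under a fixed review schedule, an event stays undocumented until the next scheduled check. The sketch below is a minimal illustration, assuming reviews land on exact multiples of the review interval (Day 30, Day 60, ...) and day-level granularity; `evidence_gap_days` is a hypothetical helper, not part of any product.

```python
def evidence_gap_days(event_day: int, review_interval_days: int) -> int:
    """Days an event goes undocumented before the next scheduled review.

    Assumes reviews happen on days review_interval_days, 2 * review_interval_days,
    and so on. An interval of 0 (or less) models continuous monitoring, where
    evidence is captured on the day the event is detected.
    """
    if review_interval_days <= 0:
        return 0  # continuous monitoring: no waiting for a review window
    # Ceiling division finds the first review day on or after the event.
    next_review = -(-event_day // review_interval_days) * review_interval_days
    return next_review - event_day


# Day 1 event, monthly review on Day 30: the 29-day gap from the example above.
gap_monthly = evidence_gap_days(1, 30)
gap_continuous = evidence_gap_days(1, 0)
```

With a 30-day cadence the worst case is always interval minus one day, no matter how diligent the reviewers are; only shrinking the interval toward zero removes the gap.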

Continuous monitoring eliminates this gap entirely. Events are detected in real time, evidence is auto-captured, and control validation happens instantly. Every major framework now favors or mandates this approach. PCI-DSS v4.0 (Requirement 10.4.1.1) explicitly requires automated mechanisms for audit log reviews, which Requirement 10.4.1 mandates at least daily. ISO 27001:2022 Clause 9.1 mandates monitoring the effectiveness of the ISMS. SOC 2 Type 2 requires evidence across the entire observation period, not snapshots.

The regulatory direction is unambiguous: continuous beats periodic for every framework that matters.

3. How much can an AI SOC save on compliance costs per audit cycle?

The savings compound across multiple dimensions. For SOC 2 Type 2 alone, organizations report $150K–$200K+ saved in compliance personnel and consulting costs when switching from manual to AI-driven evidence collection. ISO 27001 surveillance audits save $80K–$130K, HIPAA saves $100K–$160K, and PCI-DSS v4.0 saves $90K–$140K annually.
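To see how these per-framework figures combine, here is a minimal sketch that sums the ranges quoted above for whichever frameworks an organization maintains. The table values come straight from the paragraph; the function name is hypothetical. Treat the result as an upper-bound illustration: the figures mix per-audit and annual bases, and frameworks that share evidence will overlap, so a naive sum likely overstates real savings.

```python
# Per-framework savings ranges (USD) as quoted in the paragraph above.
# SOC 2's upper bound is stated as "$200K+", so 200_000 is a conservative cap.
FRAMEWORK_SAVINGS = {
    "SOC 2 Type 2": (150_000, 200_000),
    "ISO 27001": (80_000, 130_000),
    "HIPAA": (100_000, 160_000),
    "PCI-DSS v4.0": (90_000, 140_000),
}


def combined_range(frameworks, table=FRAMEWORK_SAVINGS):
    """Naively sum the low and high savings estimates for the given frameworks."""
    low = sum(table[name][0] for name in frameworks)
    high = sum(table[name][1] for name in frameworks)
    return low, high


all_four = combined_range(FRAMEWORK_SAVINGS.keys())
```

For all four frameworks the naive sum is $420K to $630K; the 40–60% unified-effort reduction cited below is a more defensible way to account for the overlap.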

Beyond per-framework savings, there are aggregate cost reductions most teams don’t initially calculate:

- $50K–$100K in consultant surge costs per audit cycle, eliminated
- 2–3 FTEs freed from compliance prep for actual security operations
- Standalone compliance tools like Vanta ($10K–$80K/year) or Drata ($12K–$100K/year) become redundant
- Auditor fieldwork compressed from 4–6 weeks to 1–2 weeks, reducing billable hours by $30K–$75K

Organizations maintaining three or more frameworks report 40–60% total compliance effort reduction when evidence collection is automated and unified versus siloed manual processes.

4. Do auditors actually trust and accept AI-generated compliance evidence?

Yes. Auditors evaluate evidence on four dimensions: completeness, accuracy, timeliness, and chain of custody. AI-collected evidence outperforms manual evidence on all four.

Continuous collection ensures completeness with no gaps between review windows. Automated capture eliminates manual transcription errors and mislabeled screenshots. Real-time logging provides immediate timeliness versus retrospective assembly. And tamper-evident, automated logging maintains an unbroken chain of custody.
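"Tamper-evident" has a concrete technical meaning. One common technique is a hash chain, where each log entry's hash covers both its own content and the previous entry's hash, so editing any past record breaks every hash after it. The sketch below illustrates that general technique under that assumption; it is not a description of UnderDefense's storage layer, and the function names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    # Canonical JSON (sorted keys, no extra spaces) so identical records hash identically.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(chain: list, record: dict) -> None:
    """Append a record, linking it to the hash of the previous entry."""
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"record": record, "prev": prev, "hash": _digest(prev, record)})


def verify_chain(chain: list) -> bool:
    """Recompute every link; any edited, reordered, or removed entry fails."""
    prev = GENESIS
    for entry in chain:
        if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True


log = []
append_entry(log, {"event": "mfa_disabled", "user": "alice", "ts": "2024-03-01T09:30:00Z"})
append_entry(log, {"event": "user_verified", "user": "alice", "ts": "2024-03-01T09:41:00Z"})
```

A hash chain detects tampering but cannot stop someone from rewriting the entire chain; production systems typically also anchor the latest hash somewhere the log writer cannot modify, such as write-once storage or a signed checkpoint.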

ISO 27001:2022 Clause 7.5 makes no distinction between manual and automated evidence. PCI-DSS v4.0 now mandates automated mechanisms for daily log reviews. The regulatory direction actively favors automation. Organizations switching to AI-collected evidence consistently report fewer audit exceptions, not more.

At UnderDefense, our ChatOps user verification adds a layer that strengthens auditor trust further: when anomalous behavior is detected, human analysts contact the actual user via Slack, Teams, or email to confirm. This creates verified, human-validated evidence that auditors consider the gold standard. We recommend requesting a sample evidence package before your next audit cycle and sharing it with your audit firm during planning.

5. How does automated evidence collection work from alert to audit-ready artifact?

We built a 6-step pipeline that converts daily SOC operations into structured compliance artifacts:

- Detection across 250+ integrated tools (SIEM, EDR, cloud, identity)
- AI enrichment adding threat intel, user attribution, and asset classification automatically
- Investigation documentation with a full, observable audit trail of every query, log pull, and correlation
- Response timestamping of containment, remediation, and user verification via ChatOps
- Framework mapping of evidence to compliance controls (SOC 2, ISO 27001, HIPAA, PCI-DSS simultaneously)
- Audit-ready storage with chain-of-custody metadata in structured, exportable format

The critical differentiator is at step 3: every investigative step is observable and auditable. This isn’t automation hiding behind a curtain. It’s automation you can trace, question, and reproduce. A single detection event simultaneously satisfies controls across all applicable compliance frameworks, eliminating the traditional approach of collecting the same evidence four separate times in four different formats.
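The "one event, many frameworks" idea in step 5 can be sketched as a lookup from detection category to control references, applied once when the artifact is created. The control IDs below are real clause references (SOC 2 CC7.2, ISO 27001:2022 Annex A 8.16, HIPAA §164.312(b), PCI-DSS v4.0 10.4.1), but which ones a given event satisfies is an assumption to confirm with your auditor, not a vendor-published mapping. `CONTROL_MAP`, `EvidenceArtifact`, and `to_artifact` are hypothetical names.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Illustrative mapping only: confirm control applicability with your audit firm.
CONTROL_MAP = {
    "anomalous_login": [
        ("SOC 2", "CC7.2"),
        ("ISO 27001:2022", "A.8.16"),
        ("HIPAA", "164.312(b)"),
        ("PCI-DSS v4.0", "10.4.1"),
    ],
}


@dataclass
class EvidenceArtifact:
    event_id: str
    category: str
    detected_at: datetime
    steps: list = field(default_factory=list)     # investigation audit trail (step 3)
    controls: list = field(default_factory=list)  # (framework, control_id) pairs (step 5)


def to_artifact(event_id, category, detected_at, steps):
    """Build one artifact from a triaged event, tagged for every mapped framework."""
    return EvidenceArtifact(
        event_id=event_id,
        category=category,
        detected_at=detected_at,
        steps=list(steps),
        controls=list(CONTROL_MAP.get(category, [])),  # unmapped categories get no tags
    )


artifact = to_artifact(
    "evt-001",
    "anomalous_login",
    datetime(2024, 3, 1, 9, 30, tzinfo=timezone.utc),
    ["enrichment: geo-IP and asset owner", "user confirmed via ChatOps"],
)
```

The design point is that the evidence is captured once and tagged many times, instead of being re-collected per framework in a different format.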

6. What changes in the actual audit room when you run an AI SOC instead of a traditional SOC?

The transformation is dramatic. In a traditional SOC audit scenario, when an auditor requests “evidence of continuous monitoring for access control changes over 12 months,” your compliance lead opens spreadsheets, cross-references SIEM exports, manually screenshots dashboards, and asks analysts to pull historical logs. Three days later, the evidence package is assembled, and the auditor often flags gaps where documentation lapsed.

With an AI SOC, that same auditor request gets answered in minutes. The UnderDefense MAXI Compliance dashboard exports a complete, timestamped, control-mapped evidence package covering every access change detected, investigated, and verified across the full observation period. No spreadsheets, no screenshots, no three-day assembly.
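Before handing over a 12-month package, it helps to check for the gaps an auditor would flag. The sketch below assumes evidence can be indexed by the calendar days on which at least one artifact exists for a given control, and reports every run of days with none; day-level coverage is a coarse proxy, and `coverage_gaps` is a hypothetical helper.

```python
from datetime import date, timedelta


def coverage_gaps(evidence_days, start: date, end: date):
    """Return (gap_start, gap_end) date ranges within [start, end] that have no evidence.

    evidence_days: iterable of dates with at least one monitoring artifact
    for the control being audited.
    """
    have = set(evidence_days)
    gaps = []
    gap_start = None
    day = start
    while day <= end:
        if day not in have:
            if gap_start is None:
                gap_start = day  # a new gap begins
        elif gap_start is not None:
            gaps.append((gap_start, day - timedelta(days=1)))  # gap closed by evidence
            gap_start = None
        day += timedelta(days=1)
    if gap_start is not None:
        gaps.append((gap_start, end))  # gap runs to the end of the observation period
    return gaps


period_start, period_end = date(2024, 1, 1), date(2024, 1, 10)
observed = [period_start + timedelta(days=n) for n in range(10) if n not in (3, 4, 5)]
found = coverage_gaps(observed, period_start, period_end)
```

An empty result over the full observation period is what "any-day-is-audit-day" readiness looks like in data terms.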

The measurable changes: audit prep drops from 4–6 weeks of work for 2–3 FTEs to a few days of minimal dedicated effort. Auditor requests for "additional evidence" that typically extend fieldwork by 1–2 weeks virtually disappear. Exceptions generated by documentation gaps are eliminated. The shift is from "audit season panic" to "any-day-is-audit-day" readiness.

7. How do we justify the AI SOC compliance investment to our CFO and board?

Don’t lead with technology. Boards fund risk elimination and cost reduction, not features. We recommend building your business case around five financial pillars:

- Cost reduction: Target 40–60% reduction by eliminating consultant surge costs, replacing standalone compliance tools, and freeing compliance FTEs
- Risk reduction: Continuous monitoring reduces audit exceptions by 70–90%, and each exception costs $10K–$50K to remediate
- Time recovery: Quantify FTE hours returned from compliance tasks to security operations in salary-equivalent dollars
- Audit efficiency: Fieldwork compression from 4–6 weeks to 1–2 weeks saves $30K–$75K in auditor billable hours
- Scalability: Adding new frameworks requires marginal effort versus 50–70% additional effort with traditional approaches
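Four of these pillars reduce to straightforward arithmetic once you have your own inputs; scalability is better argued qualitatively. The sketch below is a minimal per-cycle model under that assumption. Every field is a placeholder for your organization's data, and the example values are picked from within the ranges quoted in this article purely for illustration. `ComplianceInputs` and `pillar_savings` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class ComplianceInputs:
    # Placeholders: replace every value with your own organization's data.
    exceptions_per_cycle: float           # audit exceptions in a typical cycle
    cost_per_exception: float             # remediation cost per exception (USD)
    exception_reduction: float            # expected fractional reduction, e.g. 0.7
    prep_hours_per_cycle: float           # manual evidence-collection hours
    prep_hour_reduction: float            # expected fractional reduction, e.g. 0.8
    loaded_hourly_rate: float             # salary-equivalent cost per hour (USD)
    auditor_fieldwork_savings: float      # reduced auditor billable hours (USD)
    tooling_and_consultant_savings: float # retired tools plus avoided surge fees (USD)


def pillar_savings(i: ComplianceInputs) -> dict:
    """Estimate per-cycle savings for the four quantifiable pillars."""
    risk = i.exceptions_per_cycle * i.exception_reduction * i.cost_per_exception
    time = i.prep_hours_per_cycle * i.prep_hour_reduction * i.loaded_hourly_rate
    audit = i.auditor_fieldwork_savings
    cost = i.tooling_and_consultant_savings
    return {
        "cost_reduction": cost,
        "risk_reduction": risk,
        "time_recovery": time,
        "audit_efficiency": audit,
        "total": cost + risk + time + audit,
    }


example = ComplianceInputs(
    exceptions_per_cycle=4, cost_per_exception=10_000, exception_reduction=0.7,
    prep_hours_per_cycle=400, prep_hour_reduction=0.8, loaded_hourly_rate=100,
    auditor_fieldwork_savings=30_000, tooling_and_consultant_savings=50_000,
)
savings = pillar_savings(example)
```

Keeping each pillar as a separate line item lets a CFO challenge one assumption (say, the exception reduction rate) without discarding the whole model.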

Use our SOC Cost Calculator to model your current compliance costs against an AI SOC alternative with your actual environment data. The math typically justifies the investment within a single audit cycle, and the compounding effect across multiple frameworks makes the three-year case self-evident.

8. Is our SOC actually audit-ready right now, and how do we assess compliance readiness?

We developed an 8-point compliance readiness assessment that scores your SOC’s current audit posture. The criteria include: whether your SOC generates timestamped evidence automatically, whether you can produce 12 months of continuous monitoring evidence within 24 hours, whether single detection events map to multiple frameworks, whether evidence collection is independent of individual analyst availability, and whether your audit prep requires less than one week.

Organizations scoring 7–8 are mature and likely AI-augmented. Scores of 4–6 indicate significant gaps with elevated exception risk and annual compliance team burnout. Scores of 0–3 mean you’re spending 3–5× more than necessary, and compliance is a fire drill rather than a process.
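The scoring bands above translate directly into a small self-check. The sketch assumes one yes/no answer per criterion. Only five of the eight criteria are named in this article, so the list below records those five and leaves the remaining three to be filled in; `readiness_band` is a hypothetical helper.

```python
# The five criteria named in the article; the other three are not enumerated here.
NAMED_CRITERIA = (
    "Timestamped evidence is generated automatically",
    "12 months of continuous monitoring evidence can be produced within 24 hours",
    "Single detection events map to multiple frameworks",
    "Evidence collection does not depend on individual analyst availability",
    "Audit prep takes less than one week",
)


def readiness_band(answers):
    """Score 8 yes/no answers and return (score, band) using the article's bands."""
    if len(answers) != 8:
        raise ValueError("expected exactly 8 yes/no answers")
    score = sum(1 for a in answers if a)
    if score >= 7:
        return score, "mature, likely AI-augmented"
    if score >= 4:
        return score, "significant gaps, elevated exception risk"
    return score, "fire drill, not a process"


typical_start = readiness_band([True, True, False, True, False, False, True, False])
```

An organization answering "yes" to four items lands in the middle band, consistent with the note below that most teams start under 5.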

Most organizations start below 5 before implementing an AI SOC. That’s the normal starting point. UnderDefense MAXI is engineered to turn every gap into a pass within 30 days of onboarding, with bundled compliance kits for SOC 2, ISO 27001, and HIPAA included at no additional cost.

The post AI SOC Compliance Edge: How Continuous Monitoring Beats Periodic Log Checks appeared first on UnderDefense.