Q1. What Is an AI SOC, and Why Is It Replacing the Traditional Security Operations Model?

⚠️ The Paralysis of Fragmentation

Here is what I see across nearly every security environment we assess: CrowdStrike running on endpoints, Splunk ingesting logs, Okta managing identity, separate consoles for AWS and Azure, maybe a Palo Alto firewall throwing its own alerts into the mix. Each tool does its job. None of them talk to each other in a way that helps you actually respond to a threat. Alerts are everywhere, but understanding is nowhere.

This is the fragmented security reality most organizations live with today, and it is exactly the gap an AI SOC is designed to close.

An AI SOC is a security operations center where artificial intelligence, not just automation scripts, performs the investigation, triage, and correlation work traditionally done by Tier 1–2 human analysts, enabling continuous threat detection, enrichment, and response across identity, endpoint, cloud, network, and SaaS environments.

❌ The “Black Box Escalation” Trap

Let me be direct about what traditional MDR providers and legacy MSSPs actually deliver. Providers like Arctic Wolf and ReliaQuest operate as opaque alert factories: they show you what was detected, but not why it matters or what to do next. They push proprietary vendor lock-in, requiring you to abandon your existing SIEM and security investments to use their stack. Their pricing sits behind “contact sales” walls. Arctic Wolf’s median annual contract runs around $96K with no published per-endpoint rates.

Legacy MSSPs are a different flavor of the same problem: monitoring without intelligence. Checkbox coverage built on rigid, pre-written playbooks rather than real-time threat context. When a critical alert fires at 2 AM, that playbook doesn’t know whether it’s your IT admin or an attacker, and the MSSP doesn’t ask.

The numbers confirm the structural failure. The global cybersecurity workforce gap has hit approximately 4.8 million unfilled positions, representing roughly 47% of the total workforce needed. Budget reductions compound the shortage: 33% of organizations lack the budget to adequately staff security teams, while 29% cannot afford to hire staff with the skills they need. This model is not sustainable.

⏰ Detection Without Response Is Noise

Here is the thesis that drives everything we build: Detection without response is noise. Response without context is risk.

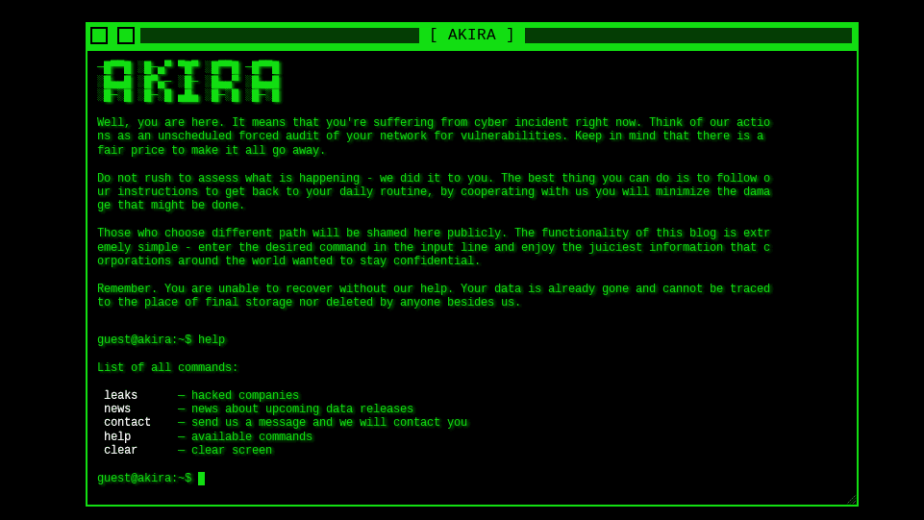

Threat actors have already weaponized agentic AI: reconnaissance that took days now happens in hours, adaptive malware rewrites itself to evade your defenses, and lower-skilled attackers are achieving higher success rates than ever before. For the first time in cybersecurity history, the barrier to entry for sophisticated attacks is collapsing while attack effectiveness is skyrocketing.

The traditional SOC model, human analysts responding to alerts at human speed, cannot match this pace. You are not just competing against human attackers anymore. You are competing against AI systems that never sleep.

✅ The AI SOC + Human Ally Model

We built UnderDefense MAXI as a next-generation agentic AI platform that delivers continuous threat detection, enrichment, and automated context gathering across the full stack, without vendor lock-in. The platform integrates with 250+ existing security tools, achieves 2-minute alert-to-triage and 15-minute escalation for critical incidents, and includes a capability no competitor offers: ChatOps user verification, where our analysts reach out directly to affected users via Slack or Teams to confirm suspicious activity.

UnderDefense maintains zero ransomware cases across all MDR customers over 6 years, with documented detection 2 days faster than CrowdStrike OverWatch. That record depends on the human layer, because AI-driven detection without human context still leaves gaps that only analysts communicating directly with users can close.

Q2. How Does an AI SOC Actually Work? From Alert Ingestion to Autonomous Investigation and Response

The 5-Stage AI SOC Pipeline

Understanding how an AI SOC operates requires walking through the actual workflow, step by step, no hand-waving. Here is the operational lifecycle as a 5-stage pipeline:

- Data Ingestion & Normalization — Unified telemetry from SIEM, EDR, NDR, cloud, identity, and SaaS environments is collected and standardized into a common schema. Your Splunk logs, CrowdStrike alerts, Okta identity events, and Azure AD signals all flow into one correlated data layer.

- AI-Driven Triage & Prioritization — Machine learning models score and classify every alert by severity, organizational context, and kill-chain position. Instead of a human eyeballing 500 alerts, the AI surfaces the 3 that actually matter.

- Automated Enrichment & Correlation — AI queries threat intelligence feeds, pulls historical logs, cross-references MITRE ATT&CK techniques, and correlates signals across domains. An endpoint alert gets matched against identity anomalies and network traffic patterns in seconds.

- Agentic Investigation — AI agents reason across the assembled evidence, construct investigation narratives, identify root cause, and determine the next investigative step dynamically, not from a pre-written script.

- Response & Containment — Automated or human-approved actions execute: credential revocation, endpoint isolation, lateral movement blocking, and user notification.
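To make the five stages concrete, here is a minimal Python sketch of the data flow under heavy assumptions: the `Event` schema, the raw field names, the severity threshold, and the 300-second correlation window are all hypothetical, and simple rules stand in for the ML triage and agentic reasoning a production platform would use. It illustrates the shape of the pipeline, not UnderDefense MAXI's implementation.

```python
from dataclasses import dataclass

# Stage 1: a hypothetical common schema that every source is normalized into.
@dataclass
class Event:
    source: str    # e.g. "okta", "defender"
    user: str
    action: str
    ts: float      # seconds since epoch
    severity: int  # 1 (low) .. 5 (critical)

def normalize(raw: dict, source: str) -> Event:
    # The raw keys ("actor", "event", "time", "sev") are illustrative,
    # not any vendor's real field names.
    return Event(source, raw["actor"].lower(), raw["event"],
                 float(raw["time"]), int(raw.get("sev", 1)))

def triage(events: list[Event], min_severity: int = 3) -> list[Event]:
    # Stage 2: a severity threshold stands in for an ML scoring model.
    return [e for e in events if e.severity >= min_severity]

def correlate(events: list[Event], window: float = 300.0) -> dict[str, list[Event]]:
    # Stage 3: keep users whose alerts span more than one source inside the window.
    by_user: dict[str, list[Event]] = {}
    for e in sorted(events, key=lambda e: e.ts):
        by_user.setdefault(e.user, []).append(e)
    return {
        user: evs for user, evs in by_user.items()
        if len({e.source for e in evs}) > 1 and evs[-1].ts - evs[0].ts <= window
    }

def investigate(user: str, evs: list[Event]) -> str:
    # Stage 4: a real agent would query more tools; here we only build a narrative.
    steps = "; ".join(f"{e.source}:{e.action}" for e in evs)
    return f"{user}: {len(evs)} correlated signals ({steps})"

def respond(evs: list[Event]) -> str:
    # Stage 5: critical clusters wait for a human; others are auto-contained.
    return "await_human_approval" if max(e.severity for e in evs) >= 5 else "auto_contain"

raw_feed = [
    ({"actor": "Jane", "event": "oauth_grant", "time": 1000, "sev": 4}, "okta"),
    ({"actor": "jane", "event": "new_inbox_rule", "time": 1090, "sev": 5}, "defender"),
    ({"actor": "bob", "event": "login", "time": 1100, "sev": 1}, "okta"),
]
events = triage([normalize(r, s) for r, s in raw_feed])
for user, evs in correlate(events).items():
    print(investigate(user, evs), "->", respond(evs))
```

Run against the three sample records, Bob's low-severity login is dropped at triage, while Jane's Okta and Defender signals are correlated into one cluster and, because one of them is critical, routed to a human approval gate rather than auto-contained.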

⏰ A Real-World Scenario: Legacy SOC vs. AI SOC

Consider this side-by-side comparison when a suspicious OAuth grant triggers at 2:41 AM across Microsoft Defender and Okta simultaneously:

| Stage | Legacy SOC | AI SOC |
|---|---|---|
| Alert correlation | Separate queues, no cross-reference | Both signals correlated in <2 seconds |
| Context enrichment | Manual log pulling at 7:15 AM | Identity context and threat intel match returned instantly |
| Investigation | Tier-1 analyst spends 45 min, escalates to Tier-2 | Agentic investigation: login location, device fingerprint, email rules checked |
| User verification | User contacted at 11:30 AM via email | ChatOps verification sent at 2:43 AM (“Did you authorize this OAuth app?”) |
| Containment | ~9 hours total response | Threat contained by 2:47 AM, 6 minutes total |

💡 Why This Is Not Just Better SOAR

The distinction matters. SOAR follows pre-scripted playbooks: if phishing, then block sender. An AI SOC reasons dynamically, adapts to novel attack patterns, and handles scenarios that no playbook anticipated. SOAR handles known-knowns; agentic AI handles unknown-unknowns. This distinction gets expanded further in Q4, but the architectural difference is fundamental: scripted automation breaks on novelty, while agentic AI treats novelty as its primary use case.

✅ How UnderDefense Operationalizes This

UnderDefense MAXI operationalizes this entire pipeline with 2-minute alert-to-triage and 15-minute escalation for critical incidents. Every investigative step is observable and auditable, no black box. The platform works on top of your existing SIEM (Splunk, Sentinel, Chronicle) without forcing vendor lock-in, because your business logic, your correlation rules, and your data should stay yours.

Q3. What Types of AI Power a Modern AI SOC? A Structured Taxonomy

The AI Taxonomy Table

An “AI SOC” is not one AI model running everything. It is multiple AI types working in concert, each handling what it does best across distinct layers of the SOC workflow. No competitor currently offers this structured mapping, and that gap is exactly where AI-washing thrives.

| AI Type | SOC Function | Workflow Stage | Real-World Example |
|---|---|---|---|
| Supervised ML | Alert classification, malware detection, known-threat scoring | Detection Layer | Classifying a file as malicious based on 10M+ labeled samples |
| Unsupervised ML | Anomaly detection, baseline deviation, insider threat identification | Detection Layer | Flagging a user who accessed 47 files at 3 AM when their baseline is 5/day |
| NLP | Log parsing, unstructured data analysis, threat intel extraction | Enrichment Layer | Extracting IOCs from a 40-page threat advisory in seconds |
| Generative AI (LLMs) | Investigation summarization, report generation, natural-language querying | Enrichment & Communication Layer | Summarizing a 200-event investigation into a 3-paragraph executive brief |
| UEBA (Behavioral Analytics) | User/entity behavior profiling, compromised credential detection | Detection Layer | Detecting that Jane’s account is being used by someone who types differently |
| Agentic AI | Autonomous multi-step investigation, dynamic reasoning, tool-calling | Orchestration Layer | Investigating a phishing chain across email, identity, and endpoint domains autonomously |
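The unsupervised-ML row describes baseline deviation. A per-user z-score is the simplest statistical version of that idea, shown here as a stand-in for the unsupervised models a production UEBA engine would use; the seven-day history and the threshold of 3 standard deviations are assumptions chosen for illustration.

```python
import statistics

def is_anomalous(history: list[int], today: int, threshold: float = 3.0) -> bool:
    """Flag today's count if it sits more than `threshold` standard
    deviations above this user's own historical mean."""
    mean = statistics.mean(history)
    std = statistics.stdev(history)  # sample standard deviation; needs >= 2 points
    if std == 0:
        return today > mean          # perfectly flat baseline: any increase stands out
    return (today - mean) / std > threshold

# A week of daily file-access counts averaging 5/day (illustrative data).
baseline = [4, 5, 6, 5, 5, 4, 6]
print(is_anomalous(baseline, 47))  # True: far above the personal baseline
print(is_anomalous(baseline, 6))   # False: normal day-to-day variation
```

The key design point is that the baseline is per user: 47 file accesses might be routine for a data engineer and alarming for someone who averages five.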

🧠 How These Layers Work Together

These AI types do not operate in isolation: they function as an integrated stack. ML and UEBA form the detection layer, catching threats and anomalies. NLP and Generative AI form the enrichment and communication layer, parsing data and translating findings into actionable language. Agentic AI sits on top as the orchestration layer, coordinating all others to drive multi-step investigations and response.

The analogy I use with security leaders: ML is the eyes, UEBA is the memory, GenAI is the voice, and Agentic AI is the brain that coordinates them all.

⚠️ Common Misunderstandings

Many vendors market rule-based SOAR playbooks as “AI-powered automation.” This is the AI-washing problem. A true AI SOC requires multiple AI types working in concert, not a legacy tool with a chatbot bolted on top. According to Omdia research, 39% of early adopters deploy agentic AI primarily to reduce costs and increase productivity. What makes these deployments genuinely “AI-native” is how they differ from traditional automation: continuous learning, adaptive decision-making, and contextual reasoning.

✅ UnderDefense MAXI’s Full AI Stack

UnderDefense MAXI employs this full AI stack, from ML-driven detection to agentic investigation workflows, while keeping every step auditable. Detection Logic as Code means detection rules are written in flexible languages like Python, version-controlled, unit-tested, and deployed via CI/CD: the governance foundation for production AI in the SOC.
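As a sketch of what Detection Logic as Code can look like, here is a hypothetical Python rule with its unit tests. The rule logic, the `APPROVED_APPS` allow-list, the 08:00 to 18:00 business-hours window, and the app name `MailSyncPro` are invented for illustration; in a CI/CD pipeline, a job would run tests like these and block deployment if any fail.

```python
import unittest

# Hypothetical allow-list of OAuth apps the organization has approved.
APPROVED_APPS = {"Zoom", "Salesforce"}

def detect_unapproved_oauth_grant(event: dict) -> bool:
    """Fire when a user grants OAuth consent to an unapproved app outside 08:00-18:00."""
    if event.get("type") != "oauth_grant":
        return False
    off_hours = not (8 <= event["hour"] < 18)
    return event["app"] not in APPROVED_APPS and off_hours

class TestUnapprovedOAuthGrant(unittest.TestCase):
    def test_fires_on_unapproved_app_at_night(self):
        self.assertTrue(detect_unapproved_oauth_grant(
            {"type": "oauth_grant", "app": "MailSyncPro", "hour": 2}))

    def test_ignores_approved_app(self):
        self.assertFalse(detect_unapproved_oauth_grant(
            {"type": "oauth_grant", "app": "Zoom", "hour": 2}))

    def test_ignores_business_hours(self):
        self.assertFalse(detect_unapproved_oauth_grant(
            {"type": "oauth_grant", "app": "MailSyncPro", "hour": 10}))

    def test_ignores_other_event_types(self):
        self.assertFalse(detect_unapproved_oauth_grant({"type": "login", "hour": 2}))

if __name__ == "__main__":
    # exit=False returns instead of calling sys.exit(); in CI you would typically
    # run `python -m unittest` so that failures set a nonzero exit code.
    unittest.main(argv=["detection-rule-tests"], exit=False)
```

Because the rule is ordinary code, it can be reviewed in a pull request, versioned alongside its tests, and rolled back like any other deployment.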

Q4. What Is Agentic AI in the SOC, and How Does It Differ From SOAR and Traditional Automation?

The Defining Capability Statement

Agentic AI in the SOC refers to AI agents that autonomously plan multi-step investigations, call external tools and APIs, reason over evidence, adapt based on findings, and take (or recommend) response actions, without pre-scripted playbooks. This is the single capability that separates a modern AI SOC from the SOAR-era automation most organizations still rely on.

SOAR vs. Agentic AI SOC: The Structural Difference

| Dimension | SOAR | Agentic AI SOC |
|---|---|---|
| Approach | If/then playbooks | Dynamic reasoning |
| Adaptability | Breaks on novel scenarios | Adapts to unknowns |
| Coverage | Known-knowns only | Unknown-unknowns |
| Maintenance | Requires constant playbook updates | Self-improving with each investigation |
| Investigation | Follows script | Reasons across evidence chains |
| Scale | Linear, more playbooks = more maintenance | Non-linear, learns across customer base |

Here is the contrast in practice: SOAR says if-phishing-then-block-sender. An agentic AI SOC says: this email has a suspicious link → domain registered 2 hours ago → same user clicked a similar link last week → credentials may be compromised → checking identity logs → confirming lateral movement → initiating containment. Each step is a decision, not a script.

⚙️ How Agentic AI Works: The OODA Loop

Agentic AI operates through a continuous reasoning cycle:

- Observe — Gather data from multiple sources (SIEM, EDR, identity, cloud, threat intel)

- Orient — Reason about what the evidence means in context

- Decide — Determine the next investigative step or response action

- Act — Execute, then loop back with new evidence
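The OODA cycle can be sketched as a loop in which each round of evidence determines the next step. The Python below replays the phishing chain described earlier, using hypothetical tool names and canned results. One caveat: to keep it runnable, a hand-written `decide` function stands in for the model-driven reasoning a real agent would perform, so this shows the loop structure, not the intelligence inside it.

```python
from __future__ import annotations

# Simulated tool results; in a real SOC these would be SIEM, identity,
# and threat-intel queries. All names and values here are hypothetical.
TOOLS = {
    "check_domain_age": {"domain_age_hours": 2},
    "check_click_history": {"clicked_similar_last_week": True},
    "check_identity_logs": {"impossible_travel": True},
    "check_lateral_movement": {"new_host_logins": 3},
}

def observe(action: str) -> dict:
    return TOOLS[action]

def orient(evidence: dict) -> set[str]:
    """Turn raw evidence into working hypotheses."""
    findings = set()
    if evidence.get("domain_age_hours", 999) < 24:
        findings.add("suspicious_domain")
    if evidence.get("clicked_similar_last_week"):
        findings.add("repeat_target")
    if evidence.get("impossible_travel"):
        findings.add("credentials_compromised")
    if evidence.get("new_host_logins", 0) > 0:
        findings.add("lateral_movement")
    return findings

def decide(findings: set[str], done: set[str]) -> str | None:
    """Pick the next step from what is known so far (stand-in for agent reasoning)."""
    if "lateral_movement" in findings:
        return "contain"
    if "credentials_compromised" in findings and "check_lateral_movement" not in done:
        return "check_lateral_movement"
    if {"suspicious_domain", "repeat_target"} <= findings and "check_identity_logs" not in done:
        return "check_identity_logs"
    if "suspicious_domain" in findings and "check_click_history" not in done:
        return "check_click_history"
    if "check_domain_age" not in done:
        return "check_domain_age"
    return None  # nothing left worth checking: close or escalate

def run_ooda(max_steps: int = 10) -> list[str]:
    evidence: dict = {}
    done: set[str] = set()
    trail: list[str] = []
    for _ in range(max_steps):
        action = decide(orient(evidence), done)
        if action is None:
            break
        trail.append(action)
        if action == "contain":            # Act: terminal response step
            break
        evidence.update(observe(action))   # Act, then loop back with new evidence
        done.add(action)
    return trail

print(" -> ".join(run_ooda()))
```

Running it prints `check_domain_age -> check_click_history -> check_identity_logs -> check_lateral_movement -> contain`. If the identity logs come back clean (set `impossible_travel` to `False`), the loop stops after three checks without containing, which is the point where an analyst would close or escalate.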

Multi-agent systems (MAS) use specialized agents (an identity agent, an endpoint agent, a cloud agent) coordinated by an orchestrator agent that delegates tasks based on what each investigation requires. Google’s SecOps Alert Triage Agent, for example, autonomously investigates alerts by gathering evidence, running analyses like decoding obfuscated scripts, and correlating signals across tools to deliver a clear verdict with explanation. Model Context Protocol (MCP) is emerging as a standard for AI agent-to-tool communication, enabling these agents to interact with security tools through a unified interface.
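A minimal sketch of the orchestrator pattern: three hypothetical agents, each a plain Python function over one domain, and an orchestrator that calls only the agents an alert's domains require, then merges their findings into a verdict. The detection heuristics and the two-finding threshold for a "malicious" verdict are assumptions. In a real deployment each agent would reach its tools through an interface such as MCP; no MCP API is modeled here.

```python
from typing import Callable

# Hypothetical specialized agents: each inspects one domain and returns findings.
def identity_agent(alert: dict) -> list[str]:
    return ["mfa_bypassed"] if alert.get("mfa") == "skipped" else []

def endpoint_agent(alert: dict) -> list[str]:
    return ["encoded_powershell"] if "-enc" in alert.get("cmdline", "") else []

def cloud_agent(alert: dict) -> list[str]:
    return ["new_access_key"] if alert.get("api_call") == "CreateAccessKey" else []

AGENTS: dict[str, Callable[[dict], list[str]]] = {
    "identity": identity_agent,
    "endpoint": endpoint_agent,
    "cloud": cloud_agent,
}

def orchestrate(alert: dict) -> dict:
    """Delegate only to the agents whose domains the alert touches, then merge."""
    findings = {d: AGENTS[d](alert) for d in alert["domains"] if d in AGENTS}
    hits = [f for fs in findings.values() for f in fs]
    verdict = "malicious" if len(hits) >= 2 else "needs_review" if hits else "benign"
    return {"findings": findings, "verdict": verdict}

alert = {"domains": ["identity", "endpoint"], "mfa": "skipped",
         "cmdline": "powershell.exe -enc SQBFAFgA"}
print(orchestrate(alert)["verdict"])  # malicious
```

The cloud agent never runs for this alert, which is the point of delegation: the orchestrator spends effort only where the investigation needs it, and independent findings from two domains raise confidence more than either would alone.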

💡 Why This Matters for Your SOC

Agentic AI handles the mechanical 80% of investigation work (context collection, log correlation, enrichment, user verification) at machine speed. Investigations that took 45 minutes complete in seconds. Tier-1/Tier-2 analyst work is automated, so human experts can focus on novel threats and strategic decisions.

The other side of this equation is equally important: attackers are already using agentic AI. Automated reconnaissance maps infrastructure in minutes. Adaptive malware rewrites itself to evade defenses. Exploitation frameworks automatically chain vulnerabilities. If your SOC is still operating at human speed with manual processes, you have already lost the asymmetric race.

✅ UnderDefense MAXI: Augment, Don’t Replace

UnderDefense MAXI’s agentic AI doesn’t just automate; it augments. The platform collects context, queries your SIEM, enriches with threat intel, and reaches out to affected users via Slack/Teams to verify suspicious activity. But the human analyst always makes the final call on critical decisions. Every AI action is observable and auditable, because in security, explainability is not optional. We do not believe in black boxes. If you cannot see what the AI did and why, you cannot trust it in production.

Q5. AI SOC vs. Traditional SOC: What Changes When AI Runs Your Security Operations?

Why This Comparison Matters Now

If you are running a traditional SOC with 5 to 15 analysts handling thousands of daily alerts manually, you are facing a math problem that does not solve itself with headcount. The average enterprise SOC receives over 3,800 alerts daily, yet 62% of those alerts are ignored. The question is not whether AI belongs in your SOC, but whether your SOC can survive without it.

Both models have genuine strengths. This comparison lays out where each one breaks and where it holds, so you can make a decision based on operational reality, not vendor marketing.

✅ Traditional SOC: What It Does Well

The traditional SOC brings human judgment on complex, ambiguous threats. Analysts carry institutional knowledge, understand organizational context, and build relationship-driven response workflows. For highly nuanced investigations, such as insider threats tied to business processes, experienced human analysts remain irreplaceable.

❌ Where the Traditional SOC Breaks

The structural limitations are well-documented and worsening:

- Alert fatigue: 76% of security leaders report alert fatigue; 77% of organizations see rising alert volumes year over year.

- Analyst burnout: 71% of SOC analysts report burnout due to alert fatigue, with average tenure shrinking to under 18 months.

- Talent shortage: The global cybersecurity workforce gap hit 4.8 million in 2024, a 19% increase year-over-year, with 90% of teams reporting skills gaps beyond headcount.

- Inconsistent quality: Night shift vs. day shift variance means detection quality fluctuates based on who is on call.

- Reactive posture: Manual correlation across siloed tools leaves teams chasing alerts instead of hunting threats.

⚙️ Head-to-Head Comparison

| Dimension | Traditional SOC | AI SOC |
|---|---|---|
| Alert Triage Speed | Hours (queue-dependent) | Seconds (automated) |
| Investigation Depth | Single-tool surface review | Multi-source cross-domain correlation |
| Scalability | Linear headcount growth | Elastic AI processing |
| 24/7 Consistency | Shift-dependent, fatigue-prone | Always-on, fatigue-free |
| False Positive Handling | Manual review per alert | AI + ChatOps user verification |
| Threat Hunting | Reactive/ad-hoc | Continuous/proactive |
| Cost Per Alert | High and rising with headcount | Decreasing with scale |
| Human Role | Alert triage operator | Strategic decision-maker |

✅ How UnderDefense Bridges the Gap

UnderDefense MAXI bridges this divide: AI handles the 80% mechanical work (triage, enrichment, correlation, user verification), while dedicated Tier 3 to 4 analysts handle the 20% requiring human judgment, organizational context, and strategic decision-making. The result: 2-minute alert-to-triage, 15-minute critical escalation, and zero ransomware across 500+ clients over 6 years.

“Our IT team was overwhelmed by the sheer volume of security alerts and doesn’t have the resources for 24/7 monitoring.”

— Andriy H., Co-Founder and CTO UnderDefense G2 – Verified Review

“Not having to worry about ransomware, alert overload and reporting. Getting a clear view of my security posture, where the threats are coming from and how they are handled. They literally took care of all our problems.”

— Arlin O., Enterprise UnderDefense G2 – Verified Review

“Started out well but over the years the service has consistently not met expectations. The issues that we have experienced has greatly outweighed the benefits.”

— CISO, Manufacturing Arctic Wolf – Gartner Verified Review

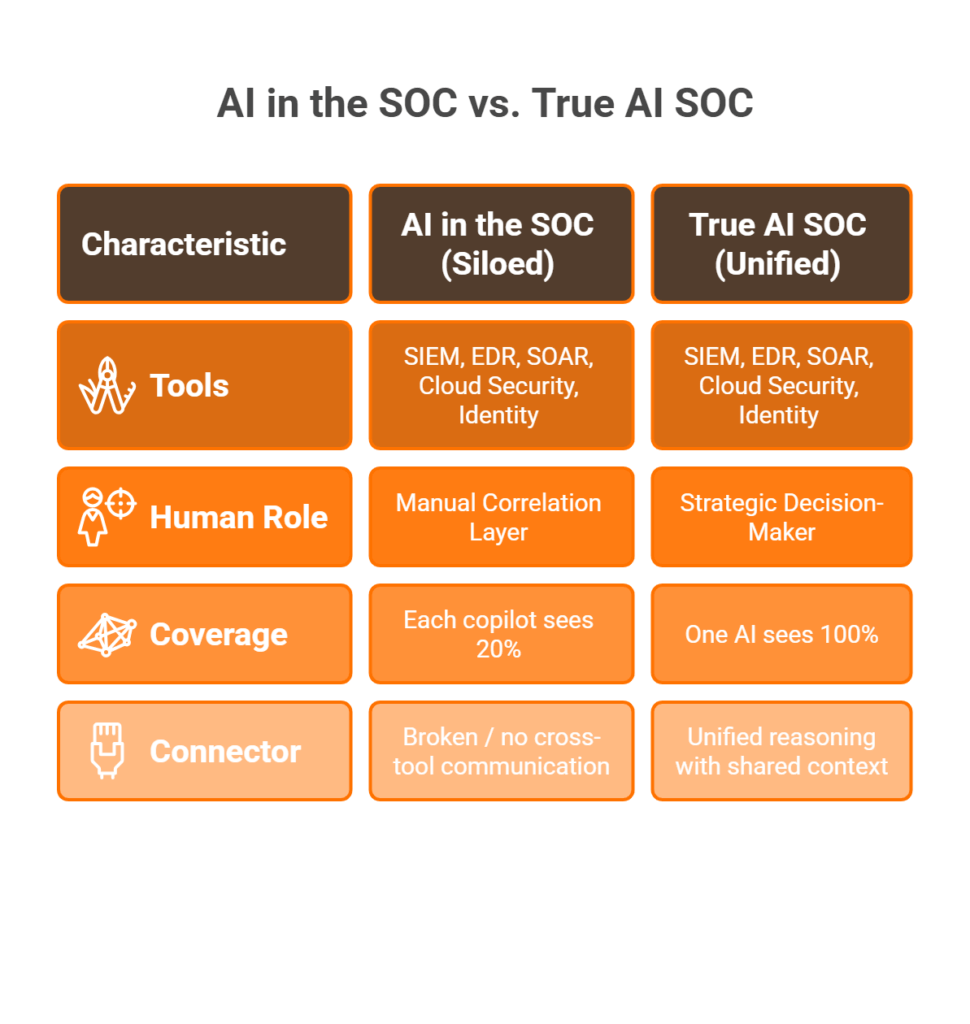

Q6. “AI in the SOC” vs. a True “AI SOC,” and How to Spot AI-Washing

⚠️ The Scenario Every Security Leader Faces

Your SIEM vendor just announced an AI copilot. Your EDR added a GenAI summary button. Your SOAR platform now has “AI-assisted” playbook suggestions. You have AI in your SOC, but you do not have an AI SOC. The difference matters more than any vendor will tell you.

The Conceptual Distinction

“AI in the SOC” = individual tools with siloed AI features, each operating within its own data and context. No single AI reasons across all of them. You remain the manual correlation layer, stitching together five different “AI-powered” dashboards that each see 20% of your environment.

A true “AI SOC” = an architectural paradigm where AI operates as a unified reasoning layer across your entire stack (endpoint, identity, cloud, network, SaaS) with shared context, cross-domain correlation, and end-to-end investigation capability.

📌 Quick Reference

- AI in the SOC → AI features added to individual tools (siloed)

- AI SOC → AI as the unified operating system across all tools (integrated)

💸 The Hidden Cost of “Fake AI”

Teams with “AI in the SOC” still spend 10 to 15 hours per week on manual correlation across tools. Alert fatigue, which 76% of security leaders report, persists because each AI tool only sees its own slice. The illusion of AI coverage creates a dangerous false sense of security: you think you are protected by AI, but your detection gaps sit exactly where the siloed AI models cannot see.

❌ AI-Washing Red Flags Checklist

Score your current vendor, or your next vendor, against these 7 criteria:

- Vendor calls SOAR playbooks “AI-powered automation.” If every action follows if/then logic, it is scripting, not AI.

- AI only works within one proprietary tool. A true AI SOC reasons across your entire stack.

- No explainability or audit trail for AI decisions. You cannot inspect what the AI did or why.

- “AI” is a GenAI chatbot summarizing alerts. Summarizing is not investigating.

- Vendor cannot describe which AI types (ML, NLP, agentic) are used where. Vague “AI-driven” claims with no specifics.

- Marketing says “AI-driven” but SOC still escalates “please investigate” tickets back to your team. Detection without response is noise.

- Platform forces you to replace existing tools. Vendor lock-in disguised as “unified AI.”

Scoring:

⭐ 0 to 2 flags = Likely genuine AI SOC

⚠️ 3 to 4 flags = Significant AI-washing risk

❌ 5 to 7 flags = Legacy tool with an AI label
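The scoring bands are simple enough to encode directly. This Python sketch counts which of the seven red flags apply and returns the matching band; the flag identifiers are hypothetical shorthand for the checklist items, not a standard taxonomy.

```python
# Hypothetical shorthand for the seven checklist items, in order.
RED_FLAGS = (
    "soar_called_ai",        # SOAR playbooks marketed as AI
    "single_tool_ai",        # AI only works inside one proprietary tool
    "no_audit_trail",        # no explainability for AI decisions
    "summary_chatbot_only",  # "AI" is a GenAI alert summarizer
    "ai_types_unspecified",  # vendor cannot say which AI types run where
    "escalates_tickets",     # "please investigate" tickets come back to you
    "forces_replacement",    # existing tools must be replaced
)

def ai_washing_verdict(flags: set[str]) -> str:
    """Map the number of red flags that apply to the scoring bands."""
    unknown = flags - set(RED_FLAGS)
    if unknown:
        raise ValueError(f"unknown red flags: {sorted(unknown)}")
    count = len(flags)
    if count <= 2:
        return "Likely genuine AI SOC"
    if count <= 4:
        return "Significant AI-washing risk"
    return "Legacy tool with an AI label"

print(ai_washing_verdict({"single_tool_ai", "no_audit_trail", "escalates_tickets"}))
# Significant AI-washing risk
```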

✅ How UnderDefense Passes Every Criterion

UnderDefense MAXI passes all seven: agentic AI (not scripted playbooks), cross-stack reasoning (250+ integrations, no vendor lock-in), full audit trails on every AI action, multiple AI types deployed at specific workflow stages, and ChatOps that verifies alerts directly with users instead of escalating tickets back to your team.

The shift is straightforward: from 5 separate AI copilots each seeing 20%, to one agentic AI SOC seeing 100%. Use this checklist in your next vendor evaluation call.

Q7. The AI SOC Maturity Model: Where Is Your Organization on the Journey From Manual to Fully Agentic?

The 4-Stage Progression Framework

Most organizations know they need AI in their SOC, but few can articulate where they are today or what the next step looks like. The Cloud Security Alliance recently formalized AI maturity levels for cybersecurity, ranging from manual operations (L0) through full AI delegation (L4). Here is a practical adaptation of that framework, mapped to real SOC operational stages:

| Stage | Name | Key Characteristics | Typical Tools | Limitations |
|---|---|---|---|---|
| 1 | Manual SOC | Human-driven triage, investigation, response; siloed tools; tribal knowledge | SIEM + EDR + manual processes | Does not scale; 70%+ alerts ignored; analyst burnout; reactive only |
| 2 | Automated (SOAR-Era) | Scripted playbooks for known scenarios; partial automation of repetitive tasks | SIEM + SOAR + EDR | Breaks on novel threats; constant playbook maintenance; still siloed |
| 3 | AI-Augmented | ML-driven detection + GenAI summarization + partial AI triage; humans still lead investigation | SIEM + XDR + AI copilots | AI features siloed per tool (“AI in the SOC”); cross-domain correlation still manual |
| 4 | Fully Agentic AI SOC | Agentic AI orchestrates end-to-end: triage, investigation, user verification, containment; humans make strategic decisions | Unified AI SOC platform | Requires trust in AI explainability; governance frameworks essential |

🔍 Self-Assessment: Identify Your Stage

- If your analysts manually investigate most alerts → Stage 1

- If you have SOAR playbooks but they break on novel threats → Stage 2

- If you have AI features in individual tools but still correlate manually across them → Stage 3

- If AI autonomously investigates across your full stack and humans focus on strategic decisions → Stage 4
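The self-assessment is a short decision procedure. Here is a sketch with hypothetical parameter names; the design choice worth noting is that it checks the highest stage first, because organizations at later stages usually keep the tooling of earlier ones (a Stage 3 SOC often still runs SOAR).

```python
def maturity_stage(ai_investigates_full_stack: bool,
                   has_per_tool_ai_features: bool,
                   has_soar_playbooks: bool) -> int:
    """Return the Stage (1-4) implied by the self-assessment answers,
    checking the highest stage first."""
    if ai_investigates_full_stack:
        return 4
    if has_per_tool_ai_features:
        return 3  # AI features exist, but correlation across them is still manual
    if has_soar_playbooks:
        return 2
    return 1      # analysts investigate most alerts manually

print(maturity_stage(False, True, True))  # 3
```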

⏰ The Industry Is Moving Fast

Most organizations today sit at Stage 2 to 3, and the industry is rapidly moving toward Stage 4. The jump from Stage 3 to Stage 4 requires an architectural shift, not just adding more AI features to existing tools. This is the “AI in the SOC vs. AI SOC” distinction from Q6, applied to organizational maturity: you cannot get to Stage 4 by bolting more copilots onto a fragmented stack.

As the CSA framework puts it, each maturity level reflects a shift not only in technology, but in people and processes. Analysts evolve from executors to strategic overseers.

✅ UnderDefense MAXI: Purpose-Built for Stage 4

UnderDefense MAXI is purpose-built for Stage 4: fully agentic investigation, cross-stack correlation across 250+ tools, ChatOps user verification, and human analyst oversight for strategic decisions. Organizations at Stage 1 to 3 can onboard in 30 days and leap directly to Stage 4 capabilities, without replacing their existing stack.

Q8. AI SOC Governance, Compliance, and Explainability: What CISOs Must Know

AI in your SOC introduces a new governance surface that most compliance frameworks have not caught up with yet. Every automated decision must be explainable, auditable, and aligned with your regulatory obligations. Here is what CISOs must address:

⚠️ Key Governance Requirements

- Explainability: Every AI investigation step must produce a human-readable audit trail: what data was analyzed, what reasoning was applied, what action was taken, and why. Without this, you cannot defend AI-driven decisions to auditors or regulators.

- Data Privacy: AI SOC platforms ingest sensitive telemetry across your entire environment. Data residency, encryption at rest and in transit, and access controls must meet regulatory standards (SOC 2, ISO 27001, HIPAA, GDPR).

- Regulatory Mapping: AI-driven detections must map to compliance control frameworks, not just find threats, but generate evidence that satisfies auditors automatically.

- Human Override: Critical containment actions require human approval gates. AI recommends; humans authorize for high-impact decisions. This is non-negotiable for regulated environments.

- Bias and False Positive Governance: ML models must be continuously validated to prevent detection drift, bias toward specific threat patterns, or systemic false positive generation.
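Two of these requirements, the audit trail and the human override, can be sketched together. The Python below records the data analyzed, the reasoning, and the action for every AI proposal, and holds high-impact actions until a named human approves them. The `HIGH_IMPACT_ACTIONS` set, the record fields, and the status labels are assumptions for illustration, not a compliance-certified schema.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional

# Assumption: which actions count as "high impact" is a per-organization policy.
HIGH_IMPACT_ACTIONS = {"isolate_host", "disable_account", "revoke_all_sessions"}

@dataclass
class AuditRecord:
    timestamp: str            # when the AI proposed the action (UTC, ISO-8601)
    data_analyzed: list[str]  # which sources the reasoning relied on
    reasoning: str            # human-readable explanation of the decision
    action: str
    status: str               # "approved_for_execution" or "pending_human_approval"
    approved_by: Optional[str] = None

def propose_action(action: str, data_analyzed: list[str], reasoning: str,
                   approved_by: Optional[str] = None) -> AuditRecord:
    """Record every proposed action; hold high-impact ones until a human signs off."""
    gated = action in HIGH_IMPACT_ACTIONS and approved_by is None
    return AuditRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        data_analyzed=data_analyzed,
        reasoning=reasoning,
        action=action,
        status="pending_human_approval" if gated else "approved_for_execution",
        approved_by=approved_by,
    )

record = propose_action(
    "isolate_host",
    ["edr:host-42 process tree", "okta:jane sign-in log"],
    "Encoded PowerShell ran minutes after an MFA-bypassed sign-in by the same user.",
)
# Printed here; a real deployment would presumably write to an append-only store.
print(json.dumps(asdict(record), indent=2))
```

Serializing each record as JSON keeps the trail readable by both auditors and tooling, and the approval gate makes "AI recommends, humans authorize" a property of the code path rather than a policy document.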

✅ UnderDefense’s Governance-First Approach

UnderDefense MAXI is built for governance-first AI: every AI action is observable and auditable in real-time. The platform keeps logs and AI data in your data lake (on-prem or customer-specific cloud: Azure, GCP, AWS, Oracle). MAXI Compliance provides automated evidence collection, continuous control validation, and audit-ready reporting for SOC 2, ISO 27001, HIPAA, and PCI-DSS, built on actual security operations telemetry, not a standalone checklist tool.

💰 Compliance as a Byproduct, Not a Project

UnderDefense clients achieve full regulatory compliance across ISO 27001, SOC 2 Type 1 and 2, and HIPAA, with compliance kits included at no additional cost. Compliance evidence should be a byproduct of security operations, not a separate project requiring separate tooling and separate budget.

“UnderDefense also helped us navigate key compliance requirements, ensuring we met industry standards smoothly and efficiently. What stood out the most was their responsiveness and flexibility.”

— Arman N., CTO UnderDefense G2 – Verified Review

“Their reports from the platform give us clear evidence of our security controls and incident response capabilities. When auditors or clients ask questions about our security posture, we can pull up exactly what they need to see.”

— Verified User, Marketing & Advertising UnderDefense G2 – Verified Review

Q9. Will AI Replace Human SOC Analysts, or Redefine Their Role Entirely?

“If AI can triage alerts, investigate incidents, and contain threats autonomously, why would anyone need human SOC analysts?”

It’s the most common question I hear from CISOs and IT Directors evaluating an AI SOC. And I won’t dismiss it, because the concern is legitimate.

⚠️ The Honest Truth About Tier-1 and Tier-2 Work

AI SOC platforms are explicitly designed to automate Tier-1 and Tier-2 investigation work, the exact tasks consuming 80% of a junior analyst’s day. Context collection, log correlation, enrichment, and known-pattern matching are mechanical processes. If your entire value proposition is manually triaging alerts, AI will replace that function. That is not a maybe; it is already happening.

But the “AI replaces analysts” narrative confuses the mechanical 80% with the strategic 20%. That 20% is where breaches actually get stopped.

The New SOC Org Chart

The SOC isn’t disappearing. It’s reorganizing:

| SOC Function | AI SOC Impact | Human Role |
|---|---|---|
| Tier-1 Alert Triage | ✅ Fully automated | Eliminated as standalone role |
| Tier-2 Investigation | ✅ AI-augmented (AI gathers evidence, human validates) | Validates, not investigates from scratch |
| Detection Engineering | ❌ Human-led (detection logic as code) | More critical than ever |
| Threat Hunting | ✅ Human + AI collaboration | Strategic, hypothesis-driven |
| Incident Command | ❌ Human leadership with AI-generated intel | Executive decision-making |
| AI Operations / Tuning | 🆕 Entirely new role | Managing AI SOC performance |

The net effect: fewer triage operators, more strategic roles, and total headcount needed for a mature SOC drops by 60 to 70%.

✅ The AI SOC + Human Ally Model in Practice

This is exactly why we built the AI SOC + Human Ally model at UnderDefense. UnderDefense MAXI’s agentic AI collects context, correlates signals across 250+ tools, and verifies suspicious activity with users directly via Slack, Teams, or email. But dedicated Tier 3 to 4 human analysts make containment decisions, communicate with your team, and learn your organization’s context: VIPs, critical assets, and business processes no AI model can infer from logs alone.

AI finds patterns. Humans understand intent. That combination is why we maintain zero ransomware cases across 500+ MDR clients, and why our net dollar retention is 113%.

“UnderDefense has changed our approach to cybersecurity. At first, we hired them for managed SIEM service, but after they demonstrated the value of MDR, our management was motivated to act on it.”

— Yaroslava K., IT Project Manager UnderDefense G2 – Verified Review

“Their team is proactive in identifying and addressing threats, providing 24/7 oversight… it lets me focus on strategy, knowing the day-to-day security is managed effectively.”

— Oleg K., Director Information Security UnderDefense G2 – Verified Review

“Started out well but over the years the service has consistently not met expectations… Analysts provide little context, and when asked for more information in the investigation nothing is ever provided.”

— CISO, Manufacturing ⭐⭐ Arctic Wolf – Gartner Verified Review

Q10. Measuring AI SOC Success: Key Metrics, ROI Benchmarks, and a Vendor Evaluation Framework

Every vendor claims AI. Every demo looks impressive. But when the sales call ends and the SOC lights up at 2 AM, the only thing that matters is whether the system actually detects, investigates, and contains threats, measurably.

⏰ Six Critical AI SOC Metrics

| Metric | Definition | Traditional Benchmark | AI SOC Benchmark |
|---|---|---|---|
| MTTD | Time from threat entry to identification | 200+ days | < 24 hours |
| Alert-to-Triage Time | Alert firing to classification + enrichment | 30 to 60 min | < 2 min |
| MTTC | Confirmed threat to containment action | 4 to 24 hours | < 15 min |
| False Positive Reduction | % decrease in false alerts after AI tuning | Baseline | 80 to 99% reduction |
| Analyst Productivity | Alerts resolved per analyst per shift | 1x | 3 to 5x improvement |
| Cost Per Resolved Alert | Total SOC cost ÷ meaningful resolutions | Baseline | 60 to 70% reduction |

The ultimate metric remains breach prevention rate. Speed without accuracy creates faster wrong answers.
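The first three metrics are intervals between timestamps, so they can be computed directly from incident timelines. This Python sketch averages them over two hypothetical incidents; the field names and timestamps are invented, and using the alert time as the identification time for MTTD is a simplifying assumption.

```python
from datetime import datetime
from statistics import mean

# Hypothetical incident timelines. "alert" marks when the threat was identified.
INCIDENTS = [
    {"entry": "2025-03-01T02:10:00", "alert": "2025-03-01T02:41:00",
     "triaged": "2025-03-01T02:42:30", "confirmed": "2025-03-01T02:44:00",
     "contained": "2025-03-01T02:47:00"},
    {"entry": "2025-03-02T09:00:00", "alert": "2025-03-02T13:00:00",
     "triaged": "2025-03-02T13:02:00", "confirmed": "2025-03-02T13:10:00",
     "contained": "2025-03-02T13:19:00"},
]

def minutes_between(start: str, end: str) -> float:
    delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
    return delta.total_seconds() / 60

def soc_metrics(incidents: list[dict]) -> dict[str, float]:
    """Average each interval across incidents, following the table's definitions."""
    return {
        "mttd_min": mean(minutes_between(i["entry"], i["alert"]) for i in incidents),
        "alert_to_triage_min": mean(minutes_between(i["alert"], i["triaged"]) for i in incidents),
        "mttc_min": mean(minutes_between(i["confirmed"], i["contained"]) for i in incidents),
    }

metrics = soc_metrics(INCIDENTS)
print(metrics)  # {'mttd_min': 135.5, 'alert_to_triage_min': 1.75, 'mttc_min': 6.0}
```

In this made-up sample, alert-to-triage averages 1.75 minutes and MTTC 6 minutes, both inside the AI SOC benchmarks in the table. Breach prevention rate cannot be derived from timestamps like these; it has to be tracked from incident outcomes.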

❌ The Wrong Way to Evaluate

Choosing based on brand recognition, integration count alone, or AI buzzword density in marketing materials will get you an expensive alert feed, not a security operations partner. The difference is whether the AI can reason across your stack, verify threats with users, and contain incidents, or whether it’s a chatbot on a dashboard.

7-Criteria Evaluation Framework (0 to 2 each, max 14)

- Cross-Stack AI Reasoning — Correlates across SIEM, EDR, identity, cloud, and SaaS?

- Agentic Investigation — Autonomous multi-step investigation or scripted playbooks?

- Human Analyst Access — Direct Tier 3 to 4 communication or ticket escalation?

- User Verification (ChatOps) — Verifies activity with affected users directly?

- Explainability & Audit Trail — Every AI decision observable and auditable?

- Vendor Agnosticism — Works with existing stack or forces proprietary replacement?

- Documented Outcomes — Published MTTD, alert-to-triage, and breach prevention metrics?

📊 Scoring: 12 to 14 = Genuine AI SOC with operational partnership. 8 to 11 = Partial AI SOC with gaps. Below 8 = “AI in the SOC” marketing, not a true AI SOC.
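The 7-criteria framework reduces to a sum and three bands. This Python sketch checks that every criterion has a score of 0, 1, or 2 and returns the total with its band; the criterion keys are hypothetical shorthand for the list above, and the example scores are made up.

```python
CRITERIA = (
    "cross_stack_reasoning", "agentic_investigation", "human_analyst_access",
    "user_verification", "explainability", "vendor_agnosticism",
    "documented_outcomes",
)

def evaluate_vendor(scores: dict[str, int]) -> tuple[int, str]:
    """Sum seven 0-2 scores (max 14) and map the total to a band."""
    missing = set(CRITERIA) - set(scores)
    if missing:
        raise ValueError(f"unscored criteria: {sorted(missing)}")
    if any(scores[c] not in (0, 1, 2) for c in CRITERIA):
        raise ValueError("each criterion must be scored 0, 1, or 2")
    total = sum(scores[c] for c in CRITERIA)
    if total >= 12:
        return total, "Genuine AI SOC with operational partnership"
    if total >= 8:
        return total, "Partial AI SOC with gaps"
    return total, "AI in the SOC marketing, not a true AI SOC"

# Example: strong overall, but no direct analyst access and partial user verification.
example = dict.fromkeys(CRITERIA, 2) | {"human_analyst_access": 0, "user_verification": 1}
print(evaluate_vendor(example))  # (11, 'Partial AI SOC with gaps')
```

Rejecting unscored criteria matters in practice: a vendor that skips a question should not quietly score as if the answer were zero or two.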

✅ UnderDefense Scorecard

| Criterion | Score | Evidence |
|---|---|---|
| Cross-Stack AI Reasoning | ✅ 2 | 250+ integrations, any SIEM |
| Agentic Investigation | ✅ 2 | Auditable autonomous workflows |
| Human Analyst Access | ✅ 2 | Dedicated Tier 3 to 4 concierge |
| User Verification | ✅ 2 | Slack / Teams / Email / SMS |
| Explainability | ✅ 2 | Every action observable real-time |
| Vendor Agnosticism | ✅ 2 | Preserves existing investments |
| Documented Outcomes | ✅ 2 | 2-min triage, 15-min escalation, 0 ransomware/6 years, 830% ROI |
| TOTAL | 14/14 | |

“Their proactive threat hunting and rapid response have saved us from incidents that could have been incredibly costly.”

— Verified User, Program Development UnderDefense G2 – Verified Review

“We received little value from ArcticWolf. The product offered little visibility when we were using it… Anything you want to look at or changes you need to make must go through their engineering team.”

— Matt C., Manager, Cybersecurity Services ⭐½ Arctic Wolf – G2 Verified Review

Q11. Who Are the Best SOC-as-a-Service Providers for AI-Driven Security Operations?

The leading SOC-as-a-Service providers for AI-driven security operations in 2026 include UnderDefense, Arctic Wolf, CrowdStrike Falcon Complete, Expel, and ReliaQuest, each with distinct architectural approaches to AI integration, response capability, and pricing models.

What Separates AI-Native SOCaaS from Legacy Monitoring

- Integration approach: vendor-agnostic (works with your stack) vs. proprietary lock-in

- AI architecture: agentic investigation vs. AI features bolted onto legacy tools

- Response capability: full containment + remediation vs. detection-only with ticket escalation

- Human analyst access: direct Tier 3 to 4 communication vs. ticket-based support

- Pricing transparency: published per-endpoint rates vs. opaque “contact sales”

Choosing the Right Fit

Each provider excels in different scenarios: Arctic Wolf for single-vendor simplicity, UnderDefense for existing stack integration with the AI SOC + Human Ally model, and CrowdStrike for Falcon-native environments. The right choice depends on your current stack, team maturity, and whether you need detection-only or full detection + response.

📌 Methodology

This analysis is based on documented response times, G2 reviews, published pricing, MITRE ATT&CK coverage, and operational outcomes across 500+ MDR deployments.

Q12. Frequently Asked Questions About AI SOCs

Q: What does AI SOC stand for?

AI SOC stands for Artificial Intelligence Security Operations Center, a security operations center where AI performs investigation, triage, enrichment, and response tasks traditionally handled by human analysts. Rather than replacing the SOC entirely, AI transforms how alerts are processed and resolved at machine speed.

Q: How is an AI SOC different from a traditional SOC?

A traditional SOC relies on human analysts for alert triage and investigation. An AI SOC uses agentic AI to autonomously investigate, correlate across tools, and verify threats, reducing time-to-triage from hours to minutes. The key difference: AI SOCs reason across your full stack instead of investigating tool-by-tool.

Q: What is agentic AI in cybersecurity?

Agentic AI refers to AI agents that autonomously plan, reason, and execute multi-step security investigations, adapting dynamically rather than following pre-scripted playbooks like SOAR. Where SOAR runs “if X, then Y” logic, agentic AI determines what to investigate next based on findings in real time.

Q: Will AI SOC replace human security analysts?

AI SOC automates Tier-1/Tier-2 mechanical work (80% of analyst time) but cannot replace human strategic judgment, business context understanding, or novel threat hunting. The result is fewer triage operators, more strategic roles like Detection Engineers, Threat Hunters, and Incident Commanders.

Q: What types of AI are used in a SOC?

Modern AI SOCs use six AI types in layers: supervised ML (detection), unsupervised ML (anomaly detection), NLP (log parsing), generative AI (summarization), UEBA (behavioral analytics), and agentic AI (orchestration). Each layer handles a distinct function in the detection-to-response pipeline.

Q: How much does an AI SOC cost?

💰 In-house AI SOC buildout requires $1M to $3M+ annually (tools + staff). AI SOC-as-a-Service from providers like UnderDefense ranges from $11 to $15 per endpoint per month and includes 24/7 monitoring, Tier 3 to 4 analyst access, and compliance support, a fraction of the in-house cost.

Q: What is the difference between SOAR and AI SOC?

SOAR executes pre-scripted if/then playbooks for known scenarios. An AI SOC uses agentic AI that reasons dynamically, adapts to novel threats, and handles situations never previously encountered. SOAR is automation; AI SOC is autonomous investigation with human oversight.

Q: How do I evaluate if an AI SOC vendor is legitimate or AI-washing?

Demand a live investigation demo (not a dashboard walkthrough), ask which specific AI types are deployed, verify explainability and audit trails, and check whether the platform works with your existing tools or forces vendor lock-in. Use the 7-criteria evaluation framework in Q10 to score any vendor objectively.

1. What is an AI SOC, and how does it differ from adding AI features to existing security tools?

An AI SOC is a security operations center where artificial intelligence operates as the unified reasoning layer across your entire security stack, not as a feature bolted onto individual tools. The distinction matters because most vendors market “AI in the SOC,” which means siloed AI features inside separate products (your EDR has one AI, your SIEM has another, your SOAR has a third), but none of them communicate or reason together. You remain the manual correlation layer stitching dashboards together.

A true AI SOC, by contrast, ingests telemetry from endpoints, identity providers, cloud environments, network sensors, and SaaS platforms into a single reasoning engine. This engine correlates signals cross-domain, investigates autonomously through multi-step workflows, and verifies suspicious activity directly with affected users. We built UnderDefense MAXI on this principle: one agentic AI layer seeing 100% of your environment, not five separate copilots each seeing 20%. The operational difference shows up in triage speed (seconds vs. hours), investigation depth (cross-domain vs. single-tool), and consistency (always-on vs. shift-dependent).
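The cross-domain correlation idea can be illustrated with a deliberately simple Python sketch: group events by entity and flag any entity whose events span at least two telemetry sources inside one time window. This is a hypothetical example, not how UnderDefense MAXI works internally; the `Event` type and `correlate` function are invented for illustration, and the sketch assumes events already share a normalized entity identifier, which in practice is a large part of the work.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List


@dataclass(frozen=True)
class Event:
    source: str        # telemetry domain, e.g. "edr", "identity", "cloud", "saas"
    entity: str        # normalized user or host identifier (assumed)
    timestamp: datetime
    description: str


def correlate(events: List[Event], window: timedelta,
              min_domains: int = 2) -> Dict[str, List[Event]]:
    """Return, per entity, the first sliding window of events that spans
    at least `min_domains` distinct sources within `window`."""
    by_entity: Dict[str, List[Event]] = defaultdict(list)
    for e in events:
        by_entity[e.entity].append(e)

    findings: Dict[str, List[Event]] = {}
    for entity, evs in by_entity.items():
        evs.sort(key=lambda e: e.timestamp)
        start = 0
        for end in range(len(evs)):
            # Advance the left edge until the window spans at most `window`.
            while evs[end].timestamp - evs[start].timestamp > window:
                start += 1
            in_window = evs[start:end + 1]
            if len({e.source for e in in_window}) >= min_domains:
                findings[entity] = in_window
                break
    return findings


if __name__ == "__main__":
    t = datetime(2026, 1, 1, 2, 0)
    events = [
        Event("identity", "alice", t, "login from new country"),
        Event("edr", "alice", t + timedelta(minutes=10), "credential dumping tool"),
        Event("edr", "bob", t, "unsigned binary"),
        Event("cloud", "bob", t + timedelta(hours=3), "new access key"),
    ]
    for entity, evs in correlate(events, window=timedelta(minutes=30)).items():
        print(entity, [e.source for e in evs])  # alice ['identity', 'edr']
```

Each signal alone might be dismissed in its own console; it is the overlap across domains for the same entity that raises priority, which is the correlation a human otherwise performs by hand across dashboards.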

2. What types of AI are used inside a modern AI SOC?

Modern AI SOCs deploy six distinct AI types in a layered architecture, each handling a specific function in the detection-to-response pipeline:

- Supervised ML for known-pattern threat detection

- Unsupervised ML for anomaly and outlier identification

- NLP for log parsing, alert summarization, and unstructured data analysis

- Generative AI for investigation summaries and analyst-readable reporting

- UEBA (User and Entity Behavior Analytics) for behavioral baseline deviation

- Agentic AI for autonomous, multi-step investigation orchestration

No single AI type handles the full workflow. The power comes from layering them. At UnderDefense, we deploy these AI types at specific stages within our SOC operations, so each model operates where it adds the most value. When a vendor claims “AI-driven” without specifying which types are used where, that is a red flag worth investigating before signing a contract.
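To ground one of those layers, the Python sketch below approximates UEBA-style behavioral baseline deviation with a per-entity z-score. It is a stand-in for illustration only: production UEBA models are considerably richer, and the function name, the threshold of 3 standard deviations, and the login-count data are assumptions.

```python
from statistics import mean, pstdev
from typing import Dict, List


def baseline_deviation(history: Dict[str, List[float]],
                       today: Dict[str, float],
                       threshold: float = 3.0) -> Dict[str, float]:
    """Flag entities whose value today deviates from their own historical
    baseline by more than `threshold` standard deviations (z-score)."""
    flagged: Dict[str, float] = {}
    for entity, values in history.items():
        if entity not in today or len(values) < 2:
            continue  # no observation today, or too little history
        mu = mean(values)
        sigma = pstdev(values)
        if sigma == 0:
            continue  # flat baseline: z-score undefined, skipped in this sketch
        z = (today[entity] - mu) / sigma
        if abs(z) > threshold:
            flagged[entity] = z
    return flagged


if __name__ == "__main__":
    # Hypothetical daily login counts per user over the past week.
    history = {
        "alice": [10, 12, 11, 9, 13, 10, 11],
        "bob": [5, 5, 6, 4, 5, 6, 4],
    }
    today = {"alice": 11, "bob": 40}
    print(sorted(baseline_deviation(history, today)))  # ['bob']
```

The baseline is per entity, so 40 logins can be normal for a service account and alarming for a user who averages five; that per-entity context is what distinguishes UEBA from a global threshold rule.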

3. How does agentic AI in a SOC differ from SOAR automation?

SOAR (Security Orchestration, Automation, and Response) executes pre-scripted if/then playbooks for known scenarios. If alert type equals X, then run enrichment Y, then notify Z. It works well for repetitive, predictable workflows but breaks on novel threats, requires constant playbook maintenance, and cannot adapt to situations it has never encountered.

Agentic AI operates fundamentally differently. Instead of following a script, agentic AI reasons dynamically: it plans investigation steps, executes them, evaluates findings, and decides what to do next based on what it discovers in real time. If the first data source reveals an anomaly, the agent autonomously pivots to correlate with identity logs, cloud activity, and endpoint telemetry without a human defining that path in advance.

We explain this distinction in depth in our AI SOC evaluation guide. The practical impact: SOAR handles the 20% of alerts that match known patterns, while agentic AI can investigate the remaining 80% that would otherwise sit in an analyst queue or get ignored entirely.
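The difference in control flow can be shown with a toy Python sketch. The SOAR function fixes its path before it sees any data; the agentic loop chooses each next step from the findings so far. The `next_step` planner is a hypothetical hard-coded rule set standing in for a reasoning model, so the sketch illustrates the plan, execute, evaluate loop rather than the intelligence behind it; tool names and findings are invented.

```python
from typing import Callable, Dict, List, Optional, Set

# Hypothetical data-source lookup: takes an entity, returns a finding string.
Tool = Callable[[str], str]


def soar_playbook(alert_type: str, entity: str, tools: Dict[str, Tool]) -> List[str]:
    """Fixed if/then path decided before any data is seen."""
    if alert_type == "suspicious_login":
        return [tools["identity"](entity), "notify:soc_queue"]
    return ["notify:soc_queue"]  # anything unscripted falls through to a human


def next_step(findings: List[str], visited: Set[str]) -> Optional[str]:
    """Toy planner standing in for a reasoning model: picks the next data
    source based on the most recent finding."""
    if not findings:
        return "identity"
    last = findings[-1]
    if "anomal" in last and "endpoint" not in visited:
        return "endpoint"
    if "new_process" in last and "cloud" not in visited:
        return "cloud"
    return None  # nothing new to chase: stop and summarize


def agentic_investigation(entity: str, tools: Dict[str, Tool],
                          max_steps: int = 5) -> List[str]:
    """Plan -> execute -> evaluate loop; each step depends on prior results."""
    findings: List[str] = []
    visited: Set[str] = set()
    for _ in range(max_steps):
        source = next_step(findings, visited)
        if source is None:
            break
        visited.add(source)
        findings.append(f"{source}:{tools[source](entity)}")
    return findings


if __name__ == "__main__":
    tools = {
        "identity": lambda e: "anomalous_geo_login",
        "endpoint": lambda e: "new_process:powershell",
        "cloud": lambda e: "no_activity",
    }
    print(agentic_investigation("alice", tools))
    # ['identity:anomalous_geo_login', 'endpoint:new_process:powershell', 'cloud:no_activity']
```

If the identity lookup had come back clean, the same loop would stop after one step; the path is a product of the evidence, whereas the playbook runs identically every time.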

4. Will AI SOC replace human security analysts entirely?

AI SOC platforms automate Tier-1 and Tier-2 mechanical work, which consumes roughly 80% of a junior analyst’s day: context collection, log correlation, enrichment, and known-pattern matching. If an analyst’s entire role is manual alert triage, that function will be automated. That shift is already happening.

However, the strategic 20% of SOC work cannot be automated. Detection engineering (writing detection logic as code), hypothesis-driven threat hunting, incident command decisions, organizational context understanding, and executive communication require human judgment that no AI model can replicate from logs alone.

The SOC is not disappearing; it is reorganizing around fewer triage operators and more strategic roles, and the total headcount needed for a mature SOC drops by 60 to 70%. At UnderDefense, we operationalize this through our AI SOC + Human Ally model: UnderDefense MAXI handles automated investigation while dedicated Tier 3 to 4 analysts handle containment decisions, stakeholder communication, and business-context-dependent judgment calls. For a deeper perspective, see our article "Does AI Kill or Save Your SOC Team?"

5. How do we measure AI SOC success and ROI?

We track six critical metrics to evaluate AI SOC performance:

- MTTD (Mean Time to Detect): from 200+ days (traditional) to under 24 hours

- Alert-to-Triage Time: from 30 to 60 minutes to under 2 minutes

- MTTC (Mean Time to Contain): from 4 to 24 hours to under 15 minutes

- False Positive Reduction: 80 to 99% decrease after AI tuning

- Analyst Productivity: 3 to 5x improvement in alerts resolved per shift

- Cost Per Resolved Alert: 60 to 70% reduction

The ultimate metric remains breach prevention rate, because speed without accuracy creates faster wrong answers. We publish our operational benchmarks transparently: 2-minute alert-to-triage, 15-minute critical escalation, zero ransomware incidents across 500+ clients over 6 years, and 830% ROI. For detailed SOC metrics and SLA frameworks, we have published a comprehensive breakdown of how each metric maps to real operational outcomes.

6. How can we tell if an AI SOC vendor is AI-washing?

We developed a 7-criteria checklist to identify AI-washing during vendor evaluations:

- Vendor calls SOAR playbooks "AI-powered automation," but every action follows if/then scripting logic

- AI only works within one proprietary tool and cannot reason cross-stack

- No explainability or audit trail exists for AI decisions

- "AI" is a GenAI chatbot that summarizes alerts but does not investigate

- Vendor cannot specify which AI types (ML, NLP, agentic) are deployed where

- Marketing says "AI-driven" but the SOC still escalates unresolved tickets back to your team

- Platform forces you to replace existing tools, disguising vendor lock-in as "unified AI"

Count the flags that apply: 0 to 2 suggests a likely genuine AI SOC, 3 to 4 signals significant AI-washing risk, and 5 to 7 indicates a legacy tool with an AI label. We detail these AI SOC red flags with real-world examples. Demand a live investigation demo, not a dashboard walkthrough, and verify whether the platform works with your existing stack or forces proprietary replacement.
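Because the checklist is a count with three bands, a short Python sketch is enough to apply it consistently across vendors. The `ai_washing_risk` helper and the abbreviated flag labels are hypothetical; the code simply encodes the thresholds stated above.

```python
from typing import List, Tuple

# Abbreviated labels for the seven red flags listed above, in order.
RED_FLAGS = (
    "SOAR playbooks relabeled as AI",
    "AI confined to one proprietary tool",
    "No explainability or audit trail",
    "GenAI chatbot that summarizes but does not investigate",
    "Cannot specify which AI types are deployed where",
    "Unresolved tickets escalated back to your team",
    "Forced replacement of existing tools",
)


def ai_washing_risk(observed: List[bool]) -> Tuple[int, str]:
    """observed[i] is True if RED_FLAGS[i] applies to the vendor."""
    if len(observed) != len(RED_FLAGS):
        raise ValueError(f"expected {len(RED_FLAGS)} answers, got {len(observed)}")
    count = sum(observed)
    if count <= 2:
        return count, "Likely genuine"
    if count <= 4:
        return count, "Significant AI-washing risk"
    return count, "Legacy tool with an AI label"


if __name__ == "__main__":
    answers = [True, False, True, False, True, False, False]
    print(ai_washing_risk(answers))  # (3, 'Significant AI-washing risk')
```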

7. What does an AI SOC cost compared to building an in-house SOC?

Building an in-house AI SOC requires $1M to $3M+ annually when you factor in SIEM licensing, EDR/XDR tools, AI platform costs, and a minimum team of 8 to 12 analysts for 24/7 coverage. The cybersecurity talent shortage (4.8 million unfilled positions globally) makes hiring at this scale both expensive and slow.

AI SOC-as-a-Service changes the economics fundamentally. Providers like UnderDefense offer fully managed AI SOC capabilities starting at $11 to 15 per endpoint per month, including 24/7 monitoring, Tier 3 to 4 analyst access, compliance support, and the AI platform itself. For a 500-endpoint organization, that translates to roughly $66K to $90K annually versus $1M+ for in-house buildout.
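The 500-endpoint figure is straightforward per-endpoint arithmetic, sketched below in Python. It models only the quoted $11 to $15 subscription range; onboarding, internal staff time, and the hidden costs discussed next are not included, and the $1M in-house floor is the low end of the estimate in the previous paragraph. The function names are illustrative.

```python
from typing import Tuple

IN_HOUSE_ANNUAL_FLOOR = 1_000_000  # low end of the $1M to $3M+ in-house estimate


def annual_managed_cost(endpoints: int, rate_per_endpoint_month: float) -> float:
    """Endpoints x monthly per-endpoint rate x 12 months."""
    return endpoints * rate_per_endpoint_month * 12


def managed_cost_range(endpoints: int, low_rate: float = 11.0,
                       high_rate: float = 15.0) -> Tuple[float, float]:
    """Annual cost at the low and high end of the quoted per-endpoint range."""
    return (annual_managed_cost(endpoints, low_rate),
            annual_managed_cost(endpoints, high_rate))


if __name__ == "__main__":
    low, high = managed_cost_range(500)
    print(f"${low:,.0f} to ${high:,.0f} per year")  # $66,000 to $90,000 per year
    print(f"{high / IN_HOUSE_ANNUAL_FLOOR:.0%} of the in-house floor")  # 9% of the in-house floor
```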

We break down the full cost comparison in our SOC cost calculator, including hidden costs like tool sprawl, analyst turnover (average tenure under 18 months), playbook maintenance, and the opportunity cost of slow detection. The math consistently favors outsourced AI SOC for organizations with fewer than 1,000 endpoints.

8. Where does our organization fall on the AI SOC maturity model?

We use a 4-stage framework adapted from the Cloud Security Alliance’s AI maturity levels for cybersecurity:

- Stage 1 (Manual SOC): Human-driven triage, siloed tools, tribal knowledge. 70%+ of alerts ignored; analyst burnout is the norm.

- Stage 2 (Automated/SOAR-Era): Scripted playbooks for known scenarios. Breaks on novel threats; constant maintenance required.

- Stage 3 (AI-Augmented): ML-driven detection and GenAI summarization in individual tools. AI features are siloed ("AI in the SOC"); cross-domain correlation is still manual.

- Stage 4 (Fully Agentic AI SOC): Agentic AI orchestrates end-to-end triage, investigation, user verification, and containment. Humans focus on strategic decisions.

Most organizations sit at Stage 2 to 3 today. The jump to Stage 4 requires an architectural shift, not more copilots on a fragmented stack. We built UnderDefense MAXI for Stage 4: organizations at any prior stage can onboard in 30 days and leap directly to fully agentic capabilities without replacing their existing security stack.

The post What Is an AI SOC? A Complete Guide to How Artificial Intelligence Security Operations Work appeared first on UnderDefense.