Q1: Why Is 2026 the Year AI SOC Became the Category, Not Just a Feature Inside Your Traditional SOC?

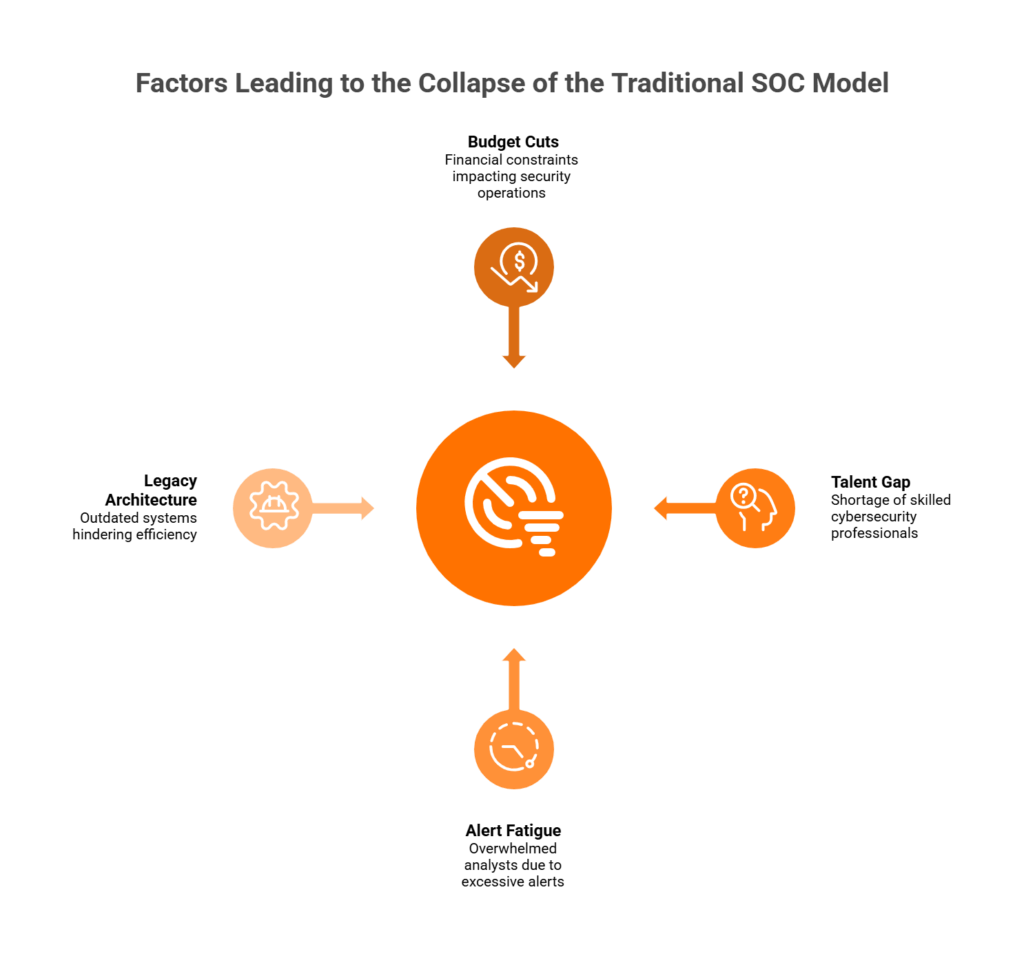

Here’s the operational reality nobody’s saying out loud: we’re not in a talent shortage anymore. We’re in a structural collapse of the old SOC model.

The ISC2 2024 Cybersecurity Workforce Study put the global gap at 4.8 million unfilled roles, a 19% year-on-year surge. But here’s the shift that matters more: for the first time, budget cuts overtook talent scarcity as the #1 barrier to adequate security staffing. Organizations aren’t just unable to find analysts. They can’t afford to hire them even when they’re available. Layer on Gartner’s prediction that 50% of SOCs will deploy AI-based decision support by 2026, and the picture becomes clear. AI SOC isn’t a feature bolted onto your existing SOC. It IS the SOC architecture. The analyst’s role has inverted: supervisor, not triage operator.

❌ The “Black Box Escalation” Trap Still Dominates

Traditional MDR providers, including Arctic Wolf, CrowdStrike, and ReliaQuest, along with legacy MSSPs, still treat AI as a bolt-on to human-first workflows. They offer “AI-assisted alerting” but leave triage, investigation, and response to understaffed human teams. The result? Alert fatigue persists, MTTR stalls, and the same staffing math that broke the model in 2022 still breaks it in 2026. The dominant pattern remains: detect → alert → escalate back to your team. That’s not managed detection and response. That’s managed detection and delegation.

✅ The Inversion That Actually Works

The organizations pulling ahead have flipped this entirely. AI handles triage, enrichment, correlation, and initial response autonomously. Humans supervise, verify, and handle exception cases. This is what we call the “AI SOC + Human Ally” architecture. Detection without response is noise. Response without context is risk. The analyst becomes the judgment layer, not the alert-processing engine.

How We Built This at UnderDefense

We designed the UnderDefense MAXI platform around this inversion: vendor-agnostic integration across 250+ security tools, AI-driven triage with concierge analyst response, 2-minute Alert-to-Triage and 15-minute escalation for critical incidents, and ChatOps-based user verification that closes the context gap competitors leave open. Our 96% MITRE ATT&CK coverage isn’t a marketing number. It’s an auditable, reproducible benchmark clients validate during onboarding.

⭐ The Proof Is in the Workflow

In documented case studies, UnderDefense detected threats 2 days faster than CrowdStrike OverWatch. Across 500+ MDR clients over 6 years, we’ve maintained a 100% ransomware prevention record. We attribute that record to the human layer: AI-driven detection without human context still leaves gaps that only analysts communicating directly with affected users can close. That’s not a tagline. That’s an observable outcome you can audit.

Q2: What Do the 2026 AI SOC Benchmarks Actually Show, and How Should You Measure Your Own SOC?

If you can’t measure it, you can’t improve it. Most SOC teams track vanity metrics (tickets closed, alerts processed) that tell you nothing about actual security outcomes. Here’s a vendor-neutral SOC Efficiency Scorecard with six KPIs that separate operational noise from real progress.

📊 The 6-KPI SOC Efficiency Scorecard

| KPI | Industry Baseline (Manual SOC) | AI SOC Leader Benchmark | Source |
|---|---|---|---|
| Mean Time to Detect (MTTD) | 194 days | < 24 hours | IBM 2024 Cost of a Data Breach Report |
| Mean Time to Respond (MTTR) | 64 days containment | < 30 minutes (critical) | IBM 2024; UnderDefense published benchmarks |
| False Positive Rate | 80–95% of alerts are noise | < 1–5% after AI triage | CSA / Radiant Security CISO Guide |
| Analyst-to-Alert Ratio | 1:500 (manual) | 1:5,000+ (AI-augmented) | LinkedIn AI-Enabled SOC Framework |
| Cost per Investigation | $25–50 (manual triage) | $2–5 (AI-enriched) | Expel SOC cost analysis |
| MITRE ATT&CK Coverage | 40–60% (typical) | 90%+ (mature AI SOC) | MITRE / vendor benchmarks |

⏰ What the Numbers Actually Mean

At Level 4 SOC maturity, MTTR drops 50–90% compared to manual operations. False-positive reduction of 95–99% becomes achievable when you combine behavioral analytics with ChatOps verification, the verification step most providers skip entirely. The analyst-to-alert ratio shifts from 1:500 to 1:5,000+, meaning your existing team covers 10x the surface without burning out. Cost-per-investigation drops from $25–50 per manual triage event to $2–5 when AI handles enrichment, correlation, and initial classification before a human ever touches it.

Building a 24/7 SOC in-house? Expel’s analysis puts minimum analyst costs at $1.6–2.1 million annually just for staffing, before you add SIEM licensing, EDR tools, or management overhead. A “good” in-house SOC runs $2–2.5 million per year. True excellence demands $3 million+, and even then you face analyst turnover and burnout. Use the SOC cost calculator to benchmark your own numbers.

🔍 How to Self-Measure in 3 Steps

- Baseline your MTTR/MTTD across all alert sources for 30 consecutive days. Don’t cherry-pick. Include every source, including the noisy ones.

- Sample 100 consecutive alerts and classify each as true positive or false positive. This gives you your actual signal-to-noise ratio, which is probably worse than you think.

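- Calculate cost-per-investigation: (monthly analyst hours spent on investigations × fully loaded hourly cost) ÷ total investigations per month. Compare the result against the $25–50 manual and $2–5 AI-enriched benchmarks in the scorecard, and compare your annualized staffing spend against Expel’s $1.6–2.1M baseline for 24/7 coverage.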
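
The second and third steps reduce to simple arithmetic, which can be sketched in a few lines of Python. This is a minimal illustration, not a measurement tool: the `AlertSample` type, the function names, and every input value (12 true positives in 100 alerts, 320 hours, $75 per hour, 800 investigations) are hypothetical placeholders to replace with your own data.

```python
from dataclasses import dataclass


@dataclass
class AlertSample:
    """One manually reviewed alert from the consecutive sample (hypothetical type)."""
    alert_id: str
    true_positive: bool


def false_positive_rate(sample: list[AlertSample]) -> float:
    """Share of sampled alerts that turned out to be noise."""
    if not sample:
        raise ValueError("sample must contain at least one alert")
    false_positives = sum(1 for alert in sample if not alert.true_positive)
    return false_positives / len(sample)


def cost_per_investigation(analyst_hours: float, hourly_cost: float, investigations: int) -> float:
    """(analyst hours x fully loaded hourly cost) / investigations, all for the same month."""
    if investigations <= 0:
        raise ValueError("investigations must be positive")
    return analyst_hours * hourly_cost / investigations


# Hypothetical sample: 100 consecutive alerts, 12 of them real threats.
sample = [AlertSample(f"alert-{i}", true_positive=(i < 12)) for i in range(100)]
fp_rate = false_positive_rate(sample)

# Hypothetical month: 320 investigation hours at $75/hour across 800 investigations.
cpi = cost_per_investigation(320, 75.0, 800)

print(f"False positive rate: {fp_rate:.0%}")
print(f"Cost per investigation: ${cpi:.2f}")
```

Keeping the sample consecutive rather than hand-picked matters more than the code: the rate is only meaningful if noisy sources are included.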

✅ How UnderDefense Publishes Transparent Benchmarks

We publish and stand behind our numbers: 2-minute Alert-to-Triage, 15-minute escalation for critical incidents, 96% MITRE ATT&CK coverage, and 99% alert noise reduction. Our 30-day onboarding is designed so organizations validate these claims against their own baseline before committing, because “trust but verify” should apply to your MDR provider, too.

Q3: What Are the Agentic AI Maturity Levels, and What Should Your 12-Month Roadmap Look Like?

Most organizations don’t know where they actually stand on AI SOC maturity. They think they’re at Level 3 because they bought an XDR license. In practice, they’re running Level 1 workflows with Level 3 tooling, and the gap is where breaches happen.

📊 The 6-Level AI SOC Maturity Model

| Level | Name | Characteristics | AI Use Cases | Typical Metrics | Business Outcome |
|---|---|---|---|---|---|
| L0 | Manual / Reactive | No centralized logging; ad-hoc response | None | MTTR: days to weeks | Breaches discovered externally |
| L1 | Rule-Based Automation | Basic SIEM rules; static playbooks | Signature matching only | MTTR: hours to days; high false positives | Checkbox compliance, minimal real detection |
| L2 | ML-Assisted | Centralized SIEM; AI-assisted alert suppression | Alert de-duplication, false-positive reduction | Alert volume ↓ 50–60%; triage time ↓ 30–40% | Analysts spend less time closing noise |
| L3 | Intelligent / Behavioral | UEBA, behavioral analytics, cross-source correlation | Anomaly detection, risk scoring, automated enrichment | MTTD: hours; MTTR still partially manual | Real threats surface faster; context improves |
| L4 | Predictive / Automated | High-confidence detections trigger automated containment; human-in-the-loop | Automated isolation, AI-driven incident summaries | MTTR ↓ 50–90%; containment in minutes | Threats contained before widespread impact |
| L5 | Adaptive / Business-Aligned | SOC measures business outcomes; continuous model tuning | Predictive risk scoring, board-level reporting, autonomous response | Near-zero dwell time; measurable risk reduction | Security directly tied to business resilience |

Synthesized from CSA AI Maturity Model, Radiant Security CISO Guide, LinkedIn AI-Enabled SOC Framework, and SRA AI Automation Levels.

✅ Self-Assessment Checklist

Score yourself honestly. Check every box that applies to your current state, not your roadmap:

- ☐ 24/7/365 monitoring without staffing gaps

- ☐ Critical threat containment within 30 minutes

- ☐ Alerts correlated across endpoint, identity, cloud, and network in one view

- ☐ AI triages autonomously without analyst initiation

- ☐ SOC measured by business outcomes (breach impact), not activity metrics (alerts handled)

- ☐ Suspicious user activity verified directly via ChatOps

- ☐ Compliance evidence generated automatically

- ☐ Threat hunting conducted proactively (not just reactive response)

🗺️ Your 12-Month Roadmap by Tier

Tier 1 (0–3 checks → L0–L2):

- Month 1–3: Baseline MTTR/MTTD metrics; audit tool gaps and integration coverage

- Month 4–6: Pilot AI triage on your highest-volume alert source

- Month 7–12: Expand coverage to additional sources; establish AI governance policies

Tier 2 (4–5 checks → L3):

- Month 1–3: Deploy agentic triage across all alert sources

- Month 4–6: Implement ChatOps verification and automated containment workflows

- Month 7–12: Shift analyst time from triage to proactive threat hunting

Tier 3 (6–8 checks → L4–L5):

- Continuous model tuning and business-risk alignment

- Predictive scoring integration with board-level reporting

- Measure security ROI against business KPIs, not alert volume
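
The checklist-to-tier mapping above can be written down as a small scoring function. The sketch below assumes the thresholds exactly as stated in the tier headings (0–3, 4–5, 6–8 checks); the `roadmap_tier` name and the sample answers are illustrative only.

```python
# The eight self-assessment items, paraphrased from the checklist.
CHECKLIST = (
    "24/7/365 monitoring without staffing gaps",
    "Critical threat containment within 30 minutes",
    "Alerts correlated across endpoint, identity, cloud, and network in one view",
    "AI triages autonomously without analyst initiation",
    "SOC measured by business outcomes, not activity metrics",
    "Suspicious user activity verified directly via ChatOps",
    "Compliance evidence generated automatically",
    "Threat hunting conducted proactively",
)


def roadmap_tier(checked: int) -> tuple[str, str]:
    """Map the number of checked boxes to (roadmap tier, maturity band)."""
    if not 0 <= checked <= len(CHECKLIST):
        raise ValueError(f"checked must be between 0 and {len(CHECKLIST)}")
    if checked <= 3:
        return "Tier 1", "L0-L2"
    if checked <= 5:
        return "Tier 2", "L3"
    return "Tier 3", "L4-L5"


# Hypothetical self-assessment: True means the box applies to your current state.
answers = [True, False, True, False, False, True, True, False]
tier, band = roadmap_tier(sum(answers))
print(f"{sum(answers)} of {len(CHECKLIST)} checks -> {tier} ({band})")
```

Score the current state only; counting roadmap items as checked will place you in a tier whose plan assumes capabilities you do not have yet.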

⚡ How UnderDefense Accelerates the Roadmap

Our 30-day turnkey onboarding is designed as the first 30 days of any roadmap tier: baseline current state, deploy AI triage on your existing stack, and validate results before committing. Most clients move from 2–3 checks to 7 out of 8 within the first month because we integrate with what you already have instead of ripping and replacing.

“The speed of onboarding was a delightful surprise. In times where integrating new systems can take weeks, UnderDefense had us up and running in no time. Their 24/7 detection and response service is fast and comprehensive, providing us with a granular, real-time view of our environment.”

— Valeriia D., Marketing Specialist UnderDefense G2 – Verified Review

“Underdefense is a great choice for teams like ours that are short on resources. It automates many tasks, plus, with 24/7 monitoring, we know we’re always protected. The platform seamlessly integrates our existing security tools, simplifying management. I used to work with many MDR solutions in the past, and so far Underdefense is the best one!”

— Inga M., CEO UnderDefense G2 – Verified Review

“Not having to worry about ransomware, alert overload and reporting. Getting a clear view of my security posture, where the threats are coming from and how they are handled. They literally took care of all our problems.”

— Arlin O., Enterprise UnderDefense G2 – Verified Review

UnderDefense reports 96% MITRE ATT&CK coverage and 99% alert noise reduction within the first month, because comprehensive protection shouldn’t require a 20-person SOC.

Q4: Who Are the Early Adopters vs. Organizations Still Debating, and What Does the Adoption Curve Reveal?

The data is unambiguous: AI SOC adoption follows a steep size-class gradient, and the “still debating” cohort is paying a measurable cost every month they delay.

📊 AI Adoption by Organization Size (2025 Benchmarks)

| Organization Size | AI Adoption Rate | SOC-Specific Stage | Estimated % of Market |
|---|---|---|---|
| Large enterprises (250+ employees) | 55% | Early Adopters → AI-first triage with human oversight | 15–20% |
| Mid-market (50–249 employees) | 30% | Early Majority → Beginning AI SOC pilots | 25–30% |
| Small enterprises (10–49 employees) | 17% | Late Majority + Laggards → Still debating or manual/legacy MSSP | 40–50% |

Sources: Eurostat 2025 enterprise AI data; OECD AI adoption report; Alice Labs Global AI Adoption Index 2026

Mapped onto the classic technology adoption curve for SOC-specific AI: roughly 5% are running autonomous SOCs (Innovators), 15–20% have deployed AI-first triage with human oversight (Early Adopters), 25–30% are running pilots (Early Majority), and 40–50% are still debating or locked into manual/legacy MSSP contracts (Late Majority + Laggards).

🔍 Archetype Profiles

Early Adopter traits:

- Executive sponsor (CISO or CTO) with authority to act

- Existing SIEM/XDR investment they want to amplify, not replace

- 3–10 person security team seeking force multiplication

- Budget allocated for security modernization

- Willingness to trust AI with triage autonomy, verified by human oversight

“Still Debating” traits:

- Diffused decision-making (security is IT’s side job)

- No dedicated CISO, with security decisions filtered through competing priorities

- Budget constrained by non-security needs

- Risk aversion masquerading as prudence (“we’ve always done it this way”)

- Waiting for “more proof” while breach risk compounds monthly

💸 The Cost of Not Deciding

This isn’t abstract. IBM’s 2024 report puts the average breach cost at $4.88 million, a 10% year-on-year jump and the largest increase since the pandemic. Every month of delayed AI SOC adoption translates to measurable analyst hours lost to manual triage.

| Delay Duration | Estimated Manual Triage Cost (Analyst Time) | Cumulative Breach Risk Exposure |
|---|---|---|
| 3 months | $400K–525K in analyst labor | Dwell time increases ~3x when analysts are overwhelmed |
| 6 months | $800K–1.05M | Average breach lifecycle: 258 days (IBM 2024) |
| 12 months | $1.6M–2.1M (full 24/7 SOC staffing cost) | Equivalent to Expel’s minimum annual SOC analyst cost |

The “decision tax” is real: every month you spend evaluating is a month your team spends doing manual triage that AI could handle in seconds. The cost of NOT deciding is measurable and growing.
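
The labor figures in the delay table are the annual $1.6–2.1M staffing range prorated by month. A minimal sketch of that proration follows; it assumes a flat monthly cost, which is a simplification, and the function name is illustrative.

```python
# Annual 24/7 analyst staffing range cited from Expel's analysis (low, high), in USD.
ANNUAL_STAFFING_COST = (1_600_000, 2_100_000)


def manual_triage_cost(months_delayed: int) -> tuple[float, float]:
    """Prorate the annual staffing range over the delay, assuming a flat monthly cost."""
    if months_delayed < 0:
        raise ValueError("months_delayed must be non-negative")
    low, high = ANNUAL_STAFFING_COST
    return low * months_delayed / 12, high * months_delayed / 12


for months in (3, 6, 12):
    low, high = manual_triage_cost(months)
    print(f"{months:>2} months: ${low:,.0f} to ${high:,.0f}")
```

Substituting your own annual analyst cost for the cited range gives a delay figure specific to your team rather than an industry estimate.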

✅ How UnderDefense Eliminates the “Still Debating” Friction

Our 30-day turnkey deployment and $11–15/endpoint/month transparent pricing are specifically designed to collapse the evaluation-to-value timeline. Low-risk entry point. No proprietary tool replacement. Validate AI SOC value against your own baseline before full commitment, because the fastest way to stop debating is to see results on your own data.

Q5: How Much Does a 24/7 AI SOC Actually Cost, and Why Is Budget Approval (Not Talent) Now the Real Barrier?

Here’s the uncomfortable truth: budget cuts have overtaken talent shortage as the primary barrier to adequate security operations. ISC2’s 2025 Cybersecurity Workforce Study, surveying over 16,000 professionals, found that 37% of organizations faced budget cuts in 2024, while 33% said their organizations simply don’t have the resources to staff teams. The conversation has shifted from “we can’t find people” to “we can’t get budget approval for people or tools.”

⚠️ The Cost of Doing Nothing

Inaction carries its own price tag. IBM’s 2024 Cost of a Data Breach Report puts the global average at $4.88 million, a 10% spike year-over-year. Organizations with understaffed security teams and no 24/7 coverage see dwell times multiply by 3x or more. The risk of not investing is quantifiable, and it dwarfs the cost of investing.

Building a 24/7 in-house SOC requires 10–12 analysts at roughly $98K+ each (before benefits and overhead), a SOC manager at $120–150K, SIEM/SOAR/XDR licensing at $200–500K annually, plus facility costs, training, and perpetual churn. Realistic total: $2.5M–$4M+/year. That doesn’t account for the 6–12 month ramp-up, or the 3–6 month budget approval cycle itself.

💰 What AI SOC Coverage Actually Costs

At UnderDefense, we publish our pricing: $11–15/endpoint/month. For a 1,000-endpoint organization, that’s ~$132K–$180K/year, with 24/7 coverage from Day 1, 30-day deployment, and no requirement to rip out your existing SIEM or EDR. Compliance automation, ChatOps verification, and dedicated concierge analysts are included, not upsold.
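
The per-endpoint math is easy to reproduce for other fleet sizes. The sketch below uses the published $11–15 range; applying the same rate at every endpoint count is an assumption made for illustration, since volume tiers are not described here.

```python
# Published per-endpoint monthly price range (low, high), in USD.
PRICE_RANGE = (11, 15)


def annual_cost(endpoints: int, price_per_endpoint_month: int) -> int:
    """Annual cost = endpoints x monthly per-endpoint price x 12 months."""
    if endpoints < 0:
        raise ValueError("endpoints must be non-negative")
    return endpoints * price_per_endpoint_month * 12


# Hypothetical fleet sizes; only the 1,000-endpoint case appears in the text.
for endpoints in (250, 1_000, 5_000):
    low = annual_cost(endpoints, PRICE_RANGE[0])
    high = annual_cost(endpoints, PRICE_RANGE[1])
    print(f"{endpoints:>5} endpoints: ${low:,} to ${high:,} per year")
```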

Published pricing matters more than people realize. The “contact sales” friction dominating this market stalls executive approvals. When your CFO can model costs in a spreadsheet before the first vendor call, you’ve shortened procurement by weeks.

| Criteria | In-House 24/7 SOC | AI SOC (UnderDefense) |
|---|---|---|
| 💸 Annual Cost (1,000 endpoints) | $2.5M–$4M+ | $132K–$180K |
| ⏰ Time to Full Coverage | 6–12 months | 30 days |
| Staffing Requirement | 10–12 FTEs + management | 0 additional hires |
| Tool Integration | Manual, team-dependent | 250+ vendor-agnostic |
| Response Capability | Limited by shift coverage | 24/7 AI triage + human concierge |
| Compliance Support | Separate budget line | Included (forever-free kits) |
| Scalability | Linear cost increase | Per-endpoint, elastic |

✅ The CFO-Ready ROI Formula

A four-line business case for internal presentation: (1) Current annual security operations spend, (2) Projected AI SOC spend, (3) Risk reduction value = expected reduction in annual breach probability × $4.88M average breach cost, (4) Net savings + risk offset = (1) − (2) + (3). For most mid-market organizations, this produces a positive ROI before factoring in reduced burnout, faster response times, or compliance cost avoidance.
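
The four-line formula can be dropped into a spreadsheet or a short script. The sketch below is one way to express it; it treats the probability input in line (3) as the expected reduction in annual breach probability, and the spend and probability values in the example are hypothetical.

```python
from dataclasses import dataclass

# IBM 2024 global average breach cost cited above, in USD.
AVG_BREACH_COST = 4_880_000


@dataclass
class BusinessCase:
    current_spend: float         # line (1)
    ai_soc_spend: float          # line (2)
    risk_reduction_value: float  # line (3)
    net_benefit: float           # line (4)


def build_business_case(current_spend: float, ai_soc_spend: float,
                        breach_probability_reduction: float) -> BusinessCase:
    """Line (4) = (1) - (2) + (3), where (3) = probability reduction x average breach cost."""
    if not 0.0 <= breach_probability_reduction <= 1.0:
        raise ValueError("breach_probability_reduction must be between 0 and 1")
    risk_value = breach_probability_reduction * AVG_BREACH_COST
    net = current_spend - ai_soc_spend + risk_value
    return BusinessCase(current_spend, ai_soc_spend, risk_value, net)


# Hypothetical inputs: $600K current spend, $180K AI SOC spend,
# and a 5-percentage-point drop in annual breach probability.
case = build_business_case(600_000, 180_000, 0.05)
print(f"Risk reduction value: ${case.risk_reduction_value:,.0f}")
print(f"Net savings + risk offset: ${case.net_benefit:,.0f}")
```

The probability estimate is the weakest input, so it is worth presenting the result at two or three values rather than a single point.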

“It’s reassuring to know they’re always watching for threats, and it doesn’t cost a fortune. They catch and stop problems quickly, which is a huge relief.”

— Serhii B., Chief Information Security Officer UnderDefense – G2 Verified Review

“UnderDefense is surprisingly affordable considering the level of protection we get. Their proactive threat hunting and rapid response have saved us from incidents that could have been incredibly costly.”

— Verified User, Program Development UnderDefense – G2 Verified Review

“We received little value from Arctic Wolf. The product offered little visibility when we were using it… the sales and account management team is very pushy.”

— Matt C., Manager, Cybersecurity Services Arctic Wolf – G2 Verified Review

Build in-house if you have $3M+ annual security budget, 12+ month runway, and want complete internal control. Choose AI SOC if you need 24/7 coverage now, have a small team, and want to protect existing tool investments. Either way, the cost of another quarter debating exceeds the cost of a 30-day pilot.

Q6: Can a 3–5 Person Security Team Achieve True 24/7 Coverage, and What Does the Operational Model Look Like?

It’s 3:12 AM on a Tuesday. Your SIEM fires a critical alert: potential lateral movement from a compromised service account. Your four-person team is off-shift. The on-call analyst (who’s also the IT manager) wakes up, spends 40 minutes investigating, and discovers it’s a legitimate scheduled task. It’s the third false-alarm wake-up this week. By Friday, they’ll start ignoring alerts entirely.

😓 Why the Gap Exists

The staffing math is unforgiving. True 24/7 coverage requires 8–12 FTEs to cover shifts, weekends, holidays, and PTO. A 3–5 person team physically cannot provide continuous monitoring; the gap isn’t capability but arithmetic. According to industry data, 71% of SOC analysts report burnout, with some SOCs seeing turnover cycles of less than 18 months. SOC turnover hit 28% annually in 2024, while the average team faces 960 alerts per day, 40% of which never get investigated.
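
The arithmetic behind the FTE requirement can be made explicit. The sketch below is an illustration under stated assumptions: two analyst seats staffed around the clock and roughly 1,800 productive hours per analyst per year (a 2,080-hour year less PTO, holidays, and training). Neither figure comes from the sources cited here, so adjust both to your own shift design.

```python
import math

HOURS_PER_YEAR = 24 * 365  # 8,760 hours that each always-on seat must be staffed


def ftes_for_24x7(seats: int, productive_hours_per_fte: float) -> int:
    """Minimum headcount needed to keep `seats` analyst positions staffed all year."""
    if seats <= 0 or productive_hours_per_fte <= 0:
        raise ValueError("seats and productive_hours_per_fte must be positive")
    return math.ceil(seats * HOURS_PER_YEAR / productive_hours_per_fte)


# Assumed values: 2 seats per shift, 1,800 productive hours per analyst per year.
required = ftes_for_24x7(seats=2, productive_hours_per_fte=1_800)
print(f"FTEs required for two always-on seats: {required}")

# Even with no PTO, holidays, or training (2,080 hours), a single seat needs 5 people.
print(f"FTEs required for one seat, no time off: {ftes_for_24x7(1, 2_080)}")
```

Under these assumptions the result lands inside the 8–12 FTE range, which is why a 3–5 person team cannot close the gap by working harder.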

Night and weekend coverage gaps, alert fatigue, and the on-call burnout spiral drive what I call the “talent death spiral”: experienced analysts leave → remaining team absorbs workload → pressure accelerates burnout → morale collapses → institutional knowledge vanishes → replacements take months to ramp.

⏰ The Hidden Costs (in Time, Not Dollars)

This isn’t just a staffing headache. It’s an operational breakdown. Small teams lose 10–15 hours per week to manual triage. Critical alerts get ignored due to volume. Average dwell time increases 3x when analysts are overwhelmed. And every departure means 7 months to 2 years to fill the role, during which the remaining team is running even more exposed.

✅ How It Should Actually Work

The operational model we built at UnderDefense flips the daily workflow. AI handles triage, enrichment, and correlation 24/7, autonomously. When context is needed (“Did the DevOps engineer authorize that service account change at 2 AM?”), our analysts verify directly via Slack or Teams with the affected user. No escalation email. No ticket queue. Direct human verification through ChatOps.

Your 3–5 person team reviews morning incident summaries (confirmed threats with actions already taken), not 200 raw alerts. The analyst becomes the judgment layer, not the triage operator. You wake up to the answer, not the question.

We reduce customer-facing alerts by 99% through custom detection tuning and direct user verification. Your team should review confirmed incidents, not triage thousands of maybes.

“The biggest win for me was getting actual control over our security alerts. Before the guys from UD stepped in, we were getting bombarded with alerts… Their team cleaned up our configurations and got the noise under control within the first week.”

— Verified User, Marketing and Advertising UnderDefense – G2 Verified Review

“Underdefense is a great choice for teams like ours that are short on resources. It automates many tasks, plus, with 24/7 monitoring, we know we’re always protected.”

— Inga M., CEO UnderDefense – G2 Verified Review

“Honestly, some security tools are more complicated than the threats themselves. Underdefense isn’t just about catching bad stuff, they give proactive tips too. Feels like my IT department suddenly got way smarter.”

— Andriy H., Co-Founder and CTO UnderDefense – G2 Verified Review

Q7: What Separates Monitoring-Only Tools from a True AI SOC, and How Do You Handle Governance and Explainability?

You’re evaluating security operations models: legacy MSSP, traditional MDR, or AI SOC. Each promises 24/7 coverage, but their architectures produce fundamentally different outcomes. And now there’s a new dimension that most vendors aren’t ready for: can the AI explain its decisions to your audit team? Pick wrong, and you’re paying for alert noise without accountability.

❌ The Wrong Way to Decide

Choosing based on brand recognition, cheapest per-endpoint price, or “most integrations listed” leads to regret. The critical questions: Can they reason across your tools? Can they verify context with users? Can they contain threats without escalating back to your team? And critically, can the AI produce an audit trail your compliance officer accepts?

ISO/IEC 42001, introduced in December 2023, now establishes the global standard for AI management systems, requiring risk management, explainability, transparency, and continuous monitoring of AI decisions. Meanwhile, Akto’s 2025 report found only 21% of enterprises have full visibility into their AI agent activities. If your MDR provider uses AI but can’t show you the decision chain, you have a governance gap.

✅ The Right Evaluation Framework

Evaluate across these 8 criteria, and score each provider honestly:

| Criteria | Legacy MSSP | Traditional MDR (Arctic Wolf/CrowdStrike) | AI SOC + Human Ally (UnderDefense) |
|---|---|---|---|
| Detection-to-Response | Detect & alert only | Detect + escalate | ✅ Detect + contain + remediate |
| AI Autonomy Level | Rule-based | Copilot-assisted | ✅ Agentic triage, human-approved containment |
| Context Acquisition | Ticket-based escalation | Email escalation | ✅ Direct ChatOps user verification |
| Integration Model | Proprietary lock-in | Vendor-preferring | ✅ 250+ vendor-agnostic |
| Pricing Transparency | “Contact sales” | “Contact sales” | ✅ Published ($11–15/endpoint) |
| AI Explainability & Audit Trail | None | Limited | ✅ Documented decision chains, human-in-the-loop |
| Compliance Integration | Separate product/cost | Partial | ✅ Included, forever-free kits |
| Time to Value | 3–6 months | 2–4 months | ✅ 30-day deployment |

🔍 Why Governance Is an Evaluation Criterion, Not a Separate Project

Mature AI SOC uses tiered autonomy: triage is autonomous, containment requires human approval, remediation is human-executed. Every decision is logged with its reasoning chain: what data the AI analyzed, what correlations it found, and why it recommended a specific action. This isn’t optional transparency; it’s the architectural requirement that separates auditable AI from black-box automation.
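
To make "logged with the reasoning chain" concrete, here is a minimal sketch of what one audit record could contain. The `DecisionRecord` type and all field names and values are hypothetical; this is not the schema of UnderDefense MAXI or any other product, only an illustration of the elements named above.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DecisionRecord:
    """One logged AI decision: what was analyzed, what was found, and why it acted."""
    alert_id: str
    data_analyzed: list[str]
    correlations: list[str]
    recommended_action: str
    rationale: str
    approved_by: Optional[str] = None  # filled in when a human approves the action
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize with sorted keys so records are stable and easy to diff."""
        return json.dumps(asdict(self), sort_keys=True)


# Hypothetical example record for a service-account alert.
record = DecisionRecord(
    alert_id="alert-0001",
    data_analyzed=["EDR process tree", "identity provider sign-in log"],
    correlations=["service account used from a new host"],
    recommended_action="isolate host",
    rationale="Lateral movement pattern with no matching change ticket",
)
print(record.to_json())
```

An append-only store for these records is what lets an auditor replay a decision after the fact; the record format alone does not provide that guarantee.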

A legacy MSSP sends you alerts with no context. A traditional MDR provider investigates but hands you a ticket to resolve. An AI SOC with a human ally detects the threat, verifies context with the affected user, contains the issue, documents every step, and delivers a complete incident record your compliance team can present to auditors.

The real question isn’t which vendor has the most features. It’s which model can respond to threats and explain those responses to your board, at a cost your organization can sustain. That’s the category shift AI SOC represents.

Q8: What Objections Are Stalling AI SOC Adoption, and How Should You Address Them Internally?

Every CISO or IT Director proposing an AI SOC investment faces the same three objections internally. I’ve heard them in dozens of conversations. Here’s how to address each one with specifics, not slides.

Objection 1: “We Already Have CrowdStrike/SentinelOne. Why Add Another Vendor?”

This is a fair concern. You’ve made a significant EDR investment, and adding complexity feels counterproductive. But here’s what EDR doesn’t do: investigate, verify user intent, or respond with organizational context. CrowdStrike detects threats on the endpoint, but someone still has to determine whether that flagged behavior is a real attack or a DevOps engineer running a legitimate script at midnight.

We layer on top of your existing investment. We don’t replace it. In one engagement, we deployed CrowdStrike to 1,200 endpoints in 23 business days, then provided the 24/7 analyst layer that turns detections into outcomes. The gap isn’t in your tooling; it’s in the operational layer between detection and resolution.

Objection 2: “AI Can’t Be Trusted with Autonomous Security Decisions”

⚠️ This is the most important objection, and the one least addressed by vendors. Only 21% of enterprises have full visibility into their AI agent activities. The concern is valid.

The resolution isn’t “trust the AI.” It’s tiered autonomy: triage and enrichment are autonomous (high volume, low risk), containment actions require human approval (medium risk), and remediation is human-executed (high impact). Every decision produces an auditable chain: what the AI saw, what it correlated, why it escalated, and what the human analyst did. ISO 42001 requires exactly this kind of transparency and continuous monitoring.
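
The tiered-autonomy rule can be expressed as a small policy gate. The sketch below is illustrative only: the action names, the `POLICY` table, and the choice to treat unknown actions as the most restrictive tier are assumptions, not a description of any vendor's implementation.

```python
from enum import Enum


class Tier(Enum):
    AUTONOMOUS = "autonomous"          # AI may act without a human
    HUMAN_APPROVAL = "human_approval"  # AI may act only after a human approves
    HUMAN_EXECUTED = "human_executed"  # AI never acts; a human performs the step


# Policy table mirroring the three tiers described above.
POLICY = {
    "triage": Tier.AUTONOMOUS,
    "enrichment": Tier.AUTONOMOUS,
    "containment": Tier.HUMAN_APPROVAL,
    "remediation": Tier.HUMAN_EXECUTED,
}


def may_ai_execute(action: str, human_approved: bool = False) -> bool:
    """Return True only if the policy allows the AI to carry out this action now."""
    # Assumption: anything not listed falls back to the most restrictive tier.
    tier = POLICY.get(action, Tier.HUMAN_EXECUTED)
    if tier is Tier.AUTONOMOUS:
        return True
    if tier is Tier.HUMAN_APPROVAL:
        return human_approved
    return False


for action, approved in [("triage", False), ("containment", False),
                         ("containment", True), ("remediation", True)]:
    print(f"{action:<12} approved={approved!s:<5} -> {may_ai_execute(action, approved)}")
```

Defaulting unknown actions to human execution keeps a newly added capability from running autonomously before someone has classified its risk.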

At UnderDefense, our analysts sit in the loop for every critical action. You can trace any incident from alert to resolution, see every decision point, and present the documentation to auditors. No black boxes.

Objection 3: “We Can’t Justify the Budget Right Now”

💸 This one deserves a reframe. ISC2 data confirms that 36% of organizations reported budget cuts in 2025, and 29% said they can’t afford to hire staff with necessary skills. AI SOC isn’t another line item. It’s the budget-cut response.

At $11–15/endpoint/month, a 1,000-endpoint deployment costs $132–180K/year vs. $2.5M+ for in-house 24/7 SOC. Present this internally as cost avoidance plus risk reduction, not new spend. Factor in IBM’s $4.88M average breach cost, multiply by your estimated breach probability, and the ROI case writes itself.

✅ Verify Before You Commit

We expect scrutiny; that’s how good decisions get made. Request the compliance documentation package, use the SOC Cost Calculator to build the internal business case, or start a 30-day pilot and measure results against your current operations. Show, don’t tell: that’s how we operate.

“Arctic Wolf provides solid detection and response capabilities, but overly relies on the client’s team for remediation, which really hurts the value of the service.”

— VP of Technology, Services Arctic Wolf – Gartner Verified Review

“Their experienced SOC engineers work closely with our team, providing continuous monitoring and threat detection… They delivered the CrowdStrike deployment to 1,200 endpoints in just 23 business days.”

— Oleksii M., Mid-Market UnderDefense – G2 Verified Review

“Analysts provide little context, and when asked for more information in the investigation nothing is ever provided or even communicated.”

— CISO, Manufacturing Arctic Wolf – Gartner Verified Review

UnderDefense has maintained a 100% ransomware prevention record across 500+ MDR clients over 6 years, for organizations that asked the same questions you’re asking now.

Q9: Ready to Calculate Your AI SOC ROI? Tools and Resources for Your Next Step

If you’ve scored your SOC maturity, mapped your adoption stage, and quantified the cost gap between in-house and AI-augmented operations, the next step is translating analysis into action. Two resources close that gap: a SOC cost calculator that models your specific environment, and a ranked comparison of SOC-as-a-Service providers evaluated against the criteria covered in this article.

📊 What the SOC Cost Calculator Reveals

- Fully loaded cost of in-house 24/7 SOC for your exact endpoint count, including analyst salaries, benefits, and overhead

- Tool licensing projections: SIEM, SOAR, XDR, and cloud monitoring at current market rates

- Hidden costs most budget models miss, including turnover replacement (18-month average analyst tenure), training ramp-up (6–12 months to full productivity), and facility overhead

- AI SOC alternative pricing side-by-side, with published per-endpoint rates and no “contact sales” gatekeeping

📋 What the SOCaaS Comparison Covers

- Provider-by-provider evaluation across integration flexibility, response capability, pricing transparency, and compliance inclusion

- Scoring against the 8-criteria framework from Q7, so you can apply the same decision matrix to real vendors

- Published response time SLAs, documented case study outcomes, and deployment timelines for each provider

Analysis based on documented response times, G2 Spring 2025 rankings, published pricing, and operational outcomes across 500+ MDR deployments.

Q10: AI SOC FAQ: Quick Answers to the Questions Security Leaders Ask Most

What is an AI SOC?

An AI SOC (Security Operations Center) is an operations model where artificial intelligence handles alert triage, enrichment, and correlation across security tools, while human analysts supervise critical decisions, verify context, and execute containment. It’s not a product but an architectural shift from reactive alert management to proactive, AI-augmented threat response that works 24/7 without scaling headcount linearly.

Can AI replace SOC analysts?

No, and it shouldn’t. AI replaces the mechanical grunt work: pulling logs, enriching alerts, correlating across SIEM and EDR, and generating investigation reports in seconds. The analyst’s role shifts from triage operator to supervisor and decision-maker. Automation scales routine work; humans handle edge cases, context validation, and judgment calls that require organizational knowledge.

How much does an AI SOC cost vs. a traditional SOC?

💰 The Short Answer

- In-house 24/7 SOC: $2.5M–$4M+/year (10–12 analysts, management, tooling, and facilities)

- AI SOC (e.g., UnderDefense): $132K–$180K/year for 1,000 endpoints ($11–15/endpoint/month)

- Savings: roughly 93–97% at these figures, with equivalent or better coverage from Day 1

What are the AI SOC maturity levels?

Organizations typically progress through six stages:

- L0, Manual / Reactive: No centralized logging; ad-hoc response

- L1, Rule-Based Automation: Basic SIEM rules and static playbooks; signature matching only, with high false positives

- L2, ML-Assisted: Centralized SIEM with AI-assisted alert suppression and de-duplication

- L3, Intelligent / Behavioral: UEBA, behavioral analytics, and cross-source correlation; response still partially manual

- L4, Predictive / Automated: High-confidence detections trigger automated containment with a human in the loop

- L5, Adaptive / Business-Aligned: SOC measured by business outcomes, with continuous model tuning and autonomous response

How does AI SOC reduce false positives?

AI SOC reduces false positives through two mechanisms. First, behavioral analytics baseline normal activity across endpoints, identity, and cloud, so deviations are judged in context rather than against static rules. Second, ChatOps user verification lets analysts confirm suspicious activity directly with affected users via Slack or Teams. UnderDefense reduces customer-facing alerts by 99% through this combination of AI-driven correlation and human verification.

⚕️ Is AI SOC safe for regulated industries (healthcare, finance)?

Yes, when the provider supports compliance frameworks natively. Look for SOC 2 Type II certification, HIPAA readiness, ISO 27001 alignment, and ISO 42001 AI governance standards. UnderDefense includes forever-free compliance kits and automated audit evidence collection bundled with MDR, not sold as a separate add-on.

⏰ What response times can AI SOC achieve?

AI SOC reduces response times by 50–90% compared to manual operations. UnderDefense documents 2-minute alert-to-triage and 15-minute escalation for critical incidents, with response times 2 days faster than CrowdStrike OverWatch in published case studies. A US government organization achieved 9-minute threat response time with UnderDefense MDR.

How fast can you deploy an AI SOC?

In-house SOC buildout takes 6–12 months for staffing, tooling, and process development. UnderDefense deploys in 30 days, turnkey, with custom detection tuning, integration with your existing stack across 250+ supported tools, and 24/7 coverage active from Day 1. No SIEM replacement required; no stack migration needed.

1. How much does an AI SOC cost compared to building a 24/7 in-house SOC?

Building a 24/7 in-house SOC requires 10–12 analysts at roughly $98K+ each, a SOC manager at $120–150K, SIEM/SOAR/XDR licensing at $200–500K annually, plus facility costs, training, and perpetual churn. The realistic total runs $2.5M–$4M+ per year, and that does not account for the 6–12 month ramp-up period.

An AI SOC alternative, like the one we deliver at UnderDefense, costs $11–15 per endpoint per month. For a 1,000-endpoint organization, that translates to approximately $132K–$180K per year, with 24/7 coverage active from Day 1 and a 30-day deployment window.
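The per-endpoint arithmetic above can be reproduced in a few lines. This is a minimal sketch: the function name `annual_cost` is an illustrative choice, and the only inputs are the price range and endpoint count quoted in this answer.

```python
def annual_cost(endpoints: int, price_per_endpoint_month: float) -> float:
    """Annual subscription cost for a per-endpoint, per-month price."""
    return endpoints * price_per_endpoint_month * 12


# Figures quoted above: $11 to $15 per endpoint per month, 1,000 endpoints.
low = annual_cost(1_000, 11)
high = annual_cost(1_000, 15)
print(f"${low:,.0f} to ${high:,.0f} per year")  # $132,000 to $180,000 per year
```

Swapping in your own endpoint count gives a first-pass number before any vendor conversation.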

We publish our pricing because transparency shortens procurement cycles. When your CFO can model costs in a spreadsheet before the first vendor call, you have shortened budget approval by weeks. The 80–90% cost reduction makes AI SOC not a new budget line but a budget-cut response.

Use our SOC Cost Calculator to model your specific environment and build the internal business case.

2. Can a 3–5 person security team achieve true 24/7 monitoring coverage?

The staffing math is unforgiving. True 24/7 coverage requires 8–12 FTEs to cover shifts, weekends, holidays, and PTO. A 3–5 person team physically cannot provide continuous monitoring. The gap is arithmetic, not capability.
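To make the arithmetic concrete, here is a rough sketch of how a headcount in that range can arise. The inputs are illustrative assumptions rather than figures from this article: two analysts on duty at all times, a 40-hour week, and 30 days per year lost to PTO, holidays, and training.

```python
import math

HOURS_PER_YEAR = 24 * 365  # 8,760 hours each seat must be staffed


def ftes_for_24x7(seats_per_shift: int,
                  days_off_per_year: int = 30,
                  hours_per_week: float = 40.0) -> int:
    """Minimum FTEs needed to keep `seats_per_shift` seats filled all year.

    All default values are assumptions chosen for illustration.
    """
    # Hours one analyst can actually cover per year after time off.
    available = hours_per_week * 52 - days_off_per_year * 8
    return math.ceil(seats_per_shift * HOURS_PER_YEAR / available)


print(ftes_for_24x7(seats_per_shift=2))  # 10
```

Under these assumptions the result lands inside the 8–12 FTE range cited above; different shift patterns or time-off policies will move the number.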

Industry data shows that 71% of SOC analysts report burnout, SOC turnover hit 28% annually in 2024, and the average team faces 960 alerts per day, 40% of which are never investigated. Night and weekend coverage gaps drive what we call the “talent death spiral,” where experienced analysts leave, the remaining team absorbs workload, burnout accelerates, and institutional knowledge vanishes.

The operational model we built at UnderDefense flips this dynamic. AI handles triage, enrichment, and correlation 24/7 autonomously. Your small team reviews morning incident summaries of confirmed threats with actions already taken, not 200 raw alerts. We reduce customer-facing alerts by 99% through custom detection tuning and direct user verification via ChatOps.

3. What are the AI SOC maturity levels, and where should my organization be?

Organizations typically progress through six stages of AI SOC maturity:

- L0, Manual: All triage and investigation performed by humans with no automation.

- L1, Alert-Assisted: Basic SIEM rules and signature-based detection with high false positives.

- L2, Partially Automated: SOAR playbooks handle repetitive tasks while analysts still drive investigations.

- L3, AI-Augmented: AI performs triage, enrichment, and correlation; humans approve and execute response.

- L4, Agentic SOC: AI autonomously investigates end-to-end with humans supervising critical containment.

- L5, Autonomous + Human Ally: Full AI-driven detection with concierge analysts handling edge cases, user verification, and remediation.

Most mid-market organizations today operate between L1 and L2. The UnderDefense MAXI platform enables organizations to jump directly to L4–L5 without building internal capabilities from scratch, compressing what would normally take years of incremental investment into a 30-day deployment.

4. What separates a true AI SOC from monitoring-only MDR tools?

The distinction comes down to three architectural differences: autonomy level, context acquisition, and response capability.

A legacy MSSP sends you alerts with no context. A traditional MDR provider like Arctic Wolf or CrowdStrike investigates but hands you a ticket to resolve. A true AI SOC detects the threat, verifies context with the affected user via ChatOps, contains the issue, documents every step, and delivers a complete incident record your compliance team can present to auditors.

When evaluating providers, we recommend scoring across eight criteria: detection-to-response capability, AI autonomy level, context acquisition method, integration model, pricing transparency, AI explainability and audit trail, compliance integration, and time to value. We built our MDR service to score across all eight because the real question is which model can respond to threats and explain those responses to your board at a cost your organization can sustain.
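One way to put the eight criteria to work is a simple scorecard. The sketch below is illustrative only: the 0–5 scale, the unweighted average, and the function name `score_provider` are assumptions, not a published scoring method.

```python
# The eight evaluation criteria listed above.
CRITERIA = [
    "detection-to-response capability",
    "AI autonomy level",
    "context acquisition method",
    "integration model",
    "pricing transparency",
    "AI explainability and audit trail",
    "compliance integration",
    "time to value",
]


def score_provider(scores: dict) -> float:
    """Average a 0-5 score per criterion; every criterion must be scored."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"unscored criteria: {missing}")
    for name in CRITERIA:
        if not 0 <= scores[name] <= 5:
            raise ValueError(f"score out of range for {name!r}")
    return sum(scores[name] for name in CRITERIA) / len(CRITERIA)


# Hypothetical provider: average on everything, strong on pricing transparency.
example = dict.fromkeys(CRITERIA, 3)
example["pricing transparency"] = 5
print(score_provider(example))  # 3.25
```

Requiring a score for every criterion keeps a vendor from looking strong simply because a weak area was left unrated.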

5. How does AI SOC handle governance, explainability, and audit compliance?

ISO/IEC 42001, introduced in December 2023, now establishes the global standard for AI management systems, requiring risk management, explainability, transparency, and continuous monitoring of AI decisions. Only 21% of enterprises have full visibility into their AI agent activities, according to Akto’s 2025 report.

At UnderDefense, we solve this with tiered autonomy: triage and enrichment are autonomous (high volume, low risk), containment actions require human approval (medium risk), and remediation is human-executed (high impact). Every decision produces an auditable chain documenting what the AI analyzed, what correlations it found, why it escalated, and what the human analyst did.
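The tiered model can be pictured as a small policy table with an audit log attached. The following is a hypothetical sketch, not our production implementation: the names `Tier`, `POLICY`, `AuditLog`, and `may_ai_execute` are invented for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Tier(Enum):
    AUTONOMOUS = "autonomous"          # AI acts on its own (high volume, low risk)
    HUMAN_APPROVAL = "human_approval"  # AI proposes, an analyst must approve
    HUMAN_EXECUTED = "human_executed"  # only an analyst performs the action


# Hypothetical policy table mirroring the tiers described above.
POLICY = {
    "triage": Tier.AUTONOMOUS,
    "enrichment": Tier.AUTONOMOUS,
    "containment": Tier.HUMAN_APPROVAL,
    "remediation": Tier.HUMAN_EXECUTED,
}


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, action: str, tier: Tier,
               approved_by: Optional[str], allowed: bool) -> None:
        self.entries.append({
            "action": action,
            "tier": tier.value,
            "approved_by": approved_by,
            "ai_allowed": allowed,
        })


def may_ai_execute(action: str, approved_by: Optional[str], log: AuditLog) -> bool:
    """Return True if the AI may carry out `action`; log every decision."""
    tier = POLICY[action]
    if tier is Tier.AUTONOMOUS:
        allowed = True
    elif tier is Tier.HUMAN_APPROVAL:
        allowed = approved_by is not None
    else:  # HUMAN_EXECUTED: the AI never acts, even with approval
        allowed = False
    log.record(action, tier, approved_by, allowed)
    return allowed


log = AuditLog()
print(may_ai_execute("triage", None, log))            # True
print(may_ai_execute("containment", None, log))       # False
print(may_ai_execute("containment", "analyst", log))  # True
print(may_ai_execute("remediation", "analyst", log))  # False
```

Every call writes a log entry whether or not the action is allowed, so denied requests are as traceable as approved ones.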

Our compliance services include forever-free compliance kits and automated audit evidence collection bundled with MDR. You can trace any incident from alert to resolution, see every decision point, and present the documentation to auditors without additional cost or tooling.

6. Why is budget approval, not talent shortage, the real barrier to AI SOC adoption?

ISC2’s 2025 Cybersecurity Workforce Study, surveying over 16,000 professionals, found that 37% of organizations faced budget cuts in 2024, while 33% said their organizations simply do not have the resources to staff teams. The conversation has shifted from “we can’t find people” to “we can’t get budget approval for people or tools.”

The cost of inaction is quantifiable. IBM’s 2024 Cost of a Data Breach Report puts the global average breach cost at $4.88 million, a 10% spike year-over-year. Organizations with understaffed security teams see dwell times grow 3x or more.

We recommend a four-line CFO-ready ROI formula: (1) current annual security operations spend, (2) projected AI SOC spend, (3) risk reduction value equals breach probability multiplied by $4.88M average cost, (4) net savings plus risk offset. Use our 2026 cybersecurity budget playbook to build the internal case with numbers your CFO can validate before the first vendor call.
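The four lines translate directly into a short calculation. This is a sketch under stated assumptions: `breach_probability` is read as the annual breach likelihood you expect the service to offset, and the 10% value and the $2.5M current spend in the example are placeholders, not benchmarks.

```python
AVG_BREACH_COST = 4_880_000  # IBM 2024 global average cited above


def roi_summary(current_spend: float,
                ai_soc_spend: float,
                breach_probability: float) -> dict:
    """Build the four-line ROI view described above."""
    net_savings = current_spend - ai_soc_spend          # line 1 minus line 2
    risk_offset = breach_probability * AVG_BREACH_COST  # line 3
    return {
        "current_spend": current_spend,
        "ai_soc_spend": ai_soc_spend,
        "risk_reduction_value": risk_offset,
        "net_savings_plus_risk_offset": net_savings + risk_offset,  # line 4
    }


# Placeholder inputs: replace with your own spend and probability estimate.
result = roi_summary(current_spend=2_500_000,
                     ai_soc_spend=180_000,
                     breach_probability=0.10)
print(f"${result['net_savings_plus_risk_offset']:,.0f}")  # $2,808,000
```

The breach probability is the input a CFO is most likely to challenge, so it is worth running the calculation at several values.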

7. What objections stall AI SOC adoption, and how do we address them internally?

We hear three objections consistently:

“We already have CrowdStrike/SentinelOne.” EDR detects threats on the endpoint, but someone still has to determine whether flagged behavior is a real attack or a DevOps engineer running a legitimate script. We layer on top of your existing investment and have deployed CrowdStrike to 1,200 endpoints in 23 business days for clients. The gap is not in your tooling but in the operational layer between detection and resolution.

“AI can’t be trusted with autonomous decisions.” The resolution is tiered autonomy, not blind trust. Triage is autonomous, containment requires human approval, and remediation is human-executed. Every decision produces an auditable chain aligned with ISO 42001 requirements.

“We can’t justify the budget.” At $11–15 per endpoint per month, a 1,000-endpoint deployment costs $132K–$180K per year vs. $2.5M+ for an in-house SOC. Present this as cost avoidance plus risk reduction, not new spend.

8. How fast can an AI SOC be deployed, and what response times should we expect?

In-house SOC buildout takes 6–12 months for staffing, tooling, and process development. UnderDefense deploys in 30 days, turnkey, with custom detection tuning, integration with your existing stack across 250+ supported tools, and 24/7 coverage active from Day 1. No SIEM replacement required; no stack migration needed.

On response times, we document 2-minute alert-to-triage and 15-minute escalation for critical incidents. In published case studies, our team responded 2 days faster than CrowdStrike OverWatch, and a US government organization achieved 9-minute threat response time with UnderDefense MDR.

Deployment speed and response time SLAs are the two metrics that separate vendors who deliver from vendors who promise. We recommend requesting documented case study outcomes and published SLAs from any provider before signing.
